mirror of https://github.com/jumpserver/jumpserver.git

synced 2025-04-29 03:44:41 +00:00

Compare commits

8 Commits

| Author | SHA1 | Date | |
|---|---|---|---|
| | e77c8a0ec6 | | |
| | a9620a3cbe | | |
| | 769e7dc8a0 | | |
| | 2a70449411 | | |
| | 8df720f19e | | |
| | dabbb45f6e | | |
| | ce24c1c3fd | | |
| | 3c54c82ce9 | | |

@ -8,6 +8,3 @@ celerybeat.pid

|

||||

.vagrant/

|

||||

apps/xpack/.git

|

||||

.history/

|

||||

.idea

|

||||

.venv/

|

||||

.env

|

||||

4

.gitattributes

vendored

@ -0,0 +1,4 @@

|

||||

*.mmdb filter=lfs diff=lfs merge=lfs -text

|

||||

*.mo filter=lfs diff=lfs merge=lfs -text

|

||||

*.ipdb filter=lfs diff=lfs merge=lfs -text

|

||||

|

||||

11

.github/ISSUE_TEMPLATE/----.md

vendored

Normal file

@ -0,0 +1,11 @@

|

||||

---

|

||||

name: 需求建议

|

||||

about: 提出针对本项目的想法和建议

|

||||

title: "[Feature] "

|

||||

labels: 类型:需求

|

||||

assignees:

|

||||

- ibuler

|

||||

- baijiangjie

|

||||

---

|

||||

|

||||

**请描述您的需求或者改进建议.**

|

||||

72

.github/ISSUE_TEMPLATE/1_bug_report.yml

vendored

@ -1,72 +0,0 @@

|

||||

name: '🐛 Bug Report'

|

||||

description: 'Report an Bug'

|

||||

title: '[Bug] '

|

||||

labels: ['🐛 Bug']

|

||||

assignees:

|

||||

- baijiangjie

|

||||

body:

|

||||

- type: input

|

||||

attributes:

|

||||

label: 'Product Version'

|

||||

description: The versions prior to v2.28 (inclusive) are no longer supported.

|

||||

validations:

|

||||

required: true

|

||||

|

||||

- type: checkboxes

|

||||

attributes:

|

||||

label: 'Product Edition'

|

||||

options:

|

||||

- label: 'Community Edition'

|

||||

- label: 'Enterprise Edition'

|

||||

- label: 'Enterprise Trial Edition'

|

||||

validations:

|

||||

required: true

|

||||

|

||||

- type: checkboxes

|

||||

attributes:

|

||||

label: 'Installation Method'

|

||||

options:

|

||||

- label: 'Online Installation (One-click command installation)'

|

||||

- label: 'Offline Package Installation'

|

||||

- label: 'All-in-One'

|

||||

- label: '1Panel'

|

||||

- label: 'Kubernetes'

|

||||

- label: 'Source Code'

|

||||

|

||||

- type: textarea

|

||||

attributes:

|

||||

label: 'Environment Information'

|

||||

description: Please provide a clear and concise description outlining your environment information.

|

||||

validations:

|

||||

required: true

|

||||

|

||||

- type: textarea

|

||||

attributes:

|

||||

label: '🐛 Bug Description'

|

||||

description:

|

||||

Please provide a clear and concise description of the defect. If the issue is complex, please provide detailed explanations. <br/>

|

||||

Unclear descriptions will not be processed. Please ensure you provide enough detail and information to support replicating and fixing the defect.

|

||||

validations:

|

||||

required: true

|

||||

|

||||

- type: textarea

|

||||

attributes:

|

||||

label: 'Recurrence Steps'

|

||||

description: Please provide a clear and concise description outlining how to reproduce the issue.

|

||||

validations:

|

||||

required: true

|

||||

|

||||

- type: textarea

|

||||

attributes:

|

||||

label: 'Expected Behavior'

|

||||

description: Please provide a clear and concise description of what you expect to happen.

|

||||

|

||||

- type: textarea

|

||||

attributes:

|

||||

label: 'Additional Information'

|

||||

description: Please add any additional background information about the issue here.

|

||||

|

||||

- type: textarea

|

||||

attributes:

|

||||

label: 'Attempted Solutions'

|

||||

description: If you have already attempted to solve the issue, please list the solutions you have tried here.

|

||||

60

.github/ISSUE_TEMPLATE/2_question.yml

vendored

@ -1,60 +0,0 @@

|

||||

name: '🤔 Question'

|

||||

description: 'Pose a question'

|

||||

title: '[Question] '

|

||||

labels: ['🤔 Question']

|

||||

assignees:

|

||||

- baijiangjie

|

||||

body:

|

||||

- type: input

|

||||

attributes:

|

||||

label: 'Product Version'

|

||||

description: The versions prior to v2.28 (inclusive) are no longer supported.

|

||||

validations:

|

||||

required: true

|

||||

|

||||

- type: checkboxes

|

||||

attributes:

|

||||

label: 'Product Edition'

|

||||

options:

|

||||

- label: 'Community Edition'

|

||||

- label: 'Enterprise Edition'

|

||||

- label: 'Enterprise Trial Edition'

|

||||

validations:

|

||||

required: true

|

||||

|

||||

- type: checkboxes

|

||||

attributes:

|

||||

label: 'Installation Method'

|

||||

options:

|

||||

- label: 'Online Installation (One-click command installation)'

|

||||

- label: 'Offline Package Installation'

|

||||

- label: 'All-in-One'

|

||||

- label: '1Panel'

|

||||

- label: 'Kubernetes'

|

||||

- label: 'Source Code'

|

||||

|

||||

- type: textarea

|

||||

attributes:

|

||||

label: 'Environment Information'

|

||||

description: Please provide a clear and concise description outlining your environment information.

|

||||

validations:

|

||||

required: true

|

||||

|

||||

- type: textarea

|

||||

attributes:

|

||||

label: '🤔 Question Description'

|

||||

description: |

|

||||

Please provide a clear and concise description of the defect. If the issue is complex, please provide detailed explanations. <br/>

|

||||

Unclear descriptions will not be processed.

|

||||

validations:

|

||||

required: true

|

||||

|

||||

- type: textarea

|

||||

attributes:

|

||||

label: 'Expected Behavior'

|

||||

description: Please provide a clear and concise description of what you expect to happen.

|

||||

|

||||

- type: textarea

|

||||

attributes:

|

||||

label: 'Additional Information'

|

||||

description: Please add any additional background information about the issue here.

|

||||

56

.github/ISSUE_TEMPLATE/3_feature_request.yml

vendored

@ -1,56 +0,0 @@

|

||||

name: '⭐️ Feature Request'

|

||||

description: 'Suggest an idea'

|

||||

title: '[Feature] '

|

||||

labels: ['⭐️ Feature Request']

|

||||

assignees:

|

||||

- baijiangjie

|

||||

- ibuler

|

||||

body:

|

||||

- type: input

|

||||

attributes:

|

||||

label: 'Product Version'

|

||||

description: The versions prior to v2.28 (inclusive) are no longer supported.

|

||||

validations:

|

||||

required: true

|

||||

|

||||

- type: checkboxes

|

||||

attributes:

|

||||

label: 'Product Edition'

|

||||

options:

|

||||

- label: 'Community Edition'

|

||||

- label: 'Enterprise Edition'

|

||||

- label: 'Enterprise Trial Edition'

|

||||

validations:

|

||||

required: true

|

||||

|

||||

- type: checkboxes

|

||||

attributes:

|

||||

label: 'Installation Method'

|

||||

options:

|

||||

- label: 'Online Installation (One-click command installation)'

|

||||

- label: 'Offline Package Installation'

|

||||

- label: 'All-in-One'

|

||||

- label: '1Panel'

|

||||

- label: 'Kubernetes'

|

||||

- label: 'Source Code'

|

||||

|

||||

- type: textarea

|

||||

attributes:

|

||||

label: '⭐️ Feature Description'

|

||||

description: |

|

||||

Please add a clear and concise description of the problem you aim to solve with this feature request.<br/>

|

||||

Unclear descriptions will not be processed.

|

||||

validations:

|

||||

required: true

|

||||

|

||||

- type: textarea

|

||||

attributes:

|

||||

label: 'Proposed Solution'

|

||||

description: Please provide a clear and concise description of the solution you desire.

|

||||

validations:

|

||||

required: true

|

||||

|

||||

- type: textarea

|

||||

attributes:

|

||||

label: 'Additional Information'

|

||||

description: Please add any additional background information about the issue here.

|

||||

72

.github/ISSUE_TEMPLATE/4_bug_report_cn.yml

vendored

@ -1,72 +0,0 @@

|

||||

name: '🐛 反馈缺陷'

|

||||

description: '反馈一个缺陷'

|

||||

title: '[Bug] '

|

||||

labels: ['🐛 Bug']

|

||||

assignees:

|

||||

- baijiangjie

|

||||

body:

|

||||

- type: input

|

||||

attributes:

|

||||

label: '产品版本'

|

||||

description: 不再支持 v2.28(含)之前的版本。

|

||||

validations:

|

||||

required: true

|

||||

|

||||

- type: checkboxes

|

||||

attributes:

|

||||

label: '版本类型'

|

||||

options:

|

||||

- label: '社区版'

|

||||

- label: '企业版'

|

||||

- label: '企业试用版'

|

||||

validations:

|

||||

required: true

|

||||

|

||||

- type: checkboxes

|

||||

attributes:

|

||||

label: '安装方式'

|

||||

options:

|

||||

- label: '在线安装 (一键命令安装)'

|

||||

- label: '离线包安装'

|

||||

- label: 'All-in-One'

|

||||

- label: '1Panel'

|

||||

- label: 'Kubernetes'

|

||||

- label: '源码安装'

|

||||

|

||||

- type: textarea

|

||||

attributes:

|

||||

label: '环境信息'

|

||||

description: 请提供一个清晰且简洁的描述,说明你的环境信息。

|

||||

validations:

|

||||

required: true

|

||||

|

||||

- type: textarea

|

||||

attributes:

|

||||

label: '🐛 缺陷描述'

|

||||

description: |

|

||||

请提供一个清晰且简洁的缺陷描述,如果问题比较复杂,也请详细说明。<br/>

|

||||

针对不清晰的描述信息将不予处理。请确保提供足够的细节和信息,以支持对缺陷进行复现和修复。

|

||||

validations:

|

||||

required: true

|

||||

|

||||

- type: textarea

|

||||

attributes:

|

||||

label: '复现步骤'

|

||||

description: 请提供一个清晰且简洁的描述,说明如何复现问题。

|

||||

validations:

|

||||

required: true

|

||||

|

||||

- type: textarea

|

||||

attributes:

|

||||

label: '期望结果'

|

||||

description: 请提供一个清晰且简洁的描述,说明你期望发生什么。

|

||||

|

||||

- type: textarea

|

||||

attributes:

|

||||

label: '补充信息'

|

||||

description: 在这里添加关于问题的任何其他背景信息。

|

||||

|

||||

- type: textarea

|

||||

attributes:

|

||||

label: '尝试过的解决方案'

|

||||

description: 如果你已经尝试解决问题,请在此列出你尝试过的解决方案。

|

||||

61

.github/ISSUE_TEMPLATE/5_question_cn.yml

vendored

@ -1,61 +0,0 @@

|

||||

name: '🤔 问题咨询'

|

||||

description: '提出一个问题'

|

||||

title: '[Question] '

|

||||

labels: ['🤔 Question']

|

||||

assignees:

|

||||

- baijiangjie

|

||||

body:

|

||||

- type: input

|

||||

attributes:

|

||||

label: '产品版本'

|

||||

description: 不再支持 v2.28(含)之前的版本。

|

||||

validations:

|

||||

required: true

|

||||

|

||||

- type: checkboxes

|

||||

attributes:

|

||||

label: '版本类型'

|

||||

options:

|

||||

- label: '社区版'

|

||||

- label: '企业版'

|

||||

- label: '企业试用版'

|

||||

validations:

|

||||

required: true

|

||||

|

||||

- type: checkboxes

|

||||

attributes:

|

||||

label: '安装方式'

|

||||

options:

|

||||

- label: '在线安装 (一键命令安装)'

|

||||

- label: '离线包安装'

|

||||

- label: 'All-in-One'

|

||||

- label: '1Panel'

|

||||

- label: 'Kubernetes'

|

||||

- label: '源码安装'

|

||||

|

||||

- type: textarea

|

||||

attributes:

|

||||

label: '环境信息'

|

||||

description: 请在此详细描述你的环境信息,如操作系统、浏览器和部署架构等。

|

||||

validations:

|

||||

required: true

|

||||

|

||||

- type: textarea

|

||||

attributes:

|

||||

label: '🤔 问题描述'

|

||||

description: |

|

||||

请提供一个清晰且简洁的问题描述,如果问题比较复杂,也请详细说明。<br/>

|

||||

针对不清晰的描述信息将不予处理。

|

||||

validations:

|

||||

required: true

|

||||

|

||||

- type: textarea

|

||||

attributes:

|

||||

label: '期望结果'

|

||||

description: 请提供一个清晰且简洁的描述,说明你期望发生什么。

|

||||

|

||||

- type: textarea

|

||||

attributes:

|

||||

label: '补充信息'

|

||||

description: 在这里添加关于问题的任何其他背景信息。

|

||||

|

||||

56

.github/ISSUE_TEMPLATE/6_feature_request_cn.yml

vendored

@ -1,56 +0,0 @@

|

||||

name: '⭐️ 功能需求'

|

||||

description: '提出需求或建议'

|

||||

title: '[Feature] '

|

||||

labels: ['⭐️ Feature Request']

|

||||

assignees:

|

||||

- baijiangjie

|

||||

- ibuler

|

||||

body:

|

||||

- type: input

|

||||

attributes:

|

||||

label: '产品版本'

|

||||

description: 不再支持 v2.28(含)之前的版本。

|

||||

validations:

|

||||

required: true

|

||||

|

||||

- type: checkboxes

|

||||

attributes:

|

||||

label: '版本类型'

|

||||

options:

|

||||

- label: '社区版'

|

||||

- label: '企业版'

|

||||

- label: '企业试用版'

|

||||

validations:

|

||||

required: true

|

||||

|

||||

- type: checkboxes

|

||||

attributes:

|

||||

label: '安装方式'

|

||||

options:

|

||||

- label: '在线安装 (一键命令安装)'

|

||||

- label: '离线包安装'

|

||||

- label: 'All-in-One'

|

||||

- label: '1Panel'

|

||||

- label: 'Kubernetes'

|

||||

- label: '源码安装'

|

||||

|

||||

- type: textarea

|

||||

attributes:

|

||||

label: '⭐️ 需求描述'

|

||||

description: |

|

||||

请添加一个清晰且简洁的问题描述,阐述你希望通过这个功能需求解决的问题。<br/>

|

||||

针对不清晰的描述信息将不予处理。

|

||||

validations:

|

||||

required: true

|

||||

|

||||

- type: textarea

|

||||

attributes:

|

||||

label: '解决方案'

|

||||

description: 请清晰且简洁地描述你想要的解决方案。

|

||||

validations:

|

||||

required: true

|

||||

|

||||

- type: textarea

|

||||

attributes:

|

||||

label: '补充信息'

|

||||

description: 在这里添加关于问题的任何其他背景信息。

|

||||

22

.github/ISSUE_TEMPLATE/bug---.md

vendored

Normal file

@ -0,0 +1,22 @@

|

||||

---

|

||||

name: Bug 提交

|

||||

about: 提交产品缺陷帮助我们更好的改进

|

||||

title: "[Bug] "

|

||||

labels: 类型:Bug

|

||||

assignees:

|

||||

- baijiangjie

|

||||

---

|

||||

|

||||

**JumpServer 版本( v2.28 之前的版本不再支持 )**

|

||||

|

||||

|

||||

**浏览器版本**

|

||||

|

||||

|

||||

**Bug 描述**

|

||||

|

||||

|

||||

**Bug 重现步骤(有截图更好)**

|

||||

1.

|

||||

2.

|

||||

3.

|

||||

10

.github/ISSUE_TEMPLATE/question.md

vendored

Normal file

@ -0,0 +1,10 @@

|

||||

---

|

||||

name: 问题咨询

|

||||

about: 提出针对本项目安装部署、使用及其他方面的相关问题

|

||||

title: "[Question] "

|

||||

labels: 类型:提问

|

||||

assignees:

|

||||

- baijiangjie

|

||||

---

|

||||

|

||||

**请描述您的问题.**

|

||||

10

.github/dependabot.yml

vendored

@ -1,10 +0,0 @@

|

||||

version: 2

|

||||

updates:

|

||||

- package-ecosystem: "uv"

|

||||

directory: "/"

|

||||

schedule:

|

||||

interval: "weekly"

|

||||

day: "monday"

|

||||

time: "09:30"

|

||||

timezone: "Asia/Shanghai"

|

||||

target-branch: dev

|

||||

74

.github/workflows/build-base-image.yml

vendored

@ -1,74 +0,0 @@

|

||||

name: Build and Push Base Image

|

||||

|

||||

on:

|

||||

pull_request:

|

||||

branches:

|

||||

- 'dev'

|

||||

- 'v*'

|

||||

paths:

|

||||

- poetry.lock

|

||||

- pyproject.toml

|

||||

- Dockerfile-base

|

||||

- package.json

|

||||

- go.mod

|

||||

- yarn.lock

|

||||

- pom.xml

|

||||

- install_deps.sh

|

||||

- utils/clean_site_packages.sh

|

||||

types:

|

||||

- opened

|

||||

- synchronize

|

||||

- reopened

|

||||

|

||||

jobs:

|

||||

build-and-push:

|

||||

runs-on: ubuntu-22.04

|

||||

steps:

|

||||

- name: Checkout repository

|

||||

uses: actions/checkout@v4

|

||||

with:

|

||||

ref: ${{ github.event.pull_request.head.ref }}

|

||||

|

||||

- name: Set up QEMU

|

||||

uses: docker/setup-qemu-action@v3

|

||||

with:

|

||||

image: tonistiigi/binfmt:qemu-v7.0.0-28

|

||||

|

||||

- name: Set up Docker Buildx

|

||||

uses: docker/setup-buildx-action@v3

|

||||

|

||||

- name: Login to DockerHub

|

||||

uses: docker/login-action@v2

|

||||

with:

|

||||

username: ${{ secrets.DOCKERHUB_USERNAME }}

|

||||

password: ${{ secrets.DOCKERHUB_TOKEN }}

|

||||

|

||||

- name: Extract date

|

||||

id: vars

|

||||

run: echo "IMAGE_TAG=$(date +'%Y%m%d_%H%M%S')" >> $GITHUB_ENV

|

||||

|

||||

- name: Extract repository name

|

||||

id: repo

|

||||

run: echo "REPO=$(basename ${{ github.repository }})" >> $GITHUB_ENV

|

||||

|

||||

- name: Build and push multi-arch image

|

||||

uses: docker/build-push-action@v6

|

||||

with:

|

||||

platforms: linux/amd64,linux/arm64

|

||||

push: true

|

||||

file: Dockerfile-base

|

||||

tags: jumpserver/core-base:${{ env.IMAGE_TAG }}

|

||||

|

||||

- name: Update Dockerfile

|

||||

run: |

|

||||

sed -i 's|-base:.* AS stage-build|-base:${{ env.IMAGE_TAG }} AS stage-build|' Dockerfile

|

||||

|

||||

- name: Commit changes

|

||||

run: |

|

||||

git config --global user.name 'github-actions[bot]'

|

||||

git config --global user.email 'github-actions[bot]@users.noreply.github.com'

|

||||

git add Dockerfile

|

||||

git commit -m "perf: Update Dockerfile with new base image tag"

|

||||

git push origin ${{ github.event.pull_request.head.ref }}

|

||||

env:

|

||||

GITHUB_TOKEN: ${{ secrets.GITHUB_TOKEN }}

|

||||

31

.github/workflows/check-compilemessages.yml

vendored

@ -1,31 +0,0 @@

|

||||

name: Check I18n files CompileMessages

|

||||

|

||||

on:

|

||||

pull_request:

|

||||

branches:

|

||||

- 'dev'

|

||||

paths:

|

||||

- 'apps/i18n/core/**/*.po'

|

||||

types:

|

||||

- opened

|

||||

- synchronize

|

||||

- reopened

|

||||

jobs:

|

||||

compile-messages-check:

|

||||

runs-on: ubuntu-latest

|

||||

|

||||

steps:

|

||||

- name: Checkout repository

|

||||

uses: actions/checkout@v4

|

||||

|

||||

- name: Set up Docker Buildx

|

||||

uses: docker/setup-buildx-action@v3

|

||||

|

||||

- name: Build and check compilemessages

|

||||

uses: docker/build-push-action@v6

|

||||

with:

|

||||

platforms: linux/amd64

|

||||

push: false

|

||||

file: Dockerfile

|

||||

target: stage-build

|

||||

tags: jumpserver/core:stage-build

|

||||

24

.github/workflows/discord-release.yml

vendored

@ -1,24 +0,0 @@

|

||||

name: Publish Release to Discord

|

||||

|

||||

on:

|

||||

release:

|

||||

types: [published]

|

||||

|

||||

jobs:

|

||||

send_discord_notification:

|

||||

runs-on: ubuntu-latest

|

||||

if: startsWith(github.event.release.tag_name, 'v4.')

|

||||

steps:

|

||||

- name: Send release notification to Discord

|

||||

env:

|

||||

WEBHOOK_URL: ${{ secrets.DISCORD_CHANGELOG_WEBHOOK }}

|

||||

run: |

|

||||

# Get the tag name and release body

|

||||

TAG_NAME="${{ github.event.release.tag_name }}"

|

||||

RELEASE_BODY="${{ github.event.release.body }}"

|

||||

|

||||

# Build the JSON payload with jq so the values are passed safely

|

||||

JSON_PAYLOAD=$(jq -n --arg tag "# JumpServer $TAG_NAME Released! 🚀" --arg body "$RELEASE_BODY" '{content: "\($tag)\n\($body)"}')

|

||||

|

||||

# Send the JSON payload with curl

|

||||

curl -X POST -H "Content-Type: application/json" -d "$JSON_PAYLOAD" "$WEBHOOK_URL"

|

||||

24

.github/workflows/docs-release.yml

vendored

@ -1,24 +0,0 @@

|

||||

name: Auto update docs changelog

|

||||

|

||||

on:

|

||||

release:

|

||||

types: [published]

|

||||

|

||||

jobs:

|

||||

update_docs_changelog:

|

||||

runs-on: ubuntu-latest

|

||||

if: startsWith(github.event.release.tag_name, 'v4.')

|

||||

steps:

|

||||

- name: Checkout repository

|

||||

uses: actions/checkout@v4

|

||||

- name: Update docs changelog

|

||||

env:

|

||||

TAG_NAME: ${{ github.event.release.tag_name }}

|

||||

DOCS_TOKEN: ${{ secrets.DOCS_TOKEN }}

|

||||

run: |

|

||||

git config --global user.name 'BaiJiangJie'

|

||||

git config --global user.email 'jiangjie.bai@fit2cloud.com'

|

||||

|

||||

git clone https://$DOCS_TOKEN@github.com/jumpservice/documentation.git

|

||||

cd documentation/utils

|

||||

bash update_changelog.sh

|

||||

4

.github/workflows/issue-close-require.yml

vendored

@ -12,9 +12,7 @@ jobs:

|

||||

uses: actions-cool/issues-helper@v2

|

||||

with:

|

||||

actions: 'close-issues'

|

||||

labels: '⏳ Pending feedback'

|

||||

labels: '状态:待反馈'

|

||||

inactive-day: 30

|

||||

body: |

|

||||

You haven't provided feedback for over 30 days.

|

||||

We will close this issue. If you have any further needs, you can reopen it or submit a new issue.

|

||||

您超过 30 天未反馈信息,我们将关闭该 issue,如有需求您可以重新打开或者提交新的 issue。

|

||||

|

||||

2

.github/workflows/issue-close.yml

vendored

@ -13,4 +13,4 @@ jobs:

|

||||

if: ${{ !github.event.issue.pull_request }}

|

||||

with:

|

||||

actions: 'remove-labels'

|

||||

labels: '🔔 Pending processing,⏳ Pending feedback'

|

||||

labels: '状态:待处理,状态:待反馈'

|

||||

8

.github/workflows/issue-comment.yml

vendored

@ -13,13 +13,13 @@ jobs:

|

||||

uses: actions-cool/issues-helper@v2

|

||||

with:

|

||||

actions: 'add-labels'

|

||||

labels: '🔔 Pending processing'

|

||||

labels: '状态:待处理'

|

||||

|

||||

- name: Remove require reply label

|

||||

uses: actions-cool/issues-helper@v2

|

||||

with:

|

||||

actions: 'remove-labels'

|

||||

labels: '⏳ Pending feedback'

|

||||

labels: '状态:待反馈'

|

||||

|

||||

add-label-if-is-member:

|

||||

runs-on: ubuntu-latest

|

||||

@ -55,11 +55,11 @@ jobs:

|

||||

uses: actions-cool/issues-helper@v2

|

||||

with:

|

||||

actions: 'add-labels'

|

||||

labels: '⏳ Pending feedback'

|

||||

labels: '状态:待反馈'

|

||||

|

||||

- name: Remove require handle label

|

||||

if: contains(steps.member_names.outputs.data, github.event.comment.user.login)

|

||||

uses: actions-cool/issues-helper@v2

|

||||

with:

|

||||

actions: 'remove-labels'

|

||||

labels: '🔔 Pending processing'

|

||||

labels: '状态:待处理'

|

||||

|

||||

2

.github/workflows/issue-open.yml

vendored

@ -13,4 +13,4 @@ jobs:

|

||||

if: ${{ !github.event.issue.pull_request }}

|

||||

with:

|

||||

actions: 'add-labels'

|

||||

labels: '🔔 Pending processing'

|

||||

labels: '状态:待处理'

|

||||

36

.github/workflows/jms-build-test.yml

vendored

Normal file

@ -0,0 +1,36 @@

|

||||

name: "Run Build Test"

|

||||

on:

|

||||

push:

|

||||

branches:

|

||||

- pr@*

|

||||

- repr@*

|

||||

|

||||

jobs:

|

||||

build:

|

||||

runs-on: ubuntu-latest

|

||||

steps:

|

||||

- uses: actions/checkout@v3

|

||||

|

||||

- uses: docker/setup-qemu-action@v2

|

||||

|

||||

- uses: docker/setup-buildx-action@v2

|

||||

|

||||

- uses: docker/build-push-action@v3

|

||||

with:

|

||||

context: .

|

||||

push: false

|

||||

tags: jumpserver/core-ce:test

|

||||

file: Dockerfile-ce

|

||||

build-args: |

|

||||

APT_MIRROR=http://deb.debian.org

|

||||

PIP_MIRROR=https://pypi.org/simple

|

||||

PIP_JMS_MIRROR=https://pypi.org/simple

|

||||

cache-from: type=gha

|

||||

cache-to: type=gha,mode=max

|

||||

|

||||

- uses: LouisBrunner/checks-action@v1.5.0

|

||||

if: always()

|

||||

with:

|

||||

token: ${{ secrets.GITHUB_TOKEN }}

|

||||

name: Check Build

|

||||

conclusion: ${{ job.status }}

|

||||

63

.github/workflows/jms-build-test.yml.disabled

vendored

@ -1,63 +0,0 @@

|

||||

name: "Run Build Test"

|

||||

on:

|

||||

push:

|

||||

paths:

|

||||

- 'Dockerfile'

|

||||

- 'Dockerfile*'

|

||||

- 'Dockerfile-*'

|

||||

- 'pyproject.toml'

|

||||

- 'poetry.lock'

|

||||

|

||||

jobs:

|

||||

build:

|

||||

runs-on: ubuntu-latest

|

||||

strategy:

|

||||

matrix:

|

||||

component: [core]

|

||||

version: [v4]

|

||||

steps:

|

||||

- uses: actions/checkout@v4

|

||||

- uses: docker/setup-buildx-action@v3

|

||||

|

||||

- name: Login to GitHub Container Registry

|

||||

uses: docker/login-action@v3

|

||||

with:

|

||||

registry: ghcr.io

|

||||

username: ${{ github.repository_owner }}

|

||||

password: ${{ secrets.GITHUB_TOKEN }}

|

||||

|

||||

- name: Prepare Build

|

||||

run: |

|

||||

sed -i 's@^FROM registry.fit2cloud.com/jumpserver@FROM ghcr.io/jumpserver@g' Dockerfile-ee

|

||||

|

||||

- name: Build CE Image

|

||||

uses: docker/build-push-action@v5

|

||||

with:

|

||||

context: .

|

||||

push: true

|

||||

file: Dockerfile

|

||||

tags: ghcr.io/jumpserver/${{ matrix.component }}:${{ matrix.version }}-ce

|

||||

platforms: linux/amd64

|

||||

build-args: |

|

||||

VERSION=${{ matrix.version }}

|

||||

APT_MIRROR=http://deb.debian.org

|

||||

PIP_MIRROR=https://pypi.org/simple

|

||||

outputs: type=image,oci-mediatypes=true,compression=zstd,compression-level=3,force-compression=true

|

||||

cache-from: type=gha

|

||||

cache-to: type=gha,mode=max

|

||||

|

||||

- name: Build EE Image

|

||||

uses: docker/build-push-action@v5

|

||||

with:

|

||||

context: .

|

||||

push: false

|

||||

file: Dockerfile-ee

|

||||

tags: ghcr.io/jumpserver/${{ matrix.component }}:${{ matrix.version }}

|

||||

platforms: linux/amd64

|

||||

build-args: |

|

||||

VERSION=${{ matrix.version }}

|

||||

APT_MIRROR=http://deb.debian.org

|

||||

PIP_MIRROR=https://pypi.org/simple

|

||||

outputs: type=image,oci-mediatypes=true,compression=zstd,compression-level=3,force-compression=true

|

||||

cache-from: type=gha

|

||||

cache-to: type=gha,mode=max

|

||||

@ -10,4 +10,3 @@ jobs:

|

||||

- uses: jumpserver/action-generic-handler@master

|

||||

env:

|

||||

GITHUB_TOKEN: ${{ secrets.PRIVATE_TOKEN }}

|

||||

I18N_TOKEN: ${{ secrets.I18N_TOKEN }}

|

||||

|

||||

28

.github/workflows/llm-code-review.yml.bak

vendored

@ -1,28 +0,0 @@

|

||||

name: LLM Code Review

|

||||

|

||||

permissions:

|

||||

contents: read

|

||||

pull-requests: write

|

||||

|

||||

on:

|

||||

pull_request:

|

||||

types: [opened, reopened, synchronize]

|

||||

|

||||

jobs:

|

||||

llm-code-review:

|

||||

runs-on: ubuntu-latest

|

||||

steps:

|

||||

- uses: fit2cloud/LLM-CodeReview-Action@main

|

||||

env:

|

||||

GITHUB_TOKEN: ${{ secrets.FIT2CLOUDRD_LLM_CODE_REVIEW_TOKEN }}

|

||||

OPENAI_API_KEY: ${{ secrets.ALIYUN_LLM_API_KEY }}

|

||||

LANGUAGE: English

|

||||

OPENAI_API_ENDPOINT: https://dashscope.aliyuncs.com/compatible-mode/v1

|

||||

MODEL: qwen2-1.5b-instruct

|

||||

PROMPT: "Please check the following code differences for any irregularities, potential issues, or optimization suggestions, and provide your answers in English."

|

||||

top_p: 1

|

||||

temperature: 1

|

||||

# max_tokens: 10000

|

||||

MAX_PATCH_LENGTH: 10000

|

||||

IGNORE_PATTERNS: "/node_modules,*.md,/dist,/.github"

|

||||

FILE_PATTERNS: "*.java,*.go,*.py,*.vue,*.ts,*.js,*.css,*.scss,*.html"

|

||||

45

.github/workflows/translate-readme.yml

vendored

@ -1,45 +0,0 @@

|

||||

name: Translate README

|

||||

on:

|

||||

workflow_dispatch:

|

||||

inputs:

|

||||

source_readme:

|

||||

description: "Source README"

|

||||

required: false

|

||||

default: "./readmes/README.en.md"

|

||||

target_langs:

|

||||

description: "Target Languages"

|

||||

required: false

|

||||

default: "zh-hans,zh-hant,ja,pt-br,es,ru"

|

||||

gen_dir_path:

|

||||

description: "Generate Dir Name"

|

||||

required: false

|

||||

default: "readmes/"

|

||||

push_branch:

|

||||

description: "Push Branch"

|

||||

required: false

|

||||

default: "pr@dev@translate_readme"

|

||||

prompt:

|

||||

description: "AI Translate Prompt"

|

||||

required: false

|

||||

default: ""

|

||||

|

||||

gpt_mode:

|

||||

description: "GPT Mode"

|

||||

required: false

|

||||

default: "gpt-4o-mini"

|

||||

|

||||

jobs:

|

||||

build:

|

||||

runs-on: ubuntu-latest

|

||||

steps:

|

||||

- name: Auto Translate

|

||||

uses: jumpserver-dev/action-translate-readme@main

|

||||

env:

|

||||

GITHUB_TOKEN: ${{ secrets.PRIVATE_TOKEN }}

|

||||

OPENAI_API_KEY: ${{ secrets.GPT_API_TOKEN }}

|

||||

GPT_MODE: ${{ github.event.inputs.gpt_mode }}

|

||||

SOURCE_README: ${{ github.event.inputs.source_readme }}

|

||||

TARGET_LANGUAGES: ${{ github.event.inputs.target_langs }}

|

||||

PUSH_BRANCH: ${{ github.event.inputs.push_branch }}

|

||||

GEN_DIR_PATH: ${{ github.event.inputs.gen_dir_path }}

|

||||

PROMPT: ${{ github.event.inputs.prompt }}

|

||||

6

.gitignore

vendored

@ -43,9 +43,3 @@ releashe

|

||||

data/*

|

||||

test.py

|

||||

.history/

|

||||

.test/

|

||||

*.mo

|

||||

apps.iml

|

||||

*.db

|

||||

*.mmdb

|

||||

*.ipdb

|

||||

|

||||

@ -1,4 +1,3 @@

|

||||

[settings]

|

||||

line_length=120

|

||||

known_first_party=common,users,assets,perms,authentication,jumpserver,notification,ops,orgs,rbac,settings,terminal,tickets

|

||||

|

||||

|

||||

@ -1,2 +0,0 @@

|

||||

[MESSAGES CONTROL]

|

||||

disable=missing-module-docstring,missing-class-docstring,missing-function-docstring,too-many-ancestors

|

||||

@ -1,10 +1,5 @@

|

||||

# Contributing

|

||||

|

||||

As a contributor, you should agree that:

|

||||

|

||||

- The producer can adjust the open-source agreement to be more strict or relaxed as deemed necessary.

|

||||

- Your contributed code may be used for commercial purposes, including but not limited to its cloud business operations.

|

||||

|

||||

## Create pull request

|

||||

PR are always welcome, even if they only contain small fixes like typos or a few lines of code. If there will be a significant effort, please document it as an issue and get a discussion going before starting to work on it.

|

||||

|

||||

|

||||

68

Dockerfile

@ -1,68 +0,0 @@

FROM jumpserver/core-base:20250427_062456 AS stage-build

ARG VERSION

WORKDIR /opt/jumpserver

ADD . .

RUN echo > /opt/jumpserver/config.yml \
    && \
    if [ -n "${VERSION}" ]; then \
        sed -i "s@VERSION = .*@VERSION = '${VERSION}'@g" apps/jumpserver/const.py; \
    fi

RUN set -ex \
    && export SECRET_KEY=$(head -c100 < /dev/urandom | base64 | tr -dc A-Za-z0-9 | head -c 48) \
    && . /opt/py3/bin/activate \
    && cd apps \
    && python manage.py compilemessages


FROM python:3.11-slim-bullseye
ENV LANG=en_US.UTF-8 \
    PATH=/opt/py3/bin:$PATH

ARG DEPENDENCIES=" \
        libldap2-dev \
        libx11-dev"

ARG TOOLS=" \
        cron \
        ca-certificates \
        default-libmysqlclient-dev \
        openssh-client \
        sshpass \
        bubblewrap"

ARG APT_MIRROR=http://deb.debian.org

RUN set -ex \
    && sed -i "s@http://.*.debian.org@${APT_MIRROR}@g" /etc/apt/sources.list \
    && ln -sf /usr/share/zoneinfo/Asia/Shanghai /etc/localtime \
    && apt-get update > /dev/null \
    && apt-get -y install --no-install-recommends ${DEPENDENCIES} \
    && apt-get -y install --no-install-recommends ${TOOLS} \
    && mkdir -p /root/.ssh/ \
    && echo "Host *\n\tStrictHostKeyChecking no\n\tUserKnownHostsFile /dev/null\n\tCiphers +aes128-cbc\n\tKexAlgorithms +diffie-hellman-group1-sha1\n\tHostKeyAlgorithms +ssh-rsa" > /root/.ssh/config \
    && echo "no" | dpkg-reconfigure dash \
    && apt-get clean all \
    && rm -rf /var/lib/apt/lists/* \
    && echo "0 3 * * * root find /tmp -type f -mtime +1 -size +1M -exec rm -f {} \; && date > /tmp/clean.log" > /etc/cron.d/cleanup_tmp \
    && chmod 0644 /etc/cron.d/cleanup_tmp

COPY --from=stage-build /opt /opt
COPY --from=stage-build /usr/local/bin /usr/local/bin
COPY --from=stage-build /opt/jumpserver/apps/libs/ansible/ansible.cfg /etc/ansible/

WORKDIR /opt/jumpserver

VOLUME /opt/jumpserver/data

ENTRYPOINT ["./entrypoint.sh"]

EXPOSE 8080

STOPSIGNAL SIGQUIT

CMD ["start", "all"]

@ -1,61 +0,0 @@

FROM python:3.11-slim-bullseye
ARG TARGETARCH
COPY --from=ghcr.io/astral-sh/uv:0.6.14 /uv /uvx /usr/local/bin/
# Install APT dependencies
ARG DEPENDENCIES=" \
        ca-certificates \
        wget \
        g++ \
        make \
        pkg-config \
        default-libmysqlclient-dev \
        freetds-dev \
        gettext \
        libkrb5-dev \
        libldap2-dev \
        libsasl2-dev"

ARG APT_MIRROR=http://deb.debian.org

RUN --mount=type=cache,target=/var/cache/apt,sharing=locked,id=core \
    --mount=type=cache,target=/var/lib/apt,sharing=locked,id=core \
    set -ex \
    && rm -f /etc/apt/apt.conf.d/docker-clean \
    && echo 'Binary::apt::APT::Keep-Downloaded-Packages "true";' > /etc/apt/apt.conf.d/keep-cache \
    && sed -i "s@http://.*.debian.org@${APT_MIRROR}@g" /etc/apt/sources.list \
    && apt-get update > /dev/null \
    && apt-get -y install --no-install-recommends ${DEPENDENCIES} \
    && echo "no" | dpkg-reconfigure dash

# Install bin tools
ARG CHECK_VERSION=v1.0.4
RUN set -ex \
    && wget https://github.com/jumpserver-dev/healthcheck/releases/download/${CHECK_VERSION}/check-${CHECK_VERSION}-linux-${TARGETARCH}.tar.gz \
    && tar -xf check-${CHECK_VERSION}-linux-${TARGETARCH}.tar.gz \
    && mv check /usr/local/bin/ \
    && chown root:root /usr/local/bin/check \
    && chmod 755 /usr/local/bin/check \
    && rm -f check-${CHECK_VERSION}-linux-${TARGETARCH}.tar.gz

# Install Python dependencies
WORKDIR /opt/jumpserver

ARG PIP_MIRROR=https://pypi.org/simple
ENV POETRY_PYPI_MIRROR_URL=${PIP_MIRROR}
ENV ANSIBLE_COLLECTIONS_PATHS=/opt/py3/lib/python3.11/site-packages/ansible_collections
ENV LANG=en_US.UTF-8 \
    PATH=/opt/py3/bin:$PATH

ENV UV_LINK_MODE=copy

RUN --mount=type=cache,target=/root/.cache \
    --mount=type=bind,source=pyproject.toml,target=pyproject.toml \
    --mount=type=bind,source=requirements/clean_site_packages.sh,target=clean_site_packages.sh \
    --mount=type=bind,source=requirements/collections.yml,target=collections.yml \
    --mount=type=bind,source=requirements/static_files.sh,target=utils/static_files.sh \
    set -ex \
    && uv venv \
    && uv pip install -i${PIP_MIRROR} -r pyproject.toml \
    && ln -sf $(pwd)/.venv /opt/py3 \
    && bash utils/static_files.sh \
    && bash clean_site_packages.sh

125

Dockerfile-ce

Normal file


@ -0,0 +1,125 @@

FROM python:3.11-slim-bullseye as stage-1
ARG TARGETARCH

ARG VERSION
ENV VERSION=$VERSION

WORKDIR /opt/jumpserver
ADD . .
RUN echo > /opt/jumpserver/config.yml \
    && cd utils && bash -ixeu build.sh

FROM python:3.11-slim-bullseye as stage-2
ARG TARGETARCH

ARG BUILD_DEPENDENCIES=" \
        g++ \
        make \
        pkg-config"

ARG DEPENDENCIES=" \
        freetds-dev \
        libpq-dev \
        libffi-dev \
        libjpeg-dev \
        libkrb5-dev \
        libldap2-dev \
        libsasl2-dev \
        libssl-dev \
        libxml2-dev \
        libxmlsec1-dev \
        libxmlsec1-openssl \
        freerdp2-dev \
        libaio-dev"

ARG TOOLS=" \
        ca-certificates \
        curl \
        default-libmysqlclient-dev \
        default-mysql-client \
        git \
        git-lfs \
        unzip \
        xz-utils \
        wget"

ARG APT_MIRROR=http://mirrors.ustc.edu.cn
RUN --mount=type=cache,target=/var/cache/apt,sharing=locked,id=core-apt \
    --mount=type=cache,target=/var/lib/apt,sharing=locked,id=core-apt \
    sed -i "s@http://.*.debian.org@${APT_MIRROR}@g" /etc/apt/sources.list \
    && rm -f /etc/apt/apt.conf.d/docker-clean \
    && ln -sf /usr/share/zoneinfo/Asia/Shanghai /etc/localtime \
    && apt-get update \
    && apt-get -y install --no-install-recommends ${BUILD_DEPENDENCIES} \
    && apt-get -y install --no-install-recommends ${DEPENDENCIES} \
    && apt-get -y install --no-install-recommends ${TOOLS} \
    && echo "no" | dpkg-reconfigure dash

WORKDIR /opt/jumpserver

ARG PIP_MIRROR=https://pypi.tuna.tsinghua.edu.cn/simple
RUN --mount=type=cache,target=/root/.cache \
    --mount=type=bind,source=poetry.lock,target=/opt/jumpserver/poetry.lock \
    --mount=type=bind,source=pyproject.toml,target=/opt/jumpserver/pyproject.toml \
    set -ex \
    && python3 -m venv /opt/py3 \
    && pip install poetry -i ${PIP_MIRROR} \
    && poetry config virtualenvs.create false \
    && . /opt/py3/bin/activate \
    && poetry install

FROM python:3.11-slim-bullseye
ARG TARGETARCH
ENV LANG=zh_CN.UTF-8 \
    PATH=/opt/py3/bin:$PATH

ARG DEPENDENCIES=" \
        libjpeg-dev \
        libx11-dev \
        freerdp2-dev \
        libxmlsec1-openssl"

ARG TOOLS=" \
        ca-certificates \
        curl \
        default-libmysqlclient-dev \
        default-mysql-client \
        iputils-ping \
        locales \
        nmap \
        openssh-client \
        patch \
        sshpass \
        telnet \
        vim \
        wget"

ARG APT_MIRROR=http://mirrors.ustc.edu.cn
RUN --mount=type=cache,target=/var/cache/apt,sharing=locked,id=core-apt \
    --mount=type=cache,target=/var/lib/apt,sharing=locked,id=core-apt \
    sed -i "s@http://.*.debian.org@${APT_MIRROR}@g" /etc/apt/sources.list \
    && rm -f /etc/apt/apt.conf.d/docker-clean \
    && ln -sf /usr/share/zoneinfo/Asia/Shanghai /etc/localtime \
    && apt-get update \
    && apt-get -y install --no-install-recommends ${DEPENDENCIES} \
    && apt-get -y install --no-install-recommends ${TOOLS} \
    && mkdir -p /root/.ssh/ \
    && echo "Host *\n\tStrictHostKeyChecking no\n\tUserKnownHostsFile /dev/null\n\tCiphers +aes128-cbc\n\tKexAlgorithms +diffie-hellman-group1-sha1\n\tHostKeyAlgorithms +ssh-rsa" > /root/.ssh/config \
    && echo "no" | dpkg-reconfigure dash \
    && echo "zh_CN.UTF-8" | dpkg-reconfigure locales \
    && sed -i "s@# export @export @g" ~/.bashrc \
    && sed -i "s@# alias @alias @g" ~/.bashrc

COPY --from=stage-2 /opt/py3 /opt/py3
COPY --from=stage-1 /opt/jumpserver/release/jumpserver /opt/jumpserver

WORKDIR /opt/jumpserver

ARG VERSION
ENV VERSION=$VERSION

VOLUME /opt/jumpserver/data

EXPOSE 8080

ENTRYPOINT ["./entrypoint.sh"]

@ -1,30 +1,5 @@

ARG VERSION=dev

FROM registry.fit2cloud.com/jumpserver/xpack:${VERSION} AS build-xpack
FROM jumpserver/core:${VERSION}-ce

COPY --from=build-xpack /opt/xpack /opt/jumpserver/apps/xpack

ARG TOOLS=" \
        g++ \
        curl \
        iputils-ping \
        netcat-openbsd \
        nmap \
        telnet \
        vim \
        wget"

RUN set -ex \
    && apt-get update \
    && apt-get -y install --no-install-recommends ${TOOLS} \
    && apt-get clean all \
    && rm -rf /var/lib/apt/lists/*

WORKDIR /opt/jumpserver

ARG PIP_MIRROR=https://pypi.org/simple

RUN set -ex \
    && uv pip install -i${PIP_MIRROR} --group xpack
ARG VERSION
FROM registry.fit2cloud.com/jumpserver/xpack:${VERSION} as build-xpack
FROM registry.fit2cloud.com/jumpserver/core-ce:${VERSION}

COPY --from=build-xpack /opt/xpack /opt/jumpserver/apps/xpack

204

README.md


@ -1,123 +1,125 @@

<div align="center">
  <a name="readme-top"></a>
  <a href="https://jumpserver.com" target="_blank"><img src="https://download.jumpserver.org/images/jumpserver-logo.svg" alt="JumpServer" width="300" /></a>

## An open-source PAM tool (Bastion Host)
<p align="center">
  <a href="https://jumpserver.org"><img src="https://download.jumpserver.org/images/jumpserver-logo.svg" alt="JumpServer" width="300" /></a>
</p>
<h3 align="center">The widely popular open-source bastion host</h3>

[![][license-shield]][license-link]
[![][docs-shield]][docs-link]
[![][deepwiki-shield]][deepwiki-link]
[![][discord-shield]][discord-link]
[![][docker-shield]][docker-link]
[![][github-release-shield]][github-release-link]
[![][github-stars-shield]][github-stars-link]

[English](/README.md) · [中文(简体)](/readmes/README.zh-hans.md) · [中文(繁體)](/readmes/README.zh-hant.md) · [日本語](/readmes/README.ja.md) · [Português (Brasil)](/readmes/README.pt-br.md) · [Español](/readmes/README.es.md) · [Русский](/readmes/README.ru.md)

</div>
<br/>

## What is JumpServer?

|

||||

|

||||

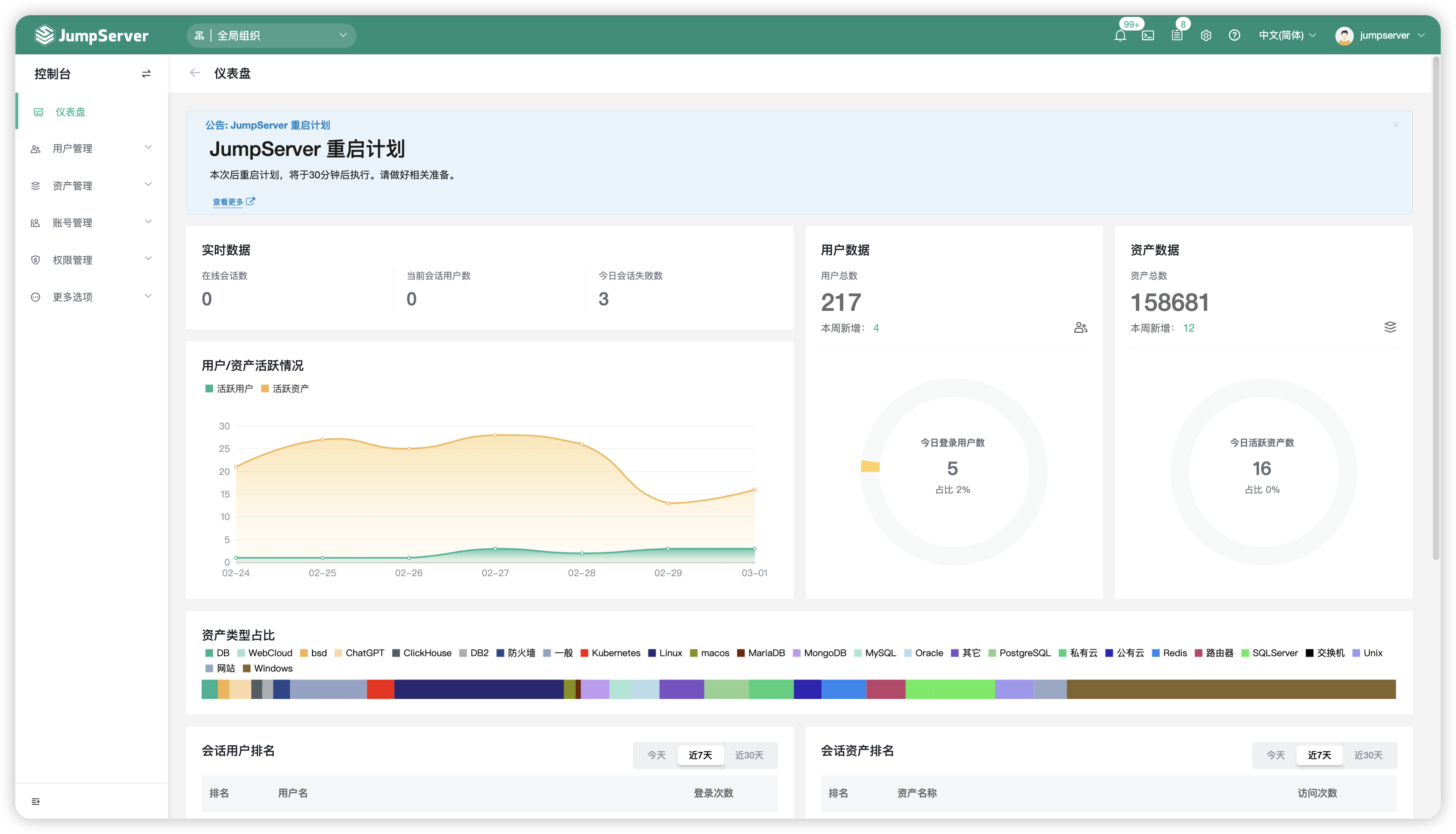

JumpServer is an open-source Privileged Access Management (PAM) tool that provides DevOps and IT teams with on-demand and secure access to SSH, RDP, Kubernetes, Database and RemoteApp endpoints through a web browser.

|

||||

<p align="center">

|

||||

<a href="https://www.gnu.org/licenses/gpl-3.0.html"><img src="https://img.shields.io/github/license/jumpserver/jumpserver" alt="License: GPLv3"></a>

|

||||

<a href="https://hub.docker.com/u/jumpserver"><img src="https://img.shields.io/docker/pulls/jumpserver/jms_all.svg" alt="Docker pulls"></a>

|

||||

<a href="https://github.com/jumpserver/jumpserver/releases/latest"><img src="https://img.shields.io/github/v/release/jumpserver/jumpserver" alt="Latest release"></a>

|

||||

<a href="https://github.com/jumpserver/jumpserver"><img src="https://img.shields.io/github/stars/jumpserver/jumpserver?color=%231890FF&style=flat-square" alt="Stars"></a>

|

||||

</p>

|

||||

|

||||

|

||||

<picture>

|

||||

<source media="(prefers-color-scheme: light)" srcset="https://github.com/user-attachments/assets/dd612f3d-c958-4f84-b164-f31b75454d7f">

|

||||

<source media="(prefers-color-scheme: dark)" srcset="https://github.com/user-attachments/assets/28676212-2bc4-4a9f-ae10-3be9320647e3">

|

||||

<img src="https://github.com/user-attachments/assets/dd612f3d-c958-4f84-b164-f31b75454d7f" alt="Theme-based Image">

|

||||

</picture>

|

||||

<p align="center">

|

||||

9 年时间,倾情投入,用心做好一款开源堡垒机。

|

||||

</p>

|

||||

|

||||

------------------------------

|

||||

JumpServer 是广受欢迎的开源堡垒机,是符合 4A 规范的专业运维安全审计系统。

|

||||

|

||||

## Quickstart

The JumpServer bastion host helps enterprises manage and log in to all types of assets in a more secure way, including:

Prepare a clean Linux server (64-bit, >= 4c8g)
- **SSH**: Linux / Unix / network devices, etc.;
- **Windows**: web-based connection / native RDP connection;
- **Databases**: MySQL / MariaDB / PostgreSQL / Oracle / SQLServer / ClickHouse, etc.;
- **NoSQL**: Redis / MongoDB, etc.;
- **GPT**: ChatGPT, etc.;
- **Cloud services**: Kubernetes / VMware vSphere, etc.;
- **Websites**: web admin consoles of all kinds of systems;
- **Applications**: connect to all kinds of applications through Remote App.

```sh
curl -sSL https://github.com/jumpserver/jumpserver/releases/latest/download/quick_start.sh | bash
```
## Product Features

Access JumpServer in your browser at `http://your-jumpserver-ip/`
- Username: `admin`
- Password: `ChangeMe`
- **Open source**: zero barrier to entry, quick to obtain and install online;
- **Plugin-free**: only a browser is needed, for an excellent Web Terminal experience;
- **Distributed**: supports distributed deployment and horizontal scaling, easily handling large-scale concurrent access;
- **Multi-cloud support**: one system manages assets across different clouds;
- **Multi-tenant**: one system used by multiple subsidiaries or departments at the same time;
- **Cloud storage**: audit recordings are stored in the cloud and never lost;

[JumpServer Quickstart](https://www.youtube.com/watch?v=UlGYRbKrpgY "JumpServer Quickstart")
## UI Showcase

## Screenshots

<table style="border-collapse: collapse; border: 1px solid black;">
  <tr>
    <td style="padding: 5px;background-color:#fff;"><img src="https://github.com/jumpserver/jumpserver/assets/32935519/99fabe5b-0475-4a53-9116-4c370a1426c4" alt="JumpServer Console" /></td>
    <td style="padding: 5px;background-color:#fff;"><img src="https://github.com/user-attachments/assets/7c1f81af-37e8-4f07-8ac9-182895e1062e" alt="JumpServer PAM" /></td>
  </tr>
  <tr>
    <td style="padding: 5px;background-color:#fff;"><img src="https://github.com/jumpserver/jumpserver/assets/32935519/a424d731-1c70-4108-a7d8-5bbf387dda9a" alt="JumpServer Audits" /></td>
    <td style="padding: 5px;background-color:#fff;"><img src="https://github.com/jumpserver/jumpserver/assets/32935519/393d2c27-a2d0-4dea-882d-00ed509e00c9" alt="JumpServer Workbench" /></td>
  </tr>
  <tr>
    <td style="padding: 5px;background-color:#fff;"><img src="https://github.com/user-attachments/assets/eaa41f66-8cc8-4f01-a001-0d258501f1c9" alt="JumpServer RBAC" /></td>
    <td style="padding: 5px;background-color:#fff;"><img src="https://github.com/jumpserver/jumpserver/assets/32935519/3a2611cd-8902-49b8-b82b-2a6dac851f3e" alt="JumpServer Settings" /></td>
  </tr>
  <tr>
    <td style="padding: 5px;background-color:#fff;"><img src="https://github.com/jumpserver/jumpserver/assets/32935519/1e236093-31f7-4563-8eb1-e36d865f1568" alt="JumpServer SSH" /></td>
    <td style="padding: 5px;background-color:#fff;"><img src="https://github.com/jumpserver/jumpserver/assets/32935519/69373a82-f7ab-41e8-b763-bbad2ba52167" alt="JumpServer RDP" /></td>
  </tr>
  <tr>
    <td style="padding: 5px;background-color:#fff;"><img src="https://github.com/jumpserver/jumpserver/assets/32935519/5bed98c6-cbe8-4073-9597-d53c69dc3957" alt="JumpServer K8s" /></td>
    <td style="padding: 5px;background-color:#fff;"><img src="https://github.com/jumpserver/jumpserver/assets/32935519/b80ad654-548f-42bc-ba3d-c1cfdf1b46d6" alt="JumpServer DB" /></td>
  </tr>
</table>

## Components

## Online Demo

JumpServer consists of multiple key components, which collectively form the functional framework of JumpServer, providing users with comprehensive capabilities for operations management and security control.
- Demo environment: <https://demo.jumpserver.org/>

| Project | Status | Description |
|---|---|---|
| [Lina](https://github.com/jumpserver/lina) | <a href="https://github.com/jumpserver/lina/releases"><img alt="Lina release" src="https://img.shields.io/github/release/jumpserver/lina.svg" /></a> | JumpServer Web UI |
| [Luna](https://github.com/jumpserver/luna) | <a href="https://github.com/jumpserver/luna/releases"><img alt="Luna release" src="https://img.shields.io/github/release/jumpserver/luna.svg" /></a> | JumpServer Web Terminal |
| [KoKo](https://github.com/jumpserver/koko) | <a href="https://github.com/jumpserver/koko/releases"><img alt="Koko release" src="https://img.shields.io/github/release/jumpserver/koko.svg" /></a> | JumpServer Character Protocol Connector |
| [Lion](https://github.com/jumpserver/lion) | <a href="https://github.com/jumpserver/lion/releases"><img alt="Lion release" src="https://img.shields.io/github/release/jumpserver/lion.svg" /></a> | JumpServer Graphical Protocol Connector |
| [Chen](https://github.com/jumpserver/chen) | <a href="https://github.com/jumpserver/chen/releases"><img alt="Chen release" src="https://img.shields.io/github/release/jumpserver/chen.svg" /></a> | JumpServer Web DB |
| [Tinker](https://github.com/jumpserver/tinker) | <img alt="Tinker" src="https://img.shields.io/badge/release-private-red" /> | JumpServer Remote Application Connector (Windows) |
| [Panda](https://github.com/jumpserver/Panda) | <img alt="Panda" src="https://img.shields.io/badge/release-private-red" /> | JumpServer EE Remote Application Connector (Linux) |
| [Razor](https://github.com/jumpserver/razor) | <img alt="Chen" src="https://img.shields.io/badge/release-private-red" /> | JumpServer EE RDP Proxy Connector |
| [Magnus](https://github.com/jumpserver/magnus) | <img alt="Magnus" src="https://img.shields.io/badge/release-private-red" /> | JumpServer EE Database Proxy Connector |
| [Nec](https://github.com/jumpserver/nec) | <img alt="Nec" src="https://img.shields.io/badge/release-private-red" /> | JumpServer EE VNC Proxy Connector |
| [Facelive](https://github.com/jumpserver/facelive) | <img alt="Facelive" src="https://img.shields.io/badge/release-private-red" /> | JumpServer EE Facial Recognition |

| :warning: Note |
|:-----------------------------|
| This environment is for trial purposes only; its data is cleaned up and reset periodically! |
| Please do not change the passwords of the demo environment users! |
| Please do not add production addresses, usernames, passwords, or other sensitive information to this environment! |

## Getting Started

## Contributing
- [Quick Start](https://docs.jumpserver.org/zh/v3/quick_start/)
- [Product Documentation](https://docs.jumpserver.org)
- [Online Learning](https://edu.fit2cloud.com/page/2635362)
- [Knowledge Base](https://kb.fit2cloud.com/categories/jumpserver)

You are welcome to submit PRs. Please refer to [CONTRIBUTING.md][contributing-link] for guidelines.
## Case Studies

## License
- [Tencent Music Entertainment Group: a JumpServer-based secure operations audit solution](https://blog.fit2cloud.com/?p=a04cdf0d-6704-4d18-9b40-9180baecd0e2)
- [Tencent overseas games: building game security operations capabilities on JumpServer](https://blog.fit2cloud.com/?p=3704)
- [Wanhua Chemical: managing globally distributed IT assets with JumpServer and integrating with a cloud management platform](https://blog.fit2cloud.com/?p=3504)
- [Snow Beer: experience using the JumpServer bastion host](https://blog.fit2cloud.com/?p=3412)
- [SF Technology: the JumpServer bastion host safeguards secure operations for SF Technology's ultra-large-scale assets](https://blog.fit2cloud.com/?p=1147)
- [Moonton: managing distributed assets across multiple projects with JumpServer](https://blog.fit2cloud.com/?p=3213)
- [Ctrip: JumpServer bastion host deployment and operations in practice](https://blog.fit2cloud.com/?p=851)
- [Dazhihui: the JumpServer bastion host makes Dazhihui's hybrid IT operations smarter](https://blog.fit2cloud.com/?p=882)
- [Xiaohongshu: migrating large-scale assets across JumpServer bastion host versions](https://blog.fit2cloud.com/?p=516)
- [CMGE: the JumpServer bastion host helps CMGE improve secure operations in a multi-cloud environment](https://blog.fit2cloud.com/?p=732)
- [ZTO Express: JumpServer host security operations in practice](https://blog.fit2cloud.com/?p=708)
- [Oriental Pearl: efficiently managing heterogeneous, distributed cloud assets with JumpServer](https://blog.fit2cloud.com/?p=687)
- [Jiangsu Rural Credit: the JumpServer bastion host supports secure operations for the industry cloud](https://blog.fit2cloud.com/?p=666)

Copyright (c) 2014-2025 FIT2CLOUD, All rights reserved.
## Community

Licensed under The GNU General Public License version 3 (GPLv3) (the "License"); you may not use this file except in compliance with the License. You may obtain a copy of the License at

If you have any questions or suggestions while using JumpServer, feel free to submit a [GitHub Issue](https://github.com/jumpserver/jumpserver/issues/new/choose).

You can also join the discussion on our [community forum](https://bbs.fit2cloud.com/c/js/5).

### Contributing

PRs are welcome. See [CONTRIBUTING.md](https://github.com/jumpserver/jumpserver/blob/dev/CONTRIBUTING.md).

## Component Projects

| Project | Status | Description |
|---|---|---|
| [Lina](https://github.com/jumpserver/lina) | <a href="https://github.com/jumpserver/lina/releases"><img alt="Lina release" src="https://img.shields.io/github/release/jumpserver/lina.svg" /></a> | JumpServer Web UI project |
| [Luna](https://github.com/jumpserver/luna) | <a href="https://github.com/jumpserver/luna/releases"><img alt="Luna release" src="https://img.shields.io/github/release/jumpserver/luna.svg" /></a> | JumpServer Web Terminal project |
| [KoKo](https://github.com/jumpserver/koko) | <a href="https://github.com/jumpserver/koko/releases"><img alt="Koko release" src="https://img.shields.io/github/release/jumpserver/koko.svg" /></a> | JumpServer character protocol connector project |
| [Lion](https://github.com/jumpserver/lion-release) | <a href="https://github.com/jumpserver/lion-release/releases"><img alt="Lion release" src="https://img.shields.io/github/release/jumpserver/lion-release.svg" /></a> | JumpServer graphical protocol connector project, which depends on [Apache Guacamole](https://guacamole.apache.org/) |
| [Razor](https://github.com/jumpserver/razor) | <img alt="Chen" src="https://img.shields.io/badge/release-私有发布-red" /> | JumpServer RDP proxy connector project |
| [Tinker](https://github.com/jumpserver/tinker) | <img alt="Tinker" src="https://img.shields.io/badge/release-私有发布-red" /> | JumpServer remote application connector project (Windows) |
| [Panda](https://github.com/jumpserver/Panda) | <img alt="Panda" src="https://img.shields.io/badge/release-私有发布-red" /> | JumpServer remote application connector project (Linux) |
| [Magnus](https://github.com/jumpserver/magnus-release) | <a href="https://github.com/jumpserver/magnus-release/releases"><img alt="Magnus release" src="https://img.shields.io/github/release/jumpserver/magnus-release.svg" /></a> | JumpServer database proxy connector project |
| [Chen](https://github.com/jumpserver/chen-release) | <a href="https://github.com/jumpserver/chen-release/releases"><img alt="Chen release" src="https://img.shields.io/github/release/jumpserver/chen-release.svg" /></a> | JumpServer Web DB project, replacing the former OmniDB |
| [Kael](https://github.com/jumpserver/kael) | <a href="https://github.com/jumpserver/kael/releases"><img alt="Kael release" src="https://img.shields.io/github/release/jumpserver/kael.svg" /></a> | JumpServer component project for connecting to GPT assets |
| [Wisp](https://github.com/jumpserver/wisp) | <a href="https://github.com/jumpserver/wisp/releases"><img alt="Magnus release" src="https://img.shields.io/github/release/jumpserver/wisp.svg" /></a> | JumpServer component project for communication between each system's terminal components and the Core API |
| [Clients](https://github.com/jumpserver/clients) | <a href="https://github.com/jumpserver/clients/releases"><img alt="Clients release" src="https://img.shields.io/github/release/jumpserver/clients.svg" /></a> | JumpServer client project |
| [Installer](https://github.com/jumpserver/installer) | <a href="https://github.com/jumpserver/installer/releases"><img alt="Installer release" src="https://img.shields.io/github/release/jumpserver/installer.svg" /></a> | JumpServer installer package project |

## Security Notes

JumpServer is a security product. Please follow the [Basic Security Recommendations](https://docs.jumpserver.org/zh/master/install/install_security/)
when installing and deploying it. If you find a security-related issue, please contact us directly:

- Email: support@fit2cloud.com
- Phone: 400-052-0755

## License & Copyright

|