Zep now has the ability to search over chat history summaries. This PR adds support for doing so. More here: https://blog.getzep.com/zep-v0-17/

@baskaryan @eyurtsev

Fixed a typo



---------

Co-authored-by: Matvey Arye <mat@timescale.com>

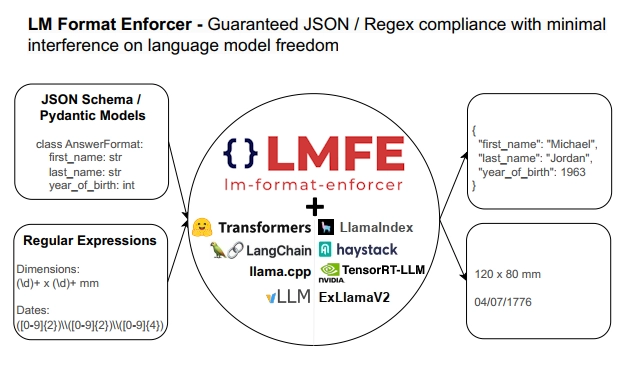

## Description

This PR adds support for [lm-format-enforcer](https://github.com/noamgat/lm-format-enforcer) to LangChain.

The library is similar to jsonformer / RELLM, which are already supported in LangChain, but has several advantages:

- Batching and beam search support
- More complete JSON Schema support
- The LLM has control over whitespace, improving quality
- Better runtime performance, since the LLM's generate() function is called only once per generate() call

The integration is loosely based on the jsonformer integration in terms of project structure.
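The core idea behind this kind of constrained decoding can be illustrated with a toy, self-contained sketch. Assumptions: the vocabulary, the scorer, and the helper names are hypothetical, and the constraint is a fixed list of allowed strings rather than a JSON Schema; as we understand it, the real library drives a character-level parser from the schema. What the sketch shows is the mechanism: at every step, tokens that cannot lead to a valid output are masked out, so a single decoding pass yields valid output. Because the toy targets are exact strings, it does not model the whitespace freedom mentioned above.

```python
from typing import Callable, List


def allowed_next_tokens(prefix: str, vocab: List[str], targets: List[str]) -> List[str]:
    """Return the tokens that keep `prefix + token` a prefix of some allowed output."""
    return [tok for tok in vocab if any(t.startswith(prefix + tok) for t in targets)]


def constrained_greedy_decode(
    score: Callable[[str, str], float],
    vocab: List[str],
    targets: List[str],
    max_steps: int = 20,
) -> str:
    """Greedy decoding in which the model may only choose constraint-approved tokens."""
    out = ""
    for _ in range(max_steps):
        if out in targets:
            break  # a complete, valid output has been produced
        candidates = allowed_next_tokens(out, vocab, targets)
        if not candidates:
            break  # dead end: no token can extend the prefix validly
        # The "model" only ranks the allowed tokens; disallowed ones are masked out.
        out += max(candidates, key=lambda tok: score(out, tok))
    return out


vocab = ['{"', "answer", '": ', '"yes"', '"no"', "}", "hello", " "]
targets = ['{"answer": "yes"}', '{"answer": "no"}']


def toy_score(prefix: str, tok: str) -> float:
    # Hypothetical scorer: it "wants" to say hello, but prefers "no" among valid answers.
    return {"hello": 10.0, '"no"': 1.0}.get(tok, 0.0)


print(constrained_greedy_decode(toy_score, vocab, targets))  # -> {"answer": "no"}
```

Even though the scorer ranks `hello` highest, that token is never a candidate, so the output always matches one of the allowed strings.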

## Dependencies

No compile-time dependency was added, but if `lm-format-enforcer` is not installed, a runtime error is raised when the integration is used.

## Tests

Because the integration modifies the internal parameters of the underlying Hugging Face transformers LLM, it cannot be tested without loading a real LM, which requires internet access. So, similar to the jsonformer and RELLM integrations, testing is done via the notebook.

## Twitter Handle

[@noamgat](https://twitter.com/noamgat)

Looking forward to hearing feedback!

---------

Co-authored-by: Bagatur <baskaryan@gmail.com>

**Description:** The Textract PDF loader now generates linearized output, meaning it replicates the structure of the source document as closely as possible based on the features passed into the call (e.g. LAYOUT, FORMS, TABLES). With LAYOUT, reading order for multi-column documents and identification of lists and figures are supported; with TABLES, it also generates the table structure. FORMS will indicate "key: value" pairs with columns.

- **Issue:** Fixes #12068

- **Dependencies:** amazon-textract-textractor is added, which provides the linearization

- **Tag maintainer:** @3coins

---------

Co-authored-by: Bagatur <baskaryan@gmail.com>

Following this tutorial about using OpenAI Embeddings with FAISS:

https://python.langchain.com/docs/integrations/vectorstores/faiss

```python
from langchain.embeddings.openai import OpenAIEmbeddings
from langchain.text_splitter import CharacterTextSplitter
from langchain.vectorstores import FAISS
from langchain.document_loaders import TextLoader

loader = TextLoader("../../../extras/modules/state_of_the_union.txt")
documents = loader.load()
text_splitter = CharacterTextSplitter(chunk_size=1000, chunk_overlap=0)
docs = text_splitter.split_documents(documents)
embeddings = OpenAIEmbeddings()
```

This works fine:

```python
db = FAISS.from_documents(docs, embeddings)
query = "What did the president say about Ketanji Brown Jackson"
docs = db.similarity_search(query)
```

But the async version does not:

```python
db = await FAISS.afrom_documents(docs, embeddings)  # NotImplementedError
query = "What did the president say about Ketanji Brown Jackson"
# Under the hood this uses `await asyncio.get_event_loop().run_in_executor(...)`,
# so it calls OpenAIEmbeddings.embed_query instead of OpenAIEmbeddings.aembed_query.
docs = await db.asimilarity_search(query)
```

So this PR adds async/await support for FAISS.
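To make the difference concrete, here is a self-contained sketch contrasting the two async strategies described above. `FakeEmbeddings` is a hypothetical stand-in for `OpenAIEmbeddings`, and `executor_fallback` / `native_async` are illustrative names, not FAISS or LangChain functions. The first wraps the sync `embed_query` in a thread pool; the second awaits the embeddings' own `aembed_query`, which is what native async support makes possible.

```python
import asyncio
from typing import List


class FakeEmbeddings:
    """Hypothetical stand-in for an embeddings class with sync and async methods."""

    def __init__(self) -> None:
        self.calls: List[str] = []  # records which method actually ran

    def embed_query(self, text: str) -> List[float]:
        self.calls.append("embed_query")
        return [float(len(text)), 1.0]

    async def aembed_query(self, text: str) -> List[float]:
        self.calls.append("aembed_query")
        await asyncio.sleep(0)  # a real client would await an HTTP request here
        return [float(len(text)), 1.0]


async def executor_fallback(emb: FakeEmbeddings, query: str) -> List[float]:
    # Behaviour described above: run the *sync* method in the default thread pool.
    loop = asyncio.get_running_loop()
    return await loop.run_in_executor(None, emb.embed_query, query)


async def native_async(emb: FakeEmbeddings, query: str) -> List[float]:
    # Behaviour native async support enables: await the embeddings' own coroutine.
    return await emb.aembed_query(query)
```

With the executor fallback, each query occupies a worker thread while the sync call blocks; awaiting the native coroutine lets a single event loop interleave many embedding requests.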

---------

Co-authored-by: Eugene Yurtsev <eyurtsev@gmail.com>

- Description: Adds support for Activeloop's DeepMemory feature, which boosts recall by up to 25%. Also adds a Jupyter notebook showcasing the feature and makes the index params explicit.

- Twitter handle: We would really appreciate it if this could be announced on Twitter.

---------

Co-authored-by: adolkhan <adilkhan.sarsen@alumni.nu.edu.kz>

**Description**

This small change makes chunk_size a configurable parameter for loading documents into a Supabase database.
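A minimal sketch of what a configurable chunk_size means in practice: documents are written in batches of at most chunk_size rows per request. The helper name `batched` is hypothetical, and the Supabase call in the comment is an assumption about how a caller might use it, not the vector store's actual code.

```python
from typing import Iterator, List, Sequence, TypeVar

T = TypeVar("T")


def batched(items: Sequence[T], chunk_size: int) -> Iterator[List[T]]:
    """Yield consecutive slices of `items` containing at most `chunk_size` elements."""
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    for start in range(0, len(items), chunk_size):
        yield list(items[start:start + chunk_size])


# Assumed usage with supabase-py (one request per chunk):
# for rows in batched(rows_to_insert, chunk_size=500):
#     client.table("documents").upsert(rows).execute()
```

Smaller chunks keep each request under payload or timeout limits; larger chunks mean fewer round trips.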

**Issue**

https://github.com/langchain-ai/langchain/issues/11422

**Dependencies**

No changes

**Twitter**

@ j1philli


---------

Co-authored-by: Greg Richardson <greg.nmr@gmail.com>

**Description:**

This PR adds support for the [Pro version of Titan Takeoff Server](https://docs.titanml.co/docs/category/pro-features). Users of the Pro version will have to import the TitanTakeoffPro model, which is different from TitanTakeoff.

**Issue:**

Also minor fixes to docs for Titan Takeoff (Community version)

**Dependencies:**

No additional dependencies

**Twitter handle:** @becoming_blake

@baskaryan @hwchase17

This PR adds [E2B's](https://e2b.dev/) data analysis/code interpreter sandbox as a tool.

---------

Co-authored-by: Bagatur <baskaryan@gmail.com>

Co-authored-by: Jakub Novak <jakub@e2b.dev>

- Move Document AI provider to the Google provider page

- Change Vertex AI Matching Engine to Vector Search

- Change references from GCP to Google Cloud

- Add Gmail chat loader to Google provider page

- Change Serper page title to "Serper - Google Search API" since it is not a Google product.

- replace `requests` package with `langchain.requests`

- add `_acall` support

- add `_stream` and `_astream`

- freshen up the documentation a bit

- update vendor doc
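The `_acall`, `_stream`, and `_astream` items above follow a common pattern: the streaming methods yield chunks, and the non-streaming methods can be derived from them. The sketch below is a self-contained illustration with a hypothetical `ToyStreamingLLM`; in LangChain's `LLM` base class these hooks also take `stop`, `run_manager`, and keyword arguments, and the streaming ones yield `GenerationChunk` objects rather than plain strings.

```python
import asyncio
from typing import AsyncIterator, Iterator


class ToyStreamingLLM:
    """Hypothetical LLM wrapper that streams a canned response word by word."""

    def __init__(self, response: str) -> None:
        self.response = response

    def _stream(self, prompt: str) -> Iterator[str]:
        # Sync path: yield chunks as they "arrive" from the backend.
        for word in self.response.split(" "):
            yield word + " "

    async def _astream(self, prompt: str) -> AsyncIterator[str]:
        # Async path: same chunks, but the event loop can run other tasks in between.
        for word in self.response.split(" "):
            await asyncio.sleep(0)  # stands in for awaiting a network read
            yield word + " "

    def _call(self, prompt: str) -> str:
        # A non-streaming call built on top of the sync stream.
        return "".join(self._stream(prompt)).rstrip()

    async def _acall(self, prompt: str) -> str:
        # A non-streaming async call built on top of the async stream.
        return "".join([chunk async for chunk in self._astream(prompt)]).rstrip()
```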

**Description:** Allow injecting a boto3 client for cross-account access scenarios when using SagemakerEndpointEmbeddings, and update the documentation in the sample notebook accordingly.

**Issue:** SagemakerEndpointEmbeddings cross-account capability #10634, #10184
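For the cross-account scenario, one common approach is to assume an IAM role in the account that owns the endpoint via STS and build a `sagemaker-runtime` client from the temporary credentials. The sketch below assumes that setup; the helper names and session name are hypothetical, and passing the result to `SagemakerEndpointEmbeddings` (assumed here to accept it as `client=...`, per the injection this PR describes) is left to the caller. `boto3` is imported lazily so the pure mapping function works without AWS access.

```python
from typing import Any, Dict


def client_kwargs_from_assumed_role(credentials: Dict[str, Any], region_name: str) -> Dict[str, Any]:
    """Map the `Credentials` dict returned by STS AssumeRole onto boto3.client() kwargs."""
    return {
        "region_name": region_name,
        "aws_access_key_id": credentials["AccessKeyId"],
        "aws_secret_access_key": credentials["SecretAccessKey"],
        "aws_session_token": credentials["SessionToken"],
    }


def cross_account_runtime_client(
    role_arn: str, region_name: str, session_name: str = "sagemaker-embeddings"
) -> Any:
    """Assume a role in the endpoint's account and build a SageMaker runtime client."""
    import boto3  # lazy import: only needed when actually talking to AWS

    sts = boto3.client("sts")
    response = sts.assume_role(RoleArn=role_arn, RoleSessionName=session_name)
    return boto3.client(
        "sagemaker-runtime",
        **client_kwargs_from_assumed_role(response["Credentials"], region_name),
    )
```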

Dependencies: None

Tag maintainer:

Twitter handle: lethargicoder

Co-authored-by: Vikram(VS) <vssht@amazon.com>

Adding the Tavily Search API as a tool. I will be the maintainer; Twitter handle: assaf_elovic.

---------

Co-authored-by: Bagatur <baskaryan@gmail.com>

- **Description:**

- Replace Telegram with WhatsApp in whatsapp.ipynb

- Add `#` to format the Telegram title as a heading in telegram.ipynb

- **Issue:** None

- **Dependencies:** None

Added a notebook with examples of creating a retriever from the SingleStoreDB vector store and using it further.

Co-authored-by: Volodymyr Tkachuk <vtkachuk-ua@singlestore.com>

Updated the Elasticsearch self-query retriever to use the match clause for the LIKE operator instead of the non-analyzed fuzzy search clause.

Other small updates include:

- fixing the stack inference integration test, where the index's default pipeline didn't use the inference pipeline that had been created
- adding a user-agent to the old implementation to track usage
- improving the documentation for ElasticsearchStore filters
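The difference between the two clauses shows up in the query DSL each one produces. This is a hedged sketch: the function names are hypothetical, and the exact clause LangChain emits (for example, whether it adds `fuzziness`) may differ.

```python
from typing import Any, Dict, Optional


def like_as_fuzzy(field: str, value: str) -> Dict[str, Any]:
    # Term-level query: `value` is compared as-is, without running the field's analyzer.
    return {"fuzzy": {field: {"value": value}}}


def like_as_match(field: str, value: str, fuzziness: Optional[str] = None) -> Dict[str, Any]:
    # Full-text query: `value` is analyzed the same way as the indexed text.
    clause: Dict[str, Any] = {"query": value}
    if fuzziness is not None:
        clause["fuzziness"] = fuzziness  # e.g. "AUTO" to still tolerate typos
    return {"match": {field: clause}}
```

With a text field using the standard analyzer, "Star Wars" is indexed as the terms `star` and `wars`. The fuzzy clause compares against the single un-analyzed term "Star Wars" and will typically miss, while the match clause analyzes the query into the same terms the index holds.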

### Description:

This PR provides access to EAS LLM services by implementing the `PaiEasEndpoint` and `PaiEasChatEndpoint` classes in the `langchain.llms` and `langchain.chat_models` modules. With this PR, LangChain users can build a chain that calls a remote EAS LLM service and returns the LLM inference results.

### About EAS Service

EAS is an Alibaba Cloud product within the Machine Learning Platform for AI (PAI). EAS provides model inference deployment services. We built an LLM inference service on EAS using a general-purpose LLM Docker image, so end users can quickly set up remote LLM instances that load most Hugging Face LLM models and serve as the backend for most LLM apps.

### Dependencies

This PR doesn't introduce any new dependencies.

---------

Co-authored-by: 子洪 <gaoyihong.gyh@alibaba-inc.com>

The docs folder changed its structure, and the notebook example for SingleStoreDBChatMessageHistory was not copied to the new location due to a merge conflict. This PR adds the example in the correct place.

Co-authored-by: Volodymyr Tkachuk <vtkachuk-ua@singlestore.com>

- Update Zep Memory and Retriever docstrings

- Zep Memory Retriever: Add support for native MMR

- Add MMR example to existing ZepRetriever Notebook

@baskaryan
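Zep's MMR runs natively on the server, but the selection rule itself is easy to sketch: repeatedly pick the candidate d that maximizes lambda_mult * sim(query, d) - (1 - lambda_mult) * max sim(d, s) over the already-selected s. The code below is a generic, client-side illustration of that rule, not Zep's implementation; the `lambda_mult` name mirrors the parameter of LangChain's vector-store MMR search.

```python
import math
from typing import List, Sequence


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb) if na and nb else 0.0


def mmr(
    query: Sequence[float],
    candidates: List[Sequence[float]],
    k: int,
    lambda_mult: float = 0.5,
) -> List[int]:
    """Return indices of k candidates chosen by Maximal Marginal Relevance."""
    selected: List[int] = []
    remaining = list(range(len(candidates)))
    while remaining and len(selected) < k:

        def score(i: int) -> float:
            relevance = cosine(query, candidates[i])
            # Penalize similarity to anything already picked (0 for the first pick).
            redundancy = max((cosine(candidates[i], candidates[j]) for j in selected), default=0.0)
            return lambda_mult * relevance - (1 - lambda_mult) * redundancy

        best = max(remaining, key=score)
        selected.append(best)
        remaining.remove(best)
    return selected
```

With `lambda_mult=1.0` this degenerates to plain relevance ranking; lower values trade relevance for diversity, so a near-duplicate of an already-selected result gets skipped.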

- Description: Since the [BGE](https://github.com/FlagOpen/FlagEmbedding) model computes similarity with cosine similarity, set normalize_embeddings to True.

- Tag maintainer: @baskaryan

Co-authored-by: Erick Friis <erick@langchain.dev>
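Why normalization matters: cosine similarity is the dot product of unit-length vectors, so once embeddings are L2-normalized, a plain inner-product search ranks results by cosine similarity. A small self-contained check follows; the helper names are illustrative, and in sentence-transformers the same effect comes from `SentenceTransformer.encode(..., normalize_embeddings=True)`.

```python
import math
from typing import List, Sequence


def dot(a: Sequence[float], b: Sequence[float]) -> float:
    return sum(x * y for x, y in zip(a, b))


def l2_normalize(v: Sequence[float]) -> List[float]:
    norm = math.sqrt(dot(v, v))
    return [x / norm for x in v]


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    return dot(a, b) / math.sqrt(dot(a, a) * dot(b, b))


a, b = [3.0, 4.0], [4.0, 3.0]
# For unit-length vectors, the plain dot product equals cosine similarity.
print(cosine_similarity(a, b), dot(l2_normalize(a), l2_normalize(b)))
```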

Description: A large language model developed by Baichuan Intelligent Technology, https://www.baichuan-ai.com/home

Issue: None

Dependencies: None

Tag maintainer:

Twitter handle:

Fixed a typo: "asyncrhonized" to "asynchronized".


Hello Folks,

Alibaba Cloud OpenSearch has released a new version of its vector storage engine, with significantly improved performance compared to the previous version. The SDK has also changed, so the Alibaba Cloud OpenSearch vector store code needs adjustments to adapt.

This PR includes:

- Adaptation to the latest version of the Alibaba Cloud OpenSearch API
- More comprehensive unit tests
- Improved documentation

I have read your contributing guidelines, and the following checks pass:

- [x] make format

- [x] make lint

- [x] make coverage

- [x] make test

---------

Co-authored-by: zhaoshengbo <shengbo.zsb@alibaba-inc.com>

**Description:**

While working on the Docusaurus site loader (#9138), I noticed some outdated docs and tests for the Sitemap Loader.

**Issue:**

This is tangentially related to #6691 in reference to doc links. I plan to dig into a few of these issues next, when I find time.

Related to #10800

- Fixed errors in the docstring of GradientLLM / Gradient.ai LLM
- Renamed `model_id` to `model` and adapted all tests accordingly, to stay in sync with `GradientEmbeddings` and other LLMs
- Improved the tests so they check the headers in the sent request
- Made aiosession a private attribute in the docs, since `pip install gradientai` will replace aiosession in the future
- Added an example of how to fine-tune on the prompt template, as suggested in #10800

Hi,

After submitting https://github.com/langchain-ai/langchain/pull/11357, we realized that the notebooks had been moved to a new location, so this new PR updates the docs.

---------

Co-authored-by: everly-studio <127131037+everly-studio@users.noreply.github.com>

Reverts langchain-ai/langchain#11714

This has linting and formatting issues; plus, it was added to the chat models folder but doesn't subclass the chat model base class.

Motivation and Context

The Baichuan large language model is currently popular and performs efficiently. Given its wide market recognition, this PR adds the model to improve LangChain's ability to access large language models, making it easier for interested users to use it in their applications.

System Info

langchain: 0.0.295

python: 3.8.3

IDE: VS Code

Description

Make the following changes:

1. Add `baichuan_baichuaninc_endpoint.py` in `libs/langchain/langchain/chat_models`.

2. Modify the `__init__.py` file located at `libs/langchain/langchain/chat_models/__init__.py`:

   a. Add `from langchain.chat_models.baichuan_baichuaninc_endpoint import BaichuanChatEndpoint`

   b. Add "BaichuanChatEndpoint" to the file's `__all__` list

Your contribution

I am willing to help implement this feature and submit a PR, but I would appreciate guidance from the maintainers or community to ensure the changes are made correctly and in line with the project's standards and practices.

Instead of accessing `langchain.debug`, `langchain.verbose`, or `langchain.llm_cache`, please use the new getter/setter functions in `langchain.globals`:

- `langchain.globals.set_debug()` and `langchain.globals.get_debug()`
- `langchain.globals.set_verbose()` and `langchain.globals.get_verbose()`
- `langchain.globals.set_llm_cache()` and `langchain.globals.get_llm_cache()`

Using the old globals directly will now raise a warning.

---------

Co-authored-by: Harrison Chase <hw.chase.17@gmail.com>

**Description:**

Add a document loader for the RSpace Electronic Lab Notebook (www.researchspace.com), so that scientific documents and research notes can be easily pulled into LangChain pipelines.

**Issue**

This is a new contribution, rather than an issue fix.

**Dependencies:**

There are no new required dependencies.

In order to use the loader, clients will need to install the rspace_client SDK using `pip install rspace_client`.

---------

Co-authored-by: richarda23 <richard.c.adams@infinityworks.com>

Co-authored-by: Bagatur <baskaryan@gmail.com>

**Description:** Modify the Anyscale integration to work with [Anyscale Endpoint](https://docs.endpoints.anyscale.com/), adding support for invoke, async invoke, stream, and async stream.

---------

Co-authored-by: Bagatur <baskaryan@gmail.com>

- **Description:** Implements a retriever on top of DocAI Warehouse (to interact with existing enterprise documents): https://cloud.google.com/document-ai-warehouse?hl=en

- **Issue:** new functionality

@baskaryan

---------

Co-authored-by: Bagatur <baskaryan@gmail.com>

**Description:** This PR adds support for ChatOpenAI models in the Infino callback handler. In particular, it implements the `on_chat_model_start` callback, so that ChatOpenAI models are supported. With this change, the Infino callback handler can be used to track latency, errors, and prompt tokens for ChatOpenAI models too (in addition to its existing support for OpenAI and other non-chat models). The existing example notebook is updated to show how to use this integration as well.

cc @naman-modi @savannahar68

**Issue:** https://github.com/langchain-ai/langchain/issues/11607

**Dependencies:** None

**Tag maintainer:** @hwchase17

**Twitter handle:** [@vkakade](https://twitter.com/vkakade)

This PR adds support for the Azure Cosmos DB MongoDB vCore Vector Store:

- https://learn.microsoft.com/en-us/azure/cosmos-db/mongodb/vcore/
- https://learn.microsoft.com/en-us/azure/cosmos-db/mongodb/vcore/vector-search

Summary:

- **Description:** Added a vector store integration for Azure Cosmos DB MongoDB vCore Vector Store
- **Issue:** Fixes #11627
- **Dependencies:** pymongo
- **Tag maintainer:** @hwchase17
- **Twitter handle:** @izzyacademy
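For orientation, a vector query against Azure Cosmos DB for MongoDB vCore is expressed as an aggregation pipeline whose first stage is `$search` with a `cosmosSearch` operator, as described in the Microsoft vector search docs linked above. The sketch below only builds that pipeline; the default field name `vectorContent` and the helper name are assumptions, and running it would need a pymongo `Collection` (e.g. `collection.aggregate(pipeline)`). It is not the vector store's actual implementation.

```python
from typing import Any, Dict, List, Sequence


def cosmos_vector_search_pipeline(
    embedding: Sequence[float], k: int = 4, path: str = "vectorContent"
) -> List[Dict[str, Any]]:
    """Build a $search/cosmosSearch aggregation pipeline returning the top-k documents."""
    return [
        {
            "$search": {
                # `path` is the document field holding the stored vectors (assumed name).
                "cosmosSearch": {"vector": list(embedding), "path": path, "k": k},
                "returnStoredSource": True,
            }
        },
        # Keep the similarity score alongside the full stored document.
        {"$project": {"similarityScore": {"$meta": "searchScore"}, "document": "$$ROOT"}},
    ]
```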

---------

Co-authored-by: Israel Ekpo <israel.ekpo@gmail.com>

Co-authored-by: Israel Ekpo <44282278+izzyacademy@users.noreply.github.com>

Co-authored-by: Bagatur <baskaryan@gmail.com>

- **Description:** This is an update to the OctoAI LLM provider that adds support for Llama 2 endpoints hosted on OctoAI and updates the MPT-7B URL to the current one.

@baskaryan

Thanks!

---------

Co-authored-by: ML Wiz <bassemgeorgi@gmail.com>