
Colossal-AI Overview

Author: Shenggui Li, Siqi Mai

About Colossal-AI

As deep learning models keep growing in size, it is important to shift to a new training paradigm. Traditional training without parallelism or optimization is becoming a thing of the past, and new training methods are the key to making the training of large-scale models efficient and cost-effective.

Colossal-AI is designed as a unified system that provides the user with an integrated set of training techniques and utilities. It includes common training utilities such as mixed precision training and gradient accumulation. In addition, it provides an array of parallelism methods, including data, tensor and pipeline parallelism. We optimize tensor parallelism with different multi-dimensional distributed matrix-matrix multiplication algorithms. We also provide different pipeline parallelism methods that allow users to scale their models across nodes efficiently. More advanced features such as offloading are also covered in detail in this tutorial documentation.

General Usage

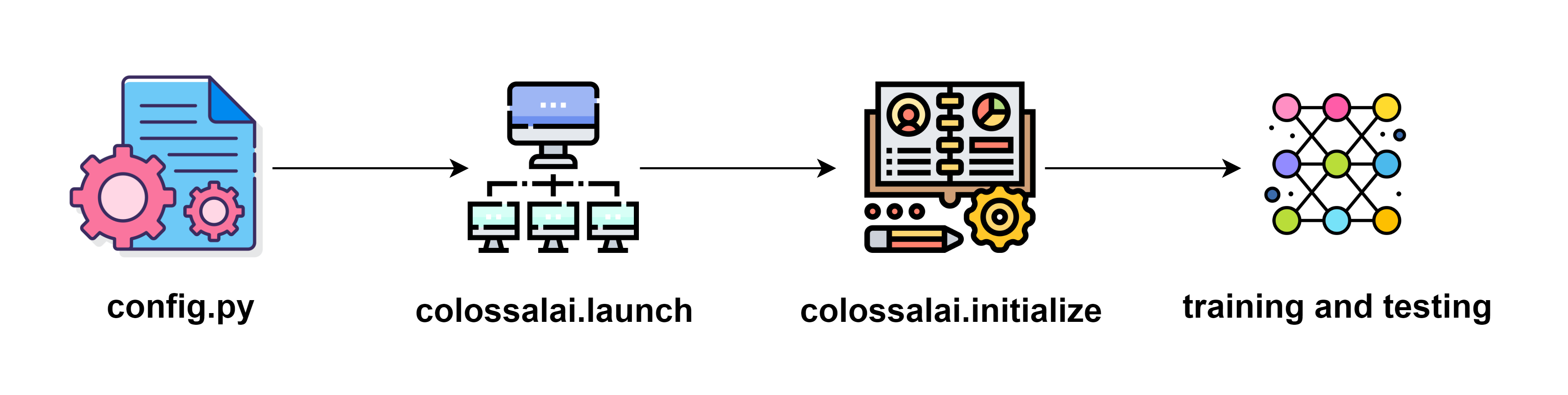

We aim to make Colossal-AI easy to use and non-intrusive to user code. The general workflow for using Colossal-AI is simple:

- Prepare a configuration file that specifies the features you want to use and your parameters.

- Initialize the distributed backend with `colossalai.launch`.

- Inject the training features into your training components (e.g. model, optimizer) with `colossalai.initialize`.

- Run training and testing.
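As a rough illustration of the first step, a configuration file might look like the sketch below. It assumes the Python-file configuration format of the Colossal-AI version this page describes, where top-level names such as `parallel` and `gradient_accumulation` switch features on. The file name `config.py`, the names `BATCH_SIZE` and `NUM_EPOCHS`, and all values are illustrative choices, not recommendations.

```python
# config.py: a hypothetical configuration file (name and values are illustrative)

# User-defined hyperparameters; these names are our own choice for this sketch
BATCH_SIZE = 128
NUM_EPOCHS = 10

# Accumulate gradients over 4 iterations before each optimizer update
gradient_accumulation = 4

# 2 pipeline stages, and 2D tensor parallelism across 4 devices;
# this layout needs at least 2 * 4 = 8 devices in total
parallel = dict(
    pipeline=2,
    tensor=dict(size=4, mode="2d"),
)
```

Features that are not mentioned in the file are simply left off, which is what keeps the approach non-intrusive: the training script itself does not change when a feature is enabled or disabled.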

We will cover the whole workflow in the basic tutorials section.
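Until then, the remaining steps of the workflow can be sketched roughly as follows. This is a minimal sketch, not the definitive usage: it assumes the `colossalai.launch` and `colossalai.initialize` signatures and the engine-style training loop of the library version this page describes, and later releases may differ. The wrapper function `run_training` and its parameters are hypothetical names introduced only for this example.

```python
def run_training(config_path, model, optimizer, criterion, train_dataloader,
                 rank, world_size, host, port, num_epochs=1):
    """Hypothetical helper that walks through the workflow steps in order."""
    # Imported lazily so this sketch can be read or imported without the
    # library being installed.
    import colossalai

    # Initialize the distributed backend using the configuration file.
    colossalai.launch(config=config_path, rank=rank, world_size=world_size,
                      host=host, port=port)

    # Inject the configured training features into the training components.
    # Assumption: initialize returns (engine, train_dataloader,
    # test_dataloader, lr_scheduler), as in the version described here.
    engine, train_dataloader, _, _ = colossalai.initialize(
        model=model,
        optimizer=optimizer,
        criterion=criterion,
        train_dataloader=train_dataloader,
    )

    # Run training through the returned engine.
    for _ in range(num_epochs):
        engine.train()
        for data, label in train_dataloader:
            # Assumes a CUDA device is available on every process.
            data, label = data.cuda(), label.cuda()
            engine.zero_grad()
            output = engine(data)
            loss = engine.criterion(output, label)
            engine.backward(loss)
            engine.step()
    return engine
```

Each process in the distributed job would call this function with its own `rank`; the model, optimizer, criterion and data loader are ordinary PyTorch objects built by the user beforehand.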

Future Development

The Colossal-AI system will be expanded to include more training techniques. These new developments may include, but are not limited to:

- optimization of distributed operations

- optimization of training on heterogeneous systems

- implementation of training utilities to reduce model size and speed up training while preserving model performance

- expansion of existing parallelism methods

We welcome ideas and contributions from the community. You can post your ideas for future development in our forum.