doc:chatdata and chatdb


@@ -2,7 +2,7 @@ ChatData & ChatDB

==================================

ChatData generates SQL from natural language and executes it. ChatDB lets you converse with the metadata in the database, including metadata about databases, tables, and fields.

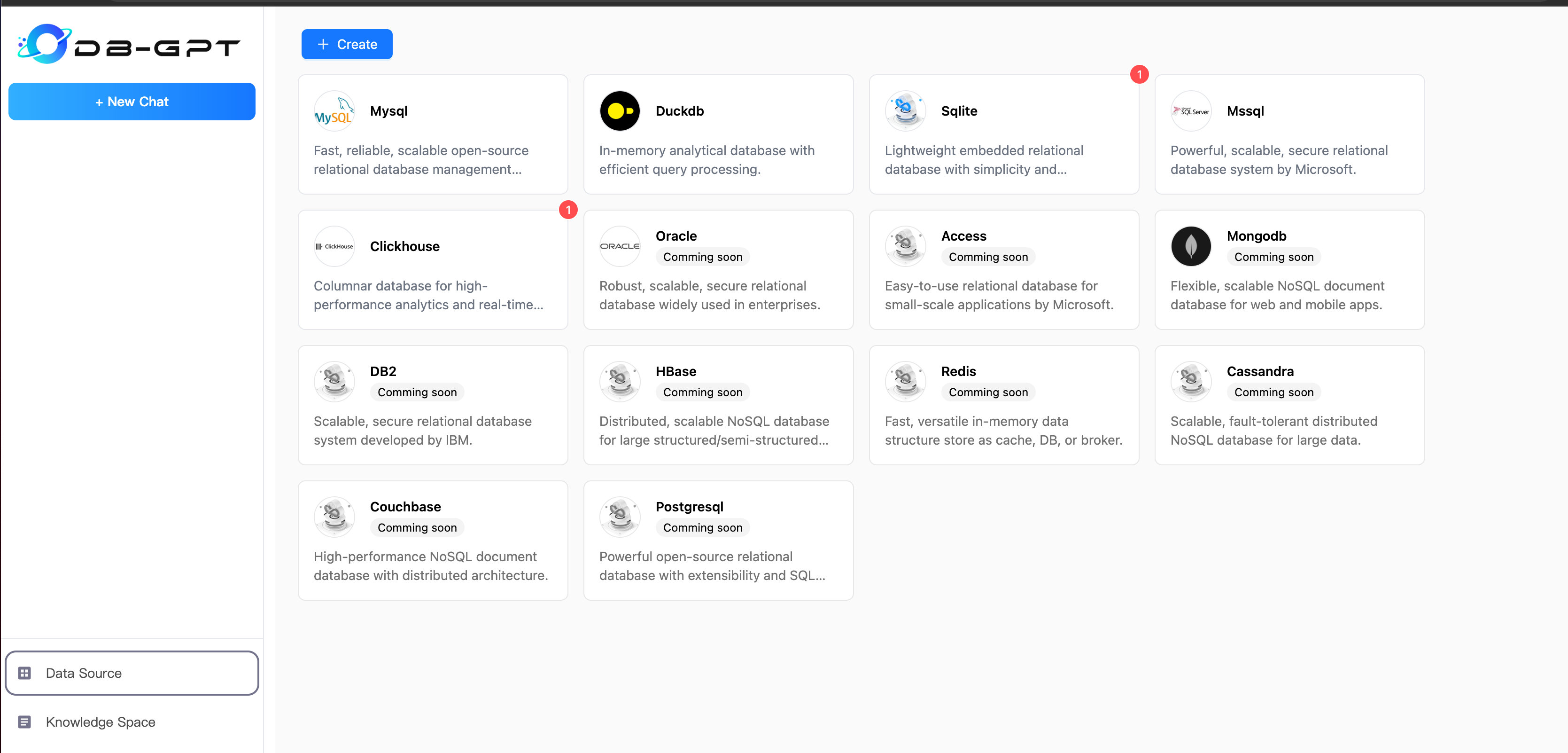
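ChatDB answers questions from database metadata. As an illustration only, and not DB-GPT's own code, the sketch below shows the kind of table and field metadata such a conversation can draw on, read from a SQLite datasource with Python's standard `sqlite3` module. The `describe_schema` helper and the sample tables are hypothetical.

```python
import sqlite3


def describe_schema(conn: sqlite3.Connection) -> dict[str, list[tuple[str, str]]]:
    """Return {table_name: [(column_name, declared_type), ...]} for every user table.

    Hypothetical helper: it only illustrates the table/field metadata a
    ChatDB-style conversation could be grounded in.
    """
    tables = [
        row[0]
        for row in conn.execute(
            "SELECT name FROM sqlite_master "
            "WHERE type = 'table' AND name NOT LIKE 'sqlite_%' ORDER BY name"
        )
    ]
    schema = {}
    for table in tables:
        # PRAGMA table_info yields (cid, name, type, notnull, dflt_value, pk) per column.
        columns = conn.execute(f'PRAGMA table_info("{table}")').fetchall()
        schema[table] = [(col[1], col[2]) for col in columns]
    return schema
```

For example, after `CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT, city TEXT)`, `describe_schema(conn)["users"]` lists the three columns with their declared types.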

### 1.Choose Datasource

@@ -17,15 +17,15 @@ you can execute sql script to generate data.

#### 1.1 Datasource management

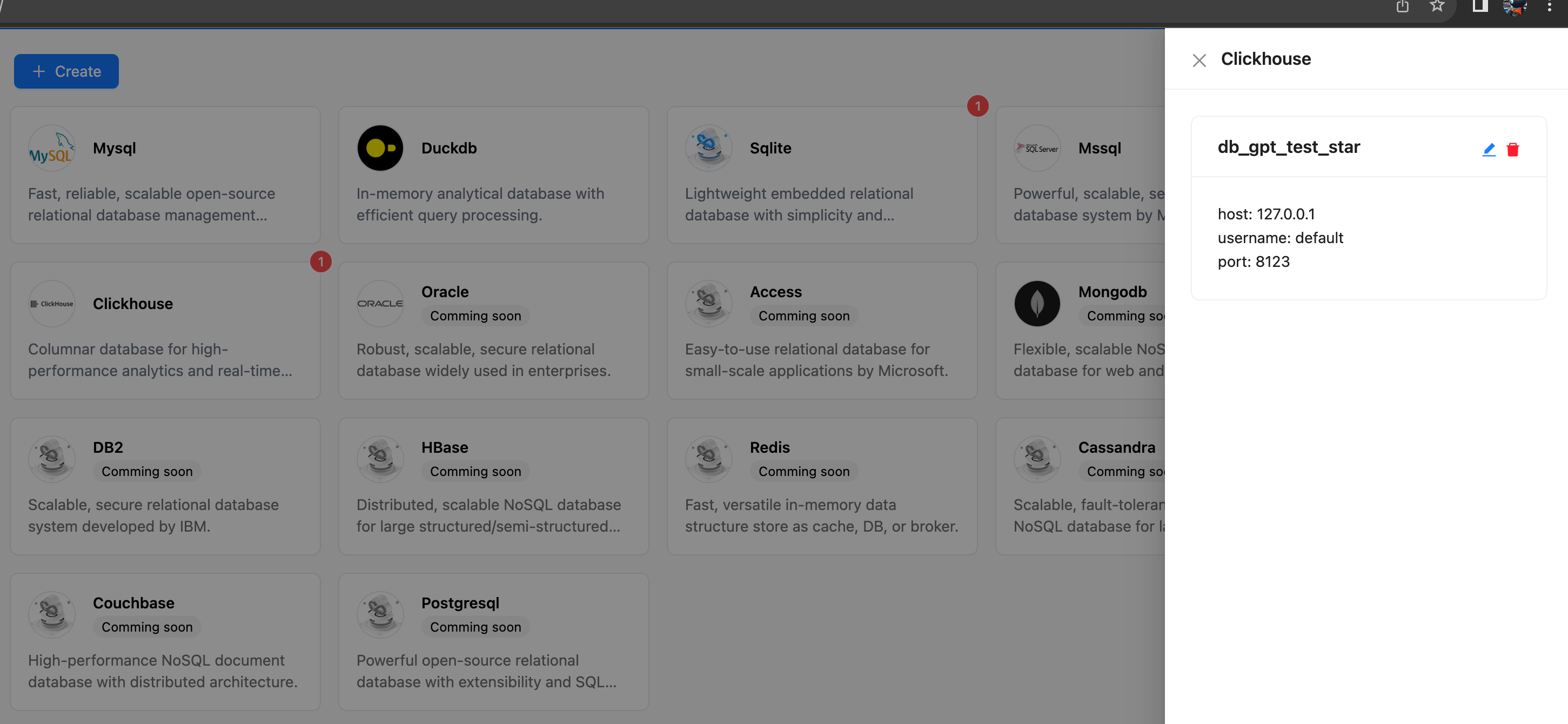

#### 1.2 Connection management

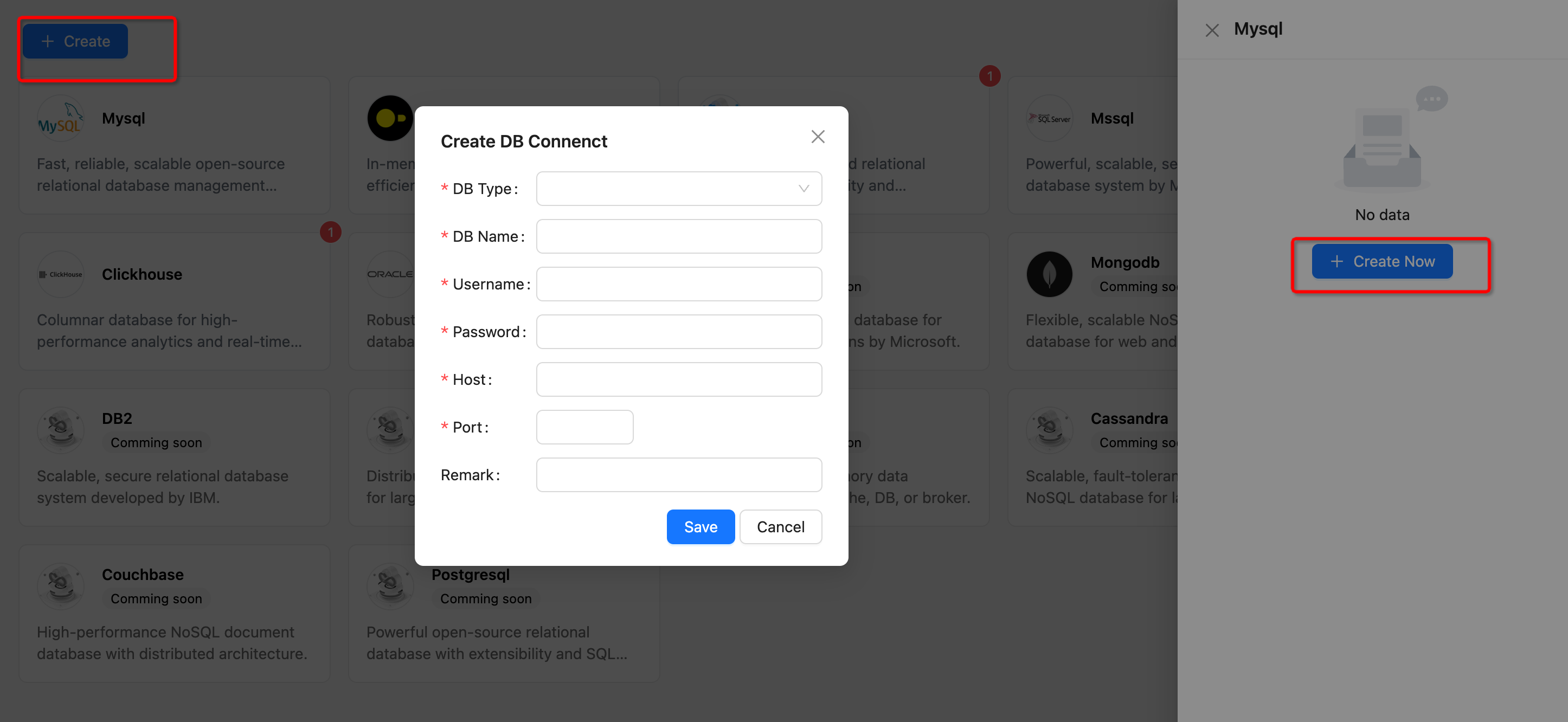

#### 1.3 Add Datasource

```{note}

DB-GPT now supports the following datasource types:
@@ -34,16 +34,22 @@ now DB-GPT support Datasource Type
* Sqlite
* DuckDB
* Clickhouse
* Mssql
```

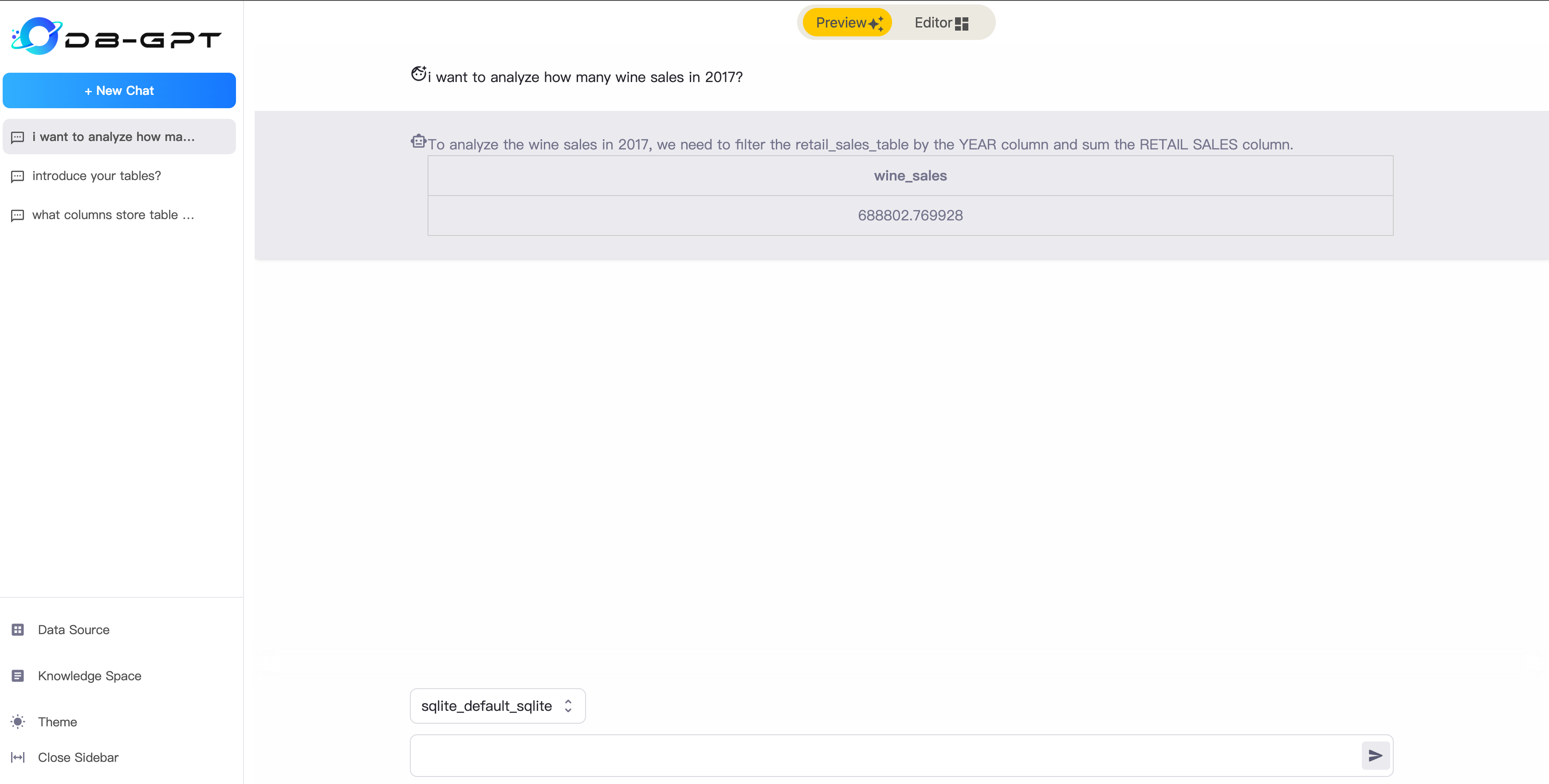
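Example data can be generated by executing a SQL script. For a Sqlite datasource, a minimal sketch using the standard `sqlite3` module's `executescript` follows. The seed script, the `users` table, and the `seed_database` helper are hypothetical placeholders, not the example scripts shipped under `docker/examples`.

```python
import sqlite3

# Hypothetical seed script; the example scripts in the repository may differ.
SEED_SQL = """
CREATE TABLE IF NOT EXISTS users (
    id   INTEGER PRIMARY KEY,
    name TEXT NOT NULL,
    city TEXT
);
INSERT INTO users (name, city) VALUES ('alice', 'Hangzhou');
INSERT INTO users (name, city) VALUES ('bob', 'Beijing');
"""


def seed_database(path: str, script: str = SEED_SQL) -> int:
    """Run a multi-statement SQL script against a SQLite database and return the user count."""
    conn = sqlite3.connect(path)  # creates the database file if it does not exist
    try:
        conn.executescript(script)  # runs every statement in the script
        return conn.execute("SELECT COUNT(*) FROM users").fetchone()[0]
    finally:
        conn.close()
```

Calling `seed_database("example.db")` produces a file you could then register as a Sqlite datasource. Because the script always inserts rows, running it twice appends duplicates.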

### 2.ChatData

##### Preview Mode

After the data source is set up, you can start conversing with the database. You can ask it to generate SQL for you, or ask questions about the database's metadata.

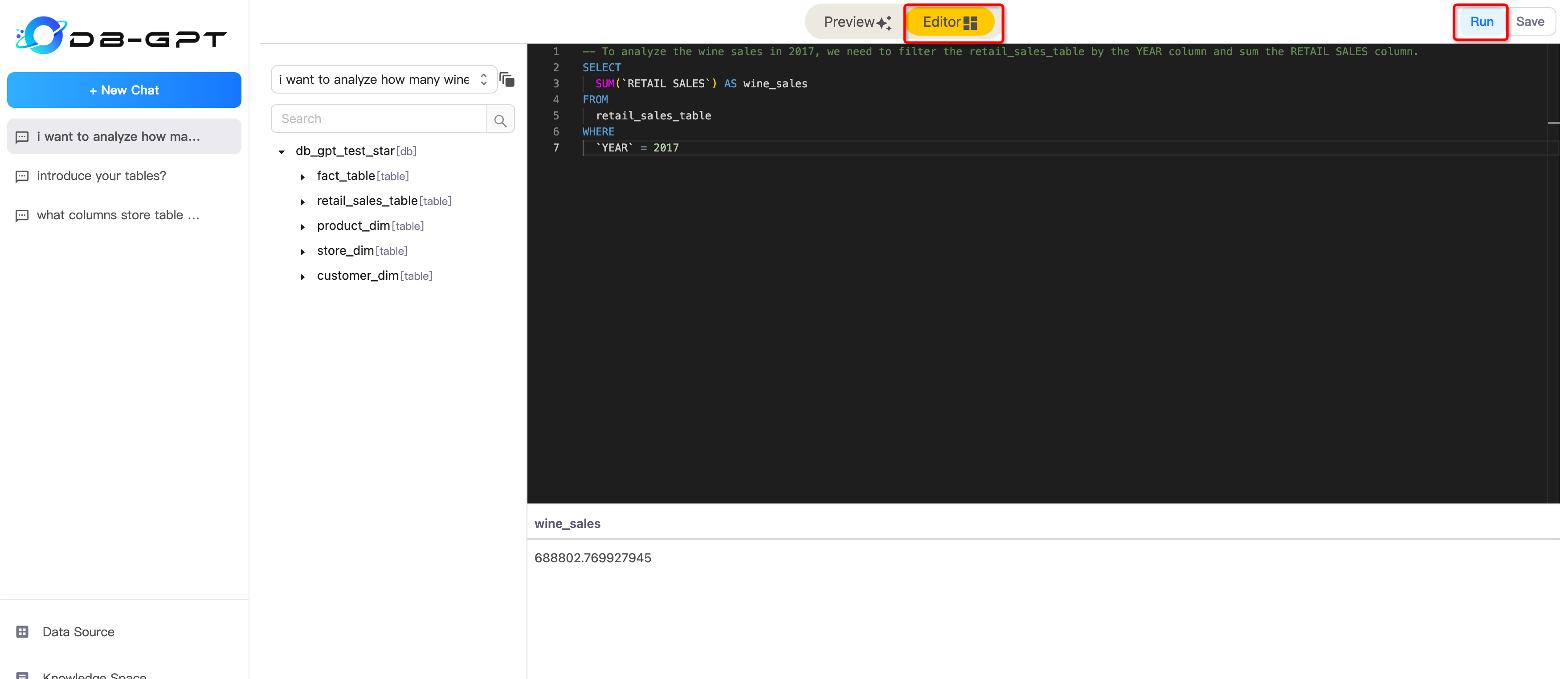
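ChatData turns a natural-language question into SQL and then executes it. The execution half can be pictured with a minimal sketch. The `run_generated_sql` helper is hypothetical, the SQL string stands in for model output, and the read-only check (accept one SELECT statement only) is an assumption added for illustration, not documented DB-GPT behavior.

```python
import sqlite3


def run_generated_sql(conn: sqlite3.Connection, sql: str) -> tuple[list[str], list[tuple]]:
    """Execute one model-generated SELECT and return (column_names, rows).

    Assumption for this sketch: only a single SELECT statement is accepted.
    The check is deliberately simple (it also rejects ';' inside literals).
    """
    statement = sql.strip().rstrip(";").strip()
    if not statement.lower().startswith("select") or ";" in statement:
        raise ValueError("only a single SELECT statement is allowed")
    cursor = conn.execute(statement)
    # cursor.description holds one 7-tuple per result column; item 0 is its name.
    columns = [desc[0] for desc in cursor.description]
    return columns, cursor.fetchall()
```

Returning column names alongside rows is what a result table in the UI would need; a real deployment would more likely rely on database-level permissions than on string checks.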

##### Editor Mode

In Editor Mode, you can edit your SQL and execute it.

### 3.ChatDB

@@ -2,7 +2,7 @@ KBQA

==================================

DB-GPT supports a knowledge question-answering module, which aims to create an intelligent expert in the field of databases and provide professional knowledge-based answers to database practitioners.

## KBQA abilities

@@ -32,7 +32,7 @@ execute DBGPT/assets/schema/knowledge_management.sql

#### 1.Create Knowledge Space

If you are using Knowledge Space for the first time, you need to create a Knowledge Space and set its name, owner, and description.

@@ -40,20 +40,20 @@ If you are using Knowledge Space for the first time, you need to create a Knowle

DB-GPT now supports multiple knowledge sources, including Text, WebUrl, and Document (PDF, Markdown, Word, PPT, HTML, and CSV). After a document is uploaded successfully, the backend automatically reads it, splits it into chunks, and imports the chunks into the vector database. Alternatively, you can synchronize the document manually. You can also click on details to view the document's chunks.
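The reading and chunking step is governed by the `chunk_size` and `chunk_overlap` space arguments. A minimal character-based sliding-window sketch of what these two numbers mean follows. DB-GPT's actual splitter may cut on separators or tokens instead, so treat `split_into_chunks` as a hypothetical illustration.

```python
def split_into_chunks(text: str, chunk_size: int, chunk_overlap: int) -> list[str]:
    """Split text into windows of at most chunk_size characters.

    Consecutive chunks share chunk_overlap characters, which keeps some context
    continuous across chunk boundaries (a sliding window).
    """
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    if not 0 <= chunk_overlap < chunk_size:
        raise ValueError("chunk_overlap must be in [0, chunk_size)")
    step = chunk_size - chunk_overlap  # how far each window advances
    chunks = []
    for start in range(0, len(text), step):
        chunks.append(text[start:start + chunk_size])
        if start + chunk_size >= len(text):
            break  # this window already reaches the end of the text
    return chunks
```

For instance, `split_into_chunks("abcdefghij", 4, 1)` yields `["abcd", "defg", "ghij"]`: each chunk repeats the last character of the previous one.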

##### 2.1 Choose Knowledge Type:

##### 2.2 Upload Document:

#### 3.Chat With Knowledge

#### 4.Adjust Space arguments

Each knowledge space supports argument customization, including the relevant arguments for vector retrieval and the arguments for knowledge question-answering prompts.
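The vector-retrieval arguments include `topk` (the top k vectors based on similarity score) and `recall_score` (a threshold score for retrieving similar vectors). A minimal sketch of how the two can combine follows. It assumes cosine similarity, and it assumes that chunks scoring below `recall_score` are discarded before the top k are kept. The vector store behind a knowledge space may score and filter differently, and `retrieve` is a hypothetical helper.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def retrieve(query: list[float], chunks: dict[str, list[float]],
             topk: int, recall_score: float) -> list[tuple[str, float]]:
    """Return up to topk (chunk_id, score) pairs whose score is at least recall_score."""
    scored = [(chunk_id, cosine_similarity(query, vec)) for chunk_id, vec in chunks.items()]
    kept = [pair for pair in scored if pair[1] >= recall_score]
    kept.sort(key=lambda pair: pair[1], reverse=True)  # most similar first
    return kept[:topk]
```

Raising `recall_score` can return fewer than `topk` chunks, which keeps weakly related text out of the prompt. Raising `topk` adds context at the cost of prompt length.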

##### 4.1 Embedding

Embedding Argument

```{tip} Embedding arguments

* topk: the top k vectors based on similarity score.
@@ -66,7 +66,7 @@ Embedding Argument

##### 4.2 Prompt

Prompt Argument

```{tip} Prompt arguments

* scene: a contextual parameter that defines the setting or environment in which the prompt is used.

@@ -8,7 +8,7 @@ msgid ""

msgstr ""
"Project-Id-Version: DB-GPT 👏👏 0.3.5\n"
"Report-Msgid-Bugs-To: \n"
"POT-Creation-Date: 2023-08-22 13:28+0800\n"
"POT-Creation-Date: 2023-08-29 20:30+0800\n"
"PO-Revision-Date: YEAR-MO-DA HO:MI+ZONE\n"
"Last-Translator: FULL NAME <EMAIL@ADDRESS>\n"
"Language: zh_CN\n"
@@ -20,17 +20,19 @@ msgstr ""

"Generated-By: Babel 2.12.1\n"

#: ../../getting_started/application/chatdb/chatdb.md:1
#: 45e6efede20d49d49f9e658bae7f4c08
#: 46745445059c40848770d89655d4452a
msgid "ChatData & ChatDB"
msgstr "ChatData & ChatDB"

#: ../../getting_started/application/chatdb/chatdb.md:3
#: 777a279edbf4491095d24922ebe873a7
#: 494c5e475fbb420eaab49739b696a2ce
#, fuzzy
msgid ""
"ChatData generates SQL from natural language and executes it. ChatDB "
"involves conversing with metadata from the Database, including metadata "
"about databases, tables, and fields."
"demonstration](https://github.com/eosphoros-ai/DB-"
"GPT/assets/13723926/d8bfeee9-e982-465e-a2b8-1164b673847e)"
msgstr ""
"ChatData 是会将自然语言生成SQL,并将其执行。ChatDB是与Database里面的元数据,包括库、表、字段的元数据进行对话."
@@ -39,118 +41,175 @@ msgstr ""

#: ../../getting_started/application/chatdb/chatdb.md:20
#: ../../getting_started/application/chatdb/chatdb.md:24
#: ../../getting_started/application/chatdb/chatdb.md:28
#: ../../getting_started/application/chatdb/chatdb.md:40
#: ../../getting_started/application/chatdb/chatdb.md:45
#: 310dbee2849740f6946efa1a062bb87c 8e07c72f882341da8c342a657e88f162
#: a016749ede034252b30557415226db56 c8513e01123443cbbb0e1afc26d46fff
#: dad795c828cf453ab6faf22497bc0465
#: ../../getting_started/application/chatdb/chatdb.md:42
#: ../../getting_started/application/chatdb/chatdb.md:47
#: ../../getting_started/application/chatdb/chatdb.md:53
#: 0b20219c11a14f9ebdfac5ebabcdcd8d 0f8e5d9baaec4602ae57b55b4db286cf
#: 3a2ef73b33c74d838b5c0ea41b83430d 9de27f6a12dd447eb9434c3b10dce97e
#: 9fc2b16790534cf9a79ac57d7b54ff27
msgid "db plugins demonstration"
msgstr "db plugins demonstration"

#: ../../getting_started/application/chatdb/chatdb.md:7
#: 5c9db27b472f4edfab8e0a0d6ea0f958
#: 8d4f856b1b734434a80d1a9cc43b1611
msgid "1.Choose Datasource"
msgstr "1.Choose Datasource"

#: ../../getting_started/application/chatdb/chatdb.md:9
#: 1a503a45cb134430b6619812a27cf371
#: 9218c985e6e24cebab8c098bc49119a3
msgid ""
"If you are using DB-GPT for the first time, you need to add a data source"
" and set the relevant connection information for the data source."
msgstr "如果你是第一次使用DB-GPT, 首先需要添加数据源,设置数据源的相关连接信息"

#: ../../getting_started/application/chatdb/chatdb.md:13
#: 70a1af47305b4adebb7d10cc87c63b5c
#: ec508b8298bf4657aca722875d34d858
msgid "there are some example data in DB-GPT-NEW/DB-GPT/docker/examples"
msgstr "在DB-GPT-NEW/DB-GPT/docker/examples有数据示例"

#: ../../getting_started/application/chatdb/chatdb.md:15
#: d53369cf3c714865b2cacf5a8e203848
#: c92428030b914053ad4c01ab9d78ccff
msgid "you can execute sql script to generate data."
msgstr "你可以通过执行sql脚本生成测试数据"

#: ../../getting_started/application/chatdb/chatdb.md:18
#: c031dd7c1f704cd8b5cb868222cfa318
#: fa5f5b1bf8994d349ba80b63be472c7f
msgid "1.1 Datasource management"
msgstr "1.1 Datasource management"

#: ../../getting_started/application/chatdb/chatdb.md:20
#: 7125e6532f0549cf9886a2e4d7de90ce
msgid ""
msgstr ""
#: 6bb044bd35b3469ebee61baf394ce613
msgid ""
""
msgstr ""

#: ../../getting_started/application/chatdb/chatdb.md:22
#: b8097bea350444378b331878aa863e0a
#: 23254a25f3464970a7b3e3d7dafa832a
msgid "1.2 Connection management"
msgstr "1.2 Connection管理"

#: ../../getting_started/application/chatdb/chatdb.md:24
#: 996b028a57db49099dd4560f713b9d57
msgid ""
msgstr ""
#: e244169193dc48fab1b692f7410aed0b
msgid ""
""
msgstr ""

#: ../../getting_started/application/chatdb/chatdb.md:26
#: 3ecdb59a329e44fdb69fde720c86de82
#: 32507323a3884f35991f60646b6077bb
msgid "1.3 Add Datasource"
msgstr "1.3 添加Datasource"

#: ../../getting_started/application/chatdb/chatdb.md:28
#: 9e1a14be9a75473ca73fb463d52eb688
#: 3665c149527b4fc3944549454ce81bcf
msgid ""
""
msgstr ""
""
""
msgstr ""

#: ../../getting_started/application/chatdb/chatdb.md:31
#: 9e7471c68e2849438cfb8838ab2936df
#: 23100fa4b1b642699f1faae80f78419b
msgid "now DB-GPT support Datasource Type"
msgstr "DB-GPT支持数据源类型"

#: ../../getting_started/application/chatdb/chatdb.md:32
#: cec1011e56d543219cddd38a461c8c96
#: ../../getting_started/application/chatdb/chatdb.md:33
#: 54f6ac1232294e72975f2ec8f92a19fd
msgid "Mysql"
msgstr "Mysql"

#: ../../getting_started/application/chatdb/chatdb.md:33
#: f2aa6b4365a44a729bc7effa472ac2d5
#: ../../getting_started/application/chatdb/chatdb.md:34
#: e2aff57c70fd4f6b81da9548f59e97b7
msgid "Sqlite"
msgstr "Sqlite"

#: ../../getting_started/application/chatdb/chatdb.md:34
#: 9695394c8509482ab86e96fead3d11ca
#: ../../getting_started/application/chatdb/chatdb.md:35
#: fc2a02bf5b004896a3c68b0f27f82c7b
msgid "DuckDB"
msgstr "DuckDB"

#: ../../getting_started/application/chatdb/chatdb.md:35
#: 92a13f0678764840842c140d244cebe9
#: ../../getting_started/application/chatdb/chatdb.md:36
#: 1c97c47b248741b290265c7d72875d7a
msgid "Clickhouse"
msgstr "Clickhouse"

#: ../../getting_started/application/chatdb/chatdb.md:38
#: 727244f8ae0b4cb7a8a7aa13c17e717e
#: ../../getting_started/application/chatdb/chatdb.md:37
#: 5ebd3d4f0ca94f50b5f536f673d68610
#, fuzzy
msgid "Mssql"
msgstr "Mssql"

#: ../../getting_started/application/chatdb/chatdb.md:40
#: dcdac0c0e6e24305ad601e5ccd82c877
msgid "2.ChatData"
msgstr "2.ChatData"

#: ../../getting_started/application/chatdb/chatdb.md:40
#: 165eb7ebefcd4bd0aabc5d664f3c86b1
#: ../../getting_started/application/chatdb/chatdb.md:41
#: c15bd38f6f754e0b8820a8afc0a8358b
msgid "Preview Mode"
msgstr "Preview Mode"

#: ../../getting_started/application/chatdb/chatdb.md:42
#: b43ffb3cf0734fc8b17ab3865856eda8
#, fuzzy
msgid ""
"After successfully setting up the data source, you can start conversing "
"with the database. You can ask it to generate SQL for you or inquire "
"about relevant information on the database's metadata. "
"demonstration](https://github.com/eosphoros-ai/DB-"
"GPT/assets/13723926/8acf6a42-e511-48ff-aabf-3d9037485c1c)"
msgstr ""
"设置数据源成功后就可以和数据库进行对话了。你可以让它帮你生成SQL,也可以和问它数据库元数据的相关信息。 "

#: ../../getting_started/application/chatdb/chatdb.md:44
#: 1562102c112248aab781dd58f7f328dd
#: ../../getting_started/application/chatdb/chatdb.md:46
#: 3f31a98fbf804b3495344ee95505e037
msgid "Editor Mode"
msgstr "Editor Mode"

#: ../../getting_started/application/chatdb/chatdb.md:47
#: e3c071be4daa40d0b03af97dbafe1713
msgid ""
"In Editor Mode, you can edit your sql and execute it. "
msgstr "编辑器模式下可以在线编辑sql进行调试. "

#: ../../getting_started/application/chatdb/chatdb.md:51
#: 6c694afb12dc4ef28bb58db80d15190c
msgid "3.ChatDB"
msgstr "3.ChatDB"

#: ../../getting_started/application/chatdb/chatdb.md:45
#: 8cbcf39784464bc1b62abf10f17658cc
msgid ""
msgstr ""
#: ../../getting_started/application/chatdb/chatdb.md:53
#: 631503240cf64cc8b80a9f5e43aae0dd
msgid ""
""
msgstr ""

#~ msgid ""
#~ msgstr ""

#~ msgid ""
#~ ""
#~ msgstr ""
#~ ""

#~ msgid ""
#~ ""
#~ msgstr ""
#~ ""

#~ msgid ""
#~ msgstr ""

@@ -8,7 +8,7 @@ msgid ""

msgstr ""
"Project-Id-Version: DB-GPT 👏👏 0.3.5\n"
"Report-Msgid-Bugs-To: \n"
"POT-Creation-Date: 2023-08-18 14:11+0800\n"
"POT-Creation-Date: 2023-08-29 20:30+0800\n"
"PO-Revision-Date: YEAR-MO-DA HO:MI+ZONE\n"
"Last-Translator: FULL NAME <EMAIL@ADDRESS>\n"
"Language: zh_CN\n"
@@ -20,12 +20,12 @@ msgstr ""

"Generated-By: Babel 2.12.1\n"

#: ../../getting_started/application/kbqa/kbqa.md:1
#: c342c2c426ca40458d0de471f729bc42
#: 1a06a61cf9d042d780ec19d9385594e9
msgid "KBQA"
msgstr ""

#: ../../getting_started/application/kbqa/kbqa.md:3
#: 5bd2a6513a1f4560a94b85e28345b030
#: 96d298acd0de48b3ae06134bc2bbc214
msgid ""
"DB-GPT supports a knowledge question-answering module, which aims to "
"create an intelligent expert in the field of databases and provide "
@@ -33,112 +33,116 @@ msgid ""

msgstr " DB-GPT支持知识问答模块,知识问答的初衷是打造DB领域的智能专家,为数据库从业人员解决专业的知识问题回答"

#: ../../getting_started/application/kbqa/kbqa.md:5
#: fb32ebbf11ec4f3f925f0dc157e5bb1e
msgid ""
msgstr ""
#: d1ea2c61f0c34ba294af01c4e7f5a57e
msgid ""
""
msgstr ""

#: ../../getting_started/application/kbqa/kbqa.md:5
#: e44acc4009154e3396ebc8c69922c4e8
#: ef5384441b5a40dc92613dc6e2574406
msgid "chat_knowledge"
msgstr "chat_knowledge"

#: ../../getting_started/application/kbqa/kbqa.md:7
#: ../../getting_started/application/kbqa/kbqa.md:10
#: 15d2742a567f4f2bb669086523691d11 d2228fc5179440729b594c9ac0626c2c
#: 4959ed3310614273bf1f092119bd556d d6592a16245b49fb89daee4864c1c448
msgid "KBQA abilities"
msgstr "KBQA现有能力"

#: ../../getting_started/application/kbqa/kbqa.md:11
#: f7d0eac20e6d46fc8090f6d8c8786306
#: b476c161c24f472395ec4c97bc769f4d
msgid "Knowledge Space."
msgstr "知识空间"

#: ../../getting_started/application/kbqa/kbqa.md:12
#: efde56662dbd4d7687fff81e2983e365
#: 7f85e2cda7a2424db23ba57c7dab9595
msgid "Multi Source Knowledge Source Embedding."
msgstr "多数据源Embedding"

#: ../../getting_started/application/kbqa/kbqa.md:13
#: 6788330bed0948b1804723540cbebe7b
#: 33f69a1bf9fb463c99cb5d5d20ec73d1
msgid "Embedding Argument Adjust"
msgstr "Embedding参数自定义"

#: ../../getting_started/application/kbqa/kbqa.md:14
#: f7b04ae3bc3949bda0c8d1153953cabf
#: dc51e39242f342ddbe58ffa174beb6ab
msgid "Chat Knowledge"
msgstr "知识问答"

#: ../../getting_started/application/kbqa/kbqa.md:15
#: 7c6365cf0026441d91e1d8ad7b97ff00
#: a6dad0b121c54b3ea20c70a5db70a620
msgid "Multi Vector DB"
msgstr "多向量数据库管理"

#: ../../getting_started/application/kbqa/kbqa.md:19
#: e03c3631efc94e339fae6493b0866c44
#: 674b836c5b724239bbddd82bad862277
msgid ""
"If your DB type is Sqlite, there is nothing to do to build KBQA service "
"database schema."
msgstr ""

#: ../../getting_started/application/kbqa/kbqa.md:21
#: 670b9666927f4662872b80e648b13670
#: ebeabfa4e0b4449eae8c3415a34c6c65
msgid ""
"If your DB type is Mysql or other DBTYPE, you will build kbqa service "
"database schema."
msgstr ""

#: ../../getting_started/application/kbqa/kbqa.md:23
#: f4586b306b3542b4afaf431c0f215e98
#: 71163cedcdf64a5aac842bae688f4e23
msgid "Mysql"
msgstr ""

#: ../../getting_started/application/kbqa/kbqa.md:24
#: 6c8ef148ec0f429ebbde6a8b6c1686f0
#: da70cf9689db4434b95285c2b3610181
msgid ""
"$ mysql -h127.0.0.1 -uroot -paa12345678 < "
"./assets/schema/knowledge_management.sql"
msgstr ""

#: ../../getting_started/application/kbqa/kbqa.md:26
#: 0ac3c50d793d4b9db9a8e2df72c46ec8
#: 3b4ddf0ec10b4851abb9dffc23d1a0ee
msgid "or"
msgstr ""

#: ../../getting_started/application/kbqa/kbqa.md:28
#: 3572f74a11a1408eb1537f91fc2492f6
#: d100bbdc602c4ac79ffe90c867727983
msgid "execute DBGPT/assets/schema/knowledge_management.sql"
msgstr ""

#: ../../getting_started/application/kbqa/kbqa.md:31
#: 5ed3fd5cd4b547d5b01be4780ec11886
#: d55e49f983e940108df5b91a9fb655b8
msgid "Steps to KBQA In DB-GPT"
msgstr "怎样一步一步使用KBQA"

#: ../../getting_started/application/kbqa/kbqa.md:33
#: 5d882ed64d4d49daa2e582f67aad6f88
#: 3c7b3d6af58b4dca803a1bb31601067e
msgid "1.Create Knowledge Space"
msgstr "1.首先创建知识空间"

#: ../../getting_started/application/kbqa/kbqa.md:34
#: bfdb91ff12c04094964d9f8a0c9bbfac
#: baa2fb6ab666407cbfc1c89021259930
#, fuzzy
msgid ""
"If you are using Knowledge Space for the first time, you need to create a"
" Knowledge Space and set your name, owner, description. "
""
""
msgstr "如果你是第一次使用,先创建知识空间,指定名字,拥有者和描述信息"

#: ../../getting_started/application/kbqa/kbqa.md:34
#: ddbcb1112d5942db987a470bb935e1e4
#: 48890c22982840eabe3e39b80d26fb90
msgid "create_space"
msgstr "create_space"

#: ../../getting_started/application/kbqa/kbqa.md:39
#: da62c5b0796c47f0a5e482e79e70e352
#: 2d271dabf8d0492e921795d68a764e03
msgid "2.Create Knowledge Document"
msgstr "2.上传知识"

#: ../../getting_started/application/kbqa/kbqa.md:40
#: 89e5377735f24f1d854f85ca4a387063
#: 5ed95d36b0c147bebde38f4a6f55e5a3
msgid ""
"DB-GPT now support Multi Knowledge Source, including Text, WebUrl, and "
"Document(PDF, Markdown, Word, PPT, HTML and CSV). After successfully "
@@ -153,55 +157,62 @@ msgstr ""

"CSV)。上传文档成功后后台会自动将文档内容进行读取,切片,然后导入到向量数据库中,当然你也可以手动进行同步,你也可以点击详情查看具体的文档切片内容"

#: ../../getting_started/application/kbqa/kbqa.md:42
#: afd3140206664d079047e9225be40dc1
#: 36d3223b83614b49a92b0017d5c8ce82
msgid "2.1 Choose Knowledge Type:"
msgstr "2.1 选择知识类型"

#: ../../getting_started/application/kbqa/kbqa.md:43
#: 0ea63130eb5741979a9603a768b759e6
msgid ""
msgstr ""
#: 5a2b383ffec14ad2a1598b093426e9e7
msgid ""
""
msgstr ""

#: ../../getting_started/application/kbqa/kbqa.md:43
#: cf7aacec8463485a811a1aa06d30f050
#: b1c5c73905934de4ac8cea00e482840a
msgid "document"
msgstr "document"

#: ../../getting_started/application/kbqa/kbqa.md:45
#: 0db8df4eb26b4139816e586136098c9b
#: c214c2780b0545a3a3edbd97f69829ed
msgid "2.2 Upload Document:"
msgstr "2.2上传文档"

#: ../../getting_started/application/kbqa/kbqa.md:46
#: e4331acfd6a24a2094cbbe21fd46ac8b
msgid ""
msgstr ""
#: 732b8e32de8247249c9c908c258ba133
msgid ""
""
msgstr ""

#: ../../getting_started/application/kbqa/kbqa.md:46
#: ../../getting_started/application/kbqa/kbqa.md:50
#: ../../getting_started/application/kbqa/kbqa.md:55
#: ../../getting_started/application/kbqa/kbqa.md:68
#: b8e7fc9cf2ef42fa8313d5f0b0c0e0ba
#: 4498b802e7724ad4b2b8edc044abd7fe 59633ce8e91c4e8b9f31cddee14a3f91
#: f5c26d53dc584543912b24b8b8bc31ea f905664e67224a778d7c3ef0de7c42a8
msgid "upload"
msgstr "upload"

#: ../../getting_started/application/kbqa/kbqa.md:49
#: e5a87f0715a642f58cae29ce43d72648
#: aa694e12c6884d0fbb5679af0535b05f
msgid "3.Chat With Knowledge"
msgstr "3.知识问答"

#: ../../getting_started/application/kbqa/kbqa.md:50
#: cefec99c30ab4757896b328371725dd7
msgid ""
msgstr ""
#: 0c0cdbabb3934655a65c1c4304837efb
msgid ""
""
msgstr ""

#: ../../getting_started/application/kbqa/kbqa.md:52
#: 5d870c26dfaa4916a5e17646d835c5fd
#: ce53a33431714d31b56eb0450a1363f4
msgid "4.Adjust Space arguments"
msgstr "4.调整知识参数"

#: ../../getting_started/application/kbqa/kbqa.md:53
#: 56afbbc1dc144c43be0b3e5ff8c3eb55
#: b37255e6cd6b433e8eeb01d73c9ccf19
msgid ""
"Each knowledge space supports argument customization, including the "
"relevant arguments for vector retrieval and the arguments for knowledge "
@@ -209,74 +220,78 @@ msgid ""

msgstr "每一个知识空间都支持参数自定义, 包括向量召回的相关参数以及知识问答Prompt参数"

#: ../../getting_started/application/kbqa/kbqa.md:54
#: 994eb05bc17148608f5efc03a12797ff
#: 3af4c2d8d2bf410698c73d339d0d1779
msgid "4.1 Embedding"
msgstr "4.1 Embedding"

#: ../../getting_started/application/kbqa/kbqa.md:55
#: ab00c34d964a4a0ab6472a4ab68c6b9a
msgid "Embedding Argument "
msgstr "Embedding Argument "
#: fdd136a1fe654751a22def62bdca853f
msgid ""
"Embedding Argument "
msgstr ""

#: ../../getting_started/application/kbqa/kbqa.md:59
#: 0bdc576f5c4f4d46b84dfd3e57e2e004
#: 8566534562574811bc08375b741dfcc9
msgid "Embedding arguments"
msgstr "Embedding arguments"

#: ../../getting_started/application/kbqa/kbqa.md:60
#: 515ed658cf8742e1898df7b6b0ed8fec
#: 87fdcd70759b43cb9d9a7929247c34a6
msgid "topk:the top k vectors based on similarity score."
msgstr "topk:相似性检索出topk条文档"

#: ../../getting_started/application/kbqa/kbqa.md:61
#: b2cec278eee640c2b1dea2e52032ca16
#: c9fc1f2052c2437e9c4654f8053f02b5
msgid "recall_score:set a threshold score for the retrieval of similar vectors."
msgstr "recall_score:向量检索相关度衡量指标分数"

#: ../../getting_started/application/kbqa/kbqa.md:62
#: ba0a2a2ff9454a7598d2217b8aefa565
#: 11e837b098ee41ef9d5791b5d27f4891
msgid "recall_type:recall type."
msgstr "recall_type:召回类型"

#: ../../getting_started/application/kbqa/kbqa.md:63
#: b33b6627142d41c4a4e83b56d446c0c7
#: 01d10823d12c454cad589b37c56b7b2b
msgid "model:A model used to create vector representations of text or other data."
msgstr "model:embedding模型"

#: ../../getting_started/application/kbqa/kbqa.md:64
#: 9113a950faca4515967a905256a29e8e
#: 5fab346c27f24da783b4f30c55f8e291
msgid "chunk_size:The size of the data chunks used in processing."
msgstr "chunk_size:文档切片阈值大小"

#: ../../getting_started/application/kbqa/kbqa.md:65
#: dccc04f7efae40309ecaccb33375cf9c
#: 3a031f1b6d014167aed24584d7fba187
msgid "chunk_overlap:The amount of overlap between adjacent data chunks."
msgstr "chunk_overlap:文本块之间的最大重叠量。保留一些重叠可以保持文本块之间的连续性(例如使用滑动窗口)"

#: ../../getting_started/application/kbqa/kbqa.md:67
#: 1740d538f7e7427a8f031b9e1e045f15
#: b14bb51708d644c5a2e1fbbcc53f62c3
msgid "4.2 Prompt"
msgstr "4.2 Prompt"

#: ../../getting_started/application/kbqa/kbqa.md:68
#: a0faf9300f804cc1b99371946c1c86e0
msgid "Prompt Argument "
msgstr "Prompt Argument "
#: f9d61953773a470ab12a3eb5ef00ad9e
msgid ""
"Prompt Argument "
msgstr ""

#: ../../getting_started/application/kbqa/kbqa.md:72
#: b8e6d1b4afdb4dcca99ed23107f3da0b
#: fe9923b1f26647718443428ae520bb88
msgid "Prompt arguments"
msgstr "Prompt arguments"

#: ../../getting_started/application/kbqa/kbqa.md:73
#: 9d3acae04dae4beb86577e58a9f0a8ed
#: 7de3b80bc5864407b34a66cd655c3af8
msgid ""
"scene:A contextual parameter used to define the setting or environment in"
" which the prompt is being used."
msgstr "scene:上下文环境的场景定义"

#: ../../getting_started/application/kbqa/kbqa.md:74
#: f33e888dd6494330a36ee4b091aa2f3a
#: 4902e2e4a2c14cf9b29ca2084960e964
msgid ""
"template:A pre-defined structure or format for the prompt, which can help"
" ensure that the AI system generates responses that are consistent with "
@@ -284,72 +299,90 @@ msgid ""

msgstr "template:预定义的提示结构或格式,可以帮助确保AI系统生成与所期望的风格或语气一致的回复。"

#: ../../getting_started/application/kbqa/kbqa.md:75
#: 18337db544f4428ba32622a0d7d711f3
#: ad60c0ba862443ed80bcdf5895e6b2c1
msgid "max_token:The maximum number of tokens or words allowed in a prompt."
msgstr "max_token: prompt token最大值"

#: ../../getting_started/application/kbqa/kbqa.md:77
#: 531a209f474246249ffaf66dec484dcc
#: 1a381bf794b4437c844c71abe0ca1f85
msgid "5.Change Vector Database"
msgstr "5.Change Vector Database"

#: ../../getting_started/application/kbqa/kbqa.md:79
#: d6665f2efd73412a9dd5ac2ffd88b369
#: 567b9c154d6a48b29536600b324ceb8b
msgid "Vector Store SETTINGS"
msgstr "Vector Store SETTINGS"

#: ../../getting_started/application/kbqa/kbqa.md:80
#: 8a6f5b7af5b942928a76556425d943bd
#: a54cfb386b3244b998c520ca6499883c
msgid "Chroma"
msgstr "Chroma"

#: ../../getting_started/application/kbqa/kbqa.md:81
#: bbde039fef3445bf84cc2ded916e6960
#: 6cea4f4289c649d49101b2f37f14eae4
msgid "VECTOR_STORE_TYPE=Chroma"
msgstr "VECTOR_STORE_TYPE=Chroma"

#: ../../getting_started/application/kbqa/kbqa.md:82
#: 984d7eceee8c474880174f3a3ba0df7a
#: f3c494223e994ba0beb32abc8df19388
msgid "MILVUS"
msgstr "MILVUS"

#: ../../getting_started/application/kbqa/kbqa.md:83
#: 8dbf41b03c58457896c4a9595fcb9517
#: e710aa4a2b184618bfc57f955f6e5b41
msgid "VECTOR_STORE_TYPE=Milvus"
msgstr "VECTOR_STORE_TYPE=Milvus"

#: ../../getting_started/application/kbqa/kbqa.md:84
#: 853a391ff6fc47af90e8fbaea06ee948
#: ec6e4c1215f94776b47a5b035f3e82ed
msgid "MILVUS_URL=127.0.0.1"
msgstr "MILVUS_URL=127.0.0.1"

#: ../../getting_started/application/kbqa/kbqa.md:85
#: ef21d47a76eb495dbafb3dd0e99ae3b2
#: 14b5fd2ddf5d417a8d12f9dd0de77c4f
msgid "MILVUS_PORT=19530"
msgstr "MILVUS_PORT=19530"

#: ../../getting_started/application/kbqa/kbqa.md:86
#: c06ba32595bb4572a468f8ca277c30fd
#: 558fbd783bf647b59af485cf501867ca
msgid "MILVUS_USERNAME"
msgstr "MILVUS_USERNAME"

#: ../../getting_started/application/kbqa/kbqa.md:87
#: f09d2171e98c4b379cb7548715b3f60f
#: d7778707f4c840979a5a91430b947af8
msgid "MILVUS_PASSWORD"
msgstr "MILVUS_PASSWORD"

#: ../../getting_started/application/kbqa/kbqa.md:88
#: e0f8de6b5bfb4689be9a7777796e5477
#: a6bcecc6451749048616031cbc1f07b6
msgid "MILVUS_SECURE="
msgstr "MILVUS_SECURE="

#: ../../getting_started/application/kbqa/kbqa.md:90
#: 15fee5a1d2d9404f955a27d77656b690
#: acfde2a941b141d28189c4f63f803234
msgid "WEAVIATE"
msgstr "WEAVIATE"

#: ../../getting_started/application/kbqa/kbqa.md:91
#: 632fe609a7694d51b4b908fed5a80415
#: 59d8f6f31a5549799e52851eab72bfc2
msgid "WEAVIATE_URL=https://kt-region-m8hcy0wc.weaviate.network"
msgstr "WEAVIATE_URL=https://kt-region-m8hcy0wc.weaviate.network"

#~ msgid ""
#~ msgstr ""

#~ msgid ""
#~ msgstr ""

#~ msgid ""
#~ msgstr ""

#~ msgid ""
#~ msgstr ""

#~ msgid "Embedding Argument "
#~ msgstr "Embedding Argument "

#~ msgid "Prompt Argument "
#~ msgstr "Prompt Argument "

@@ -8,7 +8,7 @@ msgid ""

msgstr ""
"Project-Id-Version: DB-GPT 👏👏 0.3.5\n"
"Report-Msgid-Bugs-To: \n"
"POT-Creation-Date: 2023-08-21 16:59+0800\n"
"POT-Creation-Date: 2023-08-29 20:30+0800\n"
"PO-Revision-Date: YEAR-MO-DA HO:MI+ZONE\n"
"Last-Translator: FULL NAME <EMAIL@ADDRESS>\n"
"Language: zh_CN\n"
@@ -20,12 +20,12 @@ msgstr ""

"Generated-By: Babel 2.12.1\n"

#: ../../getting_started/faq/deploy/deploy_faq.md:1
#: 4466dae5cd1048cd9c22450be667b05a
#: 7f17a8fdda104de18433b228ec3c1935
msgid "Installation FAQ"
msgstr "Installation FAQ"

#: ../../getting_started/faq/deploy/deploy_faq.md:5
#: dfa13f5fdf1e4fb9af92b58a5bae2ae9
#: dc734d91ec464177834ab2e2df25f70a
#, fuzzy
msgid ""
"Q1: execute `pip install -e .` error, found some package cannot find "
@@ -35,18 +35,18 @@ msgstr ""

|

||||

"cannot find correct version."

|

||||

|

||||

#: ../../getting_started/faq/deploy/deploy_faq.md:6

|

||||

#: c694387b681149d18707be047b46fa87

|

||||

#: dd04e05135ee4065b44e6196041fd15f

|

||||

msgid "change the pip source."

|

||||

msgstr "替换pip源."

|

||||

|

||||

#: ../../getting_started/faq/deploy/deploy_faq.md:13

|

||||

#: ../../getting_started/faq/deploy/deploy_faq.md:20

|

||||

#: 5423bc84710c42ee8ba07e95467ce3ac 99aa6bb16764443f801a342eb8f212ce

|

||||

#: 159d77d65f014357ae4caa5aeba32080 7a5250f10d104e73a64012a89fb00e6f

|

||||

msgid "or"

|

||||

msgstr "或者"

|

||||

|

||||

#: ../../getting_started/faq/deploy/deploy_faq.md:27

|

||||

#: 6cc878fe282f4a9ab024d0b884c57894

|

||||

#: 15af207c532541a2906a260266d8d2ca

|

||||

msgid ""

|

||||

"Q2: sqlalchemy.exc.OperationalError: (sqlite3.OperationalError) unable to"

|

||||

" open database file"

|

||||

@@ -55,39 +55,73 @@ msgstr ""

|

||||

" open database file"

|

||||

|

||||

#: ../../getting_started/faq/deploy/deploy_faq.md:29

|

||||

#: 18a71bc1062a4b1c8247068d4d49e25d

|

||||

#: b45fd323f5c84a428393e2d0e749ab17

|

||||

msgid "make sure you pull latest code or create directory with mkdir pilot/data"

|

||||

msgstr "make sure you pull latest code or create directory with mkdir pilot/data"

|

||||

|

||||

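The Q2 fix works because SQLite does not create missing parent directories. `sqlite3.connect` raises `OperationalError: unable to open database file` when the target directory does not exist.

The following is a minimal Python sketch that creates the directory before connecting. It assumes a hypothetical file name, `example.db`. The actual database file DB-GPT writes under `pilot/data` is not named in this entry.

```python
import contextlib
import os
import sqlite3
import tempfile


def open_sqlite(db_path: str) -> sqlite3.Connection:
    """Open a SQLite database, creating its parent directory first.

    Same effect as running `mkdir pilot/data` before start-up.
    """
    parent = os.path.dirname(db_path)
    if parent:
        # exist_ok=True makes this safe to call on every start.
        os.makedirs(parent, exist_ok=True)
    return sqlite3.connect(db_path)


# Usage: build pilot/data/example.db inside a throwaway directory.
# "example.db" is a hypothetical name used only for this demo.
demo_root = tempfile.mkdtemp()
demo_path = os.path.join(demo_root, "pilot", "data", "example.db")
with contextlib.closing(open_sqlite(demo_path)) as conn:
    conn.execute("CREATE TABLE IF NOT EXISTS demo (x INTEGER)")
    conn.commit()
```

`contextlib.closing` is used because a `sqlite3.Connection` used as a context manager only commits or rolls back. It does not close the connection.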
#: ../../getting_started/faq/deploy/deploy_faq.md:31
#: 0987d395af24440a95dd9367e3004a0b
#: 468efa45ffe147ebb3a7bf737823f9da
msgid "Q3: The model keeps getting killed."
msgstr "Q3: The model keeps getting killed."

#: ../../getting_started/faq/deploy/deploy_faq.md:33
#: bfd90cb8f2914bba84a44573a9acdd6d
#: ca4bb900b69740bbb40c7cb78bd8ba00
msgid ""
"your GPU VRAM size is not enough, try replace your hardware or replace "
"other llms."
msgstr "GPU显存不够, 增加显存或者换一个显存小的模型"

#: ../../getting_started/faq/deploy/deploy_faq.md:35
#: 09a9baca454d4b868fedffa4febe7c5c
#: 13d52bc8a91a4e209d0b90f68f1be853
msgid "Q4: How to access website on the public network"
msgstr ""

#: ../../getting_started/faq/deploy/deploy_faq.md:37
#: 3ad8d2cf2b4348a6baed5f3e302cd58c
#: 5f0c0e894fdb477eba928f9c946a9bd8
msgid ""
"You can try to use gradio's [network](https://github.com/gradio-"
"app/gradio/blob/main/gradio/networking.py) to achieve."
msgstr ""

#: ../../getting_started/faq/deploy/deploy_faq.md:48
#: 90b35959c5854b69acad9b701e21e65f
#: c30ab098c2e547df81038814f870dec4
msgid "Open `url` with your browser to see the website."
msgstr ""

#: ../../getting_started/faq/deploy/deploy_faq.md:50
#: d298adc56dce463d8deb3d3550bc382c
msgid "Q5: (Windows) execute `pip install -e .` error"
msgstr ""

#: ../../getting_started/faq/deploy/deploy_faq.md:52
#: a4b683c41fed443a8016b56c24235612
msgid "The error log like the following:"
msgstr ""

#: ../../getting_started/faq/deploy/deploy_faq.md:71
#: ccbb33427cba40fca68b075cb796c670
msgid ""
"Download and install `Microsoft C++ Build Tools` from [visual-cpp-build-"
"tools](https://visualstudio.microsoft.com/visual-cpp-build-tools/)"
msgstr ""

#: ../../getting_started/faq/deploy/deploy_faq.md:75
#: b9535d65d65f4a368f7b0ee6f14c7da8
msgid "Q6: `Torch not compiled with CUDA enabled`"
msgstr ""

#: ../../getting_started/faq/deploy/deploy_faq.md:82
#: f9cf565e02b240b4a4044293122e9910
msgid "Install [CUDA Toolkit](https://developer.nvidia.com/cuda-toolkit-archive)"
msgstr ""

#: ../../getting_started/faq/deploy/deploy_faq.md:83
#: 6a15c0284228430381f56ac3bc825361
msgid ""
"Reinstall PyTorch [start-locally](https://pytorch.org/get-started/locally"
"/#start-locally) with CUDA support."
msgstr ""

#~ msgid ""
#~ "Q2: When use Mysql, Access denied "
#~ "for user 'root@localhost'(using password :NO)"

@@ -8,7 +8,7 @@ msgid ""
msgstr ""
"Project-Id-Version: DB-GPT 👏👏 0.3.5\n"
"Report-Msgid-Bugs-To: \n"
"POT-Creation-Date: 2023-08-21 16:59+0800\n"
"POT-Creation-Date: 2023-08-29 20:30+0800\n"
"PO-Revision-Date: YEAR-MO-DA HO:MI+ZONE\n"
"Last-Translator: FULL NAME <EMAIL@ADDRESS>\n"
"Language: zh_CN\n"
@@ -20,34 +20,34 @@ msgstr ""
"Generated-By: Babel 2.12.1\n"

#: ../../getting_started/install/deploy/deploy.md:1
#: 14020ee624b545a5a034b7e357f42545
#: 963de2c147e4491085e40a367ede1cb3
msgid "Installation From Source"
msgstr "源码安装"

#: ../../getting_started/install/deploy/deploy.md:3
#: eeafb53bf0e846518457084d84edece7
#: 3597fef8c1c24663ba9ddf0240dd8a1e
msgid ""
"This tutorial gives you a quick walkthrough about use DB-GPT with you "
"environment and data."
msgstr "本教程为您提供了关于如何使用DB-GPT的使用指南。"

#: ../../getting_started/install/deploy/deploy.md:5
#: 1d6ee2f0f1ae43e9904da4c710b13e28
#: e9bd2165f24e41c2bebb4fed1672fd54
msgid "Installation"
msgstr "安装"

#: ../../getting_started/install/deploy/deploy.md:7
#: 6ebdb4ae390e4077af2388c48a73430d
#: 7a52cf49d60a4e76a43d8e534dcac6b8
msgid "To get started, install DB-GPT with the following steps."
msgstr "请按照以下步骤安装DB-GPT"

#: ../../getting_started/install/deploy/deploy.md:9
#: 910cfe79d1064bd191d56957b76d37fa
#: 4dbc9d0146bb4574b31222a14c45eb46
msgid "1. Hardware Requirements"
msgstr "1. 硬件要求"

#: ../../getting_started/install/deploy/deploy.md:10
#: 6207b8e32b7c4b669c8874ff9267627e
#: eee7223178704420a179781df476e855
msgid ""
"As our project has the ability to achieve ChatGPT performance of over "
"85%, there are certain hardware requirements. However, overall, the "
@@ -56,176 +56,176 @@ msgid ""
msgstr "由于我们的项目有能力达到85%以上的ChatGPT性能,所以对硬件有一定的要求。但总体来说,我们在消费级的显卡上即可完成项目的部署使用,具体部署的硬件说明如下:"

#: ../../getting_started/install/deploy/deploy.md
#: 45babe2e028746559e437880fdbcd5d3
#: 21613161531642fb8d62d589b8d4feaa
msgid "GPU"
msgstr "GPU"

#: ../../getting_started/install/deploy/deploy.md
#: 7adbc2bb5e384d419b53badfaf36b962 9307790f5c464f58a54c94659451a037
#: cf5a8b7a75034011b7305d8bd09cf69c e8b55944bb9d4b91976027b3a2ae09d0
msgid "VRAM Size"
msgstr "显存"

#: ../../getting_started/install/deploy/deploy.md
#: 305fdfdbd4674648a059b65736be191c
#: 36641aaf9b81420fae3c2a2d89816d8a
msgid "Performance"
msgstr "Performance"

#: ../../getting_started/install/deploy/deploy.md
#: 0e719a22b08844d9be04b4bcaeb4ad87
#: ebc46f5c3f944ca6944774ac264ac801
msgid "RTX 4090"
msgstr "RTX 4090"

#: ../../getting_started/install/deploy/deploy.md
#: 482dd0da73f3495198ee1c9c8fb7e8ed ed30edd1a6944d6c8cb6a06c9c12d4db
#: c387fa1e47ec4ae4bb145fbd4c18cd99 efbe071097b4421dbf06080d4ab1ec70
msgid "24 GB"
msgstr "24 GB"

#: ../../getting_started/install/deploy/deploy.md
#: b9a9b3179d844b97a578193eacfec8cc
#: 48d5632d53e0413d937bd4c13716591a
msgid "Smooth conversation inference"
msgstr "Smooth conversation inference"

#: ../../getting_started/install/deploy/deploy.md
#: 171da7c9f0744b5aa335a5411f126eb7
#: 5d7a590b885c4f128716da6347d91304
msgid "RTX 3090"
msgstr "RTX 3090"

#: ../../getting_started/install/deploy/deploy.md
#: fbb497f41e61437ba089008c573b0cc7
#: 93a35c5359e9432b9fac149e67087b4b
msgid "Smooth conversation inference, better than V100"
msgstr "Smooth conversation inference, better than V100"

#: ../../getting_started/install/deploy/deploy.md
#: 2cb4fba16b664e1e9c22a1076f837a80
#: ec6d393791ae49eea9b2ff79f803e76c
msgid "V100"
msgstr "V100"

#: ../../getting_started/install/deploy/deploy.md
#: 05cccda43ffb41d7b73c2d5dfbc7f1c5 8c471150ab0746d8998ddca30ad86404
#: 7da6411bc1c642e9a1063e988044e9f2 852dac8854514958b45f7f125c11f625
msgid "16 GB"
msgstr "16 GB"

#: ../../getting_started/install/deploy/deploy.md
#: 335fa32f77a349abb9a813a3a9dd6974 8546d09080b0421597540e92ab485254
#: 1a3c78ad04114d5ebf25edba7ebdfcb7 b8ed26b4da564660bd70f0a3af1282a8
msgid "Conversation inference possible, noticeable stutter"
msgstr "Conversation inference possible, noticeable stutter"

#: ../../getting_started/install/deploy/deploy.md
#: dd7a18f4bc144413b90863598c5e9a83
#: 36603d7af55742eebc2f023fdf798f43
msgid "T4"
msgstr "T4"

#: ../../getting_started/install/deploy/deploy.md:19
#: 932dc4db2fba4272b72c31eb7d319255
#: 0a4d0daf5d44421893db967825c0e158
msgid ""
"if your VRAM Size is not enough, DB-GPT supported 8-bit quantization and "
"4-bit quantization."
msgstr "如果你的显存不够,DB-GPT支持8-bit和4-bit量化版本"

#: ../../getting_started/install/deploy/deploy.md:21
#: 7dd6fabaf1ea43718f26e8b83a7299e3
#: 3523d76dc2a8414495ad46f72c304226
msgid ""
"Here are some of the VRAM size usage of the models we tested in some "
"common scenarios."
msgstr "这里是量化版本的相关说明"

#: ../../getting_started/install/deploy/deploy.md
#: b50ded065d4943e3a5bfdfdf3a723f82
#: 8ff21b833bf64962901e2e7a52e1322a
msgid "Model"
msgstr "Model"

#: ../../getting_started/install/deploy/deploy.md
#: 30621cf2407f4beca262eb47023d0b84
#: be429d17a47643c797d989148d62583a
msgid "Quantize"
msgstr "Quantize"

#: ../../getting_started/install/deploy/deploy.md
#: 492112b927ce46308c50917beaa9e23a 8450f0b95a05475d9136906ec64d43b2
#: 2ec73c552480461dada4f44763c4623e c51b0f85b34845798d926db31eaf720c
msgid "vicuna-7b-v1.5"
msgstr "vicuna-7b-v1.5"

#: ../../getting_started/install/deploy/deploy.md
#: 1379c29cb10340848ce3e9bf9ec67348 39221fd99f0a41d29141fb73e1c9217d
#: 3fd499ced4884e2aa6633784432f085c 6e872d37f2ab4571961465972092f439
#: 917c7d492a4943f5963a210f9c997cb7 fb204224fb484019b344315d03f50571
#: ff9f5aea13d04176912ebf141cc15d44
#: 2550147d36074525b23021a14dc55b24 5200cf5ca316459184cd247285adeb9c
#: 9698f16cfa3d4ac589e7741e2371766c a3c90ef3728240a9927da25688ca500b
#: c197d7bfe2be4f29995d6e6882a73c36 c8742a66fae049f9b2c6d333dd3fc209
#: ed4e5c0cc67c4cd3acbb45def0dbac67
msgid "4-bit"
msgstr "4-bit"

#: ../../getting_started/install/deploy/deploy.md
#: 13976c780bc3451fae4ad398b39f5245 32fe2b6c2f1e40c8928c8537f9239d07
#: 74f4ff229a314abf97c8fa4d6d73c339
#: 1985bf309e324843aa849c38c9819b03 998c11e1c9b846d596c4423a22a438c8
#: ffe2badbf75b4f119e22481b47866cc5
msgid "8 GB"
msgstr "8 GB"

#: ../../getting_started/install/deploy/deploy.md
#: 14ca9edebe794c7692738e5d14a15c06 453394769a20437da7a7a758c35345af
#: 860b51d1ab6742c5bdefc6e1ffc923a3 988110ac5e7f418d8a46ea9c42238ecc
#: d446bc441f8946d9a95a2a965157478e d683ae90e3da473f876cb948dd5dce5e
#: f1e2f6fb36624185ab2876bdc87301ec
#: 23fed1f7361b46139294f979144f55b9 44b5316ad4be45acaca850ac350613dd
#: 5d2642a53e444757b176c8ff86504f45 5e9a0cf9bab54b6ea805dd4c9b209b51
#: 6a2ffb34d35a4d64bcb18d88b6ed3e83 b70e17fc9a184074ac4bc641312fc8e8
#: bb6bd13b7d09417ea26949cf385d037c
msgid "8-bit"
msgstr "8-bit"

#: ../../getting_started/install/deploy/deploy.md
#: 1b6230690780434184bab3a68d501b60 734f124ce767437097d9cb3584796df5
#: 7da1c33a6d4746eba7f976cc43e6ad59 c0e7bd4672014afe88be1e11b8e772da
#: d045ee257f8a40e8bdad0e2b91c64018 e231e0b6f7ee4f5b85d28a18bbc32175
#: 0718757036b04690bd6fa98959ae41d3 2401f442291c4fd8a7fdf69d1745b604
#: 3c3af4923aa5498ca85d87610712f9ff 620ef43ffb5441a7ac0bfcd920729518
#: bf626548d1624794ad4e16f0acbdf59f e24c281f31b44b89bb1a9151b7e0ba9a
msgid "12 GB"
msgstr "12 GB"

#: ../../getting_started/install/deploy/deploy.md
#: 2ee16dd760e74439bbbec13186f9e44e 65628a09b7bf487eb4b4f0268eab2751
#: 685bc5ce8e4a411a8f59026674b5967d 8223632d5da5462b977c3bd9cb2731a2
msgid "vicuna-13b-v1.5"
msgstr "vicuna-13b-v1.5"

#: ../../getting_started/install/deploy/deploy.md
#: 3d633ad2c90547d1b47a06aafe4aa177 4a9e81e6303748ada67fa8b6ec1a8f57
#: d33dc735503649348590e03efabef94d
#: 09e37f82a825411191ab74a634706866 80138163ba034d819eeb165384392655
#: da076416fa8c4fb7b28939ca7c76d06b
msgid "20 GB"
msgstr "20 GB"

#: ../../getting_started/install/deploy/deploy.md
#: 6c02020d52e94f27b2eb1d10b20e9cca d58f181d10c04980b6d0bdc2be51c01c
#: 4551174e7f8449bd93fb14de039ed400 9879cd654383482784992f410ba478aa
msgid "llama-2-7b"
msgstr "llama-2-7b"

#: ../../getting_started/install/deploy/deploy.md
#: b9f25fa66dc04e539dfa30b2297ac8aa e1e80256f5444e9aacebb64e053a6b70
#: 0a74ee29547445e4a1389386249528a4 af6fa6d9dec54179808820fda8b8210b
msgid "llama-2-13b"
msgstr "llama-2-13b"

#: ../../getting_started/install/deploy/deploy.md
#: 077c1d5b6db248d4aa6a3b0c5b2cc237 987cc7f04eea4d94acd7ca3ee0fdfe20
#: 1aadc9ec7645467596e991f770e5bce5 32aa71dcf6d9497ba48af4c2c192e3ef
msgid "llama-2-70b"
msgstr "llama-2-70b"

#: ../../getting_started/install/deploy/deploy.md
#: 18a1070ab2e048c7b3ea90e25d58b38f
#: 1e63e7f32ec74f4e8290254ce348c50d
msgid "48 GB"
msgstr "48 GB"

#: ../../getting_started/install/deploy/deploy.md
#: da0474d2c8214e678021181191a651e5
#: d5416570171e49a88a75161f4e5f0c77
msgid "80 GB"
msgstr "80 GB"

#: ../../getting_started/install/deploy/deploy.md
#: 49e5fedf491b4b569459c23e4f6ebd69 8b54730a306a46f7a531c13ef825b20a
#: 598393baadb7468ca649db8de42fd413 f6aaa22133ac4721b6740ab75c43819d
msgid "baichuan-7b"
msgstr "baichuan-7b"

#: ../../getting_started/install/deploy/deploy.md
#: 2158255ea61c4a0c9e96bec0df21fa06 694d9b2fe90740c5b9704089d7ddac9d
#: 454e6b05fc2f4f318afc7d7e5f4477b9 ebd9204f511949d695b20caa8d2b5701
msgid "baichuan-13b"
msgstr "baichuan-13b"

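As a rough cross-check on the VRAM table, the model weights alone take about parameters × bits ÷ 8 bytes. The following minimal Python sketch does that arithmetic in decimal GB. It deliberately ignores activations, the KV cache, and framework overhead, which is presumably why the measured figures in the table are higher. Parameter counts are read off the model names, and the 16-bit row is only a reference point that the table does not list.

```python
def weight_memory_gb(params_billion: float, bits: int) -> float:
    """Approximate memory for model weights only, in decimal GB (1e9 bytes).

    bytes = parameters * bits / 8; with parameters given in billions,
    the 1e9 factors cancel and the result is already in GB.
    """
    return params_billion * bits / 8


# Parameter counts inferred from the model names in the table.
models = {"vicuna-7b-v1.5": 7, "vicuna-13b-v1.5": 13, "llama-2-70b": 70}
for name, size in models.items():
    for bits in (4, 8, 16):
        print(f"{name:16} {bits:>2}-bit ~ {weight_memory_gb(size, bits):6.1f} GB")
```

For example, vicuna-7b-v1.5 at 4-bit works out to 3.5 GB of weights. Treat that as a lower bound, not a sizing guarantee.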
#: ../../getting_started/install/deploy/deploy.md:40
#: a9f9e470d41f4122a95cbc8bd2bc26dc
#: a74a45bb031c41a9a88fab4023791430
msgid "2. Install"
msgstr "2. Install"

#: ../../getting_started/install/deploy/deploy.md:45
#: c78fd491a6374224ab95dc39849f871f
#: 480a54d6f3b944b2a26414561ccd5cf4
msgid ""
"We use Sqlite as default database, so there is no need for database "
"installation. If you choose to connect to other databases, you can "
@@ -240,12 +240,12 @@ msgstr ""
" Miniconda](https://docs.conda.io/en/latest/miniconda.html)"

#: ../../getting_started/install/deploy/deploy.md:54
#: 12180cd023a04152b1591a87d96d227a
#: 8d9d99b9b3f6444f8e2b289ff0b3d1cf
msgid "Before use DB-GPT Knowledge"
msgstr "在使用知识库之前"

#: ../../getting_started/install/deploy/deploy.md:60
#: 2d5c1e241a0b47de81c91eca2c4999c6
#: 3ba68f5b12134958ad3495fc03e6261f
msgid ""
"Once the environment is installed, we have to create a new folder "
"\"models\" in the DB-GPT project, and then we can put all the models "
@@ -253,27 +253,27 @@ msgid ""
msgstr "如果你已经安装好了环境需要创建models, 然后到huggingface官网下载模型"

#: ../../getting_started/install/deploy/deploy.md:63
#: 8a79092303e74ab2974fb6edd3d14a1c
#: ae6612ce4b6845cfa9396fcad5549bd0
msgid "Notice make sure you have install git-lfs"
msgstr ""

#: ../../getting_started/install/deploy/deploy.md:65
#: 68e9b02da9994192856fd4572732041d
#: fbfa0caf0615484bbe861b327a67c847
msgid "centos:yum install git-lfs"
msgstr ""

#: ../../getting_started/install/deploy/deploy.md:67
#: 30cae887feee4ec897d787801d1900db
#: ca10fedecab243fba8a0d11b14ccddde
msgid "ubuntu:app-get install git-lfs"
msgstr "ubuntu:apt-get install git-lfs"

#: ../../getting_started/install/deploy/deploy.md:69
#: 292a52e7f50242c59e7e95bafc8102da
#: a3026601a2304ce3a38e162cb7f44d2f
msgid "macos:brew install git-lfs"
msgstr ""

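Before cloning model repositories from Hugging Face, it can help to check that `git-lfs` is actually on `PATH`.

The following is a small Python sketch of that check. The `INSTALL_COMMANDS` table simply restates the per-platform commands listed in the installation guide, with the Ubuntu package manager spelled `apt-get`. The dictionary and its keys are illustrative and are not read by DB-GPT.

```python
import shutil

# Per-platform install commands from the installation guide.
# The Ubuntu entry uses apt-get (the guide's "app-get" is a typo).
INSTALL_COMMANDS = {
    "centos": "yum install git-lfs",
    "ubuntu": "apt-get install git-lfs",
    "macos": "brew install git-lfs",
}


def has_git_lfs() -> bool:
    """True if a git-lfs executable can be found on PATH."""
    return shutil.which("git-lfs") is not None


if not has_git_lfs():
    print("git-lfs not found; on Ubuntu try:", INSTALL_COMMANDS["ubuntu"])
```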
#: ../../getting_started/install/deploy/deploy.md:86
#: a66af00ea6a0403dbc90df23b0e3c40e
#: ffda61cebb534429bfa1d3a375647077
msgid ""
"The model files are large and will take a long time to download. During "
"the download, let's configure the .env file, which needs to be copied and"
@@ -281,7 +281,7 @@ msgid ""
msgstr "模型文件很大,需要很长时间才能下载。在下载过程中,让我们配置.env文件,它需要从.env.template中复制和创建。"

#: ../../getting_started/install/deploy/deploy.md:88
#: 98934f9d2dda41e9b9bc20078ed750eb
#: f30593a4496c498d807bc1bf48fc333e
msgid ""
"if you want to use openai llm service, see [LLM Use FAQ](https://db-"
"gpt.readthedocs.io/en/latest/getting_started/faq/llm/llm_faq.html)"
@@ -290,19 +290,19 @@ msgstr ""
"gpt.readthedocs.io/en/latest/getting_started/faq/llm/llm_faq.html)"

#: ../../getting_started/install/deploy/deploy.md:91
#: 727fc8be76dc40d59416b20273faee13
#: adbf4130afb442e0b2ebe9ecadc27927
msgid "cp .env.template .env"
msgstr "cp .env.template .env"

#: ../../getting_started/install/deploy/deploy.md:94
#: 65a5307640484800b2d667585e92b340
#: 67a0efdf4138465b84f50946c50aef33
msgid ""
"You can configure basic parameters in the .env file, for example setting "
"LLM_MODEL to the model to be used"
msgstr "您可以在.env文件中配置基本参数,例如将LLM_MODEL设置为要使用的模型。"

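The `.env` file is a list of `KEY=value` lines. The following is a minimal Python sketch of how such a file can be read. It assumes simple one-line values, with no multi-line strings or variable expansion. This is an illustration, not DB-GPT's own loader; a library such as python-dotenv covers more edge cases.

```python
from typing import Dict


def parse_env(text: str) -> Dict[str, str]:
    """Parse KEY=value lines; skip blank lines and # comments."""
    values: Dict[str, str] = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#"):
            continue
        if line.startswith("export "):
            line = line[len("export "):]
        key, sep, value = line.partition("=")
        if not sep:
            continue  # not a KEY=value line
        value = value.strip()
        # Drop one pair of matching surrounding quotes, if present.
        if len(value) >= 2 and value[0] == value[-1] and value[0] in "\"'":
            value = value[1:-1]
        values[key.strip()] = value
    return values


sample = """
# Model used by DB-GPT
LLM_MODEL=vicuna-13b-v1.5
MILVUS_SECURE=
"""
config = parse_env(sample)
print(config["LLM_MODEL"])  # vicuna-13b-v1.5
```

An empty value such as `MILVUS_SECURE=` is kept as an empty string rather than dropped, so callers can tell "set but empty" from "missing".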
#: ../../getting_started/install/deploy/deploy.md:96
#: ab83b0d6663441e588ceb52bb2e5934c
#: ec7328fea11344b8ac6b821a1cd10531
msgid ""
"([Vicuna-v1.5](https://huggingface.co/lmsys/vicuna-13b-v1.5) based on "
"llama-2 has been released, we recommend you set `LLM_MODEL=vicuna-"
@@ -313,45 +313,50 @@ msgstr ""
"目前Vicuna-v1.5模型(基于llama2)已经开源了,我们推荐你使用这个模型通过设置LLM_MODEL=vicuna-13b-v1.5"

#: ../../getting_started/install/deploy/deploy.md:98
#: 4ed331ceacb84a339a7e0038029e356e
#: 386d91268dc54f3a92172758afe25d3e
msgid "3. Run"
msgstr "3. Run"

#: ../../getting_started/install/deploy/deploy.md:100
#: e674fe7a6a9542dfae6e76f8c586cb04
#: 4672d31b78dc44719ad41f05fe0bf103
msgid "**(Optional) load examples into SQLlite**"
msgstr ""

#: ../../getting_started/install/deploy/deploy.md:105
#: b2de940ecd9444d2ab9ebf8762565fb6
#: 174bb3d5c82c41ad92fa2147dbfe0bdc
msgid "On windows platform:"
msgstr ""

#: ../../getting_started/install/deploy/deploy.md:110
#: aa25742904354373a10b8aaa62fa6005
msgid "1.Run db-gpt server"
msgstr "1.Run db-gpt server"

#: ../../getting_started/install/deploy/deploy.md:111
#: 929d863e28eb4c5b8bd4c53956d3bc76
#: ../../getting_started/install/deploy/deploy.md:116
#: 86a196e04cd1459ab83c0402b6c73c25
msgid "Open http://localhost:5000 with your browser to see the product."
msgstr "打开浏览器访问http://localhost:5000"

#: ../../getting_started/install/deploy/deploy.md:114
#: 43dd8b4a017f448f9be4f5432a083c08
#: ../../getting_started/install/deploy/deploy.md:119
#: e53c6021747e48298a9a94b33161aba2
msgid "If you want to access an external LLM service, you need to"
msgstr ""

#: ../../getting_started/install/deploy/deploy.md:116
#: 452a8bbc3e7f43e9a89f244ab0910fd6
#: ../../getting_started/install/deploy/deploy.md:121
#: a5d7891ca43a4da8a60e925cfdff829b
msgid ""
"1.set the variables LLM_MODEL=YOUR_MODEL_NAME, "
"MODEL_SERVER=YOUR_MODEL_SERVER(eg:http://localhost:5000) in the .env "
"file."
msgstr ""

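Setting `LLM_MODEL` and `MODEL_SERVER` amounts to editing two lines of `.env`. The following minimal Python sketch rewrites existing keys in place and appends missing ones. `set_env_values` is a hypothetical helper, not part of DB-GPT. The values in the usage lines are the placeholders from the text (`YOUR_MODEL_NAME`, `http://localhost:5000`).

```python
from typing import Dict


def set_env_values(text: str, updates: Dict[str, str]) -> str:
    """Return .env text with the given keys set; other lines are kept as-is."""
    remaining = dict(updates)
    out = []
    for line in text.splitlines():
        stripped = line.strip()
        key = stripped.split("=", 1)[0].strip()
        if "=" in stripped and not stripped.startswith("#") and key in remaining:
            out.append(f"{key}={remaining.pop(key)}")  # replace in place
        else:
            out.append(line)  # comments, blank lines, unrelated keys
    # Keys that were not present yet go at the end of the file.
    out.extend(f"{k}={v}" for k, v in remaining.items())
    return "\n".join(out) + "\n"


env_text = "# LLM settings\nLLM_MODEL=vicuna-13b-v1.5\n"
new_text = set_env_values(
    env_text,
    {"LLM_MODEL": "YOUR_MODEL_NAME", "MODEL_SERVER": "http://localhost:5000"},
)
print(new_text)
```

Writing `new_text` back to disk is left to the caller, for example with `pathlib.Path(".env").write_text(new_text)`.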
#: ../../getting_started/install/deploy/deploy.md:118
#: 216350f67d1a4056afb1c0277dd46a0c
#: ../../getting_started/install/deploy/deploy.md:123
#: adc8ccbdda9743aab58a3fdb8aa593fd
msgid "2.execute dbgpt_server.py in light mode"
msgstr ""

#: ../../getting_started/install/deploy/deploy.md:121
#: fc9c90ca4d974ee1ade9e972320debba
#: ../../getting_started/install/deploy/deploy.md:126
#: 29fb5c398fd94f5eaed6382ec7332097
msgid ""
"If you want to learn about dbgpt-webui, read https://github./csunny/DB-"
"GPT/tree/new-page-framework/datacenter"
@@ -359,55 +364,55 @@ msgstr ""
"如果你想了解web-ui, 请访问https://github.com/csunny/DB-GPT/tree/new-page-"
"framework/datacenter"

#: ../../getting_started/install/deploy/deploy.md:127
#: c0df0cdc5dea4ef3bef4d4c1f4cc52ad
#: ../../getting_started/install/deploy/deploy.md:132
#: d5a6113dc2844430bde6d1e62ae70eb9
#, fuzzy
msgid "Multiple GPUs"
msgstr "4. Multiple GPUs"

#: ../../getting_started/install/deploy/deploy.md:129
#: c3aed00fa8364e6eaab79a23cf649558
#: ../../getting_started/install/deploy/deploy.md:134
#: 15d02416586344d19adfc39f6d05ee9f
msgid ""
"DB-GPT will use all available gpu by default. And you can modify the "
"setting `CUDA_VISIBLE_DEVICES=0,1` in `.env` file to use the specific gpu"
" IDs."
msgstr "DB-GPT默认加载可利用的gpu,你也可以通过修改 在`.env`文件 `CUDA_VISIBLE_DEVICES=0,1`来指定gpu IDs"

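`CUDA_VISIBLE_DEVICES` is a standard CUDA environment variable that controls which GPUs a process can see:

- **Unset:** every GPU is visible.
- **Comma-separated list:** only those devices are exposed.
- **Empty value:** all GPUs are hidden.

The following minimal Python sketch interprets the variable and builds a per-launch environment. It assumes plain integer indices such as `0,1`; CUDA also accepts GPU UUIDs, which this sketch does not handle. Both helper names are hypothetical.

```python
import os
from typing import List, Mapping, Optional


def visible_gpu_ids(env: Optional[Mapping[str, str]] = None) -> Optional[List[int]]:
    """Return the GPU indices selected by CUDA_VISIBLE_DEVICES.

    None means the variable is unset, i.e. all GPUs are visible.
    An empty list means it is set but empty, i.e. no GPUs are visible.
    """
    env = os.environ if env is None else env
    raw = env.get("CUDA_VISIBLE_DEVICES")
    if raw is None:
        return None
    return [int(part) for part in raw.split(",") if part.strip()]


def env_with_gpus(ids: List[int]) -> dict:
    """Copy of the current environment limited to the given GPU ids,
    suitable for passing as env= to subprocess.run for a single launch."""
    child = dict(os.environ)
    child["CUDA_VISIBLE_DEVICES"] = ",".join(str(i) for i in ids)
    return child


print(visible_gpu_ids({"CUDA_VISIBLE_DEVICES": "0,1"}))  # [0, 1]
```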
#: ../../getting_started/install/deploy/deploy.md:131
#: 88b7510fef5943c5b4807bc92398a604
#: ../../getting_started/install/deploy/deploy.md:136
#: d6875130c3ba4bbf80a046a77c815f26
msgid ""
"Optionally, you can also specify the gpu ID to use before the starting "
"command, as shown below:"
msgstr "你也可以指定gpu ID启动"

#: ../../getting_started/install/deploy/deploy.md:141
#: 2cf93d3291bd4f56a3677e607f6185e7
#: ../../getting_started/install/deploy/deploy.md:146
#: b2d5855d7cf946a1a00ec55e0328b1a6
msgid ""
"You can modify the setting `MAX_GPU_MEMORY=xxGib` in `.env` file to "
"configure the maximum memory used by each GPU."
msgstr "同时你可以通过在.env文件设置`MAX_GPU_MEMORY=xxGib`修改每个GPU的最大使用内存"

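`MAX_GPU_MEMORY` takes a size with what is presumably a binary unit, in the form `xxGib` (for example `16Gib`). The following minimal Python sketch converts such a value to bytes. It assumes only `GiB` and `MiB` suffixes, matched case-insensitively so that `Gib` also works. `parse_gpu_memory` is a hypothetical helper and not necessarily how DB-GPT parses the setting.

```python
import re

# Binary units: 1 GiB = 1024**3 bytes, 1 MiB = 1024**2 bytes.
_UNITS = {"gib": 1024 ** 3, "mib": 1024 ** 2}
_PATTERN = re.compile(r"\s*(\d+(?:\.\d+)?)\s*(GiB|MiB)\s*", re.IGNORECASE)


def parse_gpu_memory(value: str) -> int:
    """Convert a string such as '16Gib' or '512MiB' to a byte count."""
    match = _PATTERN.fullmatch(value)
    if match is None:
        raise ValueError(f"unrecognised memory size: {value!r}")
    number, unit = match.groups()
    return int(float(number) * _UNITS[unit.lower()])


print(parse_gpu_memory("16Gib"))  # 17179869184
```

Rejecting unknown suffixes with `ValueError`, instead of guessing a unit, keeps a typo in `.env` from silently becoming a wrong memory cap.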
#: ../../getting_started/install/deploy/deploy.md:143
#: 00aac35bec094f99bd1f2f5f344cd3f5
#: ../../getting_started/install/deploy/deploy.md:148
#: 385caf97c7d149b1bb1852198c8e04de
#, fuzzy
msgid "Not Enough Memory"
msgstr "5. Not Enough Memory"

#: ../../getting_started/install/deploy/deploy.md:145
#: d3d4b8cd24114a24929f62bbe7bae1a2
#: ../../getting_started/install/deploy/deploy.md:150
#: 30ec48dfe9f44ef88e55f7218b2d3967
msgid "DB-GPT supported 8-bit quantization and 4-bit quantization."
msgstr "DB-GPT 支持 8-bit quantization 和 4-bit quantization."