mirror of

https://github.com/hwchase17/langchain.git

synced 2026-02-08 10:09:46 +00:00

Compare commits

1 Commits

dev2049/pg

...

vwp/child_

| Author | SHA1 | Date | |
|---|---|---|---|
| | 0bdf280469 | | |

12

.github/PULL_REQUEST_TEMPLATE.md

vendored


@@ -1,18 +1,18 @@

|

||||

# Your PR Title (What it does)

|

||||

|

||||

<!--

|

||||

Thank you for contributing to LangChain! Your PR will appear in our release under the title you set. Please make sure it highlights your valuable contribution.

|

||||

|

||||

Replace this with a description of the change, the issue it fixes (if applicable), and relevant context. List any dependencies required for this change.

|

||||

|

||||

After you're done, someone will review your PR. They may suggest improvements. If no one reviews your PR within a few days, feel free to @-mention the same people again, as notifications can get lost.

|

||||

|

||||

Finally, we'd love to show appreciation for your contribution - if you'd like us to shout you out on Twitter, please also include your handle!

|

||||

-->

|

||||

|

||||

<!-- Remove if not applicable -->

|

||||

|

||||

Fixes # (issue)

|

||||

|

||||

#### Before submitting

|

||||

## Before submitting

|

||||

|

||||

<!-- If you're adding a new integration, please include:

|

||||

|

||||

@@ -26,9 +26,9 @@ etc:

|

||||

https://github.com/hwchase17/langchain/blob/master/.github/CONTRIBUTING.md

|

||||

-->

|

||||

|

||||

#### Who can review?

|

||||

## Who can review?

|

||||

|

||||

Tag maintainers/contributors who might be interested:

|

||||

Community members can review the PR once tests pass. Tag maintainers/contributors who might be interested:

|

||||

|

||||

<!-- For a quicker response, figure out the right person to tag with @

|

||||

|

||||

@@ -52,5 +52,5 @@ Tag maintainers/contributors who might be interested:

|

||||

|

||||

VectorStores / Retrievers / Memory

|

||||

- @dev2049

|

||||

|

||||

|

||||

-->

|

||||

|

||||

5

.gitignore

vendored


@@ -149,7 +149,4 @@ wandb/

|

||||

|

||||

# integration test artifacts

|

||||

data_map*

|

||||

\[('_type', 'fake'), ('stop', None)]

|

||||

|

||||

# Replit files

|

||||

*replit*

|

||||

\[('_type', 'fake'), ('stop', None)]

|

||||

@@ -1,14 +1,13 @@

|

||||

# Tutorials

|

||||

|

||||

⛓ icon marks a new addition [last update 2023-05-15]

|

||||

This is a collection of `LangChain` tutorials mostly on `YouTube`.

|

||||

|

||||

### DeepLearning.AI course

|

||||

⛓[LangChain for LLM Application Development](https://learn.deeplearning.ai/langchain) by Harrison Chase presented by [Andrew Ng](https://en.wikipedia.org/wiki/Andrew_Ng)

|

||||

⛓ icon marks a new video [last update 2023-05-15]

|

||||

|

||||

### Handbook

|

||||

###

|

||||

[LangChain AI Handbook](https://www.pinecone.io/learn/langchain/) By **James Briggs** and **Francisco Ingham**

|

||||

|

||||

### Tutorials

|

||||

###

|

||||

[LangChain Tutorials](https://www.youtube.com/watch?v=FuqdVNB_8c0&list=PL9V0lbeJ69brU-ojMpU1Y7Ic58Tap0Cw6) by [Edrick](https://www.youtube.com/@edrickdch):

|

||||

- ⛓ [LangChain, Chroma DB, OpenAI Beginner Guide | ChatGPT with your PDF](https://youtu.be/FuqdVNB_8c0)

|

||||

|

||||

@@ -109,4 +108,4 @@ LangChain by [Chat with data](https://www.youtube.com/@chatwithdata)

|

||||

- ⛓ [Build ChatGPT Chatbots with LangChain Memory: Understanding and Implementing Memory in Conversations](https://youtu.be/CyuUlf54wTs)

|

||||

|

||||

---------------------

|

||||

⛓ icon marks a new addition [last update 2023-05-15]

|

||||

⛓ icon marks a new video [last update 2023-05-15]

|

||||

|

||||

@@ -20,12 +20,6 @@ Integrations by Module

|

||||

- `Toolkit Integrations <./modules/agents/toolkits.html>`_

|

||||

|

||||

|

||||

Dependencies

|

||||

----------------

|

||||

|

||||

| LangChain depends on `several hundred Python packages <https://github.com/hwchase17/langchain/network/dependencies>`_.

|

||||

|

||||

|

||||

All Integrations

|

||||

-------------------------------------------

|

||||

|

||||

|

||||

File diff suppressed because one or more lines are too long

@@ -1,29 +0,0 @@

|

||||

# Airbyte

|

||||

|

||||

>[Airbyte](https://github.com/airbytehq/airbyte) is a data integration platform for ELT pipelines from APIs,

|

||||

> databases & files to warehouses & lakes. It has the largest catalog of ELT connectors to data warehouses and databases.

|

||||

|

||||

## Installation and Setup

|

||||

|

||||

These instructions show how to load any source from `Airbyte` into a local `JSON` file that can be read in as a document.

|

||||

|

||||

**Prerequisites:**

|

||||

Have `docker desktop` installed.

|

||||

|

||||

**Steps:**

|

||||

1. Clone Airbyte from GitHub - `git clone https://github.com/airbytehq/airbyte.git`.

|

||||

2. Switch into Airbyte directory - `cd airbyte`.

|

||||

3. Start Airbyte - `docker compose up`.

|

||||

4. In your browser, visit http://localhost:8000. You will be asked for a username and password; the defaults are username `airbyte` and password `password`.

|

||||

5. Set up any source you wish.

|

||||

6. Set the destination to Local JSON with a destination path of your choice, let's say `/json_data`. Set up a manual sync.

|

||||

7. Run the connection.

|

||||

8. To see what files are created, navigate to: `file:///tmp/airbyte_local/`.

|

||||

|

||||

## Document Loader

|

||||

|

||||

See a [usage example](../modules/indexes/document_loaders/examples/airbyte_json.ipynb).

|

||||

|

||||

```python

|

||||

from langchain.document_loaders import AirbyteJSONLoader

|

||||

```
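The steps above leave one JSON-lines file per stream under `/tmp/airbyte_local/`. As a rough illustration of what reading such a file involves, here is a minimal standard-library sketch. It assumes each line is a JSON object that wraps the source record under an `_airbyte_data` key; the file name, the sample records, and the helper names `read_airbyte_records` and `record_to_text` are hypothetical and not part of LangChain or Airbyte.

```python
import json
import tempfile
from pathlib import Path


def read_airbyte_records(path):
    """Return the `_airbyte_data` payload of every non-empty line in a JSONL file."""
    records = []
    with open(path, encoding="utf-8") as handle:
        for line in handle:
            line = line.strip()
            if not line:
                continue
            row = json.loads(line)
            # Assumption: each row wraps the source record under `_airbyte_data`.
            records.append(row.get("_airbyte_data", row))
    return records


def record_to_text(record):
    """Flatten one record into `key: value` lines, sorted by key."""
    return "\n".join(f"{key}: {record[key]}" for key in sorted(record))


# Stand-in for a file that a sync would write under /tmp/airbyte_local/json_data.
sample_dir = Path(tempfile.mkdtemp())
sample_file = sample_dir / "_airbyte_raw_example.jsonl"
sample_file.write_text(
    json.dumps({"_airbyte_ab_id": "1", "_airbyte_data": {"id": 1, "name": "bulbasaur"}})
    + "\n"
    + json.dumps({"_airbyte_ab_id": "2", "_airbyte_data": {"id": 2, "name": "ivysaur"}})
    + "\n",
    encoding="utf-8",
)

records = read_airbyte_records(sample_file)
texts = [record_to_text(record) for record in records]
```

In practice `AirbyteJSONLoader` is the intended way to turn these files into documents; the sketch only shows the shape of the data it has to deal with.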

|

||||

@@ -1,36 +0,0 @@

|

||||

# Aleph Alpha

|

||||

|

||||

>[Aleph Alpha](https://docs.aleph-alpha.com/) was founded in 2019 with the mission to research and build the foundational technology for an era of strong AI. The team of international scientists, engineers, and innovators researches, develops, and deploys transformative AI like large language and multimodal models and runs the fastest European commercial AI cluster.

|

||||

|

||||

>[The Luminous series](https://docs.aleph-alpha.com/docs/introduction/luminous/) is a family of large language models.

|

||||

|

||||

## Installation and Setup

|

||||

|

||||

```bash

|

||||

pip install aleph-alpha-client

|

||||

```

|

||||

|

||||

You have to create a new token; see the [instructions](https://docs.aleph-alpha.com/docs/account/#create-a-new-token).

|

||||

|

||||

```python

|

||||

from getpass import getpass

|

||||

|

||||

ALEPH_ALPHA_API_KEY = getpass()

|

||||

```

|

||||

|

||||

|

||||

## LLM

|

||||

|

||||

See a [usage example](../modules/models/llms/integrations/aleph_alpha.ipynb).

|

||||

|

||||

```python

|

||||

from langchain.llms import AlephAlpha

|

||||

```

|

||||

|

||||

## Text Embedding Models

|

||||

|

||||

See a [usage example](../modules/models/text_embedding/examples/aleph_alpha.ipynb).

|

||||

|

||||

```python

|

||||

from langchain.embeddings import AlephAlphaSymmetricSemanticEmbedding, AlephAlphaAsymmetricSemanticEmbedding

|

||||

```

|

||||

@@ -1,29 +0,0 @@

|

||||

# Argilla

|

||||

|

||||

|

||||

|

||||

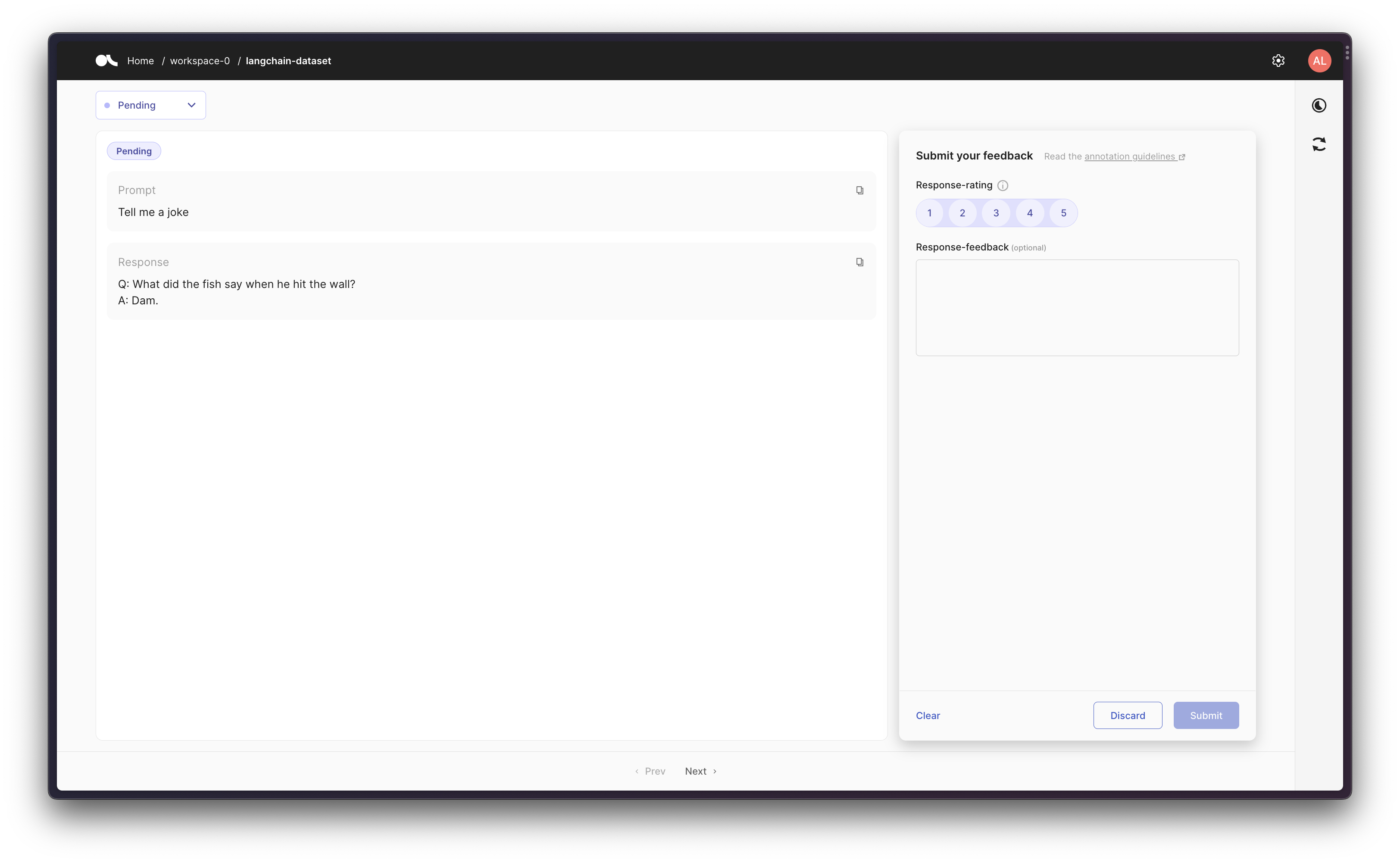

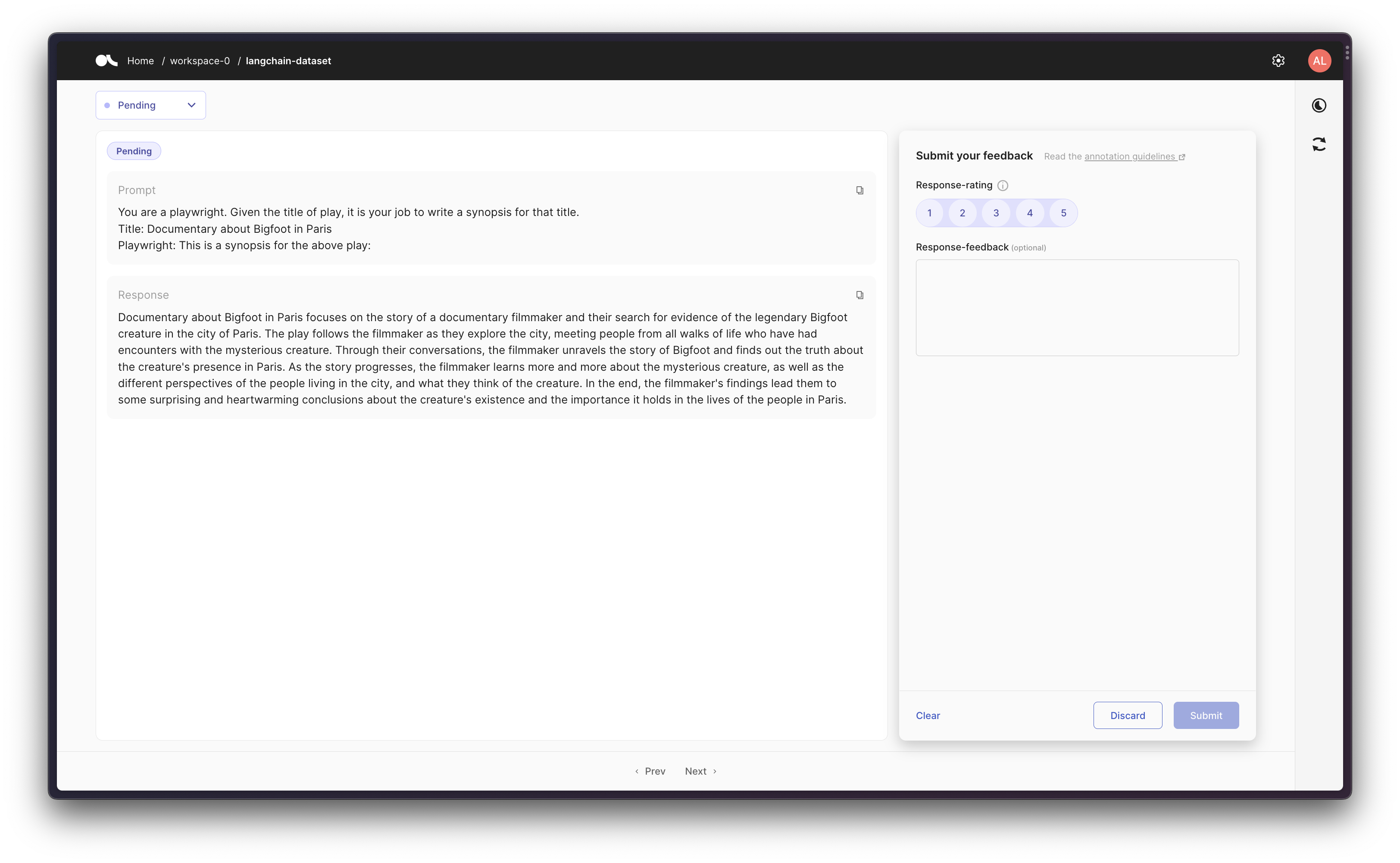

>[Argilla](https://argilla.io/) is an open-source data curation platform for LLMs.

|

||||

> Using Argilla, everyone can build robust language models through faster data curation

|

||||

> using both human and machine feedback. We provide support for each step in the MLOps cycle,

|

||||

> from data labeling to model monitoring.

|

||||

|

||||

## Installation and Setup

|

||||

|

||||

First, you'll need to install the `argilla` Python package as follows:

|

||||

|

||||

```bash

|

||||

pip install argilla --upgrade

|

||||

```

|

||||

|

||||

If you already have an Argilla Server running, then you're good to go. If you don't, refer to [Argilla - 🚀 Quickstart](https://docs.argilla.io/en/latest/getting_started/quickstart.html#Running-Argilla-Quickstart) to deploy Argilla on HuggingFace Spaces, locally, or on a server.

|

||||

|

||||

## Tracking

|

||||

|

||||

See a [usage example of `ArgillaCallbackHandler`](../modules/callbacks/examples/examples/argilla.ipynb).

|

||||

|

||||

```python

|

||||

from langchain.callbacks import ArgillaCallbackHandler

|

||||

```

|

||||

@@ -1,28 +0,0 @@

|

||||

# Arxiv

|

||||

|

||||

>[arXiv](https://arxiv.org/) is an open-access archive for 2 million scholarly articles in the fields of physics,

|

||||

> mathematics, computer science, quantitative biology, quantitative finance, statistics, electrical engineering and

|

||||

> systems science, and economics.

|

||||

|

||||

|

||||

## Installation and Setup

|

||||

|

||||

First, install the `arxiv` Python package.

|

||||

|

||||

```bash

|

||||

pip install arxiv

|

||||

```

|

||||

|

||||

Second, install the `PyMuPDF` Python package, which converts PDF files downloaded from the `arxiv.org` site into text.

|

||||

|

||||

```bash

|

||||

pip install pymupdf

|

||||

```

|

||||

|

||||

## Document Loader

|

||||

|

||||

See a [usage example](../modules/indexes/document_loaders/examples/arxiv.ipynb).

|

||||

|

||||

```python

|

||||

from langchain.document_loaders import ArxivLoader

|

||||

```

|

||||

@@ -1,25 +0,0 @@

|

||||

# AWS S3 Directory

|

||||

|

||||

>[Amazon Simple Storage Service (Amazon S3)](https://docs.aws.amazon.com/AmazonS3/latest/userguide/using-folders.html) is an object storage service.

|

||||

|

||||

>[AWS S3 Directory](https://docs.aws.amazon.com/AmazonS3/latest/userguide/using-folders.html)

|

||||

|

||||

>[AWS S3 Buckets](https://docs.aws.amazon.com/AmazonS3/latest/userguide/UsingBucket.html)

|

||||

|

||||

|

||||

## Installation and Setup

|

||||

|

||||

```bash

|

||||

pip install boto3

|

||||

```

|

||||

|

||||

|

||||

## Document Loader

|

||||

|

||||

See a [usage example for S3DirectoryLoader](../modules/indexes/document_loaders/examples/aws_s3_directory.ipynb).

|

||||

|

||||

See a [usage example for S3FileLoader](../modules/indexes/document_loaders/examples/aws_s3_file.ipynb).

|

||||

|

||||

```python

|

||||

from langchain.document_loaders import S3DirectoryLoader, S3FileLoader

|

||||

```

|

||||

@@ -1,16 +0,0 @@

|

||||

# AZLyrics

|

||||

|

||||

>[AZLyrics](https://www.azlyrics.com/) is a large, legal, every day growing collection of lyrics.

|

||||

|

||||

## Installation and Setup

|

||||

|

||||

There isn't any special setup for it.

|

||||

|

||||

|

||||

## Document Loader

|

||||

|

||||

See a [usage example](../modules/indexes/document_loaders/examples/azlyrics.ipynb).

|

||||

|

||||

```python

|

||||

from langchain.document_loaders import AZLyricsLoader

|

||||

```

|

||||

@@ -1,36 +0,0 @@

|

||||

# Azure Blob Storage

|

||||

|

||||

>[Azure Blob Storage](https://learn.microsoft.com/en-us/azure/storage/blobs/storage-blobs-introduction) is Microsoft's object storage solution for the cloud. Blob Storage is optimized for storing massive amounts of unstructured data. Unstructured data is data that doesn't adhere to a particular data model or definition, such as text or binary data.

|

||||

|

||||

>[Azure Files](https://learn.microsoft.com/en-us/azure/storage/files/storage-files-introduction) offers fully managed

|

||||

> file shares in the cloud that are accessible via the industry standard Server Message Block (`SMB`) protocol,

|

||||

> Network File System (`NFS`) protocol, and `Azure Files REST API`. `Azure Files` is based on `Azure Blob Storage`.

|

||||

|

||||

`Azure Blob Storage` is designed for:

|

||||

- Serving images or documents directly to a browser.

|

||||

- Storing files for distributed access.

|

||||

- Streaming video and audio.

|

||||

- Writing to log files.

|

||||

- Storing data for backup and restore, disaster recovery, and archiving.

|

||||

- Storing data for analysis by an on-premises or Azure-hosted service.

|

||||

|

||||

## Installation and Setup

|

||||

|

||||

```bash

|

||||

pip install azure-storage-blob

|

||||

```

|

||||

|

||||

|

||||

## Document Loader

|

||||

|

||||

See a [usage example for the Azure Blob Storage](../modules/indexes/document_loaders/examples/azure_blob_storage_container.ipynb).

|

||||

|

||||

```python

|

||||

from langchain.document_loaders import AzureBlobStorageContainerLoader

|

||||

```

|

||||

|

||||

See a [usage example for the Azure Files](../modules/indexes/document_loaders/examples/azure_blob_storage_file.ipynb).

|

||||

|

||||

```python

|

||||

from langchain.document_loaders import AzureBlobStorageFileLoader

|

||||

```

|

||||

@@ -1,50 +0,0 @@

|

||||

# Azure OpenAI

|

||||

|

||||

>[Microsoft Azure](https://en.wikipedia.org/wiki/Microsoft_Azure), often referred to as `Azure`, is a cloud computing platform run by `Microsoft`, which offers access, management, and development of applications and services through global data centers. It provides a range of capabilities, including software as a service (SaaS), platform as a service (PaaS), and infrastructure as a service (IaaS). `Microsoft Azure` supports many programming languages, tools, and frameworks, including Microsoft-specific and third-party software and systems.

|

||||

|

||||

|

||||

>[Azure OpenAI](https://learn.microsoft.com/en-us/azure/cognitive-services/openai/) is an `Azure` service with powerful language models from `OpenAI` including the `GPT-3`, `Codex` and `Embeddings model` series for content generation, summarization, semantic search, and natural language to code translation.

|

||||

|

||||

|

||||

## Installation and Setup

|

||||

|

||||

```bash

|

||||

pip install openai

|

||||

pip install tiktoken

|

||||

```

|

||||

|

||||

|

||||

Set the environment variables to get access to the `Azure OpenAI` service.

|

||||

|

||||

```python

|

||||

import os

|

||||

|

||||

os.environ["OPENAI_API_TYPE"] = "azure"

|

||||

os.environ["OPENAI_API_BASE"] = "https://<your-endpoint>.openai.azure.com/"

|

||||

os.environ["OPENAI_API_KEY"] = "your AzureOpenAI key"

|

||||

os.environ["OPENAI_API_VERSION"] = "2023-03-15-preview"

|

||||

```
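Because these four variables are easy to leave half-configured, a quick check before creating any model object can save a confusing error later. The following is a minimal sketch, not part of LangChain or the OpenAI client: the helper `find_config_problems` and its messages are hypothetical, and the only names taken from the text above are the four environment variables.

```python
REQUIRED_VARS = (
    "OPENAI_API_TYPE",
    "OPENAI_API_BASE",
    "OPENAI_API_KEY",
    "OPENAI_API_VERSION",
)


def find_config_problems(env):
    """Return a list of human-readable problems found in the mapping `env`."""
    # Report every required variable that is missing or empty.
    problems = [f"{name} is not set" for name in REQUIRED_VARS if not env.get(name)]
    if env.get("OPENAI_API_TYPE") and env["OPENAI_API_TYPE"] != "azure":
        problems.append("OPENAI_API_TYPE should be 'azure'")
    # Catch a copy-pasted template value such as "<your-endpoint>".
    base = env.get("OPENAI_API_BASE", "")
    if base and ("<" in base or ">" in base):
        problems.append("OPENAI_API_BASE still contains a placeholder")
    return problems


example_env = {
    "OPENAI_API_TYPE": "azure",
    "OPENAI_API_BASE": "https://<your-endpoint>.openai.azure.com/",
    "OPENAI_API_VERSION": "2023-03-15-preview",
}
example_problems = find_config_problems(example_env)
```

Passing `os.environ` instead of `example_env` would check the real process environment in the same way.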

|

||||

|

||||

## LLM

|

||||

|

||||

See a [usage example](../modules/models/llms/integrations/azure_openai_example.ipynb).

|

||||

|

||||

```python

|

||||

from langchain.llms import AzureOpenAI

|

||||

```

|

||||

|

||||

## Text Embedding Models

|

||||

|

||||

See a [usage example](../modules/models/text_embedding/examples/azureopenai.ipynb)

|

||||

|

||||

```python

|

||||

from langchain.embeddings import OpenAIEmbeddings

|

||||

```

|

||||

|

||||

## Chat Models

|

||||

|

||||

See a [usage example](../modules/models/chat/integrations/azure_chat_openai.ipynb)

|

||||

|

||||

```python

|

||||

from langchain.chat_models import AzureChatOpenAI

|

||||

```

|

||||

@@ -1,24 +0,0 @@

|

||||

# Amazon Bedrock

|

||||

|

||||

>[Amazon Bedrock](https://aws.amazon.com/bedrock/) is a fully managed service that makes foundation models (FMs) from leading AI startups and Amazon available via an API, so you can choose from a wide range of FMs to find the model that is best suited for your use case.

|

||||

|

||||

## Installation and Setup

|

||||

|

||||

```bash

|

||||

pip install boto3

|

||||

```

|

||||

|

||||

## LLM

|

||||

|

||||

See a [usage example](../modules/models/llms/integrations/bedrock.ipynb).

|

||||

|

||||

```python

|

||||

from langchain import Bedrock

|

||||

```

|

||||

|

||||

## Text Embedding Models

|

||||

|

||||

See a [usage example](../modules/models/text_embedding/examples/bedrock.ipynb).

|

||||

```python

|

||||

from langchain.embeddings import BedrockEmbeddings

|

||||

```

|

||||

@@ -1,17 +0,0 @@

|

||||

# BiliBili

|

||||

|

||||

>[Bilibili](https://www.bilibili.tv/) is one of the most beloved long-form video sites in China.

|

||||

|

||||

## Installation and Setup

|

||||

|

||||

```bash

|

||||

pip install bilibili-api-python

|

||||

```

|

||||

|

||||

## Document Loader

|

||||

|

||||

See a [usage example](../modules/indexes/document_loaders/examples/bilibili.ipynb).

|

||||

|

||||

```python

|

||||

from langchain.document_loaders import BiliBiliLoader

|

||||

```

|

||||

@@ -1,22 +0,0 @@

|

||||

# Blackboard

|

||||

|

||||

>[Blackboard Learn](https://en.wikipedia.org/wiki/Blackboard_Learn) (previously the `Blackboard Learning Management System`)

|

||||

> is a web-based virtual learning environment and learning management system developed by Blackboard Inc.

|

||||

> The software features course management, customizable open architecture, and scalable design that allows

|

||||

> integration with student information systems and authentication protocols. It may be installed on local servers,

|

||||

> hosted by `Blackboard ASP Solutions`, or provided as Software as a Service hosted on Amazon Web Services.

|

||||

> Its main purposes are stated to include the addition of online elements to courses traditionally delivered

|

||||

> face-to-face and development of completely online courses with few or no face-to-face meetings.

|

||||

|

||||

## Installation and Setup

|

||||

|

||||

There isn't any special setup for it.

|

||||

|

||||

## Document Loader

|

||||

|

||||

See a [usage example](../modules/indexes/document_loaders/examples/blackboard.ipynb).

|

||||

|

||||

```python

|

||||

from langchain.document_loaders import BlackboardLoader

|

||||

|

||||

```

|

||||

@@ -1,22 +1,13 @@

|

||||

{

|

||||

"cells": [

|

||||

{

|

||||

"attachments": {},

|

||||

"cell_type": "markdown",

|

||||

"metadata": {},

|

||||

"source": [

|

||||

"# ClearML\n",

|

||||

"# ClearML Integration\n",

|

||||

"\n",

|

||||

"> [ClearML](https://github.com/allegroai/clearml) is a ML/DL development and production suite, it contains 5 main modules:\n",

|

||||

"> - `Experiment Manager` - Automagical experiment tracking, environments and results\n",

|

||||

"> - `MLOps` - Orchestration, Automation & Pipelines solution for ML/DL jobs (K8s / Cloud / bare-metal)\n",

|

||||

"> - `Data-Management` - Fully differentiable data management & version control solution on top of object-storage (S3 / GS / Azure / NAS)\n",

|

||||

"> - `Model-Serving` - cloud-ready Scalable model serving solution!\n",

|

||||

" Deploy new model endpoints in under 5 minutes\n",

|

||||

" Includes optimized GPU serving support backed by Nvidia-Triton\n",

|

||||

" with out-of-the-box Model Monitoring\n",

|

||||

"> - `Fire Reports` - Create and share rich MarkDown documents supporting embeddable online content\n",

|

||||

"\n",

|

||||

"In order to properly keep track of your langchain experiments and their results, you can enable the `ClearML` integration. We use the `ClearML Experiment Manager` that neatly tracks and organizes all your experiment runs.\n",

|

||||

"In order to properly keep track of your langchain experiments and their results, you can enable the ClearML integration. ClearML is an experiment manager that neatly tracks and organizes all your experiment runs.\n",

|

||||

"\n",

|

||||

"<a target=\"_blank\" href=\"https://colab.research.google.com/github/hwchase17/langchain/blob/master/docs/ecosystem/clearml_tracking.ipynb\">\n",

|

||||

" <img src=\"https://colab.research.google.com/assets/colab-badge.svg\" alt=\"Open In Colab\"/>\n",

|

||||

@@ -24,32 +15,11 @@

|

||||

]

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"metadata": {

|

||||

"tags": []

|

||||

},

|

||||

"source": [

|

||||

"## Installation and Setup"

|

||||

]

|

||||

},

|

||||

{

|

||||

"cell_type": "code",

|

||||

"execution_count": null,

|

||||

"metadata": {},

|

||||

"outputs": [],

|

||||

"source": [

|

||||

"!pip install clearml\n",

|

||||

"!pip install pandas\n",

|

||||

"!pip install textstat\n",

|

||||

"!pip install spacy\n",

|

||||

"!python -m spacy download en_core_web_sm"

|

||||

]

|

||||

},

|

||||

{

|

||||

"attachments": {},

|

||||

"cell_type": "markdown",

|

||||

"metadata": {},

|

||||

"source": [

|

||||

"### Getting API Credentials\n",

|

||||

"## Getting API Credentials\n",

|

||||

"\n",

|

||||

"We'll be using quite some APIs in this notebook, here is a list and where to get them:\n",

|

||||

"\n",

|

||||

@@ -73,21 +43,24 @@

|

||||

]

|

||||

},

|

||||

{

|

||||

"attachments": {},

|

||||

"cell_type": "markdown",

|

||||

"metadata": {},

|

||||

"source": [

|

||||

"## Callbacks"

|

||||

"## Setting Up"

|

||||

]

|

||||

},

|

||||

{

|

||||

"cell_type": "code",

|

||||

"execution_count": 2,

|

||||

"metadata": {

|

||||

"tags": []

|

||||

},

|

||||

"execution_count": null,

|

||||

"metadata": {},

|

||||

"outputs": [],

|

||||

"source": [

|

||||

"from langchain.callbacks import ClearMLCallbackHandler"

|

||||

"!pip install clearml\n",

|

||||

"!pip install pandas\n",

|

||||

"!pip install textstat\n",

|

||||

"!pip install spacy\n",

|

||||

"!python -m spacy download en_core_web_sm"

|

||||

]

|

||||

},

|

||||

{

|

||||

@@ -105,7 +78,7 @@

|

||||

],

|

||||

"source": [

|

||||

"from datetime import datetime\n",

|

||||

"from langchain.callbacks import StdOutCallbackHandler\n",

|

||||

"from langchain.callbacks import ClearMLCallbackHandler, StdOutCallbackHandler\n",

|

||||

"from langchain.llms import OpenAI\n",

|

||||

"\n",

|

||||

"# Setup and use the ClearML Callback\n",

|

||||

@@ -125,10 +98,11 @@

|

||||

]

|

||||

},

|

||||

{

|

||||

"attachments": {},

|

||||

"cell_type": "markdown",

|

||||

"metadata": {},

|

||||

"source": [

|

||||

"### Scenario 1: Just an LLM\n",

|

||||

"## Scenario 1: Just an LLM\n",

|

||||

"\n",

|

||||

"First, let's just run a single LLM a few times and capture the resulting prompt-answer conversation in ClearML"

|

||||

]

|

||||

@@ -370,6 +344,7 @@

|

||||

]

|

||||

},

|

||||

{

|

||||

"attachments": {},

|

||||

"cell_type": "markdown",

|

||||

"metadata": {},

|

||||

"source": [

|

||||

@@ -381,10 +356,11 @@

|

||||

]

|

||||

},

|

||||

{

|

||||

"attachments": {},

|

||||

"cell_type": "markdown",

|

||||

"metadata": {},

|

||||

"source": [

|

||||

"### Scenario 2: Creating an agent with tools\n",

|

||||

"## Scenario 2: Creating an agent with tools\n",

|

||||

"\n",

|

||||

"To show a more advanced workflow, let's create an agent with access to tools. The way ClearML tracks the results is not different though, only the table will look slightly different as there are other types of actions taken when compared to the earlier, simpler example.\n",

|

||||

"\n",

|

||||

@@ -560,10 +536,11 @@

|

||||

]

|

||||

},

|

||||

{

|

||||

"attachments": {},

|

||||

"cell_type": "markdown",

|

||||

"metadata": {},

|

||||

"source": [

|

||||

"### Tips and Next Steps\n",

|

||||

"## Tips and Next Steps\n",

|

||||

"\n",

|

||||

"- Make sure you always use a unique `name` argument for the `clearml_callback.flush_tracker` function. If not, the model parameters used for a run will override the previous run!\n",

|

||||

"\n",

|

||||

@@ -582,7 +559,7 @@

|

||||

],

|

||||

"metadata": {

|

||||

"kernelspec": {

|

||||

"display_name": "Python 3 (ipykernel)",

|

||||

"display_name": ".venv",

|

||||

"language": "python",

|

||||

"name": "python3"

|

||||

},

|

||||

@@ -596,8 +573,9 @@

|

||||

"name": "python",

|

||||

"nbconvert_exporter": "python",

|

||||

"pygments_lexer": "ipython3",

|

||||

"version": "3.10.6"

|

||||

"version": "3.10.9"

|

||||

},

|

||||

"orig_nbformat": 4,

|

||||

"vscode": {

|

||||

"interpreter": {

|

||||

"hash": "a53ebf4a859167383b364e7e7521d0add3c2dbbdecce4edf676e8c4634ff3fbb"

|

||||

@@ -605,5 +583,5 @@

|

||||

}

|

||||

},

|

||||

"nbformat": 4,

|

||||

"nbformat_minor": 4

|

||||

"nbformat_minor": 2

|

||||

}

|

||||

|

||||

@@ -1,16 +0,0 @@

|

||||

# College Confidential

|

||||

|

||||

>[College Confidential](https://www.collegeconfidential.com/) gives information on 3,800+ colleges and universities.

|

||||

|

||||

## Installation and Setup

|

||||

|

||||

There isn't any special setup for it.

|

||||

|

||||

|

||||

## Document Loader

|

||||

|

||||

See a [usage example](../modules/indexes/document_loaders/examples/college_confidential.ipynb).

|

||||

|

||||

```python

|

||||

from langchain.document_loaders import CollegeConfidentialLoader

|

||||

```

|

||||

@@ -1,22 +0,0 @@

|

||||

# Confluence

|

||||

|

||||

>[Confluence](https://www.atlassian.com/software/confluence) is a wiki collaboration platform that saves and organizes all of the project-related material. `Confluence` is a knowledge base that primarily handles content management activities.

|

||||

|

||||

|

||||

## Installation and Setup

|

||||

|

||||

```bash

|

||||

pip install atlassian-python-api

|

||||

```

|

||||

|

||||

We need to set up `username/api_key` or `OAuth2 login`.

|

||||

See [instructions](https://support.atlassian.com/atlassian-account/docs/manage-api-tokens-for-your-atlassian-account/).

|

||||

|

||||

|

||||

## Document Loader

|

||||

|

||||

See a [usage example](../modules/indexes/document_loaders/examples/confluence.ipynb).

|

||||

|

||||

```python

|

||||

from langchain.document_loaders import ConfluenceLoader

|

||||

```

|

||||

@@ -7,14 +7,6 @@ It is broken into two parts: installation and setup, and then references to spec

|

||||

- Get your DeepInfra API key [here](https://deepinfra.com/).

|

||||

- Get a DeepInfra API key and set it as an environment variable (`DEEPINFRA_API_TOKEN`)
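A small guard can make a missing token fail early with a clear message. This is a minimal sketch under the assumption that the token is read from `DEEPINFRA_API_TOKEN`, as stated above; the helper `get_deepinfra_token` and the placeholder token value are hypothetical and not part of LangChain.

```python
import os


def get_deepinfra_token(env=None):
    """Return the token from `DEEPINFRA_API_TOKEN`, or raise with a hint."""
    # Fall back to the real process environment when no mapping is given.
    env = os.environ if env is None else env
    token = env.get("DEEPINFRA_API_TOKEN", "").strip()
    if not token:
        raise RuntimeError("Set DEEPINFRA_API_TOKEN before using the DeepInfra wrapper.")
    return token


# Example with an explicit mapping, so nothing depends on the real environment.
token = get_deepinfra_token({"DEEPINFRA_API_TOKEN": "example-token"})
```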

|

||||

|

||||

## Available Models

|

||||

|

||||

DeepInfra provides a range of Open Source LLMs ready for deployment.

|

||||

You can list supported models [here](https://deepinfra.com/models?type=text-generation).

|

||||

google/flan\* models can be viewed [here](https://deepinfra.com/models?type=text2text-generation).

|

||||

|

||||

You can view a list of request and response parameters [here](https://deepinfra.com/databricks/dolly-v2-12b#API)

|

||||

|

||||

## Wrappers

|

||||

|

||||

### LLM

|

||||

|

||||

@@ -1,18 +0,0 @@

|

||||

# Diffbot

|

||||

|

||||

>[Diffbot](https://docs.diffbot.com/docs) is a service to read web pages. Unlike traditional web scraping tools,

|

||||

> `Diffbot` doesn't require any rules to read the content on a page.

|

||||

>It starts with computer vision, which classifies a page into one of 20 possible types. Content is then interpreted by a machine learning model trained to identify the key attributes on a page based on its type.

|

||||

>The result is a website transformed into clean-structured data (like JSON or CSV), ready for your application.

|

||||

|

||||

## Installation and Setup

|

||||

|

||||

Read the [instructions](https://docs.diffbot.com/reference/authentication) on how to get the Diffbot API token.

|

||||

|

||||

## Document Loader

|

||||

|

||||

See a [usage example](../modules/indexes/document_loaders/examples/diffbot.ipynb).

|

||||

|

||||

```python

|

||||

from langchain.document_loaders import DiffbotLoader

|

||||

```

|

||||

@@ -1,30 +0,0 @@

|

||||

# Discord

|

||||

|

||||

>[Discord](https://discord.com/) is a VoIP and instant messaging social platform. Users have the ability to communicate

|

||||

> with voice calls, video calls, text messaging, media and files in private chats or as part of communities called

|

||||

> "servers". A server is a collection of persistent chat rooms and voice channels which can be accessed via invite links.

|

||||

|

||||

## Installation and Setup

|

||||

|

||||

|

||||

```bash

|

||||

pip install pandas

|

||||

```

|

||||

|

||||

Follow these steps to download your `Discord` data:

|

||||

|

||||

1. Go to your **User Settings**

|

||||

2. Then go to **Privacy and Safety**

|

||||

3. Head over to **Request all of my Data** and click the **Request Data** button

|

||||

|

||||

It might take 30 days for you to receive your data. You'll receive an email at the address registered with Discord. That email will have a download button that lets you download your personal Discord data.

|

||||

|

||||

|

||||

## Document Loader

|

||||

|

||||

See a [usage example](../modules/indexes/document_loaders/examples/discord.ipynb).

|

||||

|

||||

```python

|

||||

from langchain.document_loaders import DiscordChatLoader

|

||||

```

|

||||

@@ -1,20 +1,25 @@

|

||||

# Docugami

|

||||

|

||||

>[Docugami](https://docugami.com) converts business documents into a Document XML Knowledge Graph, generating forests

|

||||

> of XML semantic trees representing entire documents. This is a rich representation that includes the semantic and

|

||||

> structural characteristics of various chunks in the document as an XML tree.

|

||||

This page covers how to use [Docugami](https://docugami.com) within LangChain.

|

||||

|

||||

## Installation and Setup

|

||||

## What is Docugami?

|

||||

|

||||

Docugami converts business documents into a Document XML Knowledge Graph, generating forests of XML semantic trees representing entire documents. This is a rich representation that includes the semantic and structural characteristics of various chunks in the document as an XML tree.

|

||||

|

||||

```bash

|

||||

pip install lxml

|

||||

```

|

||||

## Quick start

|

||||

|

||||

## Document Loader

|

||||

1. Create a Docugami workspace: <a href="http://www.docugami.com">http://www.docugami.com</a> (free trials available)

|

||||

2. Add your documents (PDF, DOCX or DOC) and allow Docugami to ingest and cluster them into sets of similar documents, e.g. NDAs, Lease Agreements, and Service Agreements. There is no fixed set of document types supported by the system; the clusters created depend on your particular documents, and you can [change the docset assignments](https://help.docugami.com/home/working-with-the-doc-sets-view) later.

|

||||

3. Create an access token via the Developer Playground for your workspace. Detailed instructions: https://help.docugami.com/home/docugami-api

|

||||

4. Explore the Docugami API at <a href="https://api-docs.docugami.com">https://api-docs.docugami.com</a> to get a list of your processed docset IDs, or just the document IDs for a particular docset.

|

||||

5. Use the DocugamiLoader as detailed in [this notebook](../modules/indexes/document_loaders/examples/docugami.ipynb), to get rich semantic chunks for your documents.

6. Optionally, build and publish one or more [reports or abstracts](https://help.docugami.com/home/reports). This helps Docugami improve the semantic XML with better tags based on your preferences, which are then added to the DocugamiLoader output as metadata. Use techniques like [self-querying retriever](https://python.langchain.com/en/latest/modules/indexes/retrievers/examples/self_query_retriever.html) to do high accuracy Document QA.

|

||||

|

||||

See a [usage example](../modules/indexes/document_loaders/examples/docugami.ipynb).

|

||||

# Advantages vs Other Chunking Techniques

|

||||

|

||||

```python

|

||||

from langchain.document_loaders import DocugamiLoader

|

||||

```

|

||||

Appropriate chunking of your documents is critical for retrieval from documents. Many chunking techniques exist, including simple ones that rely on whitespace and recursive chunk splitting based on character length. Docugami offers a different approach:

|

||||

|

||||

1. **Intelligent Chunking:** Docugami breaks down every document into a hierarchical semantic XML tree of chunks of varying sizes, from single words or numerical values to entire sections. These chunks follow the semantic contours of the document, providing a more meaningful representation than arbitrary length or simple whitespace-based chunking.

|

||||

2. **Structured Representation:** In addition, the XML tree indicates the structural contours of every document, using attributes denoting headings, paragraphs, lists, tables, and other common elements, and does that consistently across all supported document formats, such as scanned PDFs or DOCX files. It appropriately handles long-form document characteristics like page headers/footers or multi-column flows for clean text extraction.

|

||||

3. **Semantic Annotations:** Chunks are annotated with semantic tags that are coherent across the document set, facilitating consistent hierarchical queries across multiple documents, even if they are written and formatted differently. For example, in a set of lease agreements, you can easily identify key provisions like the Landlord, Tenant, or Renewal Date, as well as more complex information such as the wording of any sub-lease provision or whether a specific jurisdiction has an exception section within a Termination Clause.

|

||||

4. **Additional Metadata:** Chunks are also annotated with additional metadata if a user has been using Docugami. This additional metadata can be used for high-accuracy Document QA without context window restrictions. See the detailed code walk-through in [this notebook](../modules/indexes/document_loaders/examples/docugami.ipynb).
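
The idea of chunks that follow a semantic XML tree can be sketched with the standard library alone. The XML below is a made-up lease fragment, not real Docugami output, and `leaf_chunks` is a hypothetical helper; the actual loader and its tag vocabulary may differ.

```python
import xml.etree.ElementTree as ET

# Hypothetical semantic XML for one lease; the tag names are illustrative only.
SAMPLE = """<Lease>
  <Parties>
    <Landlord>Acme Properties LLC</Landlord>
    <Tenant>Jane Doe</Tenant>
  </Parties>
  <TerminationClause>
    <RenewalDate>2024-01-01</RenewalDate>
  </TerminationClause>
</Lease>"""


def leaf_chunks(xml_text):
    """Return one chunk per leaf element, keeping its tag and tree path as metadata."""
    root = ET.fromstring(xml_text)
    chunks = []

    def walk(node, path):
        path = path + [node.tag]
        children = list(node)
        if not children:
            text = (node.text or "").strip()
            if text:
                chunks.append(
                    {"text": text, "tag": node.tag, "path": "/".join(path)}
                )
        for child in children:
            walk(child, path)

    walk(root, [])
    return chunks


for chunk in leaf_chunks(SAMPLE):
    print(chunk["path"], "->", chunk["text"])
```

Keeping the path alongside the text is what would allow a query such as "all `Landlord` values" to work across differently formatted documents.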

|

||||

|

||||

@@ -1,19 +0,0 @@

|

||||

# DuckDB

|

||||

|

||||

>[DuckDB](https://duckdb.org/) is an in-process SQL OLAP database management system.

|

||||

|

||||

## Installation and Setup

|

||||

|

||||

First, install the `duckdb` Python package.

|

||||

|

||||

```bash

|

||||

pip install duckdb

|

||||

```

|

||||

|

||||

## Document Loader

|

||||

|

||||

See a [usage example](../modules/indexes/document_loaders/examples/duckdb.ipynb).

|

||||

|

||||

```python

|

||||

from langchain.document_loaders import DuckDBLoader

|

||||

```

|

||||

@@ -1,20 +0,0 @@

|

||||

# EverNote

|

||||

|

||||

>[EverNote](https://evernote.com/) is intended for archiving and creating notes in which photos, audio and saved web content can be embedded. Notes are stored in virtual "notebooks" and can be tagged, annotated, edited, searched, and exported.

|

||||

|

||||

## Installation and Setup

|

||||

|

||||

First, install the `lxml` and `html2text` Python packages.

|

||||

|

||||

```bash

|

||||

pip install lxml

|

||||

pip install html2text

|

||||

```

|

||||

|

||||

## Document Loader

|

||||

|

||||

See a [usage example](../modules/indexes/document_loaders/examples/evernote.ipynb).

|

||||

|

||||

```python

|

||||

from langchain.document_loaders import EverNoteLoader

|

||||

```

|

||||

@@ -1,21 +0,0 @@

|

||||

# Facebook Chat

|

||||

|

||||

>[Messenger](https://en.wikipedia.org/wiki/Messenger_(software)) is an American proprietary instant messaging app and

|

||||

> platform developed by `Meta Platforms`. Originally developed as `Facebook Chat` in 2008, the company revamped its

|

||||

> messaging service in 2010.

|

||||

|

||||

## Installation and Setup

|

||||

|

||||

First, install the `pandas` Python package.

|

||||

|

||||

```bash

|

||||

pip install pandas

|

||||

```

|

||||

|

||||

## Document Loader

|

||||

|

||||

See a [usage example](../modules/indexes/document_loaders/examples/facebook_chat.ipynb).

|

||||

|

||||

```python

|

||||

from langchain.document_loaders import FacebookChatLoader

|

||||

```

|

||||

@@ -1,21 +0,0 @@

|

||||

# Figma

|

||||

|

||||

>[Figma](https://www.figma.com/) is a collaborative web application for interface design.

|

||||

|

||||

## Installation and Setup

|

||||

|

||||

The Figma API requires an `access token`, `node_ids`, and a `file key`.

|

||||

|

||||

The `file key` can be pulled from the URL: `https://www.figma.com/file/{filekey}/sampleFilename`

|

||||

|

||||

`Node IDs` are also available in the URL. Click on anything and look for the '?node-id={node_id}' param.

|

||||

|

||||

`Access token` [instructions](https://help.figma.com/hc/en-us/articles/8085703771159-Manage-personal-access-tokens).
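
As a rough illustration of pulling the `file key` and `Node IDs` out of a URL, here is a standard-library sketch. The `parse_figma_url` helper and the sample URL are hypothetical, and the sketch assumes the URL follows the `/file/{filekey}/...` shape shown above with node IDs in a `node-id` query parameter.

```python
from urllib.parse import parse_qs, urlparse


def parse_figma_url(url):
    """Return (file_key, node_ids) extracted from a Figma file URL."""
    parsed = urlparse(url)
    parts = [p for p in parsed.path.split("/") if p]
    # Expected path shape: /file/{filekey}/sampleFilename
    file_key = parts[1] if len(parts) >= 2 and parts[0] == "file" else None
    # parse_qs also decodes percent-encoded values such as "0%3A1" -> "0:1"
    node_ids = parse_qs(parsed.query).get("node-id", [])
    return file_key, node_ids


print(parse_figma_url("https://www.figma.com/file/abc123/sampleFilename?node-id=0%3A1"))
```

The access token is not part of the URL and still has to be created separately.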

|

||||

|

||||

## Document Loader

|

||||

|

||||

See a [usage example](../modules/indexes/document_loaders/examples/figma.ipynb).

|

||||

|

||||

```python

|

||||

from langchain.document_loaders import FigmaFileLoader

|

||||

```

|

||||

@@ -1,19 +0,0 @@

|

||||

# Git

|

||||

|

||||

>[Git](https://en.wikipedia.org/wiki/Git) is a distributed version control system that tracks changes in any set of computer files, usually used for coordinating work among programmers collaboratively developing source code during software development.

|

||||

|

||||

## Installation and Setup

|

||||

|

||||

First, install the `GitPython` Python package.

|

||||

|

||||

```bash

|

||||

pip install GitPython

|

||||

```

|

||||

|

||||

## Document Loader

|

||||

|

||||

See a [usage example](../modules/indexes/document_loaders/examples/git.ipynb).

|

||||

|

||||

```python

|

||||

from langchain.document_loaders import GitLoader

|

||||

```

|

||||

@@ -1,15 +0,0 @@

|

||||

# GitBook

|

||||

|

||||

>[GitBook](https://docs.gitbook.com/) is a modern documentation platform where teams can document everything from products to internal knowledge bases and APIs.

|

||||

|

||||

## Installation and Setup

|

||||

|

||||

There isn't any special setup for it.

|

||||

|

||||

## Document Loader

|

||||

|

||||

See a [usage example](../modules/indexes/document_loaders/examples/gitbook.ipynb).

|

||||

|

||||

```python

|

||||

from langchain.document_loaders import GitbookLoader

|

||||

```

|

||||

@@ -1,20 +0,0 @@

|

||||

# Google BigQuery

|

||||

|

||||

>[Google BigQuery](https://cloud.google.com/bigquery) is a serverless and cost-effective enterprise data warehouse that works across clouds and scales with your data.

|

||||

`BigQuery` is a part of the `Google Cloud Platform`.

|

||||

|

||||

## Installation and Setup

|

||||

|

||||

First, install the `google-cloud-bigquery` Python package.

|

||||

|

||||

```bash

|

||||

pip install google-cloud-bigquery

|

||||

```

|

||||

|

||||

## Document Loader

|

||||

|

||||

See a [usage example](../modules/indexes/document_loaders/examples/google_bigquery.ipynb).

|

||||

|

||||

```python

|

||||

from langchain.document_loaders import BigQueryLoader

|

||||

```

|

||||

@@ -1,26 +0,0 @@

|

||||

# Google Cloud Storage

|

||||

|

||||

>[Google Cloud Storage](https://en.wikipedia.org/wiki/Google_Cloud_Storage) is a managed service for storing unstructured data.

|

||||

|

||||

## Installation and Setup

|

||||

|

||||

First, install the `google-cloud-storage` Python package.

|

||||

|

||||

```bash

|

||||

pip install google-cloud-storage

|

||||

```

|

||||

|

||||

## Document Loader

|

||||

|

||||

There are two loaders for `Google Cloud Storage`: the `Directory` loader and the `File` loader.

|

||||

|

||||

See a [usage example](../modules/indexes/document_loaders/examples/google_cloud_storage_directory.ipynb).

|

||||

|

||||

```python

|

||||

from langchain.document_loaders import GCSDirectoryLoader

|

||||

```

|

||||

See a [usage example](../modules/indexes/document_loaders/examples/google_cloud_storage_file.ipynb).

|

||||

|

||||

```python

|

||||

from langchain.document_loaders import GCSFileLoader

|

||||

```

|

||||

@@ -1,22 +0,0 @@

|

||||

# Google Drive

|

||||

|

||||

>[Google Drive](https://en.wikipedia.org/wiki/Google_Drive) is a file storage and synchronization service developed by Google.

|

||||

|

||||

Currently, only `Google Docs` are supported.

|

||||

|

||||

## Installation and Setup

|

||||

|

||||

First, install several Python packages.

|

||||

|

||||

```bash

|

||||

pip install google-api-python-client google-auth-httplib2 google-auth-oauthlib

|

||||

```

|

||||

|

||||

## Document Loader

|

||||

|

||||

See a [usage example and authorizing instructions](../modules/indexes/document_loaders/examples/google_drive.ipynb).

|

||||

|

||||

|

||||

```python

|

||||

from langchain.document_loaders import GoogleDriveLoader

|

||||

```

|

||||

@@ -1,15 +0,0 @@

|

||||

# Gutenberg

|

||||

|

||||

>[Project Gutenberg](https://www.gutenberg.org/about/) is an online library of free eBooks.

|

||||

|

||||

## Installation and Setup

|

||||

|

||||

There isn't any special setup for it.

|

||||

|

||||

## Document Loader

|

||||

|

||||

See a [usage example](../modules/indexes/document_loaders/examples/gutenberg.ipynb).

|

||||

|

||||

```python

|

||||

from langchain.document_loaders import GutenbergLoader

|

||||

```

|

||||

@@ -1,18 +0,0 @@

|

||||

# Hacker News

|

||||

|

||||

>[Hacker News](https://en.wikipedia.org/wiki/Hacker_News) (sometimes abbreviated as `HN`) is a social news

|

||||

> website focusing on computer science and entrepreneurship. It is run by the investment fund and startup

|

||||

> incubator `Y Combinator`. In general, content that can be submitted is defined as "anything that gratifies

|

||||

> one's intellectual curiosity."

|

||||

|

||||

## Installation and Setup

|

||||

|

||||

There isn't any special setup for it.

|

||||

|

||||

## Document Loader

|

||||

|

||||

See a [usage example](../modules/indexes/document_loaders/examples/hacker_news.ipynb).

|

||||

|

||||

```python

|

||||

from langchain.document_loaders import HNLoader

|

||||

```

|

||||

@@ -1,16 +0,0 @@

|

||||

# iFixit

|

||||

|

||||

>[iFixit](https://www.ifixit.com) is the largest, open repair community on the web. The site contains nearly 100k

|

||||

> repair manuals, 200k Questions & Answers on 42k devices, and all the data is licensed under `CC-BY-NC-SA 3.0`.

|

||||

|

||||

## Installation and Setup

|

||||

|

||||

There isn't any special setup for it.

|

||||

|

||||

## Document Loader

|

||||

|

||||

See a [usage example](../modules/indexes/document_loaders/examples/ifixit.ipynb).

|

||||

|

||||

```python

|

||||

from langchain.document_loaders import IFixitLoader

|

||||

```

|

||||

@@ -1,16 +0,0 @@

|

||||

# IMSDb

|

||||

|

||||

>[IMSDb](https://imsdb.com/) is the `Internet Movie Script Database`.

|

||||

>

|

||||

## Installation and Setup

|

||||

|

||||

There isn't any special setup for it.

|

||||

|

||||

## Document Loader

|

||||

|

||||

See a [usage example](../modules/indexes/document_loaders/examples/imsdb.ipynb).

|

||||

|

||||

|

||||

```python

|

||||

from langchain.document_loaders import IMSDbLoader

|

||||

```

|

||||

@@ -1,31 +0,0 @@

|

||||

# MediaWikiDump

|

||||

|

||||

>[MediaWiki XML Dumps](https://www.mediawiki.org/wiki/Manual:Importing_XML_dumps) contain the content of a wiki

|

||||

> (wiki pages with all their revisions), without the site-related data. An XML dump does not create a full backup

|

||||

> of the wiki database; the dump does not contain user accounts, images, edit logs, etc.

|

||||

|

||||

|

||||

## Installation and Setup

|

||||

|

||||

We need to install several Python packages.

|

||||

|

||||

The `mediawiki-utilities` supports XML schema 0.11 in unmerged branches.

|

||||

```bash

|

||||

pip install -qU git+https://github.com/mediawiki-utilities/python-mwtypes@updates_schema_0.11

|

||||

```

|

||||

|

||||

The `mediawiki-utilities mwxml` package has a bug; a fix PR is pending.

|

||||

|

||||

```bash

|

||||

pip install -qU git+https://github.com/gdedrouas/python-mwxml@xml_format_0.11

|

||||

pip install -qU mwparserfromhell

|

||||

```

|

||||

|

||||

## Document Loader

|

||||

|

||||

See a [usage example](../modules/indexes/document_loaders/examples/mediawikidump.ipynb).

|

||||

|

||||

|

||||

```python

|

||||

from langchain.document_loaders import MWDumpLoader

|

||||

```
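
To make the shape of such a dump concrete, the following standard-library sketch reads page titles and revision text from a tiny hand-written sample. It does not use `mwxml` or `MWDumpLoader`; the sample content and the `iter_pages` helper are hypothetical, and a real dump carries many more fields per page.

```python
import xml.etree.ElementTree as ET

# Minimal hand-written sample in the export-0.11 namespace (illustrative only).
SAMPLE_DUMP = """<mediawiki xmlns="http://www.mediawiki.org/xml/export-0.11/">
  <page>
    <title>Example</title>
    <revision><text>Hello ''wiki''</text></revision>
  </page>
</mediawiki>"""


def iter_pages(xml_text):
    """Yield (title, text) for each page, using the first revision found."""
    root = ET.fromstring(xml_text)
    # The root tag looks like "{namespace-uri}mediawiki"; reuse that URI for lookups.
    ns = {"mw": root.tag[1:root.tag.index("}")]}
    for page in root.findall("mw:page", ns):
        title = page.findtext("mw:title", namespaces=ns)
        text = page.findtext("mw:revision/mw:text", namespaces=ns)
        yield title, text


for title, text in iter_pages(SAMPLE_DUMP):
    print(title, "->", text)
```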

|

||||

@@ -1,22 +0,0 @@

|

||||

# Microsoft OneDrive

|

||||

|

||||

>[Microsoft OneDrive](https://en.wikipedia.org/wiki/OneDrive) (formerly `SkyDrive`) is a file-hosting service operated by Microsoft.

|

||||

|

||||

## Installation and Setup

|

||||

|

||||

First, install the `o365` Python package.

|

||||

|

||||

```bash

|

||||

pip install o365

|

||||

```

|

||||

|

||||

Then follow instructions [here](../modules/indexes/document_loaders/examples/microsoft_onedrive.ipynb).

|

||||

|

||||

## Document Loader

|

||||

|

||||

See a [usage example](../modules/indexes/document_loaders/examples/microsoft_onedrive.ipynb).

|

||||

|

||||

|

||||

```python

|

||||

from langchain.document_loaders import OneDriveLoader

|

||||

```

|

||||

@@ -1,16 +0,0 @@

|

||||

# Microsoft PowerPoint

|

||||

|

||||

>[Microsoft PowerPoint](https://en.wikipedia.org/wiki/Microsoft_PowerPoint) is a presentation program by Microsoft.

|

||||

|

||||

## Installation and Setup

|

||||

|

||||

There isn't any special setup for it.

|

||||

|

||||

## Document Loader

|

||||

|

||||

See a [usage example](../modules/indexes/document_loaders/examples/microsoft_powerpoint.ipynb).

|

||||

|

||||

|

||||

```python

|

||||

from langchain.document_loaders import UnstructuredPowerPointLoader

|

||||

```

|

||||

@@ -1,16 +0,0 @@

|

||||

# Microsoft Word

|

||||

|

||||

>[Microsoft Word](https://www.microsoft.com/en-us/microsoft-365/word) is a word processor developed by Microsoft.

|

||||

|

||||

## Installation and Setup

|

||||

|

||||

There isn't any special setup for it.

|

||||

|

||||

## Document Loader

|

||||

|

||||

See a [usage example](../modules/indexes/document_loaders/examples/microsoft_word.ipynb).

|

||||

|

||||

|

||||

```python

|

||||

from langchain.document_loaders import UnstructuredWordDocumentLoader

|

||||

```

|

||||

@@ -1,19 +0,0 @@

|

||||

# Modern Treasury

|

||||

|

||||

>[Modern Treasury](https://www.moderntreasury.com/) simplifies complex payment operations. It is a unified platform to power products and processes that move money.

|

||||

>- Connect to banks and payment systems

|

||||

>- Track transactions and balances in real-time

|

||||

>- Automate payment operations for scale

|

||||

|

||||

## Installation and Setup

|

||||

|

||||

There isn't any special setup for it.

|

||||

|

||||

## Document Loader

|

||||

|

||||

See a [usage example](../modules/indexes/document_loaders/examples/modern_treasury.ipynb).

|

||||

|

||||

|

||||

```python

|

||||

from langchain.document_loaders import ModernTreasuryLoader

|

||||

```

|

||||

@@ -1,27 +0,0 @@

|

||||

# Notion DB

|

||||

|

||||

>[Notion](https://www.notion.so/) is a collaboration platform with modified Markdown support that integrates kanban

|

||||

> boards, tasks, wikis and databases. It is an all-in-one workspace for notetaking, knowledge and data management,

|

||||

> and project and task management.

|

||||

|

||||

## Installation and Setup

|

||||

|

||||

All instructions are in examples below.

|

||||

|

||||

## Document Loader

|

||||

|

||||

We have two different loaders: `NotionDirectoryLoader` and `NotionDBLoader`.

|

||||

|

||||

See a [usage example for the NotionDirectoryLoader](../modules/indexes/document_loaders/examples/notion.ipynb).

|

||||

|

||||

|

||||

```python

|

||||

from langchain.document_loaders import NotionDirectoryLoader

|

||||

```

|

||||

|

||||

See a [usage example for the NotionDBLoader](../modules/indexes/document_loaders/examples/notiondb.ipynb).

|

||||

|

||||

|

||||

```python

|

||||

from langchain.document_loaders import NotionDBLoader

|

||||

```

|

||||

@@ -1,19 +0,0 @@

|

||||

# Obsidian

|

||||

|

||||

>[Obsidian](https://obsidian.md/) is a powerful and extensible knowledge base

|

||||

that works on top of your local folder of plain text files.

|

||||

|

||||

## Installation and Setup

|

||||

|

||||

All instructions are in examples below.

|

||||

|

||||

## Document Loader

|

||||

|

||||

|

||||

See a [usage example](../modules/indexes/document_loaders/examples/obsidian.ipynb).

|

||||

|

||||

|

||||

```python

|

||||

from langchain.document_loaders import ObsidianLoader

|

||||

```

|

||||

|

||||

@@ -1,50 +1,40 @@

|

||||

# OpenAI

|

||||

|

||||

>[OpenAI](https://en.wikipedia.org/wiki/OpenAI) is an American artificial intelligence (AI) research laboratory

|

||||

> consisting of the non-profit `OpenAI Incorporated`

|

||||

> and its for-profit subsidiary corporation `OpenAI Limited Partnership`.

|

||||

> `OpenAI` conducts AI research with the declared intention of promoting and developing a friendly AI.

|

||||

> `OpenAI` systems run on an `Azure`-based supercomputing platform from `Microsoft`.

|

||||

|

||||

>The [OpenAI API](https://platform.openai.com/docs/models) is powered by a diverse set of models with different capabilities and price points.

|

||||

>

|

||||

>[ChatGPT](https://chat.openai.com) is the Artificial Intelligence (AI) chatbot developed by `OpenAI`.

|

||||

This page covers how to use the OpenAI ecosystem within LangChain.

|

||||

It is broken into two parts: installation and setup, and then references to specific OpenAI wrappers.

|

||||

|

||||

## Installation and Setup

|

||||

- Install the Python SDK with

|

||||

```bash

|

||||

pip install openai

|

||||

```

|

||||

- Install the Python SDK with `pip install openai`

|

||||

- Get an OpenAI API key and set it as an environment variable (`OPENAI_API_KEY`)

|

||||

- If you want to use OpenAI's tokenizer (only available for Python 3.9+), install it

|

||||

```bash

|

||||

pip install tiktoken

|

||||

```

|

||||

- If you want to use OpenAI's tokenizer (only available for Python 3.9+), install it with `pip install tiktoken`
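
A small sketch of checking the environment variable from the setup steps before any wrapper is created. The `get_api_key` helper is hypothetical and not part of LangChain or the OpenAI SDK; it only makes a missing key fail early with a readable message.

```python
import os


def get_api_key(name="OPENAI_API_KEY"):
    """Return the API key stored in the given environment variable, or raise."""
    key = os.environ.get(name)
    if not key:
        raise RuntimeError(
            f"Set the {name} environment variable before using the wrappers."
        )
    return key
```

Calling `get_api_key()` once at startup surfaces a configuration problem before the first request is made.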

|

||||

|

||||

## Wrappers

|

||||

|

||||

## LLM

|

||||

### LLM

|

||||

|

||||

There exists an OpenAI LLM wrapper, which you can access with

|

||||

```python

|

||||

from langchain.llms import OpenAI

|

||||

```

|

||||

|

||||

If you are using a model hosted on `Azure`, you should use a different wrapper for that:

|

||||

If you are using a model hosted on Azure, you should use a different wrapper for that:

|

||||

```python

|

||||

from langchain.llms import AzureOpenAI

|

||||

```

|

||||

For a more detailed walkthrough of the `Azure` wrapper, see [this notebook](../modules/models/llms/integrations/azure_openai_example.ipynb)

|

||||

For a more detailed walkthrough of the Azure wrapper, see [this notebook](../modules/models/llms/integrations/azure_openai_example.ipynb)

|

||||

|

||||

|

||||

|

||||

## Text Embedding Model

|

||||

### Embeddings

|

||||

|

||||

There exists an OpenAI Embeddings wrapper, which you can access with

|

||||

```python

|

||||

from langchain.embeddings import OpenAIEmbeddings

|

||||

```

|

||||

For a more detailed walkthrough of this, see [this notebook](../modules/models/text_embedding/examples/openai.ipynb)

|

||||

|

||||

|

||||

## Tokenizer

|

||||

### Tokenizer

|

||||

|

||||

There are several places you can use the `tiktoken` tokenizer. By default, it is used to count tokens

|

||||

for OpenAI LLMs.

|

||||

@@ -56,18 +46,10 @@ CharacterTextSplitter.from_tiktoken_encoder(...)

|

||||

```

|

||||

For a more detailed walkthrough of this, see [this notebook](../modules/indexes/text_splitters/examples/tiktoken.ipynb)

|

||||

|

||||

## Chain

|

||||

|

||||

See a [usage example](../modules/chains/examples/moderation.ipynb).

|

||||

### Moderation

|

||||

You can also access the OpenAI content moderation endpoint with

|

||||

|

||||

```python

|

||||

from langchain.chains import OpenAIModerationChain

|

||||

```

|

||||

|

||||

## Document Loader

|

||||

|

||||

See a [usage example](../modules/indexes/document_loaders/examples/chatgpt_loader.ipynb).

|

||||

|

||||

```python

|

||||

from langchain.document_loaders.chatgpt import ChatGPTLoader

|

||||

```

|

||||

For a more detailed walkthrough of this, see [this notebook](../modules/chains/examples/moderation.ipynb)

|

||||

|

||||

@@ -1,21 +1,11 @@

|

||||

# OpenWeatherMap

|

||||

# OpenWeatherMap API

|

||||

|

||||

>[OpenWeatherMap](https://openweathermap.org/api/) provides all essential weather data for a specific location:

|

||||

>- Current weather

|

||||

>- Minute forecast for 1 hour

|

||||

>- Hourly forecast for 48 hours

|

||||

>- Daily forecast for 8 days

|

||||

>- National weather alerts

|

||||

>- Historical weather data for 40+ years back

|

||||

|

||||

This page covers how to use the `OpenWeatherMap API` within LangChain.

|

||||

This page covers how to use the OpenWeatherMap API within LangChain.

|

||||

It is broken into two parts: installation and setup, and then references to specific OpenWeatherMap API wrappers.

|

||||

|

||||

## Installation and Setup

|

||||

|

||||

- Install requirements with

|

||||

```bash

|

||||

pip install pyowm

|

||||

```

|

||||

- Install requirements with `pip install pyowm`

|

||||

- Go to OpenWeatherMap and sign up for an account to get your API key [here](https://openweathermap.org/api/)

|

||||

- Set your API key as `OPENWEATHERMAP_API_KEY` environment variable

|

||||

|

||||

|

||||

@@ -24,10 +24,6 @@ To import this vectorstore:

|

||||

from langchain.vectorstores.pgvector import PGVector

|

||||

```

|

||||

|

||||

PGVector embedding size is not autodetected. If you are using ChatGPT or any other embedding with 1536 dimensions,

|

||||

the default is fine. If you are going to use, for example, HuggingFaceEmbeddings, you need to set the environment variable named `PGVECTOR_VECTOR_SIZE`

|

||||

to the needed value. In the case of HuggingFaceEmbeddings, it would be: `PGVECTOR_VECTOR_SIZE=768`
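
A minimal sketch of the override described here, assuming the variable is parsed as an integer and that 1536 is the fallback. The `resolve_vector_size` helper is hypothetical and only illustrates the lookup; it is not LangChain code.

```python
import os


def resolve_vector_size(default=1536):
    """Return the embedding size from PGVECTOR_VECTOR_SIZE, or the default."""
    return int(os.environ.get("PGVECTOR_VECTOR_SIZE", default))


# Example: a 768-dimensional embedding model. Set the variable before the
# vector store is imported, in case the value is read at import time.
os.environ["PGVECTOR_VECTOR_SIZE"] = "768"
print(resolve_vector_size())
```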

|

||||

|

||||

### Usage

|

||||

|

||||

For a more detailed walkthrough of the PGVector Wrapper, see [this notebook](../modules/indexes/vectorstores/examples/pgvector.ipynb)

|

||||

|

||||

@@ -14,85 +14,41 @@ There exists a Prediction Guard LLM wrapper, which you can access with

|

||||

from langchain.llms import PredictionGuard

|

||||

```

|

||||

|

||||

You can provide the name of the Prediction Guard model as an argument when initializing the LLM:

|

||||

You can provide the name of your Prediction Guard "proxy" as an argument when initializing the LLM:

|

||||

```python

|

||||

pgllm = PredictionGuard(model="MPT-7B-Instruct")

|

||||

pgllm = PredictionGuard(name="your-text-gen-proxy")

|

||||

```

|

||||

|

||||

Alternatively, you can use Prediction Guard's default proxy for SOTA LLMs:

|

||||

```python

|

||||

pgllm = PredictionGuard(name="default-text-gen")

|

||||

```

|

||||

|

||||

You can also provide your access token directly as an argument:

|

||||

```python

|

||||

pgllm = PredictionGuard(model="MPT-7B-Instruct", token="<your access token>")

|

||||

```

|

||||

|

||||

Finally, you can provide an "output" argument that is used to structure/control the output of the LLM:

|

||||

```python

|

||||

pgllm = PredictionGuard(model="MPT-7B-Instruct", output={"type": "boolean"})

|

||||

pgllm = PredictionGuard(name="default-text-gen", token="<your access token>")

|

||||

```

|

||||

|

||||

## Example usage

|

||||

|

||||

Basic usage of the controlled or guarded LLM wrapper:

|

||||

Basic usage of the LLM wrapper:

|

||||

```python

|

||||

import os

|

||||

|

||||

import predictionguard as pg

|

||||

from langchain.llms import PredictionGuard

|

||||

from langchain import PromptTemplate, LLMChain

|

||||

|

||||

# Your Prediction Guard API key. Get one at predictionguard.com

|

||||

os.environ["PREDICTIONGUARD_TOKEN"] = "<your Prediction Guard access token>"

|

||||

|

||||

# Define a prompt template

|

||||

template = """Respond to the following query based on the context.

|

||||

|

||||

Context: EVERY comment, DM + email suggestion has led us to this EXCITING announcement! 🎉 We have officially added TWO new candle subscription box options! 📦

|

||||

Exclusive Candle Box - $80

|

||||

Monthly Candle Box - $45 (NEW!)

|

||||

Scent of The Month Box - $28 (NEW!)

|

||||

Head to stories to get ALLL the deets on each box! 👆 BONUS: Save 50% on your first box with code 50OFF! 🎉

|

||||

|

||||

Query: {query}

|

||||

|

||||

Result: """

|

||||

prompt = PromptTemplate(template=template, input_variables=["query"])

|

||||

|

||||

# With "guarding" or controlling the output of the LLM. See the

|

||||

# Prediction Guard docs (https://docs.predictionguard.com) to learn how to

|

||||

# control the output with integer, float, boolean, JSON, and other types and

|

||||

# structures.

|

||||

pgllm = PredictionGuard(model="MPT-7B-Instruct",

|

||||

output={

|

||||

"type": "categorical",

|

||||

"categories": [

|

||||

"product announcement",

|

||||

"apology",

|

||||

"relational"

|

||||

]

|

||||

})

|

||||

pgllm(prompt.format(query="What kind of post is this?"))

|

||||

pgllm = PredictionGuard(name="default-text-gen")

|

||||

pgllm("Tell me a joke")

|

||||

```

|

||||

|

||||

Basic LLM Chaining with the Prediction Guard wrapper:

|

||||

```python

|

||||

import os

|

||||

|

||||

from langchain import PromptTemplate, LLMChain

|

||||

from langchain.llms import PredictionGuard

|

||||

|

||||

# Optional: add your OpenAI API key. It is not required, as Prediction Guard allows

|

||||

# you to access all the latest open access models (see https://docs.predictionguard.com)

|

||||

os.environ["OPENAI_API_KEY"] = "<your OpenAI api key>"

|

||||

|

||||

# Your Prediction Guard API key. Get one at predictionguard.com

|

||||

os.environ["PREDICTIONGUARD_TOKEN"] = "<your Prediction Guard access token>"