## Description

- Add [Friendli](https://friendli.ai/) integration for the `Friendli` LLM and the `ChatFriendli` chat model.
- Unit tests and integration tests corresponding to this change are added.
- Documentation corresponding to this change is added.

## Dependencies

- Optional dependency: the [`friendli-client`](https://pypi.org/project/friendli-client/) package is added only for those who use the `Friendli` or `ChatFriendli` model.

## Twitter handle

- https://twitter.com/friendliai

This pull request introduces initial support for the TiDB vector store. The current version is basic, laying the foundation for the vector store integration. While this implementation provides the essential features, we plan to expand and improve the TiDB vector store support with additional enhancements in future updates.

Upcoming Enhancements:

* Support for Vector Index Creation: to enhance the efficiency and performance of the vector store.
* Support for max marginal relevance search.
* Customized Table Structure Support: recognizing the need for flexibility, we plan for more tailored and efficient data store solutions.

Simple use case example:

```python
from typing import List, Tuple

from langchain.docstore.document import Document
from langchain_community.vectorstores import TiDBVectorStore
from langchain_openai import OpenAIEmbeddings

# Assumes OPENAI_API_KEY is set in the environment.
embeddings = OpenAIEmbeddings()
# Replace with the connection string of your own TiDB cluster.
tidb_connection_url = "<TIDB_CONNECTION_URL>"

db = TiDBVectorStore.from_texts(
    embedding=embeddings,
    texts=[
        "Andrew likes eating oranges",
        "Alexandra is from England",
        "Ketanji Brown Jackson is a judge",
    ],
    table_name="tidb_vector_langchain",
    connection_string=tidb_connection_url,
    distance_strategy="cosine",
)

query = "Can you tell me about Alexandra?"
docs_with_score: List[Tuple[Document, float]] = db.similarity_search_with_score(query)

for doc, score in docs_with_score:
    print("-" * 80)
    print("Score: ", score)
    print(doc.page_content)
    print("-" * 80)
```
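With `distance_strategy="cosine"`, results are ordered by cosine distance. As a rough, self-contained illustration of that ordering (not TiDB's implementation), the sketch below ranks three texts by cosine distance to a query. The three-dimensional vectors are made up in place of real OpenAI embeddings, and we assume a lower score means a closer match.

```python
import math
from typing import List, Sequence, Tuple


def cosine_distance(a: Sequence[float], b: Sequence[float]) -> float:
    """Return 1 - cosine similarity; 0.0 means the vectors point the same way."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return 1.0 - dot / (norm_a * norm_b)


def rank_by_cosine(
    query_vec: Sequence[float],
    corpus: List[Tuple[str, Sequence[float]]],
    k: int = 2,
) -> List[Tuple[str, float]]:
    """Return the k texts closest to the query, smallest distance first."""
    scored = [(text, cosine_distance(query_vec, vec)) for text, vec in corpus]
    scored.sort(key=lambda pair: pair[1])
    return scored[:k]


# Made-up vectors: one axis per "topic" (food, England, law).
corpus = [
    ("Andrew likes eating oranges", [1.0, 0.0, 0.0]),
    ("Alexandra is from England", [0.0, 1.0, 0.0]),
    ("Ketanji Brown Jackson is a judge", [0.0, 0.0, 1.0]),
]
query_vec = [0.1, 0.9, 0.0]  # pretend embedding of "Can you tell me about Alexandra?"

for text, distance in rank_by_cosine(query_vec, corpus):
    print(f"{distance:.3f}  {text}")
```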

**Description:**

This integrates Infinispan as a vector store.

Infinispan is an open-source key-value data grid; it can run as a single node or distributed.

Vector search is supported since release 15.x.

For more: [Infinispan Home](https://infinispan.org)

Integration tests are provided, as well as a demo notebook.

Follow up on https://github.com/langchain-ai/langchain/pull/17467.

- Update all references to the Elasticsearch classes to use the partners package.
- Deprecate community classes.

---------

Co-authored-by: Bagatur <baskaryan@gmail.com>

Description:

This pull request addresses two key improvements to the langchain repository:

**Fix for Crash in Flight Search Interface**:

Previously, the code would crash when encountering a failure scenario in the flight ticket search interface. This PR handles such scenarios gracefully, so the code no longer crashes when the flight search interface fails.

**Documentation Update for Amadeus Toolkit**:

Prior to this update, the examples in the documentation for the Amadeus Toolkit could not run correctly due to outdated information. This PR updates the documentation so that all examples can now be executed successfully, giving users accurate, working examples of the Amadeus Toolkit.

These changes aim to enhance the reliability and usability of the langchain repository by improving error handling and keeping the documentation up to date and actionable.

Issue: https://github.com/langchain-ai/langchain/issues/17375

Twitter Handle: SingletonYxx

**Description:**

Modified `user_name` to `username` to conform with the expected inputs to `TelegramChatApiLoader`.

**Issue:**

The current code fails in langchain-community 0.0.24:

```python
from langchain_community.document_loaders import TelegramChatApiLoader

loader = TelegramChatApiLoader(
    chat_entity="<CHAT_URL>",  # recommended to use Entity here
    api_hash="<API HASH >",
    api_id="<API_ID>",
    user_name="",  # needed only for caching the session.
)
```

## **Description**

Migrate the `MongoDBChatMessageHistory` to the managed `langchain-mongodb` partner package

## **Dependencies**

None

## **Twitter handle**

@mongodb

## **tests and docs**

- [x] Migrate existing integration test
- [x] ~Convert existing integration test to a unit test~ Creation is out of scope for this ticket
- [x] ~Considering delaying work until #17470 merges to leverage the `MockCollection` object.~
- [x] **Lint and test**: Run `make format`, `make lint` and `make test` from the root of the package(s) you've modified. See contribution guidelines for more: https://python.langchain.com/docs/contributing/

---------

Co-authored-by: Erick Friis <erick@langchain.dev>

# Description

- **Description:** Adding MongoDB LLM Caching Layer abstraction

- **Issue:** N/A

- **Dependencies:** None

- **Twitter handle:** @mongodb

Checklist:

- [x] PR title: Please title your PR "package: description", where "package" is whichever of langchain, community, core, experimental, etc. is being modified. Use "docs: ..." for purely docs changes, "templates: ..." for template changes, "infra: ..." for CI changes.
  - Example: "community: add foobar LLM"
- [x] PR Message (above)
- [x] Pass lint and test: Run `make format`, `make lint` and `make test` from the root of the package(s) you've modified to check that you're passing lint and testing. See contribution guidelines for more information on how to write/run tests, lint, etc: https://python.langchain.com/docs/contributing/
- [ ] Add tests and docs: If you're adding a new integration, please include
  1. a test for the integration, preferably unit tests that do not rely on network access,
  2. an example notebook showing its use. It lives in `docs/docs/integrations` directory.

Additional guidelines:

- Make sure optional dependencies are imported within a function.
- Please do not add dependencies to pyproject.toml files (even optional ones) unless they are required for unit tests.
- Most PRs should not touch more than one package.
- Changes should be backwards compatible.
- If you are adding something to community, do not re-import it in langchain.
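The first guideline (import optional dependencies inside a function) can be sketched as follows. This is a minimal illustration using only the standard library; `import_optional`, `FooIntegration`, `foo_sdk` and `foo-sdk` are hypothetical names, not LangChain APIs.

```python
import importlib
from types import ModuleType


def import_optional(module_name: str, pip_name: str) -> ModuleType:
    """Import an optional dependency at call time, with an actionable error."""
    try:
        return importlib.import_module(module_name)
    except ImportError as exc:
        raise ImportError(
            f"Could not import {module_name} python package. "
            f"Please install it with `pip install {pip_name}`."
        ) from exc


class FooIntegration:
    """Hypothetical integration whose SDK is only needed when it is used."""

    def __init__(self) -> None:
        # Importing here (not at module top level) keeps importing the
        # surrounding package working for users who never touch this class.
        self._sdk = import_optional("foo_sdk", "foo-sdk")  # hypothetical package
```

Because the import happens in `__init__`, a missing SDK only surfaces as an `ImportError` with install instructions when someone actually instantiates the integration.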

If no one reviews your PR within a few days, please @-mention one of @baskaryan, @efriis, @eyurtsev, @hwchase17.

---------

Co-authored-by: Jib <jib@byblack.us>

- **Description:** This PR fixes some issues in the Jupyter notebook for the vector store "SAP HANA Cloud Vector Engine":
  * Slight textual adaptations
  * Fix of wrong column name VEC_META (was: VEC_METADATA)
- **Issue:** N/A
- **Dependencies:** no new dependencies added
- **Twitter handle:** @sapopensource

Path to notebook: `docs/docs/integrations/vectorstores/hanavector.ipynb`

- **Description:** finishes adding the You.com functionality, including:
  - add async functions to utility and retriever
  - add the You.com Tool
  - add async testing for utility, retriever, and tool
  - add a tool integration notebook page
- **Dependencies:** any dependencies required for this change
- **Twitter handle:** @scottnath

Description:

This pull request introduces several enhancements for Azure Cosmos Vector DB, primarily focused on improving caching and search capabilities using Azure Cosmos MongoDB vCore Vector DB. Here's a summary of the changes:

- **AzureCosmosDBSemanticCache**: Added a new cache implementation called AzureCosmosDBSemanticCache, which utilizes Azure Cosmos MongoDB vCore Vector DB for efficient caching of semantic data. Added comprehensive test cases for AzureCosmosDBSemanticCache to ensure its correctness and robustness. These tests cover various scenarios and edge cases to validate the cache's behavior.
- **HNSW Vector Search**: Added HNSW vector search functionality in the CosmosDB Vector Search module. This enhancement enables more efficient and accurate vector searches by utilizing the HNSW (Hierarchical Navigable Small World) algorithm. Added corresponding test cases to validate the HNSW vector search functionality in both AzureCosmosDBSemanticCache and AzureCosmosDBVectorSearch. These tests ensure the correctness and performance of the HNSW search algorithm.
- **LLM Caching Notebook**: The notebook now includes a comprehensive example showcasing the usage of the AzureCosmosDBSemanticCache. This example highlights how the cache can be employed to efficiently store and retrieve semantic data. Additionally, the example provides default values for all parameters used within the AzureCosmosDBSemanticCache, ensuring clarity and ease of understanding for users who are new to the cache implementation.
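The idea behind a semantic cache, as opposed to an exact-match cache, is that a *similar* prompt can reuse a stored response. The following is a minimal in-memory sketch of that idea, not the AzureCosmosDBSemanticCache implementation: `ToySemanticCache`, the bag-of-words `toy_embed` function and the 0.8 similarity threshold are hypothetical stand-ins for a real embedding model and a vector search in Cosmos DB.

```python
import math
from collections import Counter
from typing import Callable, List, Optional, Tuple

Vector = List[float]


def cosine_similarity(a: Vector, b: Vector) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    # Treat an all-zero vector as dissimilar to everything.
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class ToySemanticCache:
    """In-memory stand-in: returns a cached response for *similar* prompts."""

    def __init__(self, embed: Callable[[str], Vector], threshold: float = 0.9) -> None:
        self._embed = embed
        self._threshold = threshold
        self._entries: List[Tuple[Vector, str]] = []

    def update(self, prompt: str, response: str) -> None:
        self._entries.append((self._embed(prompt), response))

    def lookup(self, prompt: str) -> Optional[str]:
        query = self._embed(prompt)
        best_score, best_response = 0.0, None
        for vector, response in self._entries:
            score = cosine_similarity(query, vector)
            if score > best_score:
                best_score, best_response = score, response
        # Only a sufficiently similar prompt counts as a cache hit.
        return best_response if best_score >= self._threshold else None


VOCAB = ["capital", "france", "paris", "weather", "today"]


def toy_embed(text: str) -> Vector:
    """Hypothetical bag-of-words embedding over a tiny fixed vocabulary."""
    counts = Counter(text.lower().replace("?", "").split())
    return [float(counts[word]) for word in VOCAB]


cache = ToySemanticCache(toy_embed, threshold=0.8)
cache.update("What is the capital of France?", "Paris")
print(cache.lookup("capital of France"))  # Paris
print(cache.lookup("weather today"))  # None
```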

@hwchase17, @baskaryan, @eyurtsev

* **Description:** adds `LlamafileEmbeddings` class implementation for generating embeddings using [llamafile](https://github.com/Mozilla-Ocho/llamafile)-based models. Includes related unit tests and a notebook showing example usage.
* **Issue:** N/A
* **Dependencies:** N/A

**Description:**

(a) Update to the module import path to reflect the splitting up of langchain into separate packages

(b) Update to the documentation to include the new calling method (invoke)

In this commit we update the documentation for Google El Carro for Oracle Workloads. We amend the documentation in the Google Providers page to use the correct name which is El Carro for Oracle Workloads. We also add changes to the document_loaders and memory pages to reflect changes we made in our repo.

- **Description**: [`bigdl-llm`](https://github.com/intel-analytics/BigDL) is a library for running LLMs on Intel XPUs (from laptop to GPU to cloud) using INT4/FP4/INT8/FP8 with very low latency (for any PyTorch model). This PR adds bigdl-llm integrations to langchain.
- **Issue**: NA
- **Dependencies**: `bigdl-llm` library
- **Contribution maintainer**: @shane-huang

Examples added:

- docs/docs/integrations/llms/bigdl.ipynb

The Nvidia provider page is missing a reference to the Triton Inference Server package.

Changes:

- added the Triton Inference Server reference
- copied the example notebook from the package into the doc files
- added the Triton Inference Server description and links, including the link to the above example notebook
- formatted the page consistently

NOTE:

It seems that the [example notebook](https://github.com/langchain-ai/langchain/blob/master/libs/partners/nvidia-trt/docs/llms.ipynb) was originally created in the wrong place. It should be in the LangChain docs [here](https://github.com/langchain-ai/langchain/tree/master/docs/docs/integrations/llms). So, I've created a copy of this example. The original example is still in the nvidia-trt package.

This PR migrates the existing MongoDBAtlasVectorSearch abstraction from the `langchain_community` section to the partners package section of the codebase.

- [x] Run the partner package script as advised in the partner-packages documentation.
- [x] Add Unit Tests
- [x] Migrate Integration Tests
- [x] Refactor `MongoDBAtlasVectorStore` (autogenerated) to `MongoDBAtlasVectorSearch`
- [x] ~Remove~ deprecate the old `langchain_community` VectorStore references.

## Additional Callouts

- Implemented the `delete` method
- Included any missing async function implementations
  - `amax_marginal_relevance_search_by_vector`
  - `adelete`
- Added new Unit Tests that test the functionality of `MongoDBVectorSearch` methods
- Removed [`del res[self._embedding_key]`](e0c81e1cb0/libs/community/langchain_community/vectorstores/mongodb_atlas.py (L218)) in the `_similarity_search_with_score` function, as it would otherwise make the `maximal_marginal_relevance` function fail. The `Document` needs to store the embedding key in metadata to work.
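Why the embedding has to survive in the result: maximal marginal relevance (MMR) scores each candidate not only against the query but also against the documents already picked, and that redundancy term needs the candidates' own vectors. Below is a minimal, standalone MMR sketch in plain Python using cosine similarity; `cosine` and `mmr_select` are our own illustrative names, not LangChain's `maximal_marginal_relevance` helper.

```python
import math
from typing import List, Sequence


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity between two non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


def mmr_select(
    query: Sequence[float],
    candidates: List[Sequence[float]],
    k: int = 4,
    lambda_mult: float = 0.5,
) -> List[int]:
    """Greedily pick k candidate indices, trading relevance against redundancy.

    lambda_mult=1.0 ranks purely by relevance; smaller values favour diversity.
    """
    selected: List[int] = []
    remaining = list(range(len(candidates)))
    while remaining and len(selected) < k:
        best_idx, best_score = remaining[0], -math.inf
        for i in remaining:
            relevance = cosine(query, candidates[i])
            # Redundancy needs the stored embeddings of already-selected docs.
            redundancy = max(
                (cosine(candidates[i], candidates[j]) for j in selected), default=0.0
            )
            score = lambda_mult * relevance - (1 - lambda_mult) * redundancy
            if score > best_score:
                best_idx, best_score = i, score
        selected.append(best_idx)
        remaining.remove(best_idx)
    return selected
```

With two near-duplicate candidates and one different one, pure relevance (`lambda_mult=1.0`) returns both duplicates, while a lower `lambda_mult` swaps the second duplicate for the more diverse document.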

Checklist:

- [x] PR title: Please title your PR "package: description", where "package" is whichever of langchain, community, core, experimental, etc. is being modified. Use "docs: ..." for purely docs changes, "templates: ..." for template changes, "infra: ..." for CI changes.
  - Example: "community: add foobar LLM"
- [x] PR message
- [x] Pass lint and test: Run `make format`, `make lint` and `make test` from the root of the package(s) you've modified to check that you're passing lint and testing. See contribution guidelines for more information on how to write/run tests, lint, etc: https://python.langchain.com/docs/contributing/
- [x] Add tests and docs: If you're adding a new integration, please include
  1. Existing tests supplied in docs/docs do not change. Updated docstrings for new functions like `delete`
  2. an example notebook showing its use. It lives in `docs/docs/integrations` directory. (This already exists)

If no one reviews your PR within a few days, please @-mention one of baskaryan, efriis, eyurtsev, hwchase17.

---------

Co-authored-by: Steven Silvester <steven.silvester@ieee.org>

Co-authored-by: Erick Friis <erick@langchain.dev>

**Description:**

In this PR, I am adding a `PolygonFinancials` tool, which can be used to get financials data for a given ticker. The financials data is the fundamental data found in the income statements, balance sheets, and cash flow statements of public US companies.

**Twitter**: [@virattt](https://twitter.com/virattt)

Several URLs were broken (in yesterday's PR), for example on the [Integrations/platforms/google/Document Loaders](https://python.langchain.com/docs/integrations/platforms/google#document-loaders) page: the example link to "Document Loaders / Cloud SQL for PostgreSQL" and most of the new example links in the Document Loaders, Vectorstores, and Memory sections.

- fixed URLs (manually verified all example links)
- sorted sections in the page to follow the "integrations/components" menu item order
- fixed several page titles to fix the Navbar item order

---------

Co-authored-by: Erick Friis <erick@langchain.dev>

**Description:** Update to the list of partner packages in the list of providers

**Issue:** Google & Nvidia had two entries each, both pointing to the same page

**Dependencies:** None

- **Description:** A generic document loader adapter for SQLAlchemy on top of LangChain's `SQLDatabaseLoader`.
- **Needed by:** https://github.com/crate-workbench/langchain/pull/1
- **Depends on:** GH-16655
- **Addressed to:** @baskaryan, @cbornet, @eyurtsev

Hi from CrateDB again,

in the same spirit as GH-16243 and GH-16244, this patch breaks out another commit from https://github.com/crate-workbench/langchain/pull/1, in order to reduce the size of this patch before submitting it, and to separate concerns.

To accompany the SQLAlchemy adapter implementation, the patch includes integration tests for both SQLite and PostgreSQL. Let me know if corresponding utility resources should be added at different spots.

With kind regards,
Andreas.

### Software Tests

```console
docker compose --file libs/community/tests/integration_tests/document_loaders/docker-compose/postgresql.yml up
```

```console
cd libs/community
pip install psycopg2-binary
pytest -vvv tests/integration_tests -k sqldatabase
```

```
14 passed
```

---------

Co-authored-by: Andreas Motl <andreas.motl@crate.io>

**Description**: This PR adds support for using the [LLMLingua project](https://github.com/microsoft/LLMLingua), especially LongLLMLingua (Enhancing Large Language Model Inference via Prompt Compression), as a document compressor / transformer.

The LLMLingua project is an interesting project that can greatly improve RAG systems by compressing prompts and contexts while keeping their semantic relevance.

**Issue**: https://github.com/microsoft/LLMLingua/issues/31

**Dependencies**: [llmlingua](https://pypi.org/project/llmlingua/)

@baskaryan

---------

Co-authored-by: Ayodeji Ayibiowu <ayodeji.ayibiowu@getinge.com>

Co-authored-by: Harrison Chase <hw.chase.17@gmail.com>

### Description

This PR moves the Elasticsearch classes to a partners package.

Note that we will not move (and will later remove) `ElasticKnnSearch`. It was previously deprecated. `ElasticVectorSearch` is going to stay in the community package since it is still used quite a lot.

Also note that I left the `ElasticsearchTranslator` for self query untouched because it resides in the main `langchain` package.

### Dependencies

There will be another PR that updates the notebooks (potentially pulling them into the partners package) and templates and removes the classes from the community package, see https://github.com/langchain-ai/langchain/pull/17468

#### Open question

How to make the transition smooth for users? Do we move the import aliases and require people to install `langchain-elasticsearch`? Or do we remove the import aliases from the `langchain` package altogether? What has worked well for other partner packages?

---------

Co-authored-by: Erick Friis <erick@langchain.dev>

**Description:** Update the example fiddler notebook to use the community path instead of langchain.callback

**Dependencies:** None

**Twitter handle:** @bhalder

Co-authored-by: Barun Halder <barun@fiddler.ai>

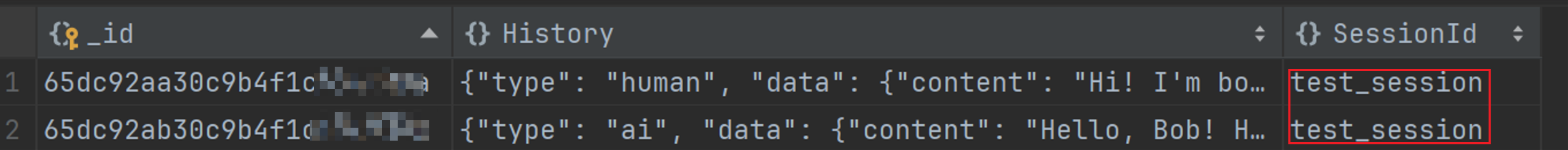

I tried to configure MongoDBChatMessageHistory using the code from the original documentation to store messages in MongoDB based on the passed session_id. However, this configuration did not take effect, and the session id in the database remained 'test_session'. To resolve this issue, I found that when configuring MongoDBChatMessageHistory, it is necessary to set `session_id=session_id` instead of `session_id="test_session"`.

Issue: DOC: Ineffective Configuration of MongoDBChatMessageHistory for Custom session_id Storage

previous code:

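```python
from langchain_community.chat_message_histories import MongoDBChatMessageHistory
from langchain_core.runnables.history import RunnableWithMessageHistory

# `chain` is assumed to be defined earlier in the notebook (e.g. prompt | model).
chain_with_history = RunnableWithMessageHistory(
    chain,
    lambda session_id: MongoDBChatMessageHistory(
        session_id="test_session",  # ignores the session_id passed in
        connection_string="mongodb://<USER>:<PASSWORD>@<HOST>:27017",
        database_name="my_db",
        collection_name="chat_histories",
    ),
    input_messages_key="question",
    history_messages_key="history",
)

config = {"configurable": {"session_id": "mmm"}}
chain_with_history.invoke({"question": "Hi! I'm bob"}, config)
```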

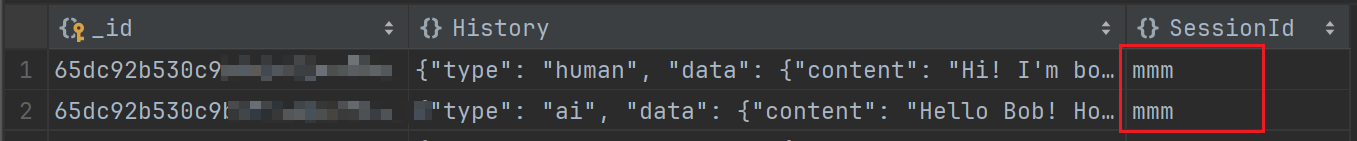

Modified code:

```python
from langchain_community.chat_message_histories import MongoDBChatMessageHistory
from langchain_core.runnables.history import RunnableWithMessageHistory

# `chain` is assumed to be defined earlier in the notebook (e.g. prompt | model).
chain_with_history = RunnableWithMessageHistory(
    chain,
    lambda session_id: MongoDBChatMessageHistory(
        session_id=session_id,  # here is my modified code
        connection_string="mongodb://<USER>:<PASSWORD>@<HOST>:27017",
        database_name="my_db",
        collection_name="chat_histories",
    ),
    input_messages_key="question",
    history_messages_key="history",
)

config = {"configurable": {"session_id": "mmm"}}
chain_with_history.invoke({"question": "Hi! I'm bob"}, config)
```

Effect after modification (it works):

**Description:** Update the Azure Search notebook to have more descriptive comments, and an option to choose between OpenAI and AzureOpenAI Embeddings

---------

Co-authored-by: Matt Gotteiner <[email protected]>

Co-authored-by: Bagatur <baskaryan@gmail.com>

**Description:** Callback handler to integrate Fiddler with langchain. This PR adds the following:

1. `FiddlerCallbackHandler` implementation into langchain/community
2. Example notebook `fiddler.ipynb` for usage documentation

[Internal Tracker : FDL-14305]

**Issue:** NA

**Dependencies:**

- Installation of langchain-community is unaffected.
- Usage of FiddlerCallbackHandler requires installation of the latest fiddler-client (2.5+)

**Twitter handle:** @fiddlerlabs @behalder

Co-authored-by: Barun Halder <barun@fiddler.ai>

**Description:** Initial pull request for Kinetica LLM wrapper

**Issue:** N/A

**Dependencies:** No new dependencies for unit tests. Integration tests require gpudb, typeguard, and faker

**Twitter handle:** @chad_juliano

Note: There is another pull request for the Kinetica vectorstore. Ultimately we would like to make a partner package, but we are starting with a community contribution.

- **Description:** Update the Azure Search vector store notebook for the latest version of the SDK

---------

Co-authored-by: Matt Gotteiner <[email protected]>

**Description:** Clean up Google product names and fix document loader section

**Issue:** NA

**Dependencies:** None

---------

Co-authored-by: Bagatur <baskaryan@gmail.com>

- **Description:** Update IBM watsonx.ai docs and add IBM provider docs
- **Dependencies:** [ibm-watsonx-ai](https://pypi.org/project/ibm-watsonx-ai/)
- **Tag maintainer:** :

Please make sure your PR is passing linting and testing before submitting. Run `make format`, `make lint` and `make test` to check this locally. ✅

**Description:** This PR changes the module import path for SQLDatabase in the documentation

**Issue:** Updates the documentation to reflect the move of integrations to langchain-community

- **Description:** The URL in the tigris tutorial was htttps instead of https, leading to a bad link.

- **Issue:** N/A

- **Dependencies:** N/A

- **Twitter handle:** Speucey

In this pull request, we introduce the `add_images` method to the SingleStoreDB vector store class, expanding its capabilities to handle multi-modal embeddings. This method facilitates the incorporation of image data into the vector store by associating each image's URI with corresponding document content, metadata, and either pre-generated embeddings or embeddings computed using the `embed_image` method of the provided embedding object.
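The "pre-generated or computed" branching can be sketched with an in-memory store. This is not the SingleStoreDB implementation. `ToyImageStore`, `Row` and `LengthEmbedder` are hypothetical, and storing the URI itself as the row's content is our assumption. The only borrowed convention is an embedding object exposing `embed_image(uris)`.

```python
from dataclasses import dataclass, field
from typing import Any, Dict, List, Optional, Protocol


class ImageEmbedder(Protocol):
    def embed_image(self, uris: List[str]) -> List[List[float]]: ...


@dataclass
class Row:
    content: str
    metadata: Dict[str, Any]
    vector: List[float]


@dataclass
class ToyImageStore:
    """Hypothetical in-memory store illustrating the add_images flow."""

    embedding: ImageEmbedder
    rows: List[Row] = field(default_factory=list)

    def add_images(
        self,
        uris: List[str],
        metadatas: Optional[List[Dict[str, Any]]] = None,
        embeddings: Optional[List[List[float]]] = None,
    ) -> None:
        metadatas = metadatas or [{} for _ in uris]
        if embeddings is None:
            # No precomputed vectors supplied: ask the embedding object.
            embeddings = self.embedding.embed_image(uris)
        for uri, metadata, vector in zip(uris, metadatas, embeddings):
            # Assumption: the URI itself is stored as the row's content.
            self.rows.append(Row(content=uri, metadata=metadata, vector=vector))


class LengthEmbedder:
    """Hypothetical embedder: one-dimensional vector = length of the URI."""

    def __init__(self) -> None:
        self.calls = 0

    def embed_image(self, uris: List[str]) -> List[List[float]]:
        self.calls += 1
        return [[float(len(uri))] for uri in uris]
```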

The change includes integration tests validating the behavior of `add_images`. Additionally, we provide a notebook showcasing the usage of this new method.

---------

Co-authored-by: Volodymyr Tkachuk <vtkachuk-ua@singlestore.com>

Description:

In this PR, I am adding a PolygonTickerNews tool, which can be used to get the latest news for a given ticker / stock.

Twitter handle: [@virattt](https://twitter.com/virattt)

**Description**: CogniSwitch focuses on making GenAI usage more reliable. It abstracts out the complexity & decision making required for tuning processing, storage & retrieval. Using simple APIs, documents / URLs can be processed into a Knowledge Graph that can then be used to answer questions.

**Dependencies**: No dependencies. Just network calls & API key required

**Tag maintainer**: @hwchase17

**Twitter handle**: https://github.com/CogniSwitch

**Documentation**: Please check `docs/docs/integrations/toolkits/cogniswitch.ipynb`

**Tests**: The usual tool & toolkits tests using `test_imports.py`

PR has passed linting and testing before this submission.

---------

Co-authored-by: Saicharan Sridhara <145636106+saiCogniswitch@users.noreply.github.com>

Hi, I'm from the LanceDB team.

Improves the LanceDB integration by making it easier to use: you are no longer required to create tables manually and pass them in the constructor, although that is still supported for backward compatibility.

Bug fix: pandas was being used even though it's not a dependency of LanceDB or langchain.

PS: this issue was raised a few months ago but lost traction. It is a feature improvement for our users; kindly review it. Thanks!

This PR replaces the imports of the Astra DB vector store with the newly-released partner package, in compliance with the deprecation notice now attached to the community "legacy" store.

**Description:** This PR introduces a new "Astra DB" Partner Package.

So far only the vector store class is _duplicated_ there; all others will follow once this is validated and established.

Along with the move to a separate package, incidentally, the class name will change `AstraDB` => `AstraDBVectorStore`.

The strategy has been to duplicate the module (with prospected removal from community at LangChain 0.2). Until then, the code will be kept in sync with minimal, known differences (there is a makefile target to automate drift control. Out of convenience with this check, the community package has a class `AstraDBVectorStore` aliased to `AstraDB` at the end of the module).
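A plain module-level alias keeps old imports working while the class is renamed. The sketch below shows that pattern in isolation, plus an alternative subclass that emits a `DeprecationWarning` when the old name is used. The class bodies are placeholders, and `DeprecatedAstraDB` is a hypothetical name. The real packages attach their own deprecation notice, which is not shown here.

```python
import warnings


class AstraDBVectorStore:
    """Placeholder for the new class name (real body omitted)."""

    def __init__(self, collection_name: str) -> None:
        self.collection_name = collection_name


# Plain alias: existing `AstraDB` imports keep resolving to the same class.
AstraDB = AstraDBVectorStore


class DeprecatedAstraDB(AstraDBVectorStore):
    """Alternative: a subclass that warns whenever the old name is used."""

    def __init__(self, *args, **kwargs) -> None:
        warnings.warn(
            "AstraDB is deprecated; use AstraDBVectorStore instead.",
            DeprecationWarning,
            stacklevel=2,
        )
        super().__init__(*args, **kwargs)
```

The alias costs nothing and makes `isinstance` checks behave identically for both names. The warning subclass nudges users to migrate, at the price of being a distinct class object.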

With this PR, several bugfixes and improvements come to the vector store, as well as a reshuffling of the doc pages/notebooks (Astra and Cassandra) to align with the move to a separate package.

**Dependencies:** A brand new pyproject.toml in the new package, no changes otherwise.

**Twitter handle:** `@rsprrs`

---------

Co-authored-by: Christophe Bornet <cbornet@hotmail.com>

Co-authored-by: Erick Friis <erick@langchain.dev>

This PR adds support for NVIDIA NeMo embeddings (issue #16095).

---------

Co-authored-by: Praveen Nakshatrala <pnakshatrala@gmail.com>

Co-authored-by: Harrison Chase <hw.chase.17@gmail.com>

Co-authored-by: Bagatur <baskaryan@gmail.com>

1. integrate with [`Yuan2.0`](https://github.com/IEIT-Yuan/Yuan-2.0/blob/main/README-EN.md)
2. update `langchain.llms`
3. add a new doc for [Yuan2.0 integration](docs/docs/integrations/llms/yuan2.ipynb)

---------

Co-authored-by: Harrison Chase <hw.chase.17@gmail.com>

Co-authored-by: Bagatur <baskaryan@gmail.com>

This pull request introduces support for various Approximate Nearest Neighbor (ANN) vector index algorithms in the VectorStore class, starting from version 8.5 of SingleStore DB. Leveraging this enhancement enables users to harness the power of vector indexing, significantly boosting search speed, particularly when handling large sets of vectors.

---------

Co-authored-by: Volodymyr Tkachuk <vtkachuk-ua@singlestore.com>

Co-authored-by: Bagatur <baskaryan@gmail.com>

Thank you for contributing to LangChain!

Checklist:

- **PR title**: docs: add & update docs for Oracle Cloud Infrastructure (OCI) integrations
- **Description**: adding and updating documentation for two integrations, OCI Generative AI & OCI Data Science:
  1. adding integration page for OCI Generative AI embeddings (@baskaryan request, docs/docs/integrations/text_embedding/oci_generative_ai.ipynb)
  2. updating integration page for OCI Generative AI llms (docs/docs/integrations/llms/oci_generative_ai.ipynb)
  3. adding platform documentation for OCI (@baskaryan request, docs/docs/integrations/platforms/oci.mdx). This combines the integrations of OCI Generative AI & OCI Data Science
  4. if possible, requesting to be added to 'Featured Community Providers', so supplying a modified docs/docs/integrations/platforms/index.mdx to reflect the addition
- **Issue:** none
- **Dependencies:** no new dependencies
- **Twitter handle:**

---------

Co-authored-by: MING KANG <ming.kang@oracle.com>

1. integrate chat models with [`Yuan2.0`](https://github.com/IEIT-Yuan/Yuan-2.0/blob/main/README-EN.md)
2. add a new doc for [Yuan2.0 integration](docs/docs/integrations/llms/yuan2.ipynb)

Yuan2.0 is a new-generation fundamental large language model developed by IEIT System. We have published all three models: Yuan 2.0-102B, Yuan 2.0-51B, and Yuan 2.0-2B.

---------

Co-authored-by: Bagatur <baskaryan@gmail.com>

## Description

I am submitting this for a school project as part of a team of 5. Other team members are @LeilaChr, @maazh10, @Megabear137, @jelalalamy. This PR also has contributions from community members @Harrolee and @Mario928.

Initial context is in the issue we opened (#11229).

This pull request adds:

- Generic framework for expanding the languages that `LanguageParser` can handle, using the [tree-sitter](https://github.com/tree-sitter/py-tree-sitter#py-tree-sitter) parsing library and existing language-specific parsers written for it
- Support for the following additional languages in `LanguageParser`:
  - C
  - C++
  - C#
  - Go
  - Java (contributed by @Mario928 https://github.com/ThatsJustCheesy/langchain/pull/2)
  - Kotlin
  - Lua
  - Perl
  - Ruby
  - Rust
  - Scala
  - TypeScript (contributed by @Harrolee https://github.com/ThatsJustCheesy/langchain/pull/1)

Here is the [design document](https://docs.google.com/document/d/17dB14cKCWAaiTeSeBtxHpoVPGKrsPye8W0o_WClz2kk) if curious, but no need to read it.

## Issues

- Closes #11229
- Closes #10996
- Closes #8405

## Dependencies

`tree_sitter` and `tree_sitter_languages` on PyPI. We have tried to add these as optional dependencies.

## Documentation

We have updated the list of supported languages, and also added a section to `source_code.ipynb` detailing how to add support for additional languages using our framework.

## Maintainer

- @hwchase17 (previously reviewed https://github.com/langchain-ai/langchain/pull/6486)

Thanks!!

## Git commits

We will gladly squash any/all of our commits (esp. merge commits) if necessary. Let us know if this is desirable, or if you will be squash-merging anyway.

<!-- Thank you for contributing to LangChain!

Replace this entire comment with:

- **Description:** a description of the change,

- **Issue:** the issue # it fixes (if applicable),

- **Dependencies:** any dependencies required for this change,

- **Tag maintainer:** for a quicker response, tag the relevant

maintainer (see below),

- **Twitter handle:** we announce bigger features on Twitter. If your PR

gets announced, and you'd like a mention, we'll gladly shout you out!

Please make sure your PR is passing linting and testing before

submitting. Run `make format`, `make lint` and `make test` to check this

locally.

See contribution guidelines for more information on how to write/run

tests, lint, etc:

https://github.com/langchain-ai/langchain/blob/master/.github/CONTRIBUTING.md

If you're adding a new integration, please include:

1. a test for the integration, preferably unit tests that do not rely on

network access,

2. an example notebook showing its use. It lives in `docs/extras`

directory.

If no one reviews your PR within a few days, please @-mention one of

@baskaryan, @eyurtsev, @hwchase17.

-->

---------

Co-authored-by: Maaz Hashmi <mhashmi373@gmail.com>

Co-authored-by: LeilaChr <87657694+LeilaChr@users.noreply.github.com>

Co-authored-by: Jeremy La <jeremylai511@gmail.com>

Co-authored-by: Megabear137 <zubair.alnoor27@gmail.com>

Co-authored-by: Lee Harrold <lhharrold@sep.com>

Co-authored-by: Mario928 <88029051+Mario928@users.noreply.github.com>

Co-authored-by: Bagatur <baskaryan@gmail.com>

Co-authored-by: Harrison Chase <hw.chase.17@gmail.com>

- **Description:** The Pebblo open-source project enables developers to safely load data into their Gen AI apps. It identifies semantic topics and entities found in the loaded data and summarizes them in a developer-friendly report.
- **Dependencies:** none
- **Twitter handle:** srics

@hwchase17

**Description:** This PR adds support for [flashrank](https://github.com/PrithivirajDamodaran/FlashRank) for reranking as an alternative to Cohere.

I'm not sure `libs/langchain` is the right place for this change. At first, I wanted to put it under `libs/community`. All the compressors were under `libs/langchain/retrievers/document_compressors` though. Hope this makes sense!
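A reranking compressor takes the retrieved documents, scores each one against the query, sorts by that score and keeps the best `top_n`. The sketch below shows that shape only. FlashRank itself scores with a model, whereas `overlap_score` here is a hypothetical word-overlap stand-in, and `Doc` and `rerank` are illustrative names rather than LangChain or FlashRank APIs.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Doc:
    page_content: str
    relevance_score: float = 0.0


def rerank(
    query: str,
    docs: List[Doc],
    score_fn: Callable[[str, str], float],
    top_n: int = 3,
) -> List[Doc]:
    """Score every document against the query, then keep the top_n best."""
    scored = [Doc(d.page_content, score_fn(query, d.page_content)) for d in docs]
    scored.sort(key=lambda d: d.relevance_score, reverse=True)
    return scored[:top_n]


def overlap_score(query: str, text: str) -> float:
    """Hypothetical stand-in scorer: fraction of query words found in the text."""
    query_words = set(query.lower().split())
    text_words = set(text.lower().split())
    return len(query_words & text_words) / len(query_words) if query_words else 0.0


docs = [Doc("cats purr"), Doc("dogs bark loudly"), Doc("dogs and cats")]
for doc in rerank("dogs bark", docs, overlap_score, top_n=2):
    print(f"{doc.relevance_score:.2f}  {doc.page_content}")
```

Swapping `overlap_score` for a model-based scorer changes the quality of the ranking, not the shape of the compressor.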

- Reordered sections
- Applied consistent formatting
- Fixed headers (there were 2 H1 headers; this breaks the ToC)
- Added `Settings` header and moved all related sections under it

Description: Updated the doc for integrations/chat/anthropic_functions with new functions: invoke. Changed the structure of the document to match the required one.

Issue: https://github.com/langchain-ai/langchain/issues/15664

Dependencies: None

Twitter handle: None

---------

Co-authored-by: NaveenMaltesh <naveen@onmeta.in>

- **Description:** Adds the document loader for [AWS Athena](https://aws.amazon.com/athena/), a serverless and interactive analytics service.
- **Dependencies:** Added boto3 as a dependency

This PR updates the `TF-IDF.ipynb` documentation to reflect the new import path for TFIDFRetriever in the langchain-community package. The previous path, `from langchain.retrievers import TFIDFRetriever`, has been updated to `from langchain_community.retrievers import TFIDFRetriever` to align with the latest changes in the langchain library.

according to https://youtu.be/rZus0JtRqXE?si=aFo1JTDnu5kSEiEN&t=678 by

@efriis

- **Description:** Seems the requirements for tool names have changed

and spaces are no longer allowed. Changed the tool name from Google

Search to google_search in the notebook

- **Issue:** n/a

- **Dependencies:** none

- **Twitter handle:** @mesirii
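A small helper can check and normalize tool names before they reach the model. This is a sketch that assumes the constraint resembles OpenAI's function-name rule (letters, digits, underscores and dashes, 1 to 64 characters); the exact rule depends on the model provider, and `is_valid_tool_name` and `to_tool_name` are hypothetical helpers.

```python
import re

# Assumed constraint, modeled on OpenAI's function-name rule:
# only letters, digits, underscores and dashes, 1 to 64 characters.
TOOL_NAME_PATTERN = re.compile(r"[a-zA-Z0-9_-]{1,64}")


def is_valid_tool_name(name: str) -> bool:
    """Return True if the whole name satisfies the assumed pattern."""
    return TOOL_NAME_PATTERN.fullmatch(name) is not None


def to_tool_name(display_name: str) -> str:
    """Turn a display name such as 'Google Search' into 'google_search'."""
    # Collapse runs of disallowed characters (including spaces) into '_'.
    name = re.sub(r"[^a-zA-Z0-9_-]+", "_", display_name.strip()).lower()
    return name[:64]
```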

**Description**

Make some functions work with Milvus:

1. get_ids: Get primary keys by field in the metadata

2. delete: Delete one or more entities by ids

3. upsert: Update/Insert one or more entities
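One way the three operations fit together: `get_ids` looks up primary keys by a metadata field, `delete` removes entities by primary key, and `upsert` can be expressed as a delete of the given ids followed by an insert. The sketch below is in-memory and assumes that delete-then-insert strategy; `InMemoryStore` and its methods are hypothetical stand-ins for a Milvus collection, not the `langchain_community` Milvus API.

```python
import itertools
from typing import Any, Dict, Iterable, List, Optional


class InMemoryStore:
    """Hypothetical stand-in for a Milvus collection keyed by primary key."""

    def __init__(self) -> None:
        self._rows: Dict[int, Dict[str, Any]] = {}
        self._next_pk = itertools.count(1)  # auto-generated primary keys

    def add(self, texts: Iterable[str], metadatas: Iterable[Dict[str, Any]]) -> List[int]:
        pks = []
        for text, meta in zip(texts, metadatas):
            pk = next(self._next_pk)
            self._rows[pk] = {"text": text, **meta}
            pks.append(pk)
        return pks

    def get_ids(self, field: str, value: Any) -> List[int]:
        """Return primary keys whose metadata `field` equals `value`."""
        return [pk for pk, row in self._rows.items() if row.get(field) == value]

    def delete(self, ids: Iterable[int]) -> int:
        """Delete entities by primary key; return how many were removed."""
        return sum(self._rows.pop(pk, None) is not None for pk in ids)

    def upsert(
        self,
        ids: Optional[List[int]],
        texts: List[str],
        metadatas: List[Dict[str, Any]],
    ) -> List[int]:
        """Delete any existing rows with these ids, then insert the new rows."""
        if ids:
            self.delete(ids)
        return self.add(texts, metadatas)
```

Note that with auto-generated keys, delete-then-insert gives the updated rows new primary keys, which is why `get_ids` is useful for finding them again.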

**Issue**

None

**Dependencies**

None

**Tag maintainer:**

@hwchase17

**Twitter handle:**

None

---------

Co-authored-by: HoaNQ9 <hoanq.1811@gmail.com>

Co-authored-by: Erick Friis <erick@langchain.dev>

- **Issue:** Issue with model argument support (been there for a while

actually):

- Non-specially-handled arguments like temperature don't work when

passed through constructor.

- Such arguments DO work quite well with `bind`, but also do not abide

by field requirements.

- Since the initial push, server-side error messages have gotten better and v0.0.2 raises better exceptions. So maybe it's better to let the server side handle such issues?

- **Description:**

- Removed ChatNVIDIA's argument fields in favor of `model_kwargs`/`model_kws` arguments, which aggregate constructor kwargs (from the constructor pathway) and merge them with call kwargs (from the bind pathway).

- Shuffled a few functions from `_NVIDIAClient` to `ChatNVIDIA` to

streamline construction for future integrations.

- Minor/Optional: Old services didn't have stop support, so client-side stopping was implemented. Now both server-side and client-side stopping are applied.

- **Any Breaking Changes:** Minor breaking changes if you strongly rely

on chat_model.temperature, etc. This is captured by

chat_model.model_kwargs.
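The kwargs merge and the client-side stop fallback described above can be sketched as two small functions. This assumes call-time (`bind`) values win over constructor values on conflict and that client-side stopping cuts at the earliest stop sequence; `merge_model_kwargs` and `apply_stop` are hypothetical helpers, not the `ChatNVIDIA` API.

```python
from typing import Any, Dict, List, Optional


def merge_model_kwargs(
    constructor_kwargs: Dict[str, Any], call_kwargs: Dict[str, Any]
) -> Dict[str, Any]:
    """Constructor kwargs act as defaults; call-time kwargs override them."""
    # Later unpacking wins, so call_kwargs take precedence on duplicate keys.
    return {**constructor_kwargs, **call_kwargs}


def apply_stop(text: str, stop: Optional[List[str]]) -> str:
    """Client-side stop: cut the text at the earliest stop sequence found."""
    if not stop:
        return text
    cut = len(text)
    for seq in stop:
        idx = text.find(seq)
        if idx != -1:
            cut = min(cut, idx)
    return text[:cut]
```

Applying `apply_stop` even when the server also honors `stop` is harmless: if the server already stopped, no stop sequence remains in the text and it is returned unchanged.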

PR passes tests and example notebooks and example testing. Still gonna

chat with some people, so leaving as draft for now.

---------

Co-authored-by: Erick Friis <erick@langchain.dev>


- **Description: changes to you.com files**

- general cleanup

- adds community/utilities/you.py, moving bulk of code from retriever ->

utility

- removes `snippet` as endpoint

- adds `news` as endpoint

- adds more tests

<s>**Description: update community MAKE file**

- adds `integration_tests`

- adds `coverage`</s>

- **Issue:** [For New Contributors: Update Integration Documentation](https://github.com/langchain-ai/langchain/issues/15664#issuecomment-1920099868)

- **Dependencies:** n/a

- **Twitter handle:** @scottnath

- **Mastodon handle:** scottnath@mastodon.social

---------

Co-authored-by: Bagatur <baskaryan@gmail.com>

Ran

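```python
import glob
import re


def update_prompt(line: str) -> str:
    # Rewrite `PromptTemplate(template=..., input_variables=...)` on one line
    # as `PromptTemplate.from_template(...)`.
    return re.sub(
        r"(?P<start>\b)PromptTemplate\(template=(?P<template>.*), input_variables=(?:.*)\)",
        r"\g<start>PromptTemplate.from_template(\g<template>)",
        line,
    )


for fn in glob.glob("docs/**/*", recursive=True):
    try:
        with open(fn) as f:
            content = f.readlines()
    except (OSError, UnicodeDecodeError):
        # Skip directories and files that cannot be read as text.
        continue
    content = [update_prompt(line) for line in content]
    with open(fn, "w") as f:
        f.write("".join(content))
```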
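Because the script above rewrites every file in place, a dry run that only reports which lines would change can be a useful first step. This is a sketch that reuses the same regex; `preview_changes` is a hypothetical helper, and the root directory is a parameter rather than the hard-coded `docs` path.

```python
import glob
import os
import re
from typing import List, Tuple

PATTERN = re.compile(
    r"(?P<start>\b)PromptTemplate\(template=(?P<template>.*), input_variables=(?:.*)\)"
)
REPLACEMENT = r"\g<start>PromptTemplate.from_template(\g<template>)"


def preview_changes(root: str) -> List[Tuple[str, str, str]]:
    """Return (path, old_line, new_line) for every line the rewrite would touch."""
    changes = []
    for fn in glob.glob(os.path.join(root, "**", "*"), recursive=True):
        if not os.path.isfile(fn):
            continue
        try:
            with open(fn, encoding="utf-8") as f:
                lines = f.readlines()
        except (OSError, UnicodeDecodeError):
            continue  # unreadable or binary file
        for line in lines:
            new = PATTERN.sub(REPLACEMENT, line)
            if new != line:
                changes.append((fn, line.rstrip("\n"), new.rstrip("\n")))
    return changes
```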

Several notebooks have a title that differs from the file name. This corrupts the sorting in the Navbar (ToC).

- Fixed titles and file names.
- Changed text formats to a consistent form.
- Redirected renamed files in `Vercel.json`.

### Description

Support loading the content of any GitHub file based on file extension.

Why not use the [git loader](https://python.langchain.com/docs/integrations/document_loaders/git#load-existing-repository-from-disk)?

The git loader clones the whole repo even if you are only interested in some of its files, which is too heavy. This GithubFileLoader downloads only the files you are interested in.
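The core idea, listing the repository tree once and fetching only the matching paths, can be sketched as a filter over a tree listing. This assumes a response shaped like the GitHub Git Trees API (`{"tree": [{"path": ..., "type": "blob" | "tree"}, ...]}`); `select_files` and `by_extension` are hypothetical helpers, and the network calls for the tree and the file contents are left out.

```python
from typing import Any, Callable, Dict, List


def select_files(
    tree_response: Dict[str, Any], file_filter: Callable[[str], bool]
) -> List[str]:
    """Return paths of files (blobs) in the tree listing accepted by file_filter."""
    return [
        entry["path"]
        for entry in tree_response.get("tree", [])
        if entry.get("type") == "blob" and file_filter(entry["path"])
    ]


def by_extension(*extensions: str) -> Callable[[str], bool]:
    """Build a filter that accepts paths ending in any of the given extensions."""
    return lambda path: path.endswith(tuple(extensions))
```

Only the returned paths would then be downloaded, instead of cloning the whole repository.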

### Twitter handle

my twitter: @shufanhaotop

---------

Co-authored-by: Hao Fan <h_fan@apple.com>

Co-authored-by: Bagatur <baskaryan@gmail.com>

**Description:** Added a link to the Brave website to the `brave-search.ipynb` notebook.

This notebook is shown in the docs as an example for the brave tool.

**Issue:** There was no reference on where/how to get an API key.

**Dependencies:** none

**Twitter handle:** not for this one :)

- **Description:** docs: update StreamlitCallbackHandler example.

- **Issue:** None

- **Dependencies:** None

I have updated the example for StreamlitCallbackHandler in the documentation below.

https://python.langchain.com/docs/integrations/callbacks/streamlit

Previously, the example used `initialize_agent`, which has been deprecated, so I've updated it to use `create_react_agent` instead. Many LangChain users are likely searching for examples of combining `create_react_agent` or `openai_tools_agent_chain` with StreamlitCallbackHandler. I'm sure this update will be really helpful for them!

Unfortunately, writing unit tests for this example is difficult, so I

have not written any tests. I have run this code in a standalone Python

script file and ensured it runs correctly.

- **Description:** Adds an additional class variable to `BedrockBase` called `provider` that allows passing a model provider such as amazon, cohere, ai21, etc.

Until now, the model provider has been extracted from the `model_id` by taking the part before the first `.`, such as `amazon` for `amazon.titan-text-express-v1` (see the [supported list of Bedrock model IDs here](https://docs.aws.amazon.com/bedrock/latest/userguide/model-ids-arns.html)).

But for custom Bedrock models, where the ARN of the provisioned throughput must be supplied, the `model_id` looks like `arn:aws:bedrock:...`, so the provider cannot be extracted from it. The LangChain Bedrock class requires a model `provider` to perform model-based processing. Passing this `provider` argument allows the same processing to be performed for custom models of a specific base model type.

The alternative considered here was to use `provider.arn:aws:bedrock:...`, which then requires the ARN to be extracted and passed separately when invoking the model. The proposed solution is simpler and does not cause issues for current models already using the Bedrock class.

- **Issue:** N/A

- **Dependencies:** N/A
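The resolution order described above can be sketched as a single function. This assumes an explicitly supplied `provider` takes precedence, that an ARN-style `model_id` without a provider is an error, and that otherwise the prefix before the first `.` is used; `resolve_provider` is a hypothetical free function, not the `BedrockBase` method itself.

```python
from typing import Optional


def resolve_provider(model_id: str, provider: Optional[str] = None) -> str:
    """Pick the model provider used for provider-specific request handling."""
    # An explicitly supplied provider always wins.
    if provider:
        return provider
    # A provisioned-throughput ARN carries no provider prefix to parse.
    if model_id.startswith("arn"):
        raise ValueError(
            "Model provider should be supplied when passing a model ARN as model_id"
        )
    # Otherwise the provider is the part before the first '.'.
    return model_id.split(".")[0]
```

For example, `resolve_provider("amazon.titan-text-express-v1")` gives `"amazon"`, while a custom model's ARN only works together with an explicit `provider`.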

---------

Co-authored-by: Piyush Jain <piyushjain@duck.com>

- **Description:** Several meta/usability updates, including User-Agent.

- **Issue:**

- User-Agent metadata for tracking connector engagement. @milesial

please check and advise.

- Better error messages. Tries harder to find a request ID. @milesial

requested.

- Client-side image resizing for multimodal models. Hope to upgrade to

Assets API solution in around a month.

- `client.payload_fn` allows you to modify payload before network

request. Use-case shown in doc notebook for kosmos_2.

- `client.last_inputs` put back in to allow for advanced

support/debugging.

- **Dependencies:**

- Attempts to pull in PIL for image resizing. If not installed, prints

out "please install" message, warns it might fail, and then tries

without resizing. We are waiting on a more permanent solution.
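The `payload_fn`, `last_inputs` and User-Agent items above can be illustrated with a minimal client sketch. `SketchClient`, `prepare_request` and the User-Agent string are hypothetical; the real client, its header value, and the kosmos_2 use case in the notebook may differ, and the actual network call is omitted.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict, Optional

Payload = Dict[str, Any]


@dataclass
class SketchClient:
    """Hypothetical client illustrating payload_fn, last_inputs and User-Agent."""

    # Hypothetical User-Agent value used to tag connector traffic.
    user_agent: str = "langchain-connector-sketch/0.1"
    # Hook applied to the payload right before the (omitted) network request.
    payload_fn: Callable[[Payload], Payload] = lambda payload: payload
    # Most recent request, kept for debugging.
    last_inputs: Optional[Dict[str, Any]] = None

    def prepare_request(self, url: str, payload: Payload) -> Dict[str, Any]:
        headers = {"User-Agent": self.user_agent, "Content-Type": "application/json"}
        # Copy first so payload_fn cannot mutate the caller's dict in place.
        request = {"url": url, "headers": headers, "json": self.payload_fn(dict(payload))}
        self.last_inputs = request
        return request
```

A `payload_fn` such as `lambda p: {**p, "max_tokens": 16}` would add a field to every outgoing request, and `last_inputs` then shows exactly what was sent.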

For LC viz: @hinthornw

For NV viz: @fciannella @milesial @vinaybagade

---------

Co-authored-by: Erick Friis <erick@langchain.dev>
