# Expose full params in Qdrant

There were many requests to support additional parameters in the Qdrant
integration. Qdrant supports many vector search optimizations that could
not previously be used through the integration. This PR exposes them:

1. Collection parameters can now be set when using `Qdrant.from_texts`.
The PR allows setting things such as quantization, HNSW config,
optimizers config, etc. That makes it consistent with the raw
`QdrantClient`.

2. Extended options while searching, including HNSW options, exact
search, score threshold filtering, and read consistency in distributed
mode.

After merging that PR, #4858 might also be closed.

## Who can review?

VectorStores / Retrievers / Memory

@dev2049 @hwchase17

This PR adds the possibility of specifying the endpoint URL to AWS in

the DynamoDBChatMessageHistory, so that it is possible to target not

only the AWS cloud services, but also a local installation.

Specifying the endpoint URL, which is normally not done when addressing

the cloud services, is very helpful when targeting a local instance

(like [Localstack](https://localstack.cloud/)) when running local tests.

Fixes #5835

#### Who can review?

Tag maintainers/contributors who might be interested: @dev2049

<!-- For a quicker response, figure out the right person to tag with @

@hwchase17 - project lead

Tracing / Callbacks

- @agola11

Async

- @agola11

DataLoaders

- @eyurtsev

Models

- @hwchase17

- @agola11

Agents / Tools / Toolkits

- @vowelparrot

VectorStores / Retrievers / Memory

- @dev2049

-->

---------

Co-authored-by: Harrison Chase <hw.chase.17@gmail.com>

Fixes proxy error.

Since openai does not parse proxy parameters and uses openai.proxy

directly, the proxy method needs to be modified.

7610c5adfa/openai/api_requestor.py (LL90)

#### Who can review?

@hwchase17 - project lead

Models

- @hwchase17

- @agola11

---------

Co-authored-by: Harrison Chase <hw.chase.17@gmail.com>

<!--

Thank you for contributing to LangChain! Your PR will appear in our

release under the title you set. Please make sure it highlights your

valuable contribution.

Replace this with a description of the change, the issue it fixes (if

applicable), and relevant context. List any dependencies required for

this change.

After you're done, someone will review your PR. They may suggest

improvements. If no one reviews your PR within a few days, feel free to

@-mention the same people again, as notifications can get lost.

Finally, we'd love to show appreciation for your contribution - if you'd

like us to shout you out on Twitter, please also include your handle!

-->

#### Add start index to metadata in TextSplitter

- Modified method `create_documents` to track start position of each

chunk

- The `start_index` is included in the metadata if the `add_start_index`

parameter in the class constructor is set to `True`

This enables referencing back to the original document, particularly

useful when a specific chunk is retrieved.
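The start-position tracking described above can be sketched without the library. This is a minimal illustration, not the splitter's actual code: `chunks_with_start_index` is a hypothetical helper, and it assumes the chunks appear in the text in order.

```python
from typing import Dict, List


def chunks_with_start_index(text: str, chunks: List[str]) -> List[Dict[str, object]]:
    """Pair each chunk with the offset where it starts in `text`."""
    results = []
    index = -1
    for chunk in chunks:
        # Search from just past the previous match so a repeated chunk
        # maps to its next occurrence instead of the first one.
        index = text.find(chunk, index + 1)
        results.append({"page_content": chunk, "metadata": {"start_index": index}})
    return results


text = "alpha beta alpha gamma"
docs = chunks_with_start_index(text, ["alpha beta", "alpha gamma"])
```

Slicing the original text at `start_index` recovers the chunk, which is what makes referencing back to the source document possible.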

<!-- If you're adding a new integration, please include:

1. a test for the integration - favor unit tests that does not rely on

network access.

2. an example notebook showing its use

See contribution guidelines for more information on how to write tests,

lint

etc:

https://github.com/hwchase17/langchain/blob/master/.github/CONTRIBUTING.md

-->

#### Who can review?

Tag maintainers/contributors who might be interested:

@eyurtsev @agola11


This PR adds a Baseten integration. I've done my best to follow the

contributor's guidelines and add docs, an example notebook, and an

integration test modeled after similar integrations' test.

Please let me know if there is anything I can do to improve the PR. When

it is merged, please tag https://twitter.com/basetenco and

https://twitter.com/philip_kiely as contributors (the note on the PR

template said to include Twitter accounts)

+ this private attribute is referenced as `arxiv_search` in internal

usage and is set when verifying the environment

twitter: @spazm

#### Who can review?

Any of @hwchase17, @leo-gan, or @bongsang might be interested in

reviewing.

+ Mismatch between `arxiv_client` attribute vs `arxiv_search` in

validation and usage is present in the initial commit by @hwchase17.

+ @leo-gan has made most of the edits.

+ @bongsang implemented pdf download.


---------

Co-authored-by: rlm <pexpresss31@gmail.com>

Fix the documentation page opening both search and Mendable when Ctrl+K
is pressed.

The Mendable shortcut is now Ctrl+J.


#### Who can review?

@hwchase17


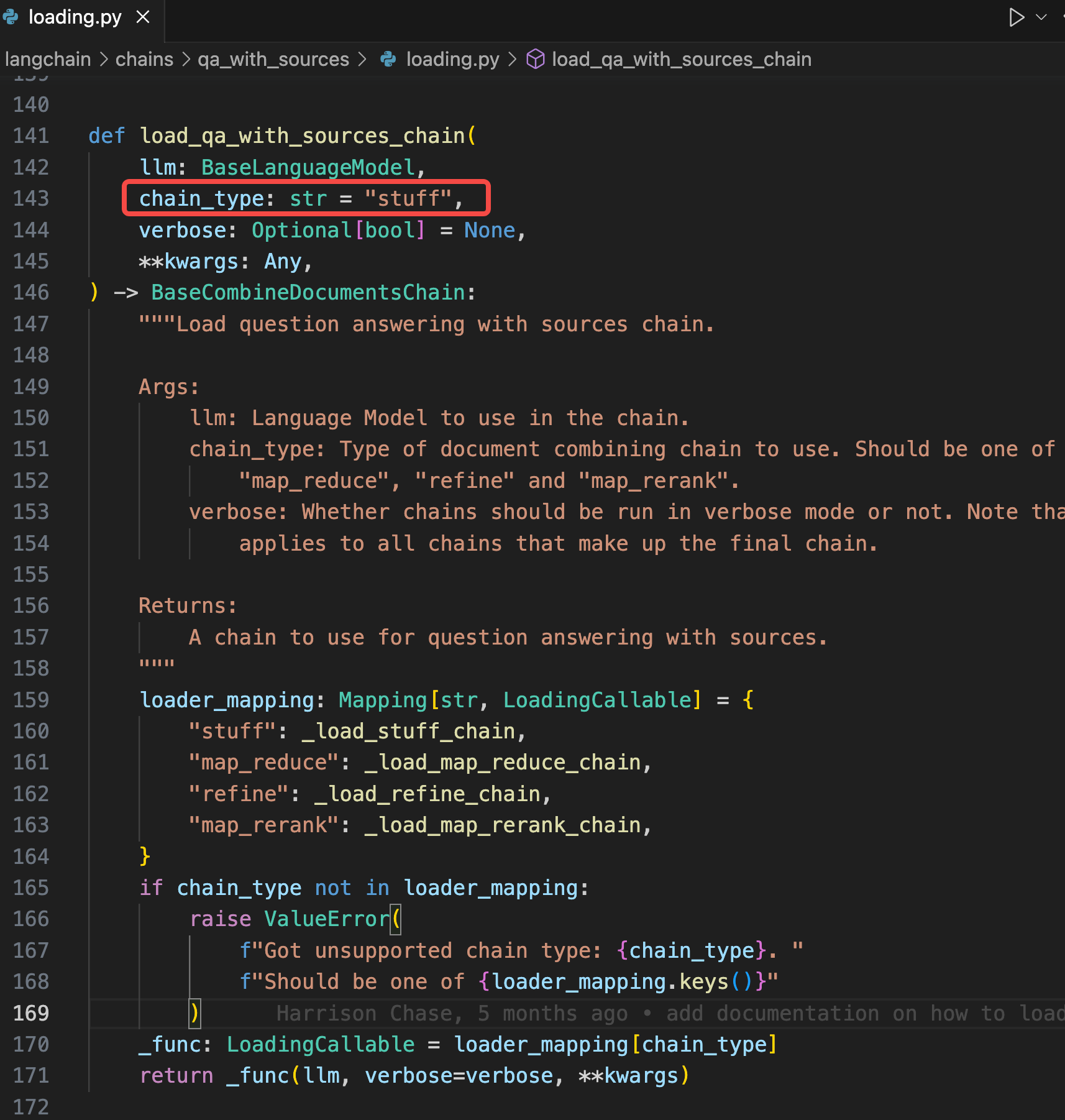

The `load_qa_with_sources_chain` method already supports four chain
types, including `map_rerank`. This updates the documentation to prevent
any misunderstanding 😀.

No linked issue; this only updates the documentation.


#### Who can review?

@hwchase17


Fixes #3983

Mimicking what we do for saving and loading the VectorDBQA chain, I
added the same logic for the RetrievalQA chain.

Also added a unit test. I could not find how saving and loading are
tested for other chains, so I added a file with a single test case. Let
me know if there is a recommended way to test this.


#### Who can review?

Tag maintainers/contributors who might be interested:

@dev2049


---------

Co-authored-by: Harrison Chase <hw.chase.17@gmail.com>


- Added the `SingleStoreDB` vector store, a wrapper over the
SingleStoreDB database, which can be used as vector storage and supports
efficient similarity search.

- Added integration tests for the vector store

- Added a Jupyter notebook with an example

@dev2049

---------

Co-authored-by: Volodymyr Tkachuk <vtkachuk-ua@singlestore.com>

Co-authored-by: Dev 2049 <dev.dev2049@gmail.com>

Co-authored-by: Harrison Chase <hw.chase.17@gmail.com>

# Allow callbacks to monitor ConversationalRetrievalChain


I ran into an issue where load_qa_chain was not passing the callbacks

down to the child LLM chains, and so made sure that callbacks are

propagated. There are probably more improvements to do here but this

seemed like a good place to stop.

Note that I saw a lot of references to callbacks_manager, which seems to

be deprecated. I left that code alone for now.


## Who can review?

Community members can review the PR once tests pass. Tag

maintainers/contributors who might be interested:

@agola11


In the `ElasticKnnSearch` class, added two arguments that were not
exposed properly.

`knn_search` added:

- `vector_query_field: Optional[str] = 'vector'`: field name to use in
the kNN search, if not the default `'vector'`

`knn_hybrid_search` added:

- `vector_query_field: Optional[str] = 'vector'`: field name to use in
the kNN search, if not the default `'vector'`

- `query_field: Optional[str] = 'text'`: field name to use in the text
search, if not the default `'text'`
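To show why the field name matters, here is a rough sketch of how it could feed into the `knn` section of an Elasticsearch search request. `build_knn_body` is a hypothetical helper, not part of `ElasticKnnSearch`, and the `num_candidates` value is an illustrative choice.

```python
from typing import Any, Dict, List


def build_knn_body(
    query_vector: List[float],
    k: int = 10,
    vector_query_field: str = "vector",
) -> Dict[str, Any]:
    """Build the `knn` section of an Elasticsearch search request."""
    return {
        "field": vector_query_field,  # which dense_vector field to search
        "query_vector": query_vector,
        "k": k,
        "num_candidates": 10 * k,  # illustrative choice, not a library default
    }


default_body = build_knn_body([0.1, 0.2, 0.3], k=2)
custom_body = build_knn_body([0.1, 0.2, 0.3], k=2, vector_query_field="title_embedding")
```

With the argument exposed, an index whose embeddings live in a field other than `vector` (here the made-up `title_embedding`) can be queried without changing the index mapping.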

Fixes https://github.com/hwchase17/langchain/issues/5633

cc: @dev2049 @hwchase17

---------

Co-authored-by: Harrison Chase <hw.chase.17@gmail.com>

Simply fixing a small typo in the memory page.

Also removed an extra code block at the end of the file.

Along the way, the current outputs seem to have changed in a few places

so left that for posterity, and updated the number of runs which seems

harmless, though I can clean that up if preferred.

Implementation of `similarity_search_with_relevance_scores` for the
Qdrant vector store.

As implemented, the method is also compatible with other capabilities
such as filtering.

Integration tests updated.

#### Who can review?

Tag maintainers/contributors who might be interested:

VectorStores / Retrievers / Memory

- @dev2049

This PR adds documentation for Shale Protocol's integration with

LangChain.

[Shale Protocol](https://shaleprotocol.com) provides forever-free

production-ready inference APIs to the open-source community. We have

global data centers and plan to support all major open LLMs (estimated

~1,000 by 2025).

The team consists of software and ML engineers, AI researchers,
designers, and operators across North America and Asia. Combined, the
team has 50+ years of experience in machine learning, cloud
infrastructure, software engineering, and product development. Team
members have worked at places like Google and Microsoft.

#### Who can review?

Tag maintainers/contributors who might be interested:

- @hwchase17

- @agola11

---------

Co-authored-by: Karen Sheng <46656667+karensheng@users.noreply.github.com>

## Changes

- Added the `stop` param to the `_VertexAICommon` class so it can be set

at llm initialization

## Example Usage

```python
from langchain.llms import VertexAI

VertexAI(
    # ...
    temperature=0.15,
    max_output_tokens=128,
    top_p=1,
    top_k=40,
    stop=["\n```"],
)
```

## Possible Reviewers

- @hwchase17

- @agola11


Add some logging into the powerbi tool so that you can see the queries

being sent to PBI and attempts to correct them.


#### Who can review?

Tag maintainers/contributors who might be interested: @vowelparrot


### Summary

Adds an `UnstructuredCSVLoader` for loading CSVs. One advantage of using

`UnstructuredCSVLoader` relative to the standard `CSVLoader` is that if

you use `UnstructuredCSVLoader` in `"elements"` mode, an HTML

representation of the table will be available in the metadata.

#### Who can review?

@hwchase17

@eyurtsev

Hi! I just added an example of how to use a custom scraping function

with the sitemap loader. I recently used this feature and had to dig in

the source code to find it. I thought it might be useful to other devs

to have an example in the Jupyter Notebook directly.

I only added the example to the documentation page.

@eyurtsev I was not able to run the lint. Please let me know if I have

to do anything else.

I know this is a very small contribution, but I hope it will be

valuable. My Twitter handle is @web3Dav3.


#### Who can review?

Tag maintainers/contributors who might be interested:

@hwchase17 - project lead

- @agola11

---------

Co-authored-by: Yessen Kanapin <yessen@deepinfra.com>


`LatexTextSplitter` separators are used as regular expressions, so their
backslashes need to be escaped, as in "\n\\\chapter"; otherwise `re`
reports an error:

(re.error: bad escape \c at position 1 (line 2, column 1))
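The failure can be reproduced with the standard library alone. The snippet below is a small sketch with a made-up sample text. It uses `re.escape` as one way to get a safe pattern; that is an illustration and not necessarily how the splitter itself was fixed.

```python
import re

text = "intro\n\\chapter{One}\nbody\n\\chapter{Two}\nmore"

# "\n\\chapter" is a newline followed by \chapter. The regex engine reads
# "\c" as an unknown escape sequence and refuses to compile the pattern.
try:
    re.split("\n\\chapter", text)
    error_message = None
except re.error as exc:
    error_message = str(exc)

# Escaping the separator turns the backslash into a literal character.
parts = re.split(re.escape("\n\\chapter"), text)
```

After escaping, the text splits at each chapter command instead of raising.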


#### Who can review?

@hwchase17 @dev2049


Co-authored-by: Pang <ugfly@qq.com>


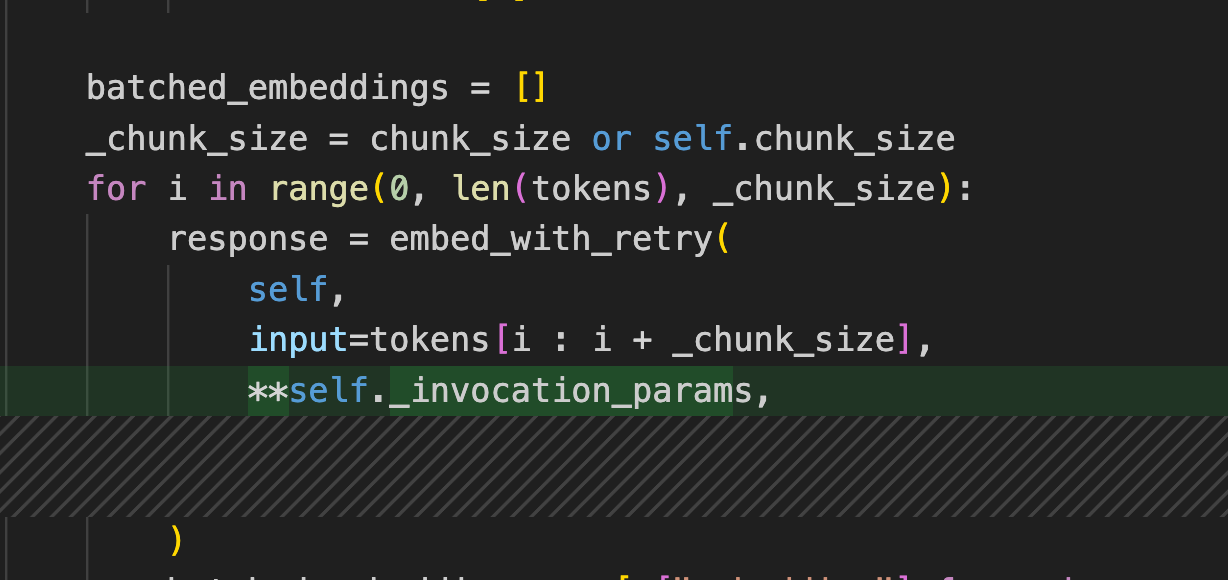

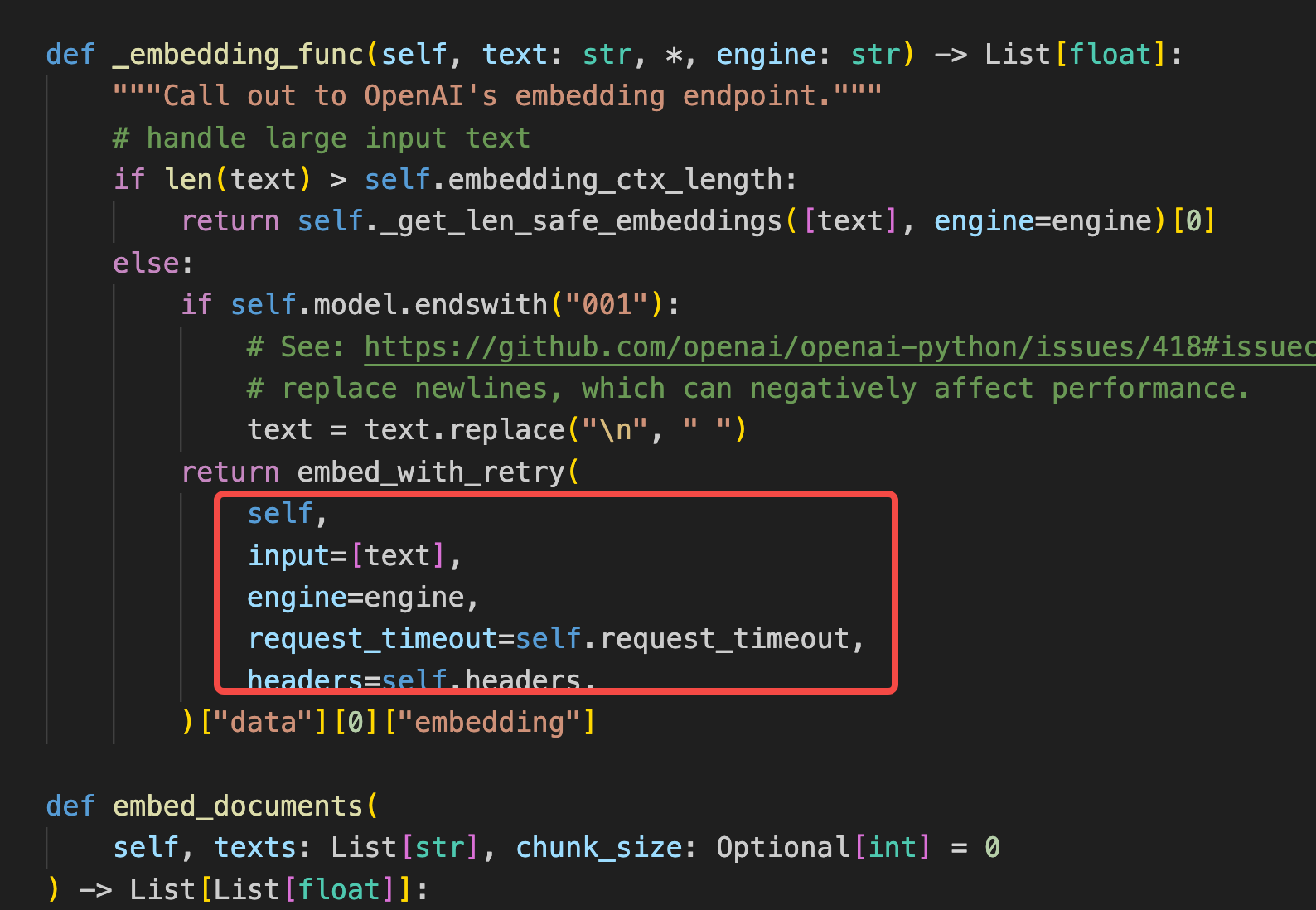

Fixes #5822

I upgraded langchain with `pip install -U langchain` to version 0.0.192
and found that `openai.api_base` no longer takes effect. I use the Azure
OpenAI service as the OpenAI backend, so `openai.api_base` is very
important for me. Comparing tag/0.0.192 with tag/0.0.191, I found that:

The OpenAI params were moved into the `_invocation_params` function and
are used in some OpenAI calls:

but some cases are still not covered, like:


#### Who can review?

Tag maintainers/contributors who might be interested:

@hwchase17


---------

Co-authored-by: Harrison Chase <hw.chase.17@gmail.com>

just change "to" to "too" so it matches the above prompt


Fixes #5807

Realigned tests with implementation.

Also made the folder name unique for the `test_faiss_local_save_load`
test by adding a date-time suffix.

#### Before submitting

- Integration test updated

- formatting and linting ok (locally)

#### Who can review?

Tag maintainers/contributors who might be interested:

@hwchase17 - project lead

VectorStores / Retrievers / Memory

- @dev2049

I added support for specifying different types with `ResponseSchema`
objects:

## before

`extracted_info = ResponseSchema(name="extracted_info", description="List of extracted information")`

generates the following doc: ```json\n{\n\t\"extracted_info\": string // List of extracted information}```

This leads GPT to create a JSON with only one string in the specified
field, even if you requested a list in the description.

## now

`extracted_info = ResponseSchema(name="extracted_info", type="List[string]", description="List of extracted information")`

generates the following doc: ```json\n{\n\t\"extracted_info\": List[string] // List of extracted information}```

This way the model responds better to the prompt, generating an array of
strings.
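The before/now difference comes down to which type string is rendered into the format instructions. A minimal sketch of that idea follows. The `ResponseSchema` dataclass here is a stand-in written for illustration, not the library class, and `schema_line` is a hypothetical helper.

```python
from dataclasses import dataclass


@dataclass
class ResponseSchema:
    # Hypothetical stand-in mirroring the fields named in this PR.
    name: str
    description: str
    type: str = "string"


def schema_line(schema: ResponseSchema) -> str:
    """Render one field of the JSON format instructions."""
    return f'\t"{schema.name}": {schema.type}  // {schema.description}'


before = schema_line(ResponseSchema("extracted_info", "List of extracted information"))
now = schema_line(
    ResponseSchema("extracted_info", "List of extracted information", type="List[string]")
)
```

Defaulting `type` to `"string"` keeps existing schemas rendering as they did before.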

Tag maintainers/contributors who might be interested:

Agents / Tools / Toolkits

@vowelparrot

I'm not sure who would be interested. I suppose this counts as a tool,
so I tagged you, @vowelparrot. In any case, it's a minor change and
shouldn't impact any other part of the framework.


Some links were broken from the previous merge. This PR fixes them.

Tested locally.


Signed-off-by: Kourosh Hakhamaneshi <kourosh@anyscale.com>

This introduces the `YoutubeAudioLoader`, which will load blobs from a
YouTube URL and write them. Blobs are then parsed by
`OpenAIWhisperParser()`, as shown in this
[PR](https://github.com/hwchase17/langchain/pull/5580), but we extend
the parser to split audio such that each chunk meets the 25MB OpenAI
size limit. As shown in the notebook, this enables a very simple UX:

```python
# Transcribe the video to text
loader = GenericLoader(YoutubeAudioLoader([url], save_dir), OpenAIWhisperParser())
docs = loader.load()
```

Tested on full set of Karpathy lecture videos:

```python
from langchain.document_loaders.blob_loaders.youtube_audio import YoutubeAudioLoader
from langchain.document_loaders.generic import GenericLoader
from langchain.document_loaders.parsers import OpenAIWhisperParser

# Karpathy lecture videos
urls = [
    "https://youtu.be/VMj-3S1tku0",
    "https://youtu.be/PaCmpygFfXo",
    "https://youtu.be/TCH_1BHY58I",
    "https://youtu.be/P6sfmUTpUmc",
    "https://youtu.be/q8SA3rM6ckI",
    "https://youtu.be/t3YJ5hKiMQ0",
    "https://youtu.be/kCc8FmEb1nY",
]

# Directory to save audio files
save_dir = "~/Downloads/YouTube"

# Transcribe the videos to text
loader = GenericLoader(YoutubeAudioLoader(urls, save_dir), OpenAIWhisperParser())
docs = loader.load()
```
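One way to keep each piece of audio under a size limit is to cut it into fixed-duration segments. The sketch below only computes the segment boundaries. `chunk_spans` is a hypothetical helper and the 20-minute segment length is picked purely for illustration; the parser's actual splitting logic is not shown in this description.

```python
from typing import List, Tuple


def chunk_spans(total_ms: int, chunk_ms: int) -> List[Tuple[int, int]]:
    """Return (start, end) offsets in milliseconds covering the audio."""
    if chunk_ms <= 0:
        raise ValueError("chunk_ms must be positive")
    return [
        (start, min(start + chunk_ms, total_ms))
        for start in range(0, total_ms, chunk_ms)
    ]


minute = 60 * 1000
# A 50-minute recording cut into segments of at most 20 minutes.
spans = chunk_spans(total_ms=50 * minute, chunk_ms=20 * minute)
```

Each span can then be transcribed separately and the resulting texts concatenated in order.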


In the [Databricks

integration](https://python.langchain.com/en/latest/integrations/databricks.html)

and [Databricks

LLM](https://python.langchain.com/en/latest/modules/models/llms/integrations/databricks.html),

we suggested that users set the environment variable
`DATABRICKS_API_TOKEN`. However, this is inconsistent with other
Databricks libraries. To make it consistent, this PR changes the
variable from `DATABRICKS_API_TOKEN` to `DATABRICKS_TOKEN`.

After the change, `DATABRICKS_API_TOKEN` no longer appears in the docs:

```
$ git grep DATABRICKS_API_TOKEN|wc -l
0
$ git grep DATABRICKS_TOKEN|wc -l
8
```

cc @hwchase17 @dev2049 @mengxr since you have reviewed the previous PRs.

# What does this PR do?

Change the HTML tags so that a tag with attributes can be found.
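As a rough illustration of the problem, assume the tags are matched as plain substrings (an assumption; the description does not show the changed code). A separator that includes the closing `>` misses any tag that carries attributes:

```python
html = '<div class="note"><p id="a">Hello</p></div>'

# A closed-tag separator only matches tags written without attributes.
strict_hits = html.count("<div>") + html.count("<p>")

# Dropping the closing ">" also matches tags that carry attributes.
loose_hits = html.count("<div") + html.count("<p")
```

The looser form trades precision for recall: a prefix such as `<p` would also match `<pre`.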

## Before submitting

- [x] Tests added

- [x] CI/CD validated

### Who can review?

Anyone in the community is free to review the PR once the tests have

passed. Feel free to tag

members/contributors who may be interested in your PR.

- Remove the client implementation (this breaks backwards compatibility
for existing testers; I could keep the stub in that file if we want, but
not many people are using it yet)

- Add SDK as dependency

- Update the 'run_on_dataset' method to be a function that optionally
accepts a client as an argument

- Remove the langchain plus server implementation (you get it for free
with the SDK now)

We could make the SDK optional for now, but the plan is to use it within
the tracer, so it would likely become a hard dependency at some point.

# Scores in Vectorstores' Docs Are Explained

The following vector stores can return scores along with similar
documents by using `similarity_search_with_score`:

- chroma

- docarray_hnsw

- docarray_in_memory

- faiss

- myscale

- qdrant

- supabase

- vectara

- weaviate

However, in the documentation, these scores were either not explained at all or

explained in a way that could lead to misunderstandings (e.g., FAISS).

For instance, in the FAISS documentation: if we consider the score returned by the

function as a similarity score, we understand that a document returning

a higher score is more similar to the source document. However, since

the scores returned by the function are distance scores, we should

understand that smaller scores correspond to more similar documents.
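
To make the distance-versus-similarity point concrete, here is a small sketch in plain Python. It assumes an L2 (Euclidean) distance purely for illustration; the vectors and the helper names `l2_distance` and `rank_by_distance` are made up and do not come from any of the listed libraries.

```python
import math
from typing import Dict, List, Sequence, Tuple


def l2_distance(a: Sequence[float], b: Sequence[float]) -> float:
    """Euclidean distance between two vectors of equal length."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def rank_by_distance(
    query: Sequence[float], docs: Dict[str, Sequence[float]]
) -> List[Tuple[str, float]]:
    """Return (name, distance) pairs, most similar (smallest distance) first."""
    scored = [(name, l2_distance(query, vec)) for name, vec in docs.items()]
    return sorted(scored, key=lambda item: item[1])


docs = {"near": [1.0, 0.0], "far": [5.0, 5.0]}
ranked = rank_by_distance([1.0, 0.1], docs)
```

With a distance score, the first entry of `ranked` is the closest document, so sorting ascending is the right order; with a similarity score the order would be reversed.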

For the libraries other than Vectara, I documented the scores they use

after checking the source libraries. Since I couldn't be certain

about the score metric used by Vectara, I didn't make any changes in its

documentation. The links mentioned in Vectara's documentation became

broken due to updates, so I replaced them with working ones.

VectorStores / Retrievers / Memory

- @dev2049

my twitter: [berkedilekoglu](https://twitter.com/berkedilekoglu)

---------

Co-authored-by: Harrison Chase <hw.chase.17@gmail.com>

# Added an overview of LangChain modules

Aimed at introducing newcomers to LangChain's main modules :)

Twitter handle is @edrick_dch

## Who can review?

@eyurtsev

Fixes #5614

#### Issue

The `***` combination produces an exception when used as a separator in

`re.split`. Instead, `\*\*\*` should be used for regex expressions.
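
The behaviour can be reproduced with the standard `re` module alone. The sample text below is made up; `re.escape` is shown as an alternative way to build the escaped pattern.

```python
import re

text = "part one***part two"

# An unescaped "***" is not a valid pattern: "*" is a quantifier
# and there is nothing before it to repeat.
error_message = None
try:
    re.split("***", text)
except re.error as exc:
    error_message = str(exc)

# Escaping each asterisk makes the pattern match the literal separator.
parts = re.split(r"\*\*\*", text)

# re.escape produces an equivalent pattern from the literal string.
parts_escaped = re.split(re.escape("***"), text)
```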

#### Who can review?

@eyurtsev

Fixes #5699

#### Who can review?

Tag maintainers/contributors who might be interested:

@woodworker @LeSphax @johannhartmann

---------

Co-authored-by: Harrison Chase <hw.chase.17@gmail.com>

…719)

A minor update to retry Cohere API call in case of errors using tenacity

as it is done for OpenAI LLMs.
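
The general retry idea can be sketched in plain Python. This is a stand-in written with the standard library only; it is not tenacity's API and not the code from this PR, and `call_with_retry` and `flaky` are hypothetical names.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def call_with_retry(
    func: Callable[[], T], max_attempts: int = 3, base_delay: float = 0.0
) -> T:
    """Call func, retrying with exponential backoff when it raises."""
    for attempt in range(1, max_attempts + 1):
        try:
            return func()
        except Exception:
            # Give up and re-raise once the last attempt has failed.
            if attempt == max_attempts:
                raise
            time.sleep(base_delay * 2 ** (attempt - 1))
    raise ValueError("max_attempts must be at least 1")


calls = {"count": 0}


def flaky() -> str:
    """Fail twice, then succeed, to imitate a temporary API error."""
    calls["count"] += 1
    if calls["count"] < 3:
        raise ConnectionError("temporary failure")
    return "ok"


result = call_with_retry(flaky, max_attempts=3)
```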

#### Who can review?

@hwchase17, @agola11


---------

Co-authored-by: Sagar Sapkota <22609549+sagar-spkt@users.noreply.github.com>

Aviary is an open source toolkit for evaluating and deploying open

source LLMs. You can find out more about it on

[http://github.com/ray-project/aviary](http://github.com/ray-project/aviary). You can try it out at

[http://aviary.anyscale.com](http://aviary.anyscale.com).

This code adds support for Aviary in LangChain. To minimize

dependencies, it connects directly to the HTTP endpoint.

The current implementation is not accelerated and uses the default

implementation of `predict` and `generate`.

It includes a test and a simple example.

@hwchase17 and @agola11 could you have a look at this?

---------

Co-authored-by: Dev 2049 <dev.dev2049@gmail.com>


Adding a class attribute "return_generated_question" to class

"BaseConversationalRetrievalChain". If set to `True`, the chain's output

has a key "generated_question" with the question generated by the

sub-chain `question_generator` as the value. This way the generated

question can be logged.
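
The effect of the flag on the output can be sketched with a toy function. Everything below is hypothetical: `run_conversational_chain` and the two lambdas only imitate the shape of the chain and are not LangChain code.

```python
from typing import Callable, Dict, List, Tuple

ChatHistory = List[Tuple[str, str]]


def run_conversational_chain(
    question: str,
    chat_history: ChatHistory,
    question_generator: Callable[[str, ChatHistory], str],
    answer_fn: Callable[[str], str],
    return_generated_question: bool = False,
) -> Dict[str, str]:
    """Toy chain: rewrite the question, answer it, optionally expose the rewrite."""
    new_question = question_generator(question, chat_history)
    output = {"answer": answer_fn(new_question)}
    if return_generated_question:
        # Only added when the caller asks for it, so default output is unchanged.
        output["generated_question"] = new_question
    return output


result = run_conversational_chain(
    "And its capital?",
    [("Tell me about France.", "France is a country in Europe.")],
    question_generator=lambda q, history: "What is the capital of France?",
    answer_fn=lambda q: "Paris",
    return_generated_question=True,
)
```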


#### Who can review?


@dev2049 @vowelparrot

# OpenAIWhisperParser

This PR creates a new parser, `OpenAIWhisperParser`, that uses the

[OpenAI Whisper

model](https://platform.openai.com/docs/guides/speech-to-text/quickstart)

to perform transcription of audio files to text (`Documents`). Please

see the notebook for usage.

Fixed python deprecation warning:

``DeprecationWarning: invalid escape sequence '\`'``

backticks (`) do not have special meaning in python strings and should

not be escaped.
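
The warning can be reproduced by compiling a tiny snippet with the standard `warnings` module. This is only a sketch; note that on Python 3.12 and later the same message is reported as a `SyntaxWarning` rather than a `DeprecationWarning`, so the helper collects the category instead of assuming one.

```python
import warnings
from typing import List

# Source text with a backslash before the backtick, and the same text without it.
escaped_source = 's = "\\`"'
plain_source = 's = "`"'


def compile_warnings(source: str) -> List[type]:
    """Compile the source and return the categories of any warnings raised."""
    with warnings.catch_warnings(record=True) as caught:
        warnings.simplefilter("always")
        compile(source, "<example>", "exec")
    return [w.category for w in caught]


escaped_categories = compile_warnings(escaped_source)
plain_categories = compile_warnings(plain_source)
```

The unescaped version compiles without warnings, which is why simply dropping the backslash is enough.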

-- @spazm on twitter

### Who can review:

@nfcampos ported this change from JavaScript; @hwchase17 wrote the

original STRUCTURED_FORMAT_INSTRUCTIONS.

Zep now supports persisting custom metadata with messages and hybrid

search across both message embeddings and structured metadata. This PR

implements custom metadata and enhancements to the

`ZepChatMessageHistory` and `ZepRetriever` classes to implement this

support.

Tag maintainers/contributors who might be interested:

VectorStores / Retrievers / Memory

- @dev2049

---------

Co-authored-by: Daniel Chalef <daniel.chalef@private.org>

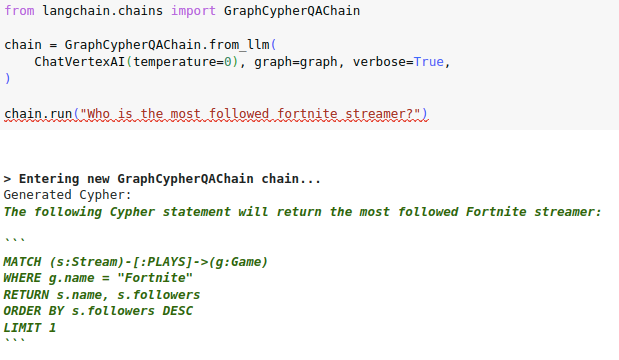

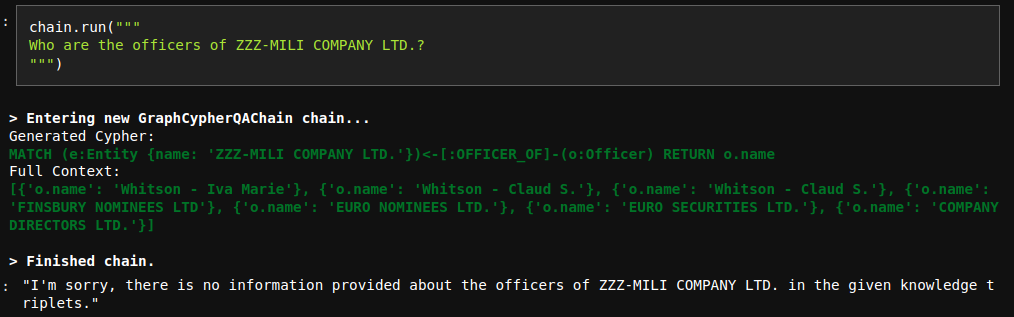

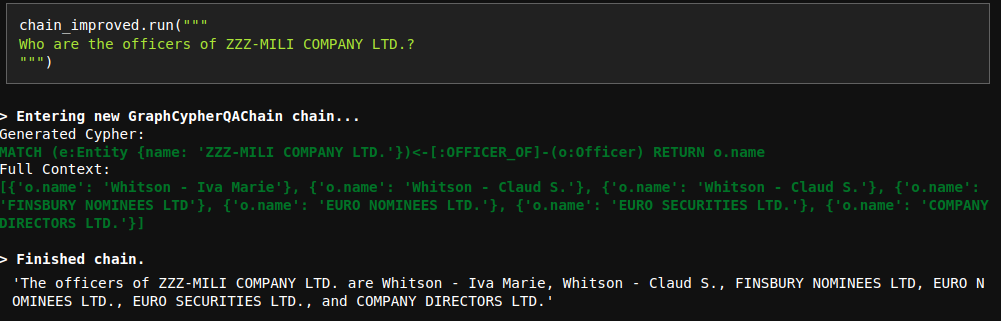

# Check if generated Cypher code is wrapped in backticks

Some LLMs, such as VertexAI, like to explain how they generated the
Cypher statement and wrap the actual code in three backticks.

I have observed a similar pattern with OpenAI chat models in
conversational settings, where multiple user and assistant messages are
provided to the LLM to generate Cypher statements and the LLM may then
apologize for previous steps or explain its reasoning.

Interestingly, both OpenAI and VertexAI wrap the code in three backticks
if they are doing any explaining or apologizing. Checking whether the
generated Cypher is wrapped in backticks seems like low-hanging fruit
for extending the Cypher search to other LLMs and conversational settings.
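
A minimal sketch of such a check is shown below. `extract_cypher` is a hypothetical helper, not the function added by this PR, and the sample response is invented. It does not handle a language tag placed directly after the opening backticks.

```python
import re

FENCE = "`" * 3  # three backticks, built this way to keep the example readable


def extract_cypher(text: str) -> str:
    """Return the content of the first fenced block, or the text unchanged."""
    pattern = FENCE + r"(.*?)" + FENCE
    match = re.search(pattern, text, re.DOTALL)
    return match.group(1).strip() if match else text.strip()


response = (
    "Sure, here is the query:\n"
    + FENCE
    + "\nMATCH (n) RETURN n LIMIT 5\n"
    + FENCE
    + "\nHope this helps."
)
cypher = extract_cypher(response)
```

Returning the input unchanged when no fence is found keeps the behaviour the same for models that output only the statement.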

# Adding support to save multiple memories at a time. Cuts save time by more than half


## Who can review?

Community members can review the PR once tests pass. Tag

maintainers/contributors who might be interested:


@dev2049

@vowelparrot

---------

Co-authored-by: Dev 2049 <dev.dev2049@gmail.com>

Fixes #5720.

A more in-depth discussion is in my comment here:

https://github.com/hwchase17/langchain/issues/5720#issuecomment-1577047018

In a nutshell, there has been a subtle change in the latest version of
GPT4All's Python bindings. The change I submitted yesterday is compatible
with this version; however, that version is as yet unreleased, and
thus the code change breaks LangChain's wrapper under the currently
released version of GPT4All.

This pull request proposes a backwards-compatible solution.

Fix for the deprecated SQLAlchemy `declarative_base` import:

```

MovedIn20Warning: The ``declarative_base()`` function is now available as sqlalchemy.orm.declarative_base(). (deprecated since: 2.0) (Background on SQLAlchemy 2.0 at: https://sqlalche.me/e/b8d9)

Base = declarative_base() # type: Any

```

The import is wrapped in a try/except block to fall back to the old import if
needed.
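
The fallback pattern can be sketched generically so that it runs without SQLAlchemy installed. `import_first_available` is a hypothetical helper written for this illustration; the PR itself uses a plain try/except around the two import statements.

```python
import importlib
from typing import Any, Sequence, Tuple


def import_first_available(candidates: Sequence[Tuple[str, str]]) -> Any:
    """Return the first attribute importable from the (module, name) pairs."""
    for module_name, attr_name in candidates:
        try:
            module = importlib.import_module(module_name)
            return getattr(module, attr_name)
        except (ImportError, AttributeError):
            # Module or attribute missing in this version: try the next location.
            continue
    raise ImportError(f"None of {list(candidates)} could be imported")


# With SQLAlchemy installed, the new location is tried first and the old
# location is used as the fallback:
#
# declarative_base = import_first_available([
#     ("sqlalchemy.orm", "declarative_base"),
#     ("sqlalchemy.ext.declarative", "declarative_base"),
# ])
```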

---------

Co-authored-by: Dev 2049 <dev.dev2049@gmail.com>

# Token text splitter for sentence transformers

The current TokenTextSplitter only works with OpenAI models via the
`tiktoken` package. This is not clear from the name `TokenTextSplitter`.
In this (first) PR, a token-based text splitter for sentence transformer
models is added. In the future I think we should work towards injecting
a tokenizer into the TokenTextSplitter to make it more flexible.
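
The idea of injecting a tokenizer can be sketched as follows. This is an illustration only: `split_by_tokens` is a hypothetical function, and whitespace splitting stands in for a real model tokenizer.

```python
from typing import Callable, List, Sequence


def split_by_tokens(
    text: str,
    tokenize: Callable[[str], List[str]],
    detokenize: Callable[[Sequence[str]], str],
    tokens_per_chunk: int,
    overlap: int = 0,
) -> List[str]:
    """Split text into chunks of at most tokens_per_chunk tokens."""
    if tokens_per_chunk <= overlap:
        raise ValueError("tokens_per_chunk must be larger than overlap")
    tokens = tokenize(text)
    step = tokens_per_chunk - overlap
    chunks: List[str] = []
    for start in range(0, len(tokens), step):
        chunks.append(detokenize(tokens[start:start + tokens_per_chunk]))
        # Stop once the current window already covers the last token.
        if start + tokens_per_chunk >= len(tokens):
            break
    return chunks


# Whitespace "tokenizer" used only to keep the example self-contained.
chunks = split_by_tokens("one two three four five", str.split, " ".join, tokens_per_chunk=2)
overlapping = split_by_tokens(
    "one two three four five", str.split, " ".join, tokens_per_chunk=3, overlap=1
)
```

Because the tokenizer is passed in as a pair of callables, the same splitting loop could be reused with any tokenizer that offers encode and decode functions.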

Could perhaps be reviewed by @dev2049

---------

Co-authored-by: Harrison Chase <hw.chase.17@gmail.com>

Raises exception if OutputParsers receive a response with both a valid

action and a final answer

Currently, if an OutputParser receives a response which includes both an

action and a final answer, they return a FinalAnswer object. This allows

the parser to accept responses which propose an action and hallucinate

an answer without the action being parsed or taken by the agent.

This PR changes the logic to:

1. store a variable checking whether a response contains the

`FINAL_ANSWER_ACTION` (this is the easier condition to check).

2. store a variable checking whether the response contains a valid

action

3. if both are present, raise a new exception stating that both are

present

4. if an action is present, return an AgentAction

5. if an answer is present, return an AgentAnswer

6. if neither is present, raise the relevant exception based around the

action format (these have been kept consistent with the prior exception

messages)
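
The steps above can be sketched as a small standalone parser. The regular expression, the `BothPresentError` class, and the dict return values are assumptions made for this illustration; they stand in for the agent classes and exceptions used in LangChain and are not the PR's code.

```python
import re
from typing import Dict

FINAL_ANSWER_ACTION = "Final Answer:"
ACTION_PATTERN = re.compile(
    r"Action\s*:\s*(.*?)\s*Action\s*Input\s*:\s*(.*)", re.DOTALL
)


class BothPresentError(ValueError):
    """Raised when a response contains both an action and a final answer."""


def parse_agent_output(text: str) -> Dict[str, str]:
    # Steps 1 and 2: record which of the two markers are present.
    includes_answer = FINAL_ANSWER_ACTION in text
    action_match = ACTION_PATTERN.search(text)

    # Step 3: both present is treated as an error.
    if action_match and includes_answer:
        raise BothPresentError("Output contains both an action and a final answer")
    # Step 4: only an action.
    if action_match:
        return {
            "type": "action",
            "tool": action_match.group(1).strip(),
            "tool_input": action_match.group(2).strip(),
        }
    # Step 5: only a final answer.
    if includes_answer:
        return {"type": "finish", "output": text.split(FINAL_ANSWER_ACTION)[-1].strip()}
    # Step 6: neither.
    raise ValueError(f"Could not parse LLM output: {text!r}")
```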

Disclaimer:

* Existing mock data included strings which did include an action and an

answer. This might indicate that prioritising returning AgentAnswer was

always correct, and I am patching out desired behaviour? @hwchase17 to

advise. Curious if there are allowed cases where this is not

hallucinating, and we do want the LLM to output an action which isn't

taken.

* I have not passed `send_to_llm` through this new exception

Fixes #5601

## Who can review?

Community members can review the PR once tests pass. Tag

maintainers/contributors who might be interested:

@hwchase17 - project lead

@vowelparrot

All queries to the database are based on the SessionId property; this
change optimizes how Mongo retrieves all messages from a session.

#### Who can review?

Tag maintainers/contributors who might be interested:

@dev2049


Fixes #5638. Retitles "Amazon Bedrock" page to "Bedrock" so that the

Integrations section of the left nav is properly sorted in alphabetical

order.

#### Who can review?

Tag maintainers/contributors who might be interested:


@vowelparrot:

Minor change to the SQL agent:

Tells the agent to introspect the schema of the most relevant tables. I
found this dramatically decreases the chance that the agent wastes
time guessing column names.

Fixes https://github.com/hwchase17/langchain/issues/5067

Verified the following code now works correctly:

```

db = Chroma(persist_directory=index_directory(index_name), embedding_function=embeddings)

retriever = db.as_retriever(search_type="similarity_score_threshold", search_kwargs={"score_threshold": 0.4})

docs = retriever.get_relevant_documents(query)

```

## Improve Error Messaging for APOC Procedure Failure in Neo4jGraph

This commit revises the error message provided when the

'apoc.meta.data()' procedure fails. Previously, the message simply

instructed the user to install the APOC plugin in Neo4j. The new error

message is more specific.

Also removed an unnecessary newline in the Cypher statement variable:

`node_properties_query`.

Fixes #5545

## Who can review?

- @vowelparrot

- @dev2049

This commit addresses a ValueError occurring when the YoutubeLoader

class tries to add datetime metadata from a YouTube video's publish

date. The error was happening because the ChromaDB metadata validation

only accepts str, int, or float data types.

In the `_get_video_info` method of the `YoutubeLoader` class, the

publish date retrieved from the YouTube video was of datetime type. This

commit fixes the issue by converting the datetime object to a string

before adding it to the metadata dictionary.

Additionally, this commit introduces error handling in the

`_get_video_info` method to ensure that all metadata fields have valid

values. If a metadata field is found to be None, a default value is

assigned. This prevents potential errors during metadata validation when

metadata fields are None.

The file modified in this commit is youtube.py.
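
The two fixes described above can be sketched as one small function. `sanitize_metadata`, the `"Unknown"` default, and the date format are assumptions chosen for this illustration and may differ from what `youtube.py` actually does.

```python
from datetime import datetime
from typing import Any, Dict


def sanitize_metadata(raw: Dict[str, Any], default: str = "Unknown") -> Dict[str, Any]:
    """Replace None values and convert datetimes to strings."""
    clean: Dict[str, Any] = {}
    for key, value in raw.items():
        if value is None:
            # Missing fields get a placeholder so validation does not fail.
            clean[key] = default
        elif isinstance(value, datetime):
            # Metadata validation accepts str, int, or float, not datetime.
            clean[key] = value.strftime("%Y-%m-%d %H:%M:%S")
        else:
            clean[key] = value
    return clean


video_info = sanitize_metadata(
    {"title": None, "publish_date": datetime(2023, 6, 1, 12, 0, 0), "view_count": 10}
)
```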


---------

Co-authored-by: Harrison Chase <hw.chase.17@gmail.com>

# refactor BaseStringMessagePromptTemplate from_template method

Refactor the `from_template` method of the

`BaseStringMessagePromptTemplate` class to allow passing keyword

arguments to the `from_template` method of `PromptTemplate`.

Enable the usage of arguments like `template_format`.

In my scenario, I intend to utilize Jinja2 for formatting the human

message prompt in the chat template.

## Who can review?

Community members can review the PR once tests pass. Tag

maintainers/contributors who might be interested:


---------

Co-authored-by: Dev 2049 <dev.dev2049@gmail.com>

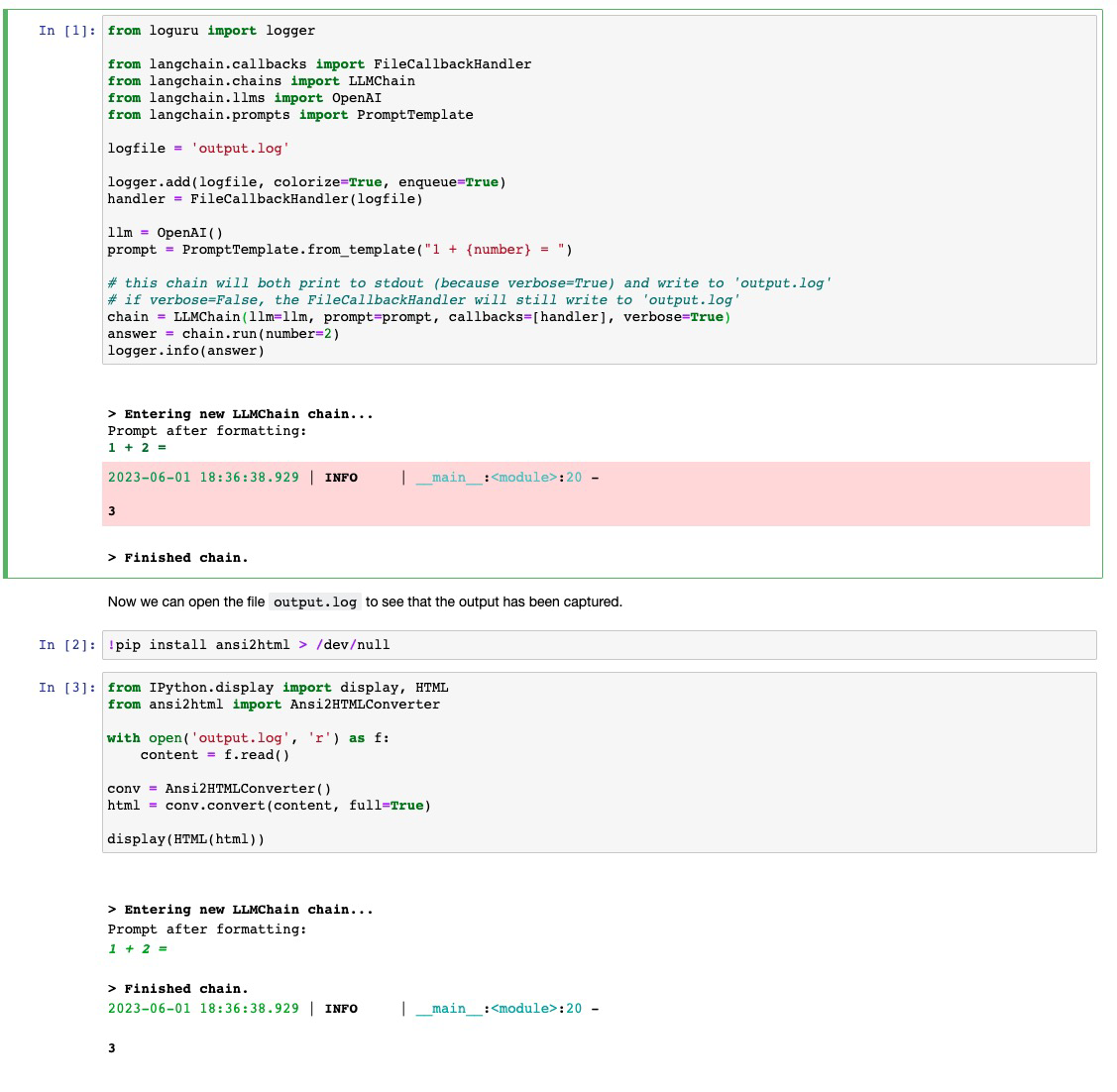

# like [StdoutCallbackHandler](https://github.com/hwchase17/langchain/blob/master/langchain/callbacks/stdout.py), but writes to a file

When running experiments I have found myself wanting to log the outputs

of my chains in a more lightweight way than using WandB tracing. This PR

contributes a callback handler that writes to file what

`StdoutCallbackHandler` would print.


## Example Notebook


See the included `filecallbackhandler.ipynb` notebook for usage. Would

it be better to include this notebook under `modules/callbacks` or under

`integrations/`?

## Who can review?

Community members can review the PR once tests pass. Tag

maintainers/contributors who might be interested:

@agola11


Created a fix for #5475.

Currently, PGVector has no function that returns an instance of an
existing store; `from_documents` always adds embeddings and then
returns the store. This fix adds a function that returns the instance
of an existing store.

Also changed the Jupyter example for PGVector to show how to use the
function.

Fixes #5475


#### Who can review?

@dev2049

@hwchase17


---------

Co-authored-by: rajib76 <rajib76@yahoo.com>

Co-authored-by: Dev 2049 <dev.dev2049@gmail.com>

This PR corrects a minor typo in the Momento chat message history

notebook and also expands the title from "Momento" to "Momento Chat

History", in line with other chat history storage providers.


#### Who can review?

cc @dev2049 who reviewed the original integration

Fixes the pgvector Python example notebook: one of the variables was

not referencing anything.

## Who can review?

Community members can review the PR once tests pass. Tag

maintainers/contributors who might be interested:

VectorStores / Retrievers / Memory

- @dev2049

# Ensure parameters are used by vertexai chat models (PaLM2)

The current version of the google aiplatform contains a bug where

parameters for a chat model are not used as intended.

See https://github.com/googleapis/python-aiplatform/issues/2263

Params can be passed both to start_chat() and send_message(); however,

the parameters passed to start_chat() will not be used if send_message()

is called without the overrides. This is due to the defaults in

send_message() being global values rather than None (there is code in

send_message() which would use the params from start_chat() if the param

passed to send_message() evaluates to False, but that won't happen as

the defaults are global values).
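
The problem can be illustrated with two toy classes. They are hypothetical and only imitate the shape of the described behaviour; the default value `128` and the names `BuggySession` and `FixedSession` are made up and do not come from the Google library.

```python
from typing import Optional

DEFAULT_MAX_OUTPUT_TOKENS = 128  # hypothetical module-level default


class BuggySession:
    """The session-level value is ignored because the default is never falsy."""

    def __init__(self, max_output_tokens: Optional[int] = None):
        self._max_output_tokens = max_output_tokens

    def send_message(
        self, message: str, max_output_tokens: int = DEFAULT_MAX_OUTPUT_TOKENS
    ) -> int:
        # The fallback to the session value only triggers for falsy input,
        # which never happens when the default itself is a real value.
        return max_output_tokens or self._max_output_tokens


class FixedSession(BuggySession):
    """Using None as the default lets the session-level value take effect."""

    def send_message(self, message: str, max_output_tokens: Optional[int] = None) -> int:
        if max_output_tokens is None:
            max_output_tokens = self._max_output_tokens
        if max_output_tokens is None:
            max_output_tokens = DEFAULT_MAX_OUTPUT_TOKENS
        return max_output_tokens


buggy_value = BuggySession(max_output_tokens=512).send_message("hi")
fixed_value = FixedSession(max_output_tokens=512).send_message("hi")
```

Each `send_message` here returns the effective setting instead of a model response, so the difference is directly visible. Until the defaults change upstream, passing the parameters explicitly on every call avoids the problem.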

Fixes #5531

@hwchase17

@agola11

# Make FinalStreamingStdOutCallbackHandler more robust by ignoring new lines & white spaces

`FinalStreamingStdOutCallbackHandler` doesn't work out of the box with

`ChatOpenAI`, as its output is tokenized slightly differently than that of `OpenAI`. The

response of `OpenAI` contains the tokens `["\nFinal", " Answer", ":"]`

while `ChatOpenAI` contains `["Final", " Answer", ":"]`.

This PR makes `FinalStreamingStdOutCallbackHandler` more robust by

ignoring new lines & white spaces when determining if the answer prefix

has been reached.
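
The comparison can be sketched as follows. `prefix_reached` and its `strip_tokens` switch are hypothetical names chosen for this illustration and may not match the handler's real attributes.

```python
from typing import List, Sequence

ANSWER_PREFIX_TOKENS = ["Final", " Answer", ":"]


def normalize(tokens: Sequence[str]) -> List[str]:
    """Strip surrounding whitespace, including newlines, from each token."""
    return [token.strip() for token in tokens]


def prefix_reached(
    last_tokens: Sequence[str],
    prefix_tokens: Sequence[str] = ANSWER_PREFIX_TOKENS,
    strip_tokens: bool = True,
) -> bool:
    """Check whether the most recent tokens match the answer prefix."""
    if strip_tokens:
        return normalize(last_tokens) == normalize(prefix_tokens)
    return list(last_tokens) == list(prefix_tokens)


openai_tokens = ["\nFinal", " Answer", ":"]
chat_tokens = ["Final", " Answer", ":"]
```

With stripping enabled, both token sequences from the description above are recognised as the answer prefix; without it, only the `ChatOpenAI` variant matches this prefix list.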

Fixes #5433

## Who can review?

Community members can review the PR once tests pass. Tag

maintainers/contributors who might be interested:

Tracing / Callbacks

- @agola11

Twitter: [@UmerHAdil](https://twitter.com/@UmerHAdil) | Discord:

RicChilligerDude#7589

# Adds the option to pass the original prompt into the AgentExecutor for PlanAndExecute agents

This PR allows the user to optionally specify that they wish for the

original prompt/objective to be passed into the Executor agent used by

the PlanAndExecute agent. This solves a potential problem where the plan

is formed referring to some context contained in the original prompt,

but which is not included in the current prompt.

Currently, the prompt format given to the Executor is:

```

System: Respond to the human as helpfully and accurately as possible. You have access to the following tools:

<Tool and Action Description>

<Output Format Description>

Begin! Reminder to ALWAYS respond with a valid json blob of a single action. Use tools if necessary. Respond directly if appropriate. Format is Action:```$JSON_BLOB```then Observation:.

Thought:

Human: <Previous steps>

<Current step>

```

This PR changes the final part after `Human:` to optionally insert the

objective:

```

Human: <objective>

<Previous steps>

<Current step>

```
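
The optional insertion can be sketched as a small formatting function. `format_human_message`, its parameter names, and the blank-line separator are assumptions made for this illustration rather than the PR's actual implementation.

```python
def format_human_message(
    previous_steps: str,
    current_step: str,
    objective: str = "",
    include_objective: bool = False,
) -> str:
    """Build the text after 'Human:', optionally starting with the objective."""
    parts = []
    if include_objective and objective:
        parts.append(objective)
    parts.extend([previous_steps, current_step])
    return "\n\n".join(parts)


with_objective = format_human_message(
    "Step 1 done",
    "Step 2",
    objective="Inspect the database at the given path",
    include_objective=True,
)
without_objective = format_human_message("Step 1 done", "Step 2")
```

Defaulting the flag to `False` keeps the message identical to the current format unless the caller opts in.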

I have given a specific example in #5400 where the context of a database

path is lost, since the plan refers to the "given path".

The PR has been linted and formatted. So that existing behaviour is not

changed, I have defaulted the argument to `False` and added it as the

last argument in the signature, so it does not cause issues for any

users passing args positionally as opposed to using keywords.

Happy to take any feedback or make required changes!

Fixes #5400

## Who can review?

Community members can review the PR once tests pass. Tag

maintainers/contributors who might be interested:

@vowelparrot

---------

Co-authored-by: Nathan Azrak <nathan.azrak@gmail.com>

# Implements support for Personal Access Token Authentication in the ConfluenceLoader

Fixes #5191

Implements a new optional parameter for the ConfluenceLoader: `token`.

This allows the use of personal access authentication when using the

on-prem server version of Confluence.

## Who can review?

Community members can review the PR once tests pass. Tag

maintainers/contributors who might be interested:

@eyurtsev @Jflick58

Twitter Handle: felipe_yyc

---------

Co-authored-by: Felipe <feferreira@ea.com>

Co-authored-by: Dev 2049 <dev.dev2049@gmail.com>

# Update confluence.py to return spaces between elements like headers and links.

Please see

https://stackoverflow.com/questions/48913975/how-to-return-nicely-formatted-text-in-beautifulsoup4-when-html-text-is-across-m

Given:

```html

<address>

183 Main St<br>East Copper<br>Massachusetts<br>U S A<br>

MA 01516-113

</address>

```

The document loader currently returns:

```

'183 Main StEast CopperMassachusettsU S A MA 01516-113'

```

After this change, the document loader will return:

```

183 Main St East Copper Massachusetts U S A MA 01516-113

```
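
The effect of joining text nodes with a separator can be reproduced with the standard library alone. This sketch uses `html.parser` instead of BeautifulSoup so that it has no dependencies; `TextCollector` and `html_to_text` are hypothetical names. With BeautifulSoup, the comparable call would be `get_text(" ", strip=True)`.

```python
from html.parser import HTMLParser
from typing import List


class TextCollector(HTMLParser):
    """Collect the non-empty text nodes of a document."""

    def __init__(self) -> None:
        super().__init__()
        self.chunks: List[str] = []

    def handle_data(self, data: str) -> None:
        stripped = data.strip()
        if stripped:
            self.chunks.append(stripped)


def html_to_text(html: str, separator: str = " ") -> str:
    """Join all text nodes with the separator so adjacent elements stay apart."""
    collector = TextCollector()
    collector.feed(html)
    collector.close()
    return separator.join(collector.chunks)


html = (
    "<address>\n"
    "183 Main St<br>East Copper<br>Massachusetts<br>U S A<br>\n"
    "MA 01516-113\n"
    "</address>"
)
text = html_to_text(html)
```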

@eyurtsev would you prefer this to be an option that can be passed in?

# Reduce DB query error rate

When the SQL agent built from `SQLDatabaseToolkit` queries data, it is
prone to errors in field names and often queries fields that do not
exist in the database tables. The existing prompt does not make the
agent aware that the fields it queried are the problem. The prompt
therefore needs to be improved so that the agent realizes it has queried
non-existent fields and first uses `schema_sql_db` (that is,
`ListSQLDatabaseTool`) to look up the actual fields of the table, and
then uses `QuerySQLDatabaseTool` to run the query.

Here is a demo from my project that shows the problem.

**Original Agent**

```python

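# Import paths as used by LangChain at the time of this PR.
from langchain.agents import create_sql_agent
from langchain.agents.agent_toolkits import SQLDatabaseToolkit
from langchain.llms import OpenAI
from langchain.sql_database import SQLDatabase


def create_mysql_kit():
    # The connection string is redacted in the original report.
    db = SQLDatabase.from_uri("mysql+pymysql://xxxxxxx")
    llm = OpenAI(temperature=0)
    toolkit = SQLDatabaseToolkit(db=db, llm=llm)
    agent_executor = create_sql_agent(
        llm=OpenAI(temperature=0),
        toolkit=toolkit,
        verbose=True,
    )
    agent_executor.run("Who are the users of sysuser in this system? Tell me the username of all users")


if __name__ == '__main__':
    create_mysql_kit()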

```

**original output**

```

> Entering new AgentExecutor chain...

Action: list_tables_sql_db

Action Input: ""

Observation: app_sysrole_menus, app_bimfacemodel, app_project_users, app_measuringpointdata, auth_user, auth_user_groups, django_apscheduler_djangojobexecution, app_project, app_elementpoint, django_apscheduler_djangojob, django_content_type, app_sysrole, django_admin_log, app_bimfaceaccount, app_measuringpoint_warning_thresholds, app_measuringpoint, app_company, auth_group_permissions, app_sysuser, app_sysuser_companies, app_sysmenu, app_datawarningthreshold, auth_group, auth_permission, app_datawarningrecord, auth_user_user_permissions, app_bimfaceaccount_bimface_models, django_migrations, app_measuringitem, django_session

Thought: I should query the app_sysuser table to get the users in the system.

Action: query_sql_db

Action Input: SELECT username FROM app_sysuser LIMIT 10;

Observation: Error: (pymysql.err.OperationalError) (1054, "Unknown column 'username' in 'field list'")

[SQL: SELECT username FROM app_sysuser LIMIT 10;]

(Background on this error at: https://sqlalche.me/e/20/e3q8)

Thought: I should double check my query before executing it.

Action: query_checker_sql_db

Action Input: SELECT username FROM app_sysuser LIMIT 10;

Observation:

SELECT username FROM app_sysuser LIMIT 10;

Thought: The query looks correct, so I should execute it.

Action: query_sql_db

Action Input: SELECT username FROM app_sysuser LIMIT 10;

Observation: Error: (pymysql.err.OperationalError) (1054, "Unknown column 'username' in 'field list'")

[SQL: SELECT username FROM app_sysuser LIMIT 10;]

(Background on this error at: https://sqlalche.me/e/20/e3q8)

Thought: I should double check my query before executing it.

Action: query_checker_sql_db

Action Input: SELECT name FROM app_sysuser LIMIT 10;

Observation:

SELECT name FROM app_sysuser LIMIT 10;

Thought: The query looks correct, so I should execute it.

Action: query_sql_db

Action Input: SELECT name FROM app_sysuser LIMIT 10;

Observation: Error: (pymysql.err.OperationalError) (1054, "Unknown column 'name' in 'field list'")

[SQL: SELECT name FROM app_sysuser LIMIT 10;]

(Background on this error at: https://sqlalche.me/e/20/e3q8)

Thought: I should double check my query before executing it.

Action: query_checker_sql_db

Action Input: SELECT id, username FROM app_sysuser LIMIT 10;

Observation:

SELECT id, username FROM app_sysuser LIMIT 10;

Thought: The query looks correct, so I should execute it.

Action: query_sql_db

Action Input: SELECT id, username FROM app_sysuser LIMIT 10;

Observation: Error: (pymysql.err.OperationalError) (1054, "Unknown column 'id' in 'field list'")

[SQL: SELECT id, username FROM app_sysuser LIMIT 10;]

(Background on this error at: https://sqlalche.me/e/20/e3q8)

Thought: I should double check my query before executing it.

Action: query_checker_sql_db

Action Input: SELECT user_id, username FROM app_sysuser LIMIT 10;

Observation:

SELECT user_id, username FROM app_sysuser WHERE user_id IS NOT NULL AND user_id BETWEEN 0 AND 10 UNION ALL SELECT user_id, username FROM app_sysuser WHERE username IS NOT NULL ORDER BY user_id ASC LIMIT 10;

Thought: The query looks correct, so I should execute it.

Action: query_sql_db

Action Input: SELECT user_id, username FROM app_sysuser WHERE user_id IS NOT NULL AND user_id BETWEEN 0 AND 10 UNION ALL SELECT user_id, username FROM app_sysuser WHERE username IS NOT NULL ORDER BY user_id ASC LIMIT 10;

Observation: Error: (pymysql.err.OperationalError) (1054, "Unknown column 'username' in 'field list'")

[SQL: SELECT user_id, username FROM app_sysuser WHERE user_id IS NOT NULL AND user_id BETWEEN 0 AND 10 UNION ALL SELECT user_id, username FROM app_sysuser WHERE username IS NOT NULL ORDER BY user_id ASC LIMIT 10;]

(Background on this error at: https://sqlalche.me/e/20/e3q8)

Thought: I should double check my query before executing it.

Action: query_checker_sql_db

Action Input: SELECT user_id, user_name FROM app_sysuser LIMIT 10;

Observation:

SELECT user_id, user_name FROM app_sysuser WHERE user_id NOT IN (NULL) AND user_name BETWEEN 'A' AND 'Z' UNION ALL SELECT user_id, user_name FROM app_sysuser WHERE user_id IS NOT NULL AND user_name LIKE '%a%' LIMIT 10;

Thought: The query looks correct, so I should execute it.

Action: query_sql_db

Action Input: SELECT user_id, user_name FROM app_sysuser WHERE user_id NOT IN (NULL) AND user_name BETWEEN 'A' AND 'Z' UNION ALL SELECT user_id, user_name FROM app_sysuser WHERE user_id IS NOT NULL AND user_name LIKE '%a%' LIMIT 10;

Observation: []

Thought: I now know the final answer.

Final Answer: There are no users in the sysuser table.

> Finished chain.

Process finished with exit code 0

```

As you can see, the agent keeps using incorrect SQL to query the data. In fact, the user name column of my `app_sysuser` table is `user_name`, not `username` as the agent assumes.

**Optimized agent output**

```

> Entering new AgentExecutor chain...

Action: list_tables_sql_db

Action Input: ""

Observation: app_project_users, django_content_type, app_measuringpoint_warning_thresholds, app_bimfaceaccount_bimface_models, django_session, app_sysuser, django_migrations, app_bimfacemodel, app_elementpoint, app_measuringpoint, django_apscheduler_djangojobexecution, auth_permission, app_sysuser_companies, app_measuringpointdata, app_measuringitem, app_sysrole_menus, django_admin_log, auth_group, django_apscheduler_djangojob, app_sysmenu, app_project, app_bimfaceaccount, app_datawarningthreshold, app_datawarningrecord, auth_user_groups, auth_user_user_permissions, auth_group_permissions, auth_user, app_company, app_sysrole

Thought: I should query the app_sysuser table to get the usernames of all the users.

Action: query_sql_db

Action Input: SELECT username FROM app_sysuser

Observation: Error: (pymysql.err.OperationalError) (1054, "Unknown column 'username' in 'field list'")

[SQL: SELECT username FROM app_sysuser]

(Background on this error at: https://sqlalche.me/e/20/e3q8)

Thought: I should use the schema_sql_db tool to get the correct table fields.

Action: schema_sql_db

Action Input: app_sysuser

Observation:

CREATE TABLE app_sysuser (

user_id INTEGER NOT NULL AUTO_INCREMENT,

user_account VARCHAR(50) COLLATE utf8mb4_general_ci NOT NULL,

user_password VARCHAR(255) COLLATE utf8mb4_general_ci NOT NULL,

user_name VARCHAR(50) COLLATE utf8mb4_general_ci NOT NULL,

user_avatar VARCHAR(255) COLLATE utf8mb4_general_ci,

user_email VARCHAR(254) COLLATE utf8mb4_general_ci,

user_phone VARCHAR(20) COLLATE utf8mb4_general_ci,

user_create_time DATETIME(6) NOT NULL,

user_update_time DATETIME(6) NOT NULL,

user_status SMALLINT,

role_id_id INTEGER,

PRIMARY KEY (user_id),

CONSTRAINT app_sysuser_role_id_id_51b50f39_fk_app_sysrole_role_id FOREIGN KEY(role_id_id) REFERENCES app_sysrole (role_id)

)COLLATE utf8mb4_general_ci DEFAULT CHARSET=utf8mb4 ENGINE=InnoDB

/*

3 rows from app_sysuser table:

user_id user_account user_password user_name user_avatar user_email user_phone user_create_time user_update_time user_status role_id_id

xxxxxxxxxxxxxx

*/

Thought: I should query the app_sysuser table to get the usernames of all the users.

Action: query_sql_db

Action Input: SELECT user_account FROM app_sysuser LIMIT 10

Observation: [('baiyun',), ('eatrice',), ('lisi',), ('pingxiang',), ('wangwu',), ('zeeland',), ('zsj',), ('zzw',)]

Thought: I now know the final answer

Final Answer: The usernames of the users in the sysuser table are baiyun, eatrice, lisi, pingxiang, wangwu, zeeland, zsj, and zzw.

> Finished chain.

Process finished with exit code 0

```

I have tested about 10 related prompts, and they all work properly, with a much lower error rate than before.
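The recovery step visible in the optimized log (a failed query followed by a schema lookup) can be illustrated outside LangChain. The following is only a minimal sketch using the standard-library `sqlite3` module rather than MySQL; the helper names `column_names` and `query_with_schema_fallback` and the sample row are hypothetical and are not part of this PR, which changes how the agent is prompted rather than adding such functions.

```python
import sqlite3


def column_names(conn, table):
    # PRAGMA table_info yields one row per column; index 1 holds the column name.
    return [row[1] for row in conn.execute(f"PRAGMA table_info({table})")]


def query_with_schema_fallback(conn, table, guessed_column):
    """Try the guessed column; on failure, return the real column list instead."""
    try:
        rows = conn.execute(f"SELECT {guessed_column} FROM {table}").fetchall()
        return rows, None
    except sqlite3.OperationalError:
        # Analogous to the agent calling schema_sql_db after an "Unknown column" error.
        return None, column_names(conn, table)


# Hypothetical in-memory table that mimics a few columns of app_sysuser.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE app_sysuser ("
    "user_id INTEGER PRIMARY KEY, user_account TEXT, user_name TEXT)"
)
conn.execute(
    "INSERT INTO app_sysuser (user_account, user_name) VALUES ('zeeland', 'Zeeland')"
)

rows, schema = query_with_schema_fallback(conn, "app_sysuser", "username")
retry_rows, _ = query_with_schema_fallback(conn, "app_sysuser", "user_account")
```

Here the first call fails on the guessed `username` column and returns the actual column list, which is the information the agent needs before retrying with a valid column.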

## Who can review?

@vowelparrot

---------

Co-authored-by: Dev 2049 <dev.dev2049@gmail.com>

# minor refactor of GenerativeAgentMemory


- Refactor `format_memories_detail` to be more reusable
- Modify the prompts for getting topics for reflection and for generating insights
- Update `characters.ipynb` to reflect the changes


## Who can review?


@vowelparrot

@hwchase17

@dev2049

# docs: modules pages simplified

Fixed issue #5627.

Merged several repetitive sections in the `modules` pages. Some passages that were hard to understand were also simplified.

## Who can review?

@hwchase17

@dev2049

# Fixed multi input prompt for MapReduceChain

Added `kwargs` support for the inner chains of `MapReduceChain` via the `from_params` method.

Currently, initialising a `MapReduceChain` with the `from_params` method doesn't work if the prompt has multiple inputs. This happens because it uses `StuffDocumentsChain` and `MapReduceDocumentsChain` underneath, both of which require `document_variable_name` to be specified if the `prompt` of their `llm_chain` has more than one input.

With this PR, I have added support for passing their respective `kwargs` via the `from_params` method.
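The constraint described above can be sketched in plain Python. This is a simplified illustration, not the library's code: the function name `resolve_document_variable_name` is hypothetical, and it only models the rule that a prompt with a single input can infer the document variable, while a prompt with several inputs needs it passed explicitly.

```python
from typing import List, Optional


def resolve_document_variable_name(
    input_variables: List[str],
    document_variable_name: Optional[str] = None,
) -> str:
    """Pick the prompt variable that receives the documents."""
    if document_variable_name is None:
        # With exactly one input there is no ambiguity, so it can be inferred.
        if len(input_variables) == 1:
            return input_variables[0]
        raise ValueError(
            "document_variable_name must be provided when the prompt "
            "has more than one input variable"
        )
    if document_variable_name not in input_variables:
        raise ValueError(
            f"{document_variable_name!r} is not one of the prompt inputs "
            f"{input_variables}"
        )
    return document_variable_name


# Single-input prompt: the variable is inferred.
single = resolve_document_variable_name(["text"])

# Multi-input prompt: the name has to be supplied, which is what the
# forwarded kwargs make possible for the inner chains.
multi = resolve_document_variable_name(["text", "question"], "text")
```

Without a way to forward the name to the inner chains, the multi-input case always ends in the `ValueError` branch, which is the failure this PR addresses.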

## Fixes https://github.com/hwchase17/langchain/issues/4752

## Who can review?

@dev2049 @hwchase17 @agola11

---------

Co-authored-by: imeckr <chandanroutray2012@gmail.com>

# Unstructured Excel Loader

Adds an `UnstructuredExcelLoader` class for `.xlsx` and `.xls` files.

Works with `unstructured>=0.6.7`. A plain text representation of the

Excel file will be available under the `page_content` attribute in the

doc. If you use the loader in `"elements"` mode, an HTML representation

of the Excel file will be available under the `text_as_html` metadata

key. Each sheet in the Excel document is its own document.

### Testing

```python

from langchain.document_loaders import UnstructuredExcelLoader

loader = UnstructuredExcelLoader(
    "example_data/stanley-cups.xlsx",
    mode="elements",
)