**Description:**

Modified `user_name` to `username` to conform with the inputs expected by `TelegramChatApiLoader`.

**Issue:**

Current code fails in langchain-community 0.0.24

<loader = TelegramChatApiLoader(

chat_entity="<CHAT_URL>", # recommended to use Entity here

api_hash="<API HASH >",

api_id="<API_ID>",

user_name="", # needed only for caching the session.

)>
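The failure mode can be illustrated without Telegram credentials. The sketch below uses a hypothetical stand-in class, `FakeTelegramLoader`, whose constructor is assumed to accept `username` (not `user_name`), as the description states. It is not the real loader, and `try_build` is only a helper for the illustration.

```python
class FakeTelegramLoader:
    """Hypothetical stand-in; only the keyword names matter here."""

    def __init__(self, chat_entity=None, api_id=None, api_hash=None, username=None):
        self.chat_entity = chat_entity
        self.api_id = api_id
        self.api_hash = api_hash
        self.username = username


def try_build(**kwargs):
    """Return the loader, or the TypeError message if a keyword is rejected."""
    try:
        return FakeTelegramLoader(**kwargs)
    except TypeError as exc:
        return str(exc)


# Old keyword: rejected because the constructor has no `user_name` parameter.
old_style = try_build(
    chat_entity="<CHAT_URL>", api_id="<API_ID>", api_hash="<API HASH>", user_name=""
)

# Renamed keyword: accepted.
new_style = try_build(
    chat_entity="<CHAT_URL>", api_id="<API_ID>", api_hash="<API HASH>", username=""
)
```

Under that assumption, `old_style` holds an error message naming `user_name`, while `new_style` is a constructed loader object.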

## **Description**

Migrate the `MongoDBChatMessageHistory` to the managed

`langchain-mongodb` partner-package

## **Dependencies**

None

## **Twitter handle**

@mongodb

## **tests and docs**

- [x] Migrate existing integration test

- [x] ~Convert existing integration test to a unit test~ Creation is out of scope for this ticket

- [x] ~Considering delaying work until #17470 merges to leverage the `MockCollection` object.~

- [x] **Lint and test**: Run `make format`, `make lint` and `make test`

from the root of the package(s) you've modified. See contribution

guidelines for more: https://python.langchain.com/docs/contributing/

---------

Co-authored-by: Erick Friis <erick@langchain.dev>

# Description

- **Description:** Adding MongoDB LLM Caching Layer abstraction

- **Issue:** N/A

- **Dependencies:** None

- **Twitter handle:** @mongodb

Checklist:

- [x] PR title: Please title your PR "package: description", where

"package" is whichever of langchain, community, core, experimental, etc.

is being modified. Use "docs: ..." for purely docs changes, "templates:

..." for template changes, "infra: ..." for CI changes.

- Example: "community: add foobar LLM"

- [x] PR Message (above)

- [x] Pass lint and test: Run `make format`, `make lint` and `make test`

from the root of the package(s) you've modified to check that you're

passing lint and testing. See contribution guidelines for more

information on how to write/run tests, lint, etc:

https://python.langchain.com/docs/contributing/

- [ ] Add tests and docs: If you're adding a new integration, please

include

1. a test for the integration, preferably unit tests that do not rely on

network access,

2. an example notebook showing its use. It lives in

`docs/docs/integrations` directory.

Additional guidelines:

- Make sure optional dependencies are imported within a function.

- Please do not add dependencies to pyproject.toml files (even optional

ones) unless they are required for unit tests.

- Most PRs should not touch more than one package.

- Changes should be backwards compatible.

- If you are adding something to community, do not re-import it in

langchain.

If no one reviews your PR within a few days, please @-mention one of

@baskaryan, @efriis, @eyurtsev, @hwchase17.

---------

Co-authored-by: Jib <jib@byblack.us>

- **Description:**

This PR fixes some issues in the Jupyter notebook for the VectorStore

"SAP HANA Cloud Vector Engine":

* Slight textual adaptations

* Fix of wrong column name VEC_META (was: VEC_METADATA)

- **Issue:** N/A

- **Dependencies:** no new dependencies added

- **Twitter handle:** @sapopensource

path to notebook:

`docs/docs/integrations/vectorstores/hanavector.ipynb`

## PR title

Docs: Updated callbacks/index.mdx adding example on runnable methods

## PR message

- **Description:** Updated callbacks/index.mdx adding an example on how

to pass callbacks to the runnable methods (invoke, batch, ...)

- **Issue:** #16379

- **Dependencies:** None

- **Description:** finishes adding the you.com functionality including:

- add async functions to utility and retriever

- add the You.com Tool

- add async testing for utility, retriever, and tool

- add a tool integration notebook page

- **Dependencies:** any dependencies required for this change

- **Twitter handle:** @scottnath

Description:

This pull request introduces several enhancements for Azure Cosmos

Vector DB, primarily focused on improving caching and search

capabilities using Azure Cosmos MongoDB vCore Vector DB. Here's a

summary of the changes:

- **AzureCosmosDBSemanticCache**: Added a new cache implementation

called AzureCosmosDBSemanticCache, which utilizes Azure Cosmos MongoDB

vCore Vector DB for efficient caching of semantic data. Added

comprehensive test cases for AzureCosmosDBSemanticCache to ensure its

correctness and robustness. These tests cover various scenarios and edge

cases to validate the cache's behavior.

- **HNSW Vector Search**: Added HNSW vector search functionality in the

CosmosDB Vector Search module. This enhancement enables more efficient

and accurate vector searches by utilizing the HNSW (Hierarchical

Navigable Small World) algorithm. Added corresponding test cases to

validate the HNSW vector search functionality in both

AzureCosmosDBSemanticCache and AzureCosmosDBVectorSearch. These tests

ensure the correctness and performance of the HNSW search algorithm.

- **LLM Caching Notebook** - The notebook now includes a comprehensive

example showcasing the usage of the AzureCosmosDBSemanticCache. This

example highlights how the cache can be employed to efficiently store

and retrieve semantic data. Additionally, the example provides default

values for all parameters used within the AzureCosmosDBSemanticCache,

ensuring clarity and ease of understanding for users who are new to the

cache implementation.
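As a rough illustration of what a semantic cache does, the sketch below stores responses keyed by an embedding and returns a hit only when a new prompt is similar enough. Everything in it is an assumption for demonstration: `toy_embed` is a letter-count stand-in for a real embedding model, `ToySemanticCache` and `score_threshold` are hypothetical names, and the linear scan replaces the vector index that Azure Cosmos DB would provide. It is not the `AzureCosmosDBSemanticCache` implementation.

```python
import math


def toy_embed(text):
    """Hypothetical embedding: letter counts. A real setup would call a model."""
    vec = [0.0] * 26
    for ch in text.lower():
        if "a" <= ch <= "z":
            vec[ord(ch) - ord("a")] += 1.0
    return vec


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


class ToySemanticCache:
    """In-memory sketch: look up by similarity instead of exact string match."""

    def __init__(self, embed, score_threshold=0.9):
        self.embed = embed
        self.score_threshold = score_threshold
        self.entries = []  # list of (embedding, response) pairs

    def update(self, prompt, response):
        self.entries.append((self.embed(prompt), response))

    def lookup(self, prompt):
        query = self.embed(prompt)
        best_score, best_response = 0.0, None
        for emb, response in self.entries:
            score = cosine(query, emb)
            if score > best_score:
                best_score, best_response = score, response
        # Only treat it as a hit when the best match clears the threshold.
        return best_response if best_score >= self.score_threshold else None


cache = ToySemanticCache(toy_embed, score_threshold=0.9)
cache.update("Tell me a joke", "Why did the chicken cross the road?")
hit = cache.lookup("tell me a joke!")  # same letters, so similarity is ~1.0
miss = cache.lookup("What is the capital of France?")  # dissimilar prompt
```

The threshold is the main design knob: a higher value reduces false hits at the cost of more cache misses.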

@hwchase17, @baskaryan, @eyurtsev

* **Description:** adds `LlamafileEmbeddings` class implementation for

generating embeddings using

[llamafile](https://github.com/Mozilla-Ocho/llamafile)-based models.

Includes related unit tests and notebook showing example usage.

* **Issue:** N/A

* **Dependencies:** N/A

**Description:**

(a) Update to the module import path to reflect the splitting up of

langchain into separate packages

(b) Update to the documentation to include the new calling method

(invoke)

**Description:**

The URL of the data to index, specified to `WebBaseLoader` to import is

incorrect, causing the `langsmith_search` retriever to return a `404:

NOT_FOUND`.

Incorrect URL: https://docs.smith.langchain.com/overview

Correct URL: https://docs.smith.langchain.com

**Issue:**

This commit corrects the URL and prevents the LangServe Playground from

returning an error from its inability to use the retriever when

inquiring, "how can langsmith help with testing?".

**Dependencies:**

None.

**Twitter Handle:**

@ryanmeinzer

In this commit we update the documentation for Google El Carro for Oracle Workloads. We amend the documentation in the Google Providers page to use the correct name which is El Carro for Oracle Workloads. We also add changes to the document_loaders and memory pages to reflect changes we made in our repo.

- **Description**:

[`bigdl-llm`](https://github.com/intel-analytics/BigDL) is a library for

running LLM on Intel XPU (from Laptop to GPU to Cloud) using

INT4/FP4/INT8/FP8 with very low latency (for any PyTorch model). This PR

adds bigdl-llm integrations to langchain.

- **Issue**: NA

- **Dependencies**: `bigdl-llm` library

- **Contribution maintainer**: @shane-huang

Examples added:

- docs/docs/integrations/llms/bigdl.ipynb

The Nvidia provider page is missing a Triton Inference Server package reference.

Changes:

- added the Triton Inference Server reference

- copied the example notebook from the package into the doc files.

- added the Triton Inference Server description and links, the link to

the above example notebook

- formatted the page for consistency

NOTE:

It seems that the [example

notebook](https://github.com/langchain-ai/langchain/blob/master/libs/partners/nvidia-trt/docs/llms.ipynb)

was originally created in the wrong place. It should be in the LangChain

docs

[here](https://github.com/langchain-ai/langchain/tree/master/docs/docs/integrations/llms).

So, I've created a copy of this example. The original example is still

in the nvidia-trt package.

This PR migrates the existing MongoDBAtlasVectorSearch abstraction from

the `langchain_community` section to the partners package section of the

codebase.

- [x] Run the partner package script as advised in the partner-packages

documentation.

- [x] Add Unit Tests

- [x] Migrate Integration Tests

- [x] Refactor `MongoDBAtlasVectorStore` (autogenerated) to

`MongoDBAtlasVectorSearch`

- [x] ~Remove~ deprecate the old `langchain_community` VectorStore

references.

## Additional Callouts

- Implemented the `delete` method

- Included any missing async function implementations

- `amax_marginal_relevance_search_by_vector`

- `adelete`

- Added new Unit Tests that test for functionality of

`MongoDBVectorSearch` methods

- Removed [`del

res[self._embedding_key]`](e0c81e1cb0/libs/community/langchain_community/vectorstores/mongodb_atlas.py (L218))

in `_similarity_search_with_score` function as it would make the

`maximal_marginal_relevance` function fail otherwise. The `Document`

needs to store the embedding key in metadata to work.
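To show why the embedding has to remain on each result, here is a minimal pure-Python sketch of maximal marginal relevance. It assumes each result is a plain dict with the vector stored under `metadata[embedding_key]`; the dict layout, the `unit` helper, and the toy vectors are hypothetical and do not reproduce the package's code.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def unit(degrees):
    """2-D unit vector at the given angle, used only to build toy embeddings."""
    rad = math.radians(degrees)
    return [math.cos(rad), math.sin(rad)]


def mmr(query_emb, docs, embedding_key, k=2, lambda_mult=0.5):
    """Return indices of up to k docs, trading relevance against redundancy."""
    # Raises KeyError if the embedding was stripped from the metadata.
    embs = [doc["metadata"][embedding_key] for doc in docs]
    selected = []
    remaining = list(range(len(embs)))
    while remaining and len(selected) < k:
        best_idx, best_score = None, -math.inf
        for i in remaining:
            relevance = cosine(query_emb, embs[i])
            redundancy = max(
                (cosine(embs[i], embs[j]) for j in selected), default=0.0
            )
            score = lambda_mult * relevance - (1 - lambda_mult) * redundancy
            if score > best_score:
                best_idx, best_score = i, score
        selected.append(best_idx)
        remaining.remove(best_idx)
    return selected


docs = [
    {"text": "a", "metadata": {"embedding": unit(10)}},
    {"text": "b", "metadata": {"embedding": unit(12)}},  # near-duplicate of "a"
    {"text": "c", "metadata": {"embedding": unit(-40)}},
]
query = unit(0)
picked = mmr(query, docs, "embedding", k=2, lambda_mult=0.5)
```

With these toy vectors the second pick is the less relevant but more diverse document "c" rather than the near-duplicate "b". If the embedding were deleted from the metadata, the first line of `mmr` would fail with a `KeyError`.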

Checklist:

- [x] PR title: Please title your PR "package: description", where

"package" is whichever of langchain, community, core, experimental, etc.

is being modified. Use "docs: ..." for purely docs changes, "templates:

..." for template changes, "infra: ..." for CI changes.

- Example: "community: add foobar LLM"

- [x] PR message

- [x] Pass lint and test: Run `make format`, `make lint` and `make test`

from the root of the package(s) you've modified to check that you're

passing lint and testing. See contribution guidelines for more

information on how to write/run tests, lint, etc:

https://python.langchain.com/docs/contributing/

- [x] Add tests and docs: If you're adding a new integration, please

include

1. Existing tests supplied in docs/docs do not change. Updated

docstrings for new functions like `delete`

2. an example notebook showing its use. It lives in

`docs/docs/integrations` directory. (This already exists)

If no one reviews your PR within a few days, please @-mention one of

baskaryan, efriis, eyurtsev, hwchase17.

---------

Co-authored-by: Steven Silvester <steven.silvester@ieee.org>

Co-authored-by: Erick Friis <erick@langchain.dev>

This PR adds links to some more free resources for people to get

acquainted with LangChain without having to configure their system.


Co-authored-by: Filip Schouwenaars <filipsch@users.noreply.github.com>

**Description:**

In this PR, I am adding a `PolygonFinancials` tool, which can be used to

get financials data for a given ticker. The financials data is the

fundamental data that is found in income statements, balance sheets, and

cash flow statements of public US companies.

**Twitter**:

[@virattt](https://twitter.com/virattt)

Several URLs were broken (in yesterday's PR), such as the [Integrations/platforms/google/Document Loaders](https://python.langchain.com/docs/integrations/platforms/google#document-loaders) page, the example link to "Document Loaders / Cloud SQL for PostgreSQL", and most of the new example links in the Document Loaders, Vectorstores, and Memory sections.

- fixed URLs (manually verified all example links)

- sorted sections in page to follow the "integrations/components" menu

item order.

- fixed several page titles to fix Navbar item order

---------

Co-authored-by: Erick Friis <erick@langchain.dev>

**Description:** Update to the list of partner packages in the list of

providers

**Issue:** Google & Nvidia had two entries each, both pointing to the

same page

**Dependencies:** None

- **Description:** A generic document loader adapter for SQLAlchemy on

top of LangChain's `SQLDatabaseLoader`.

- **Needed by:** https://github.com/crate-workbench/langchain/pull/1

- **Depends on:** GH-16655

- **Addressed to:** @baskaryan, @cbornet, @eyurtsev

Hi from CrateDB again,

in the same spirit as GH-16243 and GH-16244, this patch breaks out

another commit from https://github.com/crate-workbench/langchain/pull/1,

in order to reduce the size of this patch before submitting it, and to

separate concerns.

To accompany the SQLAlchemy adapter implementation, the patch includes

integration tests for both SQLite and PostgreSQL. Let me know if

corresponding utility resources should be added at different spots.

With kind regards,

Andreas.

### Software Tests

```console

docker compose --file libs/community/tests/integration_tests/document_loaders/docker-compose/postgresql.yml up

```

```console

cd libs/community

pip install psycopg2-binary

pytest -vvv tests/integration_tests -k sqldatabase

```

```

14 passed

```

---------

Co-authored-by: Andreas Motl <andreas.motl@crate.io>

**Description**: This PR adds support for using the [LLMLingua project](https://github.com/microsoft/LLMLingua), especially LongLLMLingua (Enhancing Large Language Model Inference via Prompt Compression), as a document compressor / transformer.

The LLMLingua project is an interesting project that can greatly improve RAG systems by compressing prompts and contexts while keeping their semantic relevance.

**Issue**: https://github.com/microsoft/LLMLingua/issues/31

**Dependencies**: [llmlingua](https://pypi.org/project/llmlingua/)

@baskaryan

---------

Co-authored-by: Ayodeji Ayibiowu <ayodeji.ayibiowu@getinge.com>

Co-authored-by: Harrison Chase <hw.chase.17@gmail.com>

### Description

This PR moves the Elasticsearch classes to a partners package.

Note that we will not move (and later remove) `ElasticKnnSearch`. It was previously deprecated.

`ElasticVectorSearch` is going to stay in the community package since it

is used quite a lot still.

Also note that I left the `ElasticsearchTranslator` for self query

untouched because it resides in main `langchain` package.

### Dependencies

There will be another PR that updates the notebooks (potentially pulling

them into the partners package) and templates and removes the classes

from the community package, see

https://github.com/langchain-ai/langchain/pull/17468

#### Open question

How to make the transition smooth for users? Do we move the import

aliases and require people to install `langchain-elasticsearch`? Or do

we remove the import aliases from the `langchain` package altogether?

What has worked well for other partner packages?

---------

Co-authored-by: Erick Friis <erick@langchain.dev>

**Description**

Adding different threshold types to the semantic chunker. I’ve had much better and more predictable performance when using standard deviations instead of percentiles.

For all the documents I’ve tried, the distribution of distances looks similar to the above: a positively skewed normal distribution. All skews I’ve seen are less than 1, which explains why standard deviations perform well, but I’ve included IQR if anyone wants something more robust.

Also, using the percentile method backwards, you can declare the number

of clusters and use semantic chunking to get an ‘optimal’ splitting.
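The three threshold types can be sketched in plain Python. The formulas here are assumptions chosen for illustration (mean plus a multiple of the standard deviation, mean plus a multiple of the IQR, and a simple interpolated percentile); they may differ from what the chunker actually implements, and all function names are hypothetical. `split_points_for_chunks` shows one reading of using the method "backwards": pick the largest distances so that a requested number of chunks results.

```python
import statistics


def percentile(values, pct):
    """Linear-interpolation percentile, with pct in [0, 100]."""
    ordered = sorted(values)
    if len(ordered) == 1:
        return ordered[0]
    rank = (len(ordered) - 1) * pct / 100.0
    low = int(rank)
    high = min(low + 1, len(ordered) - 1)
    return ordered[low] + (ordered[high] - ordered[low]) * (rank - low)


def breakpoint_threshold(distances, kind, amount):
    """Distance above which two neighbouring sentences are split apart."""
    if kind == "percentile":
        return percentile(distances, amount)
    if kind == "standard_deviation":
        return statistics.mean(distances) + amount * statistics.pstdev(distances)
    if kind == "interquartile":
        q1, q3 = percentile(distances, 25), percentile(distances, 75)
        return statistics.mean(distances) + amount * (q3 - q1)
    raise ValueError(f"unknown threshold kind: {kind}")


def split_points(distances, kind, amount):
    """Indices whose distance to the next sentence exceeds the threshold."""
    threshold = breakpoint_threshold(distances, kind, amount)
    return [i for i, d in enumerate(distances) if d > threshold]


def split_points_for_chunks(distances, n_chunks):
    """Split at the n_chunks - 1 largest distances to get n_chunks pieces."""
    largest = sorted(range(len(distances)), key=lambda i: distances[i], reverse=True)
    return sorted(largest[: max(n_chunks - 1, 0)])


# Hypothetical distances between consecutive sentence embeddings.
distances = [0.10, 0.12, 0.11, 0.60, 0.09, 0.13, 0.55, 0.10]
by_std = split_points(distances, "standard_deviation", 1.0)
by_iqr = split_points(distances, "interquartile", 1.0)
by_pct = split_points(distances, "percentile", 80)
two_chunks = split_points_for_chunks(distances, 2)
```

On this toy input all three threshold types agree on the two obvious jumps; on real, skewed distributions they can diverge, which is where the choice of type matters.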

---------

Co-authored-by: Harrison Chase <hw.chase.17@gmail.com>

**Description:** Update the example fiddler notebook to use community

path, instead of langchain.callback

**Dependencies:** None

**Twitter handle:** @bhalder

Co-authored-by: Barun Halder <barun@fiddler.ai>

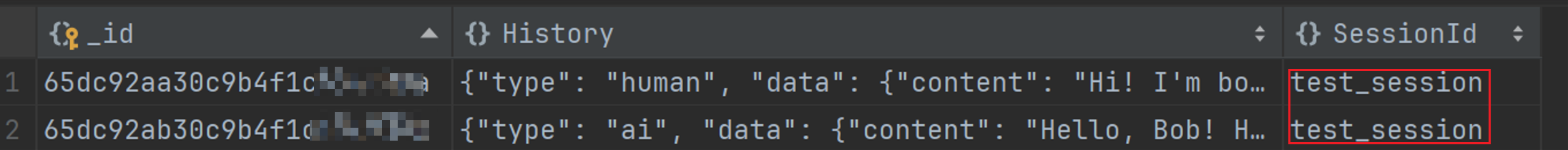

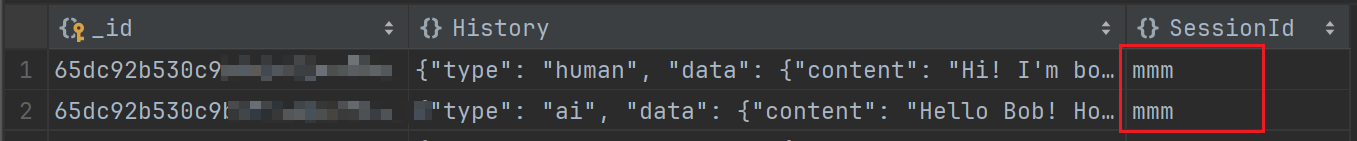

I tried to configure MongoDBChatMessageHistory using the code from the

original documentation to store messages based on the passed session_id

in MongoDB. However, this configuration did not take effect, and the

session id in the database remained as 'test_session'. To resolve this

issue, I found that when configuring MongoDBChatMessageHistory, it is

necessary to set session_id=session_id instead of

session_id=test_session.

Issue: DOC: Ineffective Configuration of MongoDBChatMessageHistory for

Custom session_id Storage
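The pitfall can be reproduced without a MongoDB instance. In this minimal sketch, `InMemoryHistory` is a hypothetical stand-in for the chat history class, and the two factory functions play the role of the lambda passed as the session-history factory.

```python
class InMemoryHistory:
    """Hypothetical stand-in for a chat history keyed by session_id."""

    store = {}  # shared across instances, like a single database collection

    def __init__(self, session_id):
        self.session_id = session_id

    def add_message(self, message):
        InMemoryHistory.store.setdefault(self.session_id, []).append(message)


def hardcoded_factory(session_id):
    # Bug: ignores the argument, so every caller writes to "test_session".
    return InMemoryHistory(session_id="test_session")


def passthrough_factory(session_id):
    # Fix: forward the session_id supplied by the caller.
    return InMemoryHistory(session_id=session_id)


hardcoded_factory("mmm").add_message("Hi! I'm bob")
keys_before_fix = sorted(InMemoryHistory.store)

InMemoryHistory.store.clear()
passthrough_factory("mmm").add_message("Hi! I'm bob")
keys_after_fix = sorted(InMemoryHistory.store)
```

With the hard-coded factory the message lands under `test_session` regardless of the configured session; with the pass-through factory it lands under `mmm`.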

previous code:

```python

chain_with_history = RunnableWithMessageHistory(
    chain,
    lambda session_id: MongoDBChatMessageHistory(
        session_id="test_session",  # hard-coded: ignores the lambda's session_id argument
        connection_string="mongodb://root:Y181491117cLj@123.56.224.232:27017",
        database_name="my_db",
        collection_name="chat_histories",
    ),
    input_messages_key="question",
    history_messages_key="history",
)
config = {"configurable": {"session_id": "mmm"}}
chain_with_history.invoke({"question": "Hi! I'm bob"}, config)

```

Modified code:

```python

chain_with_history = RunnableWithMessageHistory(
    chain,
    lambda session_id: MongoDBChatMessageHistory(
        session_id=session_id,  # modified: use the session_id passed in by the caller
        connection_string="mongodb://root:Y181491117cLj@123.56.224.232:27017",
        database_name="my_db",
        collection_name="chat_histories",
    ),
    input_messages_key="question",
    history_messages_key="history",
)
config = {"configurable": {"session_id": "mmm"}}
chain_with_history.invoke({"question": "Hi! I'm bob"}, config)

```

Effect after modification (it works):

**Description:** Update the azure search notebook to have more

descriptive comments, and an option to choose between OpenAI and

AzureOpenAI Embeddings

---------

Co-authored-by: Matt Gotteiner <[email protected]>

Co-authored-by: Bagatur <baskaryan@gmail.com>

**Description:** Callback handler to integrate fiddler with langchain.

This PR adds the following -

1. `FiddlerCallbackHandler` implementation into langchain/community

2. Example notebook `fiddler.ipynb` for usage documentation

[Internal Tracker : FDL-14305]

**Issue:**

NA

**Dependencies:**

- Installation of langchain-community is unaffected.

- Usage of FiddlerCallbackHandler requires installation of latest

fiddler-client (2.5+)

**Twitter handle:** @fiddlerlabs @behalder

Co-authored-by: Barun Halder <barun@fiddler.ai>

- **Description:** Added the `return_sparql_query` feature to the

`GraphSparqlQAChain` class, allowing users to get the formatted SPARQL

query along with the chain's result.

- **Issue:** NA

- **Dependencies:** None

Note: I've ensured that the PR passes linting and testing by running

make format, make lint, and make test locally.

I have added a test for the integration (which relies on network access)

and I have added an example to the notebook showing its use.

https://github.com/langchain-ai/langchain/issues/17657


**Description:** Initial pull request for Kinetica LLM wrapper

**Issue:** N/A

**Dependencies:** No new dependencies for unit tests. Integration tests

require gpudb, typeguard, and faker

**Twitter handle:** @chad_juliano

Note: There is another pull request for Kinetica vectorstore. Ultimately

we would like to make a partner package but we are starting with a

community contribution.

- **Description:** Update the Azure Search vector store notebook for the

latest version of the SDK

---------

Co-authored-by: Matt Gotteiner <[email protected]>

**Description:** Clean up Google product names and fix document loader

section

**Issue:** NA

**Dependencies:** None

---------

Co-authored-by: Bagatur <baskaryan@gmail.com>

- **Description:** Update IBM watsonx.ai docs and add IBM as a provider

docs

- **Dependencies:**

[ibm-watsonx-ai](https://pypi.org/project/ibm-watsonx-ai/),

- **Tag maintainer:** :

Please make sure your PR is passing linting and testing before

submitting. Run `make format`, `make lint` and `make test` to check this

locally. ✅

**Description:** This PR changes the module import path for SQLDatabase

in the documentation

**Issue:** Updates the documentation to reflect the move of integrations

to langchain-community

- **Description:** The URL in the tigris tutorial was htttps instead of

https, leading to a bad link.

- **Issue:** N/A

- **Dependencies:** N/A

- **Twitter handle:** Speucey

In this pull request, we introduce the add_images method to the

SingleStoreDB vector store class, expanding its capabilities to handle

multi-modal embeddings seamlessly. This method facilitates the

incorporation of image data into the vector store by associating each

image's URI with corresponding document content, metadata, and either

pre-generated embeddings or embeddings computed using the embed_image

method of the provided embedding object.

The change includes integration tests validating the behavior of `add_images`. Additionally, we provide a notebook showcasing the usage of this new method.

---------

Co-authored-by: Volodymyr Tkachuk <vtkachuk-ua@singlestore.com>

Issue in the API Reference:

If the `Classes` or `Functions` section is empty, it is still shown in the API Reference. Here is an [example](https://api.python.langchain.com/en/latest/core_api_reference.html#module-langchain_core.agents) where the `Functions` table is empty but still presented.

This happens only if the section contains only "private" members (with names starting with '_'). Those members are not shown, but the whole (empty) member section is.
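The intended behavior can be sketched as follows: filter out private members first, then skip a section entirely when nothing public remains. This is a simplified, hypothetical rendering function, not the actual API reference generator, and the module and member names are made up.

```python
def visible_members(names):
    """Drop private members, i.e. names starting with an underscore."""
    return [name for name in names if not name.startswith("_")]


def render_module(module_name, classes, functions):
    """Return documentation lines, skipping sections with no public members."""
    lines = [module_name]
    for title, names in (("Classes", classes), ("Functions", functions)):
        public = visible_members(names)
        if not public:
            continue  # nothing to show, so omit the heading as well
        lines.append(title)
        lines.extend(f"  {name}" for name in public)
    return lines


rendered = render_module(
    "example.module",  # hypothetical module name
    classes=["Widget"],
    functions=["_helper"],  # only a private member, so the section is dropped
)
```

Here `rendered` contains the `Classes` section but no `Functions` heading, because its only member is private.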

This way we can document APIs in methods signature only where they are

checked by the typing system and we get them also in the param

description without having to duplicate in the docstrings (where they

are unchecked).

Twitter: @cbornet_

Description:

In this PR, I am adding a PolygonTickerNews Tool, which can be used to

get the latest news for a given ticker / stock.

Twitter handle: [@virattt](https://twitter.com/virattt)

**Description**: CogniSwitch focusses on making GenAI usage more

reliable. It abstracts out the complexity & decision making required for

tuning processing, storage & retrieval. Using simple APIs documents /

URLs can be processed into a Knowledge Graph that can then be used to

answer questions.

**Dependencies**: No dependencies. Just network calls & API key required

**Tag maintainer**: @hwchase17

**Twitter handle**: https://github.com/CogniSwitch

**Documentation**: Please check

`docs/docs/integrations/toolkits/cogniswitch.ipynb`

**Tests**: The usual tool & toolkits tests using `test_imports.py`

PR has passed linting and testing before this submission.

---------

Co-authored-by: Saicharan Sridhara <145636106+saiCogniswitch@users.noreply.github.com>

Hi, I'm from the LanceDB team.

Improves LanceDB integration by making it easier to use - now you aren't

required to create tables manually and pass them in the constructor,

although that is still backward compatible.

Bug fix - pandas was being used even though it's not a dependency for

LanceDB or langchain

PS: this issue was raised a few months ago but lost traction. It is a feature improvement for our users; kindly review this. Thanks!

This PR replaces the imports of the Astra DB vector store with the

newly-released partner package, in compliance with the deprecation

notice now attached to the community "legacy" store.

- **Description:** Update documentation for RunnableWithMessageHistory

- **Issue:** https://github.com/langchain-ai/langchain/issues/16642

I don't have access to an Anthropic API key so I updated things to use

OpenAI. Let me know if you'd prefer another provider.

**Description:** This PR introduces a new "Astra DB" Partner Package.

So far only the vector store class is _duplicated_ there, all others

following once this is validated and established.

Along with the move to separate package, incidentally, the class name

will change `AstraDB` => `AstraDBVectorStore`.

The strategy has been to duplicate the module (with prospected removal

from community at LangChain 0.2). Until then, the code will be kept in

sync with minimal, known differences (there is a makefile target to

automate drift control. Out of convenience with this check, the

community package has a class `AstraDBVectorStore` aliased to `AstraDB`

at the end of the module).
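The aliasing mentioned above amounts to binding a second name to the same class object. A minimal sketch, using a hypothetical stand-in class rather than the real vector store:

```python
class AstraDB:
    """Hypothetical stand-in for the community vector store class."""

    def __init__(self, collection_name):
        self.collection_name = collection_name


# Alias placed at the end of the module so both names resolve to one class.
AstraDBVectorStore = AstraDB

store = AstraDBVectorStore(collection_name="demo")
```

Because both names point at the same object, `isinstance` checks and imports written against either name keep working, which is what makes an automated comparison between the two modules simpler.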

With this PR several bugfixes and improvements come to the vector store,

as well as a reshuffling of the doc pages/notebooks (Astra and

Cassandra) to align with the move to a separate package.

**Dependencies:** A brand new pyproject.toml in the new package, no

changes otherwise.

**Twitter handle:** `@rsprrs`

---------

Co-authored-by: Christophe Bornet <cbornet@hotmail.com>

Co-authored-by: Erick Friis <erick@langchain.dev>

A few minor changes for contribution:

1) Updating link to say "Contributing" rather than "Developer's guide"

2) Minor changes after going through the contributing documentation

page.

This PR adds support for NVIDIA NeMo embeddings (issue #16095).

---------

Co-authored-by: Praveen Nakshatrala <pnakshatrala@gmail.com>

Co-authored-by: Harrison Chase <hw.chase.17@gmail.com>

Co-authored-by: Bagatur <baskaryan@gmail.com>

1. integrate with

[`Yuan2.0`](https://github.com/IEIT-Yuan/Yuan-2.0/blob/main/README-EN.md)

2. update `langchain.llms`

3. add a new doc for [Yuan2.0

integration](docs/docs/integrations/llms/yuan2.ipynb)

---------

Co-authored-by: Harrison Chase <hw.chase.17@gmail.com>

Co-authored-by: Bagatur <baskaryan@gmail.com>

This pull request introduces support for various Approximate Nearest

Neighbor (ANN) vector index algorithms in the VectorStore class,

starting from version 8.5 of SingleStore DB. Leveraging this enhancement

enables users to harness the power of vector indexing, significantly

boosting search speed, particularly when handling large sets of vectors.

---------

Co-authored-by: Volodymyr Tkachuk <vtkachuk-ua@singlestore.com>

Co-authored-by: Bagatur <baskaryan@gmail.com>

- **Description:**

1. Added _clear_edges()_ and _get_number_of_nodes()_ functions in

NetworkxEntityGraph class.

2. Added the above two functions to the graph_networkx_qa.ipynb documentation.


- **PR title**: docs: add & update docs for Oracle Cloud Infrastructure

(OCI) integrations

- **Description**: adding and updating documentation for two

integrations - OCI Generative AI & OCI Data Science

(1) adding integration page for OCI Generative AI embeddings (@baskaryan

request,

docs/docs/integrations/text_embedding/oci_generative_ai.ipynb)

(2) updating integration page for OCI Generative AI llms

(docs/docs/integrations/llms/oci_generative_ai.ipynb)

(3) adding platform documentation for OCI (@baskaryan request,

docs/docs/integrations/platforms/oci.mdx). this combines the

integrations of OCI Generative AI & OCI Data Science

(4) if possible, requesting to be added to 'Featured Community

Providers' so supplying a modified

docs/docs/integrations/platforms/index.mdx to reflect the addition

- **Issue:** none

- **Dependencies:** no new dependencies

- **Twitter handle:**

---------

Co-authored-by: MING KANG <ming.kang@oracle.com>

1. integrate chat models with

[`Yuan2.0`](https://github.com/IEIT-Yuan/Yuan-2.0/blob/main/README-EN.md)

2. add a new doc for [Yuan2.0

integration](docs/docs/integrations/llms/yuan2.ipynb)

Yuan2.0 is a new generation Fundamental Large Language Model developed

by IEIT System. We have published all three models, Yuan 2.0-102B, Yuan

2.0-51B, and Yuan 2.0-2B.

---------

Co-authored-by: Bagatur <baskaryan@gmail.com>

## Description

I am submitting this for a school project as part of a team of 5. Other

team members are @LeilaChr, @maazh10, @Megabear137, @jelalalamy. This PR

also has contributions from community members @Harrolee and @Mario928.

Initial context is in the issue we opened (#11229).

This pull request adds:

- Generic framework for expanding the languages that `LanguageParser`

can handle, using the

[tree-sitter](https://github.com/tree-sitter/py-tree-sitter#py-tree-sitter)

parsing library and existing language-specific parsers written for it

- Support for the following additional languages in `LanguageParser`:

- C

- C++

- C#

- Go

- Java (contributed by @Mario928

https://github.com/ThatsJustCheesy/langchain/pull/2)

- Kotlin

- Lua

- Perl

- Ruby

- Rust

- Scala

- TypeScript (contributed by @Harrolee

https://github.com/ThatsJustCheesy/langchain/pull/1)

Here is the [design

document](https://docs.google.com/document/d/17dB14cKCWAaiTeSeBtxHpoVPGKrsPye8W0o_WClz2kk)

if curious, but no need to read it.

## Issues

- Closes #11229

- Closes #10996

- Closes #8405

## Dependencies

`tree_sitter` and `tree_sitter_languages` on PyPI. We have tried to add

these as optional dependencies.

## Documentation

We have updated the list of supported languages, and also added a

section to `source_code.ipynb` detailing how to add support for

additional languages using our framework.

## Maintainer

- @hwchase17 (previously reviewed

https://github.com/langchain-ai/langchain/pull/6486)

Thanks!!

## Git commits

We will gladly squash any/all of our commits (esp merge commits) if

necessary. Let us know if this is desirable, or if you will be

squash-merging anyway.


---------

Co-authored-by: Maaz Hashmi <mhashmi373@gmail.com>

Co-authored-by: LeilaChr <87657694+LeilaChr@users.noreply.github.com>

Co-authored-by: Jeremy La <jeremylai511@gmail.com>

Co-authored-by: Megabear137 <zubair.alnoor27@gmail.com>

Co-authored-by: Lee Harrold <lhharrold@sep.com>

Co-authored-by: Mario928 <88029051+Mario928@users.noreply.github.com>

Co-authored-by: Bagatur <baskaryan@gmail.com>

Co-authored-by: Harrison Chase <hw.chase.17@gmail.com>

- **Description:** The Pebblo open-source project enables developers to

safely load data to their Gen AI apps. It identifies semantic topics and

entities found in the loaded data and summarizes them in a

developer-friendly report.

- **Dependencies:** none

- **Twitter handle:** srics

@hwchase17

**Description**: This PR adds a chain for Amazon Neptune graph database

RDF format. It complements the existing Neptune Cypher chain. The PR

also includes a Neptune RDF graph class to connect to, introspect, and

query a Neptune RDF graph database from the chain. A sample notebook is

provided under docs that demonstrates the overall effect: invoking the

chain to make natural language queries against Neptune using an LLM.

**Issue**: This is a new feature

**Dependencies**: The RDF graph class depends on the AWS boto3 library

if using IAM authentication to connect to the Neptune database.

---------

Co-authored-by: Piyush Jain <piyushjain@duck.com>

Co-authored-by: Bagatur <baskaryan@gmail.com>

**Description:** This PR adds support for

[flashrank](https://github.com/PrithivirajDamodaran/FlashRank) for

reranking as alternative to Cohere.

I'm not sure `libs/langchain` is the right place for this change. At

first, I wanted to put it under `libs/community`. All the compressors

were under `libs/langchain/retrievers/document_compressors` though. Hope

this makes sense!

- **Description:** This adds a delete method so that rocksetdb can be

used with `RecordManager`.

- **Issue:** N/A

- **Dependencies:** N/A

- **Twitter handle:** `@_morgan_adams_`

---------

Co-authored-by: Rockset API Bot <admin@rockset.io>

- Reordered sections

- Applied consistent formatting

- Fixed headers (there were 2 H1 headers; this breaks ToC)

- Added `Settings` header and moved all related sections under it

Description: Updated doc for integrations/chat/anthropic_functions with

new functions: invoke. Changed structure of the document to match the

required one.

Issue: https://github.com/langchain-ai/langchain/issues/15664

Dependencies: None

Twitter handle: None

---------

Co-authored-by: NaveenMaltesh <naveen@onmeta.in>

- **Description:** Adds the document loader for [AWS

Athena](https://aws.amazon.com/athena/), a serverless and interactive

analytics service.

- **Dependencies:** Added boto3 as a dependency

This PR updates the `TF-IDF.ipynb` documentation to reflect the new

import path for TFIDFRetriever in the langchain-community package. The

previous path, `from langchain.retrievers import TFIDFRetriever`, has

been updated to `from langchain_community.retrievers import

TFIDFRetriever` to align with the latest changes in the langchain

library.

according to https://youtu.be/rZus0JtRqXE?si=aFo1JTDnu5kSEiEN&t=678 by

@efriis

- **Description:** Seems the requirements for tool names have changed

and spaces are no longer allowed. Changed the tool name from Google

Search to google_search in the notebook

- **Issue:** n/a

- **Dependencies:** none

- **Twitter handle:** @mesirii
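A rename like this can be sketched as a small helper. This is only an illustration: the helper name `to_tool_name` is hypothetical, and the rule it enforces (letters, digits, underscores and hyphens only) is an assumption, since the PR does not state the exact requirement.

```python
import re


def to_tool_name(label: str) -> str:
    """Turn a human-readable label into a tool name without spaces.

    Hypothetical helper; the allowed character set is an assumption.
    """
    # Lowercase the label and collapse every run of disallowed characters to "_".
    name = re.sub(r"[^0-9a-zA-Z_-]+", "_", label.strip().lower())
    # Drop underscores left over at either end.
    return name.strip("_")


print(to_tool_name("Google Search"))
```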

**Description**

Make some functions work with Milvus:

1. get_ids: Get primary keys by field in the metadata

2. delete: Delete one or more entities by ids

3. upsert: Update/Insert one or more entities

**Issue**

None

**Dependencies**

None

**Tag maintainer:**

@hwchase17

**Twitter handle:**

None

---------

Co-authored-by: HoaNQ9 <hoanq.1811@gmail.com>

Co-authored-by: Erick Friis <erick@langchain.dev>

## Summary

This PR upgrades LangChain's Ruff configuration in preparation for

Ruff's v0.2.0 release. (The changes are compatible with Ruff v0.1.5,

which LangChain uses today.) Specifically, we're now warning when

linter-only options are specified under `[tool.ruff]` instead of

`[tool.ruff.lint]`.

---------

Co-authored-by: Erick Friis <erick@langchain.dev>

Co-authored-by: Bagatur <baskaryan@gmail.com>

- **Issue:** Issue with model argument support (been there for a while

actually):

- Non-specially-handled arguments like temperature don't work when

passed through constructor.

- Such arguments DO work quite well with `bind`, but also do not abide

by field requirements.

- Since initial push, server-side error messages have gotten better and

v0.0.2 raises better exceptions. So maybe it's better to let server-side

handle such issues?

- **Description:**

- Removed ChatNVIDIA's argument fields in favor of

`model_kwargs`/`model_kws` arguments which aggregates constructor kwargs

(from constructor pathway) and merges them with call kwargs (bind

pathway).

- Shuffled a few functions from `_NVIDIAClient` to `ChatNVIDIA` to

streamline construction for future integrations.

- Minor/Optional: Old services didn't have stop support, so client-side

stopping was implemented. Now do both.

- **Any Breaking Changes:** Minor breaking changes if you strongly rely

on chat_model.temperature, etc. This is captured by

chat_model.model_kwargs.
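The merging of constructor kwargs with call kwargs described above can be sketched as follows. This is not the ChatNVIDIA code: the class `SketchChatModel` and its `build_payload` method are hypothetical, and the precedence rule (call kwargs override constructor kwargs) is an assumption made for the illustration.

```python
from typing import Any, Dict


class SketchChatModel:
    """Hypothetical stand-in for a chat model; not the ChatNVIDIA implementation."""

    def __init__(self, model: str, **model_kwargs: Any) -> None:
        self.model = model
        # Constructor pathway: keep extra arguments in one dict
        # instead of declaring a field for each of them.
        self.model_kwargs: Dict[str, Any] = dict(model_kwargs)

    def build_payload(self, prompt: str, **call_kwargs: Any) -> Dict[str, Any]:
        # Bind/call pathway: merge both sources; in this sketch the
        # per-call values win over the constructor values (an assumption).
        merged = {**self.model_kwargs, **call_kwargs}
        return {"model": self.model, "prompt": prompt, **merged}


chat_model = SketchChatModel("some-model", temperature=0.2, top_p=0.9)
print(chat_model.build_payload("hello", temperature=0.7))
```

With this layout, a value such as the temperature is read from `chat_model.model_kwargs["temperature"]` rather than from `chat_model.temperature`.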

PR passes tests and example notebooks and example testing. Still gonna

chat with some people, so leaving as draft for now.

---------

Co-authored-by: Erick Friis <erick@langchain.dev>

The Integrations `Toolkits` menu was named as [`Agents and

toolkits`](https://python.langchain.com/docs/integrations/toolkits).

This name has a historical reason that is not correct anymore. Now this

menu is all about community `Toolkits`. There is a separate menu for

[Agents](https://python.langchain.com/docs/modules/agents/). Also Agents

are officially not part of Integrations (Community package) but part of

LangChain package.


- **Description: changes to you.com files**

- general cleanup

- adds community/utilities/you.py, moving bulk of code from retriever ->

utility

- removes `snippet` as endpoint

- adds `news` as endpoint

- adds more tests

<s>**Description: update community MAKE file**

- adds `integration_tests`

- adds `coverage`</s>

- **Issue:**

- [For New Contributors: Update Integration

Documentation](https://github.com/langchain-ai/langchain/issues/15664#issuecomment-1920099868)

- **Dependencies:** n/a

- **Twitter handle:** @scottnath

- **Mastodon handle:** scottnath@mastodon.social

---------

Co-authored-by: Bagatur <baskaryan@gmail.com>

- **Description:** This adds a recursive json splitter class to the

existing text_splitters as well as unit tests

- **Issue:** Splitting text from structured data can cause problems: if you split a large nested JSON object as regular text, you may lose the structure of the JSON. To mitigate this, you can split the nested JSON into large, overlapping chunks, but this causes unnecessary text processing, and there will still be times when the nested JSON is so big that chunks get separated from their parent keys.

As an example you wouldn't want the following to be split in half:

```shell
{'val0': 'DFWeNdWhapbR',
 'val1': {'val10': 'QdJo',
          'val11': 'FWSDVFHClW',
          'val12': 'bkVnXMMlTiQh',
          'val13': 'tdDMKRrOY',
          'val14': 'zybPALvL',
          'val15': 'JMzGMNH',
          'val16': {'val160': 'qLuLKusFw',
                    'val161': 'DGuotLh',
                    'val162': 'KztlcSBropT',
-----------------------------------------------------------------------split-----
                    'val163': 'YlHHDrN',
                    'val164': 'CtzsxlGBZKf',
                    'val165': 'bXzhcrWLmBFp',
                    'val166': 'zZAqC',
                    'val167': 'ZtyWno',
                    'val168': 'nQQZRsLnaBhb',
                    'val169': 'gSpMbJwA'},
          'val17': 'JhgiyF',
          'val18': 'aJaqjUSFFrI',
          'val19': 'glqNSvoyxdg'}}
```

Any LLM processing the second chunk of text may not have the context of `val1` and `val16`, reducing accuracy. Embeddings will also lack this context, which makes retrieval less accurate.

Instead you want it to be split into chunks that retain the json

structure.

```shell
{'val0': 'DFWeNdWhapbR',
 'val1': {'val10': 'QdJo',
          'val11': 'FWSDVFHClW',
          'val12': 'bkVnXMMlTiQh',
          'val13': 'tdDMKRrOY',
          'val14': 'zybPALvL',
          'val15': 'JMzGMNH',
          'val16': {'val160': 'qLuLKusFw',
                    'val161': 'DGuotLh',
                    'val162': 'KztlcSBropT',
                    'val163': 'YlHHDrN',
                    'val164': 'CtzsxlGBZKf'}}}
```

and

```shell
{'val1':{'val16':{
                    'val165': 'bXzhcrWLmBFp',
                    'val166': 'zZAqC',
                    'val167': 'ZtyWno',
                    'val168': 'nQQZRsLnaBhb',
                    'val169': 'gSpMbJwA'},
          'val17': 'JhgiyF',
          'val18': 'aJaqjUSFFrI',
          'val19': 'glqNSvoyxdg'}}
```

This recursive json text splitter does this. Values that contain a list

can be converted to dict first by using split(... convert_lists=True)

otherwise long lists will not be split and you may end up with chunks

larger than the max chunk.

In my testing large json objects could be split into small chunks with

✅ Increased question answering accuracy

✅ The ability to split into smaller chunks meant retrieval queries can

use fewer tokens

- **Dependencies:** json import added to text_splitter.py, and random

added to the unit test

- **Twitter handle:** @joelsprunger
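The chunking behaviour described above (each chunk keeps the parent keys of the values it contains) can be illustrated with a minimal, self-contained sketch. This is not the splitter added in this PR: the names `split_json` and `_set_nested`, and the use of the serialized length as the size measure, are assumptions chosen for the illustration, and list handling is left out.

```python
import json
from typing import Any, Dict, List


def _set_nested(d: Dict[str, Any], path: List[str], value: Any) -> None:
    """Store value under the nested keys in path, creating parent dicts as needed."""
    for key in path[:-1]:
        d = d.setdefault(key, {})
    d[path[-1]] = value


def _size(data: Any) -> int:
    """Approximate a chunk's size by the length of its JSON serialization."""
    return len(json.dumps(data))


def split_json(data: Dict[str, Any], max_chunk_size: int) -> List[Dict[str, Any]]:
    """Split a nested dict into chunks that each keep the full path of parent keys."""
    chunks: List[Dict[str, Any]] = [{}]

    def walk(node: Dict[str, Any], path: List[str]) -> None:
        for key, value in node.items():
            new_path = path + [key]
            if isinstance(value, dict) and value:
                # Recurse so a large sub-object can be spread over several chunks.
                walk(value, new_path)
                continue
            candidate_size = _size(chunks[-1]) + _size({key: value})
            if chunks[-1] and candidate_size > max_chunk_size:
                chunks.append({})  # current chunk is full; start a new one
            _set_nested(chunks[-1], new_path, value)

    walk(data, [])
    return chunks


example = {"a": {"b": "x" * 10, "c": "y" * 10}, "d": "z" * 10}
for chunk in split_json(example, max_chunk_size=30):
    print(chunk)
```

In this sketch a leaf that is larger than `max_chunk_size` on its own still ends up in a single oversized chunk, which mirrors the caveat about long lists mentioned above.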

---------

Co-authored-by: Harrison Chase <hw.chase.17@gmail.com>

**Description:** Fix 422 error in example with LangServe client code

httpx.HTTPStatusError: Client error '422 Unprocessable Entity' for url

'http://localhost:8000/agent/invoke'

- **Description:** Fixes in the Ontotext GraphDB Graph and QA Chain

related to the error handling in case of invalid SPARQL queries, for

which `prepareQuery` doesn't throw an exception, but the server returns

400 and the query is indeed invalid

- **Issue:** N/A

- **Dependencies:** N/A

- **Twitter handle:** @OntotextGraphDB

Ran

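```python
import glob
import re


def update_prompt(x):
    # Rewrite PromptTemplate(template=..., input_variables=...) calls
    # to the shorter PromptTemplate.from_template(...) form.
    return re.sub(
        r"(?P<start>\b)PromptTemplate\(template=(?P<template>.*), input_variables=(?:.*)\)",
        r"\g<start>PromptTemplate.from_template(\g<template>)",
        x,
    )


for fn in glob.glob("docs/**/*", recursive=True):
    try:
        with open(fn) as f:
            content = f.readlines()
    except (OSError, UnicodeDecodeError):
        # Skip directories and files that cannot be read as text.
        continue
    content = [update_prompt(line) for line in content]
    with open(fn, "w") as f:
        f.write("".join(content))
```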


- **Description:** Added missing link for Quickstart in Model IO

documentation,

- **Issue:** N/A,

- **Dependencies:** N/A,

- **Twitter handle:** N/A


Co-authored-by: Eugene Yurtsev <eyurtsev@gmail.com>

Several notebooks have Title != file name. That results in corrupted

sorting in Navbar (ToC).

- Fixed titles and file names.

- Changed text formats to the consistent form

- Redirected renamed files in the `Vercel.json`

This PR is opinionated.

- Moved `Embedding models` item to place after `LLMs` and `Chat model`,

so all items with models are together.

- Renamed `Text embedding models` to `Embedding models`. Now, it is

shorter and easier to read. `Text` is obvious from context. The same as

the `Text LLMs` vs. `LLMs` (we also have multi-modal LLMs).

The `Partner libs` menu is not sorted. Now it is long enough, and items

should be sorted to simplify a package search.

- Sorted items in the `Partner libs` menu

### Description

Support loading any GitHub file content based on file extension.

Why not use [git

loader](https://python.langchain.com/docs/integrations/document_loaders/git#load-existing-repository-from-disk)

?

The git loader clones the whole repo even if you are only interested in some of its files, which is too heavy. This GithubFileLoader only downloads the files you are interested in.

### Twitter handle

my twitter: @shufanhaotop

---------

Co-authored-by: Hao Fan <h_fan@apple.com>

Co-authored-by: Bagatur <baskaryan@gmail.com>

**Description:** Link to the Brave Website added to the

`brave-search.ipynb` notebook.

This notebook is shown in the docs as an example for the brave tool.

**Issue:** There was no reference on where / how to get an api key

**Dependencies:** none

**Twitter handle:** not for this one :)

- **Description:** docs: update StreamlitCallbackHandler example.

- **Issue:** None

- **Dependencies:** None

I have updated the example for StreamlitCallbackHandler in the

documentation below.

https://python.langchain.com/docs/integrations/callbacks/streamlit

Previously, the example used `initialize_agent`, which has been

deprecated, so I've updated it to use `create_react_agent` instead. Many

langchain users are likely searching for examples of combining

`create_react_agent` or `openai_tools_agent_chain` with

StreamlitCallbackHandler. I'm sure this update will be really helpful

for them!

Unfortunately, writing unit tests for this example is difficult, so I

have not written any tests. I have run this code in a standalone Python

script file and ensured it runs correctly.

- **Description:** "load HTML **form** web URLs" should be "load HTML

**from** web URLs"? 🤔

- **Issue:** Typo

- **Dependencies:** Nope

- **Twitter handle:** n0vad3v

- **Description:** Adds an additional class variable to `BedrockBase`

called `provider` that allows sending a model provider such as amazon,

cohere, ai21, etc.

Up until now, the model provider is extracted from the `model_id` using

the first part before the `.`, such as `amazon` for

`amazon.titan-text-express-v1` (see [supported list of Bedrock model IDs

here](https://docs.aws.amazon.com/bedrock/latest/userguide/model-ids-arns.html)).

But for custom Bedrock models where the ARN of the provisioned

throughput must be supplied, the `model_id` is like

`arn:aws:bedrock:...` so the provider cannot be extracted from this. A

model `provider` is required by the LangChain Bedrock class to perform

model-based processing. To allow the same processing to be performed for

custom-models of a specific base model type, passing this `provider`

argument can help solve the issues.

The alternative considered here was the use of

`provider.arn:aws:bedrock:...` which then requires ARN to be extracted

and passed separately when invoking the model. The proposed solution

here is simpler and also does not cause issues for current models

already using the Bedrock class.

- **Issue:** N/A

- **Dependencies:** N/A
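The provider lookup described above can be sketched as a small function. This is an illustration under stated assumptions, not the `BedrockBase` code: the function name `resolve_provider` is hypothetical, and raising an error for an ARN without an explicit provider is a choice made for this sketch.

```python
from typing import Optional


def resolve_provider(model_id: str, provider: Optional[str] = None) -> str:
    """Return the model provider, preferring an explicitly supplied value."""
    if provider is not None:
        return provider
    if model_id.startswith("arn:"):
        # An ARN has no "<provider>." prefix, so nothing can be inferred from it.
        raise ValueError("provider must be given explicitly when model_id is an ARN")
    # Foundation model IDs look like "amazon.titan-text-express-v1".
    return model_id.split(".")[0]


print(resolve_provider("amazon.titan-text-express-v1"))
print(resolve_provider("arn:aws:bedrock:...", provider="cohere"))
```

Existing callers that pass a regular model ID are unaffected in this sketch, because the prefix-based lookup is still used when no provider is given.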

---------

Co-authored-by: Piyush Jain <piyushjain@duck.com>

- **Description:** Several meta/usability updates, including User-Agent.

- **Issue:**

- User-Agent metadata for tracking connector engagement. @milesial

please check and advise.

- Better error messages. Tries harder to find a request ID. @milesial

requested.

- Client-side image resizing for multimodal models. Hope to upgrade to

Assets API solution in around a month.

- `client.payload_fn` allows you to modify payload before network

request. Use-case shown in doc notebook for kosmos_2.

- `client.last_inputs` put back in to allow for advanced

support/debugging.

- **Dependencies:**

- Attempts to pull in PIL for image resizing. If not installed, prints

out "please install" message, warns it might fail, and then tries

without resizing. We are waiting on a more permanent solution.
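The optional-dependency behaviour described above (try to import, warn if missing, continue without resizing) can be sketched like this. It is not the connector's code: the helper names `optional_import` and `prepare_image`, the `max_side` default, and re-encoding as PNG are assumptions for the illustration. The `module_name` parameter exists only so the fallback path can be exercised.

```python
import importlib
import io
import warnings
from types import ModuleType
from typing import Optional


def optional_import(module_name: str, package: str) -> Optional[ModuleType]:
    """Import a module if it is available; otherwise warn and return None."""
    try:
        return importlib.import_module(module_name)
    except ImportError:
        warnings.warn(
            f"Please install {package} to enable image resizing. "
            "Continuing without resizing, which might fail for large images."
        )
        return None


def prepare_image(data: bytes, max_side: int = 1024, module_name: str = "PIL.Image") -> bytes:
    """Return image bytes, downsized only when the optional dependency is present."""
    image_module = optional_import(module_name, package="pillow")
    if image_module is None:
        return data  # fall back to sending the image unchanged
    with image_module.open(io.BytesIO(data)) as img:
        # thumbnail() shrinks in place and keeps the aspect ratio.
        img.thumbnail((max_side, max_side))
        out = io.BytesIO()
        img.save(out, format="PNG")
    return out.getvalue()
```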

For LC viz: @hinthornw

For NV viz: @fciannella @milesial @vinaybagade

---------

Co-authored-by: Erick Friis <erick@langchain.dev>


- **Description:** Updating one line code sample for Ollama with new

**langchain_community** package

- **Issue:**

- **Dependencies:** none

- **Twitter handle:** @picsoung

Description: Updated doc for llm/aleph_alpha with new functions: invoke.

Changed structure of the document to match the required one.

Issue: https://github.com/langchain-ai/langchain/issues/15664

Dependencies: None

Twitter handle: None

---------

Co-authored-by: Radhakrishnan Iyer <radhakrishnan.iyer@ibm.com>

Added notification about limited preview status of Guardrails for Amazon

Bedrock feature to code example.

---------

Co-authored-by: Piyush Jain <piyushjain@duck.com>

Description: Added a parameter that makes it possible to change the language model in SpacyEmbeddings. The default value is still "en_core_web_sm", so it shouldn't affect code that did not previously specify this parameter, but the model is no longer hard-coded and is easy to change if you want to use other languages or models.

Issue: At Barcelona Supercomputing Center in Aina project

(https://github.com/projecte-aina), a project for Catalan Language

Models and Resources, we would like to use Langchain for one of our

current projects and we would like to comment that Langchain, while

being a very powerful and useful open-source tool, is pretty much

focused on English language. We would like to contribute to make it a

bit more adaptable for using with other languages.

Dependencies: This change requires the Spacy library and a language

model, specified in the model parameter.

Tag maintainer: @dev2049

Twitter handle: @projecte_aina

---------

Co-authored-by: Marina Pliusnina <marina.pliusnina@bsc.es>

Co-authored-by: Harrison Chase <hw.chase.17@gmail.com>


- **Description:** Add Baichuan LLM to integration/llm, also updated

related docs.

Co-authored-by: BaiChuanHelper <wintergyc@WinterGYCs-MacBook-Pro.local>

- **Description:**

Filtering in a FAISS vectorstore is very inflexible and doesn't allow many use cases. I think supporting a callable like this enables a lot: regular expressions, conditions on multiple keys, etc. **Note:** I had to manually alter a test. I don't know whether it was faulty to begin with or if there is something funky going on.

- **Issue:** None

- **Dependencies:** None

- **Twitter handle:** None
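The kind of filtering a callable enables can be sketched with a standalone function. This is not the FAISS vectorstore code: the names `matches`, `Metadata` and `Filter` are hypothetical, and the dict branch uses plain equality as a simplifying assumption.

```python
import re
from typing import Any, Callable, Dict, Union

Metadata = Dict[str, Any]
Filter = Union[Metadata, Callable[[Metadata], bool]]


def matches(metadata: Metadata, flt: Filter) -> bool:
    """Return True if a document's metadata passes the filter."""
    if callable(flt):
        # A callable can express anything: regexes, conditions on several keys, etc.
        return bool(flt(metadata))
    # A dict filter only supports exact matches on every listed key.
    return all(metadata.get(key) == value for key, value in flt.items())


docs = [
    {"source": "report_2023.pdf", "page": 3},
    {"source": "notes.txt", "page": 1},
]
pdf_after_page_2 = lambda md: bool(re.search(r"\.pdf$", md["source"])) and md["page"] > 2
print([md for md in docs if matches(md, pdf_after_page_2)])
```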

Signed-off-by: thiswillbeyourgithub <26625900+thiswillbeyourgithub@users.noreply.github.com>

This PR includes updates for OctoAI integrations:

- The LLM class was updated to fix a bug that occurs with multiple

sequential calls

- The Embedding class was updated to support the new GTE-Large endpoint

released on OctoAI lately

- The documentation jupyter notebook was updated to reflect using the

new LLM sdk

Thank you!

Description: One too many sets of triple-ticks in a sample code block in

the QuickStart doc was causing "\`\`\`shell" to appear in the shell

command that was being demonstrated. I just deleted the extra "```".

Issue: Didn't see one

Dependencies: None

## Summary

This PR implements the "Connery Action Tool" and "Connery Toolkit".

Using them, you can integrate Connery actions into your LangChain agents

and chains.

Connery is an open-source plugin infrastructure for AI.

With Connery, you can easily create a custom plugin with a set of

actions and seamlessly integrate them into your LangChain agents and

chains. Connery will handle the rest: runtime, authorization, secret

management, access management, audit logs, and other vital features.

Additionally, Connery and our community offer a wide range of

ready-to-use open-source plugins for your convenience.

Learn more about Connery:

- GitHub: https://github.com/connery-io/connery-platform

- Documentation: https://docs.connery.io

- Twitter: https://twitter.com/connery_io

## TODOs

- [x] API wrapper

- [x] Integration tests

- [x] Connery Action Tool

- [x] Docs

- [x] Example

- [x] Integration tests

- [x] Connery Toolkit

- [x] Docs

- [x] Example

- [x] Formatting (`make format`)

- [x] Linting (`make lint`)

- [x] Testing (`make test`)

**Description:**

Updated the retry.ipynb notebook, which illustrates RetryOutputParser in LangChain. The notebook does not explain how RetryOutputParser works with existing chains. This change adds code to illustrate the workflow of using RetryOutputParser with a user chain.

Changes:

1. Replaced RetryWithErrorOutputParser with RetryOutputParser, as the markdown text says so.

2. Added code at the end of the notebook to define a chain that passes the LLM completions to the retry parser, which can be customised for user needs.

**Issue:**

Since RetryOutputParser/RetryWithErrorOutputParser does not implement the parse function, it cannot be used with LLMChain directly like [this](https://python.langchain.com/docs/expression_language/cookbook/prompt_llm_parser#prompttemplate-llm-outputparser). This has also raised various issues (#15133, #12175, #11719) that are still open. Instead of adding new features or code changes, it is best to explain how to integrate LLMChain with retry parsers clearly, with an example in the corresponding notebook.

Inspired from:

https://github.com/langchain-ai/langchain/issues/15133#issuecomment-1868972580

---------

Co-authored-by: Harrison Chase <hw.chase.17@gmail.com>

**Description** : This PR updates the documentation for installing

llama-cpp-python on Windows.

- Updates install command to support pyproject.toml

- Makes CPU/GPU install instructions clearer

- Adds reinstall with GPU support command

**Issue**: Existing

[documentation](https://python.langchain.com/docs/integrations/llms/llamacpp#compiling-and-installing)

lists the following commands for installing llama-cpp-python

```

python setup.py clean

python setup.py install

```

The current version of the repo does not include a `setup.py` and uses a

`pyproject.toml` instead.

This can be replaced with

```

python -m pip install -e .

```

As explained in

https://github.com/abetlen/llama-cpp-python/issues/965#issuecomment-1837268339

**Dependencies**: None

**Twitter handle**: None

---------

Co-authored-by: blacksmithop <angstycoder101@gmaii.com>

- **Description:** The current pubmed tool documentation references the path to langchain core, not the path to the tool in community. The old path redirects anyway, but this updates the documentation to reference the new, more direct path.

- **Issue:** doesn't fix an issue

- **Dependencies:** no dependencies

- **Twitter handle:** rooftopzen

- **Description:** Syntax correction according to langchain version

update in 'Retry Parser' tutorial example,

- **Issue:** #16698

---------

Co-authored-by: Harrison Chase <hw.chase.17@gmail.com>

- **Description:** Adds Wikidata support to langchain. Can read out

documents from Wikidata.

- **Issue:** N/A

- **Dependencies:** Adds implicit dependencies for

`wikibase-rest-api-client` (for turning items into docs) and

`mediawikiapi` (for hitting the search endpoint)

- **Twitter handle:** @derenrich

You can see an example of this tool used in a chain

[here](https://nbviewer.org/urls/d.erenrich.net/upload/Wikidata_Langchain.ipynb)

or

[here](https://nbviewer.org/urls/d.erenrich.net/upload/Wikidata_Lars_Kai_Hansen.ipynb)


URL : https://python.langchain.com/docs/use_cases/extraction

Desc:

<b> While the following statement executes successfully, it throws an

error which is described below when we use the imported packages</b>

```py

from pydantic import BaseModel, Field, validator

```

Code:

```python
from langchain.output_parsers import PydanticOutputParser
from langchain.prompts import (
    PromptTemplate,
)
from langchain_openai import OpenAI
from pydantic import BaseModel, Field, validator


# Define your desired data structure.
class Joke(BaseModel):
    setup: str = Field(description="question to set up a joke")
    punchline: str = Field(description="answer to resolve the joke")

    # You can add custom validation logic easily with Pydantic.
    @validator("setup")
    def question_ends_with_question_mark(cls, field):
        if field[-1] != "?":
            raise ValueError("Badly formed question!")
        return field
```

Error:

```md

PydanticUserError: The `field` and `config` parameters are not available

in Pydantic V2, please use the `info` parameter instead.

For further information visit

https://errors.pydantic.dev/2.5/u/validator-field-config-info

```

Solution:

Instead of doing:

```py

from pydantic import BaseModel, Field, validator

```

We should do:

```py

from langchain_core.pydantic_v1 import BaseModel, Field, validator

```

Thanks.

---------

Co-authored-by: Bagatur <baskaryan@gmail.com>

- **Description:** Adding Baichuan Text Embedding Model and Baichuan Inc

introduction.

Baichuan Text Embedding ranks #1 in C-MTEB leaderboard:

https://huggingface.co/spaces/mteb/leaderboard

Co-authored-by: BaiChuanHelper <wintergyc@WinterGYCs-MacBook-Pro.local>

- **Description:** This PR adds [EdenAI](https://edenai.co/) for the

chat model (already available in LLM & Embeddings). It supports all

[ChatModel] functionality: generate, async generate, stream, astream and

batch. A detailed notebook was added.

- **Dependencies**: No dependencies are added as we call a rest API.

---------

Co-authored-by: Eugene Yurtsev <eyurtsev@gmail.com>

… converters

One way to convert anything to an OAI function:

convert_to_openai_function

One way to convert anything to an OAI tool: convert_to_openai_tool

Corresponding bind functions on OAI models: bind_functions, bind_tools

community:

- **Description:**

- Add new ChatLiteLLMRouter class that allows a client to use a LiteLLM

Router as a LangChain chat model.

- Note: The existing ChatLiteLLM integration did not cover the LiteLLM

Router class.

- Add tests and Jupyter notebook.

- **Issue:** None

- **Dependencies:** Relies on existing ChatLiteLLM integration

- **Twitter handle:** @bburgin_0

---------

Co-authored-by: Bagatur <baskaryan@gmail.com>

- **Description:** Adding Oracle Cloud Infrastructure Generative AI integration. Oracle Cloud Infrastructure (OCI) Generative AI is a fully managed service that provides a set of state-of-the-art, customizable large language models (LLMs) that cover a wide range of use cases, and which is available through a single API. Using the OCI Generative AI service you can access ready-to-use pretrained models, or create and host your own fine-tuned custom models based on your own data on dedicated AI clusters. https://docs.oracle.com/en-us/iaas/Content/generative-ai/home.htm

- **Issue:** None

- **Dependencies:** OCI Python SDK

Linting and testing (`make format`, `make lint` and `make test`): passed.

We provide unit tests. However, we cannot provide integration tests due to Oracle policies that prohibit public sharing of API keys.

---------

Co-authored-by: Arthur Cheng <arthur.cheng@oracle.com>

Co-authored-by: Bagatur <baskaryan@gmail.com>

Added support for optionally supplying 'Guardrails for Amazon Bedrock'

on both types of model invocations (batch/regular and streaming) and for

all models supported by the Amazon Bedrock service.

@baskaryan @hwchase17

```python
from typing import Any

# Import paths are assumed; adjust them to the installed LangChain version.
from langchain_community.llms import Bedrock
from langchain_core.callbacks import AsyncCallbackHandler


class BedrockAsyncCallbackHandler(AsyncCallbackHandler):
    """Async callback handler that can be used to handle callbacks from langchain."""

    async def on_llm_error(
        self,
        error: BaseException,
        **kwargs: Any,
    ) -> Any:
        reason = kwargs.get("reason")
        if reason == "GUARDRAIL_INTERVENED":
            # kwargs contains additional trace information sent by 'Guardrails for Bedrock' service.
            print(f"""Guardrails: {kwargs}""")


# `bedrock` is assumed to be an existing boto3 "bedrock-runtime" client.
llm = Bedrock(
    model_id="<model_id>",
    client=bedrock,
    model_kwargs={},
    guardrails={"id": "<guardrail_id>", "version": "<guardrail_version>", "trace": True},
    callbacks=[BedrockAsyncCallbackHandler()],
)

# streaming
llm = Bedrock(
    model_id="<model_id>",
    client=bedrock,
    model_kwargs={},
    streaming=True,
    guardrails={"id": "<guardrail_id>", "version": "<guardrail_version>"},
)
```

---------

Co-authored-by: Bagatur <baskaryan@gmail.com>

- **Description:**

This PR adds a VectorStore integration for SAP HANA Cloud Vector Engine,

which is an upcoming feature in the SAP HANA Cloud database

(https://blogs.sap.com/2023/11/02/sap-hana-clouds-vector-engine-announcement/).

- **Issue:** N/A

- **Dependencies:** [SAP HANA Python

Client](https://pypi.org/project/hdbcli/)

- **Twitter handle:** @sapopensource

Implementation of the integration:

`libs/community/langchain_community/vectorstores/hanavector.py`

Unit tests:

`libs/community/tests/unit_tests/vectorstores/test_hanavector.py`

Integration tests:

`libs/community/tests/integration_tests/vectorstores/test_hanavector.py`

Example notebook:

`docs/docs/integrations/vectorstores/hanavector.ipynb`

Access credentials for execution of the integration tests can be

provided to the maintainers.

---------

Co-authored-by: sascha <sascha.stoll@sap.com>

Co-authored-by: Bagatur <baskaryan@gmail.com>

Description:

- checked that the doc chat/google_vertex_ai_palm is using new

functions: invoke, stream etc.

- added Gemini example

- fixed wrong output in Sanskrit example

Issue: https://github.com/langchain-ai/langchain/issues/15664

Dependencies: None

Twitter handle: None

- **Description:** Updated `_get_elements()` function of

`UnstructuredFileLoader` class to check if the argument `self.file_path`

is a file or list of files. If it is a list of files then it iterates

over the list of file paths, calls the partition function for each one,

and appends the results to the elements list. If self.file_path is not a

list, it calls the partition function as before.

- **Issue:** Fixes #15607

- **Dependencies:** NA

- **Twitter handle:** NA

Co-authored-by: H161961 <Raunak.Raunak@Honeywell.com>
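The list-or-single-path dispatch described in this PR can be sketched roughly as follows. This is a standalone illustration, not the loader's actual code: `get_elements` and `fake_partition` are hypothetical names, and `partition` stands in for whatever partition function the loader calls.

```python
from typing import Callable, List, Union


def get_elements(
    file_path: Union[str, List[str]],
    partition: Callable[[str], List[str]],
) -> List[str]:
    """Partition one file, or each file in a list, into a flat element list."""
    if isinstance(file_path, list):
        elements: List[str] = []
        for path in file_path:
            # Partition each file and append its elements to the combined list.
            elements.extend(partition(path))
        return elements
    # A single path is handled exactly as before.
    return partition(file_path)


def fake_partition(path: str) -> List[str]:
    """Hypothetical stand-in for a real partition function."""
    return [f"{path}:title", f"{path}:body"]
```

Calling `get_elements(["a.txt", "b.txt"], fake_partition)` returns the elements of both files in input order, while a plain string path yields only that file's elements.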

- **Description:** This PR enables LangChain to access iFlyTek's

Spark LLM via the chat_models wrapper.

- **Dependencies:** websocket-client ^1.6.1

- **Tag maintainer:** @baskaryan

### SparkLLM chat model usage

Get SparkLLM's app_id, api_key and api_secret from [iFlyTek SparkLLM API

Console](https://console.xfyun.cn/services/bm3) (for more info, see

[iFlyTek SparkLLM Intro](https://xinghuo.xfyun.cn/sparkapi)), then set

environment variables `IFLYTEK_SPARK_APP_ID`, `IFLYTEK_SPARK_API_KEY`

and `IFLYTEK_SPARK_API_SECRET` or pass parameters when using it like the

demo below:

```python3
from langchain.chat_models.sparkllm import ChatSparkLLM

client = ChatSparkLLM(
    spark_app_id="<app_id>",
    spark_api_key="<api_key>",
    spark_api_secret="<api_secret>",
)
```

Description:

- Added output and environment variables

- Updated the documentation for chat/anthropic, changing references from

`langchain.schema` to `langchain_core.prompts`.

Issue: https://github.com/langchain-ai/langchain/issues/15664

Dependencies: None

Twitter handle: None

Since this is my first open-source PR, please feel free to point out any

mistakes, and I'll be eager to make corrections.

This PR introduces updates to the Konko integration with LangChain.

1. **New Endpoint Addition**: Integration of a new endpoint to utilize

completion models hosted on Konko.

2. **Chat Model Updates for Backward Compatibility**: We have updated

the chat models to ensure backward compatibility with previous OpenAI

versions.

3. **Updated Documentation**: Comprehensive documentation has been

updated to reflect these new changes, providing clear guidance on

utilizing the new features and ensuring seamless integration.

Thank you to the LangChain team for their exceptional work and for

considering this PR. Please let me know if any additional information is

needed.

---------

Co-authored-by: Shivani Modi <shivanimodi@Shivanis-MacBook-Pro.local>

Co-authored-by: Shivani Modi <shivanimodi@Shivanis-MBP.lan>

- **Description:** Baichuan Chat (with both Baichuan-Turbo and

Baichuan-Turbo-192K models) has updated their APIs. There are breaking

changes. For example, BAICHUAN_SECRET_KEY is removed in the latest API

but is still required in LangChain. Baichuan's LangChain integration

needs to be updated to the latest version.

- **Issue:** #15206

- **Dependencies:** None

- **Twitter handle:** None

@hwchase17.

Co-authored-by: BaiChuanHelper <wintergyc@WinterGYCs-MacBook-Pro.local>

**Description:**

- Implement `SQLStrStore` and `SQLDocStore` classes that inherit from

`BaseStore` to persist data remotely on a SQL server.

- SQL is widely used and sometimes we do not want to install a caching

solution like Redis.

- Multiple issues/comments complain that there is no easy remote and

persistent alternative to in-memory storage (users want to replace

`InMemoryStore`), e.g.,

https://github.com/langchain-ai/langchain/issues/14267,

https://github.com/langchain-ai/langchain/issues/15633,

https://github.com/langchain-ai/langchain/issues/14643,

https://stackoverflow.com/questions/77385587/persist-parentdocumentretriever-of-langchain

- This is particularly painful when wanting to use

`ParentDocumentRetriever`.

- This implementation is particularly useful when:

* it's expensive to construct an InMemoryDocstore/dict

* you want to retrieve documents from remote sources

* you just want to reuse existing objects

- This implementation integrates well with PGVector, indeed, when using

PGVector, you already have a SQL instance running. `SQLDocStore` is a

convenient way of using this instance to store documents associated with

vectors. An integration example with ParentDocumentRetriever and

PGVector is provided in docs/docs/integrations/stores/sql.ipynb or

[here](https://github.com/gcheron/langchain/blob/sql-store/docs/docs/integrations/stores/sql.ipynb).

- It persists `str` and `Document` objects but can be easily extended.

**Issue:**

Provide an easy SQL alternative to `InMemoryStore`.
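To illustrate the idea of a SQL-backed key-value store, here is a minimal sketch using the standard-library `sqlite3` module. It is not the `SQLStrStore` implementation from this PR: the class name `SqliteStrStore`, the table name `kv`, and the choice of SQLite are assumptions made for the example. The method names (`mset`, `mget`, `mdelete`, `yield_keys`) mirror the `BaseStore` interface.

```python
import sqlite3
from typing import Iterator, List, Optional, Sequence, Tuple


class SqliteStrStore:
    """Hypothetical string store persisted in a SQLite table."""

    def __init__(self, path: str = ":memory:") -> None:
        self._conn = sqlite3.connect(path)
        self._conn.execute(
            "CREATE TABLE IF NOT EXISTS kv (key TEXT PRIMARY KEY, value TEXT NOT NULL)"
        )

    def mset(self, pairs: Sequence[Tuple[str, str]]) -> None:
        # Insert new keys and overwrite existing ones in a single transaction.
        with self._conn:
            self._conn.executemany(
                "INSERT OR REPLACE INTO kv (key, value) VALUES (?, ?)", list(pairs)
            )

    def mget(self, keys: Sequence[str]) -> List[Optional[str]]:
        # Return values in the order requested; missing keys map to None.
        values: List[Optional[str]] = []
        for key in keys:
            row = self._conn.execute(
                "SELECT value FROM kv WHERE key = ?", (key,)
            ).fetchone()
            values.append(row[0] if row else None)
        return values

    def mdelete(self, keys: Sequence[str]) -> None:
        with self._conn:
            self._conn.executemany(
                "DELETE FROM kv WHERE key = ?", [(key,) for key in keys]
            )

    def yield_keys(self, prefix: Optional[str] = None) -> Iterator[str]:
        rows = self._conn.execute("SELECT key FROM kv ORDER BY key").fetchall()
        for (key,) in rows:
            if prefix is None or key.startswith(prefix):
                yield key
```

Passing a file path instead of `":memory:"` keeps the data across process restarts, which is the property an in-memory store lacks.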

---------

Co-authored-by: Harrison Chase <hw.chase.17@gmail.com>

**Description** : New document loader for Visio files (with extension

.vsdx)

A [Visio file](https://fr.wikipedia.org/wiki/Microsoft_Visio) (with

extension .vsdx) is associated with Microsoft Visio, a diagram creation

software. It stores information about the structure, layout, and

graphical elements of a diagram. This format facilitates the creation

and sharing of visualizations in areas such as business, engineering,

and computer science.

A Visio file can contain multiple pages. Some of them may serve as the

background for others, and this can occur across multiple layers. This

loader extracts the textual content from each page and its associated

pages, enabling the extraction of all visible text from each page,

similar to what an OCR algorithm would do.

**Dependencies** : xmltodict package
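As a rough illustration of per-page text extraction, the sketch below reads a `.vsdx` file as a ZIP archive with the standard library. It is not the loader added in this PR (which depends on `xmltodict`), and it rests on stated assumptions: that page content lives in parts named `visio/pages/page<N>.xml` and that shape text sits in `Text` elements. The function name `extract_vsdx_text` is hypothetical, and background-page resolution is deliberately left out.

```python
import re
import zipfile
from typing import Dict, List
from xml.etree import ElementTree

# Assumed naming of page parts inside the archive.
PAGE_PART = re.compile(r"visio/pages/page\d+\.xml")


def extract_vsdx_text(source) -> Dict[str, List[str]]:
    """Map each page part name to the non-empty text of its shapes."""
    texts: Dict[str, List[str]] = {}
    with zipfile.ZipFile(source) as archive:
        page_names = sorted(
            name for name in archive.namelist() if PAGE_PART.fullmatch(name)
        )
        for name in page_names:
            root = ElementTree.fromstring(archive.read(name))
            page_text: List[str] = []
            for element in root.iter():
                # Compare local tag names so the XML namespace does not matter.
                if element.tag.rsplit("}", 1)[-1] == "Text":
                    text = "".join(element.itertext()).strip()
                    if text:
                        page_text.append(text)
            texts[name] = page_text
    return texts
```

`source` can be a file path or a binary file-like object, since `zipfile.ZipFile` accepts both.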

- **Description:** Updated the Chat/Ollama docs notebook with LCEL chain

examples

- **Issue:** #15664 I'm a new contributor 😊

- **Dependencies:** No dependencies

- **Twitter handle:**

Comments:

- How do I truncate the stream output in the notebook when it goes on

and on, even for the most basic prompts?

Edit:

Looking forward to feedback @baskaryan

---------

Co-authored-by: Bagatur <baskaryan@gmail.com>

## Problem

Spent several hours trying to figure out how to pass

`RedisChatMessageHistory` as a `GetSessionHistoryCallable` with a

different Redis hostname. The example kept connecting to

`redis://localhost:6379`, but I wanted to connect to a server not hosted

locally.

## Cause

The documentation assumes the user knows how to implement `BaseChatMessageHistory` and

`GetSessionHistoryCallable`

## Solution

Update documentation to show how to explicitly set the Redis hostname

using a lambda function much like the MongoDB and SQLite examples.
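A minimal sketch of the lambda approach follows. To keep it runnable without a Redis server, it uses a hypothetical `StubHistory` class in place of the real history class; `history_factory`, `REDIS_URL`, and the example hostname are likewise assumptions made for illustration.

```python
from typing import Any, Callable


def history_factory(history_cls: Callable[..., Any], url: str) -> Callable[[str], Any]:
    """Return a session-history callable bound to an explicit Redis URL."""
    return lambda session_id: history_cls(session_id, url=url)


class StubHistory:
    """Hypothetical stand-in so the sketch runs without a Redis server."""

    def __init__(self, session_id: str, url: str = "redis://localhost:6379/0") -> None:
        self.session_id = session_id
        self.url = url


# Hypothetical remote host; replace with your own server.
REDIS_URL = "redis://redis.example.com:6379/0"
get_session_history = history_factory(StubHistory, REDIS_URL)
history = get_session_history("session-42")
```

In real use, `RedisChatMessageHistory` would take the place of `StubHistory`, since its constructor accepts a `session_id` and a `url` keyword. Without the explicit `url`, the default localhost address is used, which is the behavior described in the Problem section.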

After merging [PR

#16304](https://github.com/langchain-ai/langchain/pull/16304), I

realized that our notebook example for integrating TiDB with LangChain

was too basic. To make it more useful and user-friendly, I plan to

create a detailed example. This will show how to use TiDB for saving

message history in LangChain, offering a clearer, more practical guide

for our users.

I also added LANGCHAIN_COMET_TRACING to enable the CometLLM tracing

integration similar to other tracing integrations. This is easier for

end users than importing the callback and passing it

manually.
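Enabling the integration through the environment might look like the sketch below. The value `"true"` is an assumption; this PR description only names the variable, not which values it accepts.

```python
import os

# Set the switch before any chains or LLMs are constructed.
# The value "true" is assumed; the PR text does not specify accepted values.
os.environ["LANGCHAIN_COMET_TRACING"] = "true"
```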

(This is the same content as

https://github.com/langchain-ai/langchain/pull/14650 but rebased and

squashed as something seems to confuse GitHub Actions).

- **Description:** Add a Milvus multitenancy doc as an example for

this [PR](https://github.com/langchain-ai/langchain/pull/15740).

- **Issue:** No

- **Dependencies:** No